[Editor – This post was updated in November 2023 to rename the project from NGINX Kubernetes Gateway to NGINX Gateway Fabric.]

Having worked for the past several years to help you succeed on your Kubernetes journey, F5 NGINX has reached another milestone – we’ve released the first major version of the newest addition to the NGINX family: F5 NGINX Gateway Fabric!

NGINX Gateway Fabric is a controller that implements the Kubernetes Gateway API specification, which evolved from the Kubernetes Ingress API specification. Gateway API is an open source project managed by the Kubernetes Network Special Interest Group (SIG‑NETWORK) community to improve and standardize service networking in Kubernetes. NGINX is an active contributor to this project.

If you are actively working with Kubernetes, you may already be familiar with the Ingress API object and the many available Ingress implementations. Gateway API is the newest way to expose your Kubernetes apps and APIs. To learn more about the other currently available options, see Kubernetes Networking 101.

As organizations have deployed Ingress controllers over the past few years in production‑grade Kubernetes clusters across diverse hybrid, multi‑cloud environments, they have come to recognize the need for Gateway API to help solve various challenges:

- One challenge is that the original Ingress resource is designed with only one user role in mind – the Kubernetes operator or administrator who oversees the entire configuration. That model doesn’t fit many organizations, where multiple teams – application developers, platform operators, security administrators, and others – need to control different aspects of the Ingress configuration as they collaborate on app development and delivery. Gateway API recognizes the new model and makes it easy to delegate control over networking configuration across multiple roles.

- Another challenge is the proliferation of annotations and custom resource definitions (CRDs) in many Ingress implementations, where they unlock the capabilities of different data planes and implement features that aren’t built into the Ingress resource, like header‑based matching, traffic weighting, and multi‑protocol support. Gateway API delivers such capabilities as part of the core API standard.
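
To make the second point concrete, here is a minimal sketch of header‑based matching and traffic weighting expressed directly in a Gateway API HTTPRoute, with no annotations or vendor CRDs. It assumes the `gateway.networking.k8s.io/v1` API version; the Gateway name, hostname, Service names, and header name are hypothetical placeholders chosen for illustration, not values from this post.

```yaml
# Illustrative sketch only: all names below are hypothetical.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: coffee
spec:
  parentRefs:
  - name: gateway              # assumed name of an existing Gateway in the same namespace
  hostnames:
  - "cafe.example.com"
  rules:
  # Header-based matching: requests carrying this header go to v2 only.
  - matches:
    - headers:
      - name: x-canary         # match type defaults to Exact
        value: "true"
    backendRefs:
    - name: coffee-v2
      port: 80
  # Traffic weighting: all other requests are split roughly 90/10.
  - backendRefs:
    - name: coffee-v1
      port: 80
      weight: 90
    - name: coffee-v2
      port: 80
      weight: 10
```

With a plain Ingress resource, both behaviors would typically require implementation‑specific annotations or a custom resource.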

What Is NGINX Gateway Fabric?

As the evolution of an Ingress controller, NGINX Gateway Fabric addresses the challenges of enabling multiple teams to manage Kubernetes infrastructures in modern customer environments. It also helps simplify deployment and administration by delivering many capabilities without the need to implement CRDs. NGINX Gateway Fabric leverages proven NGINX technology as its data plane to deliver best-in-class performance, visibility, and security.

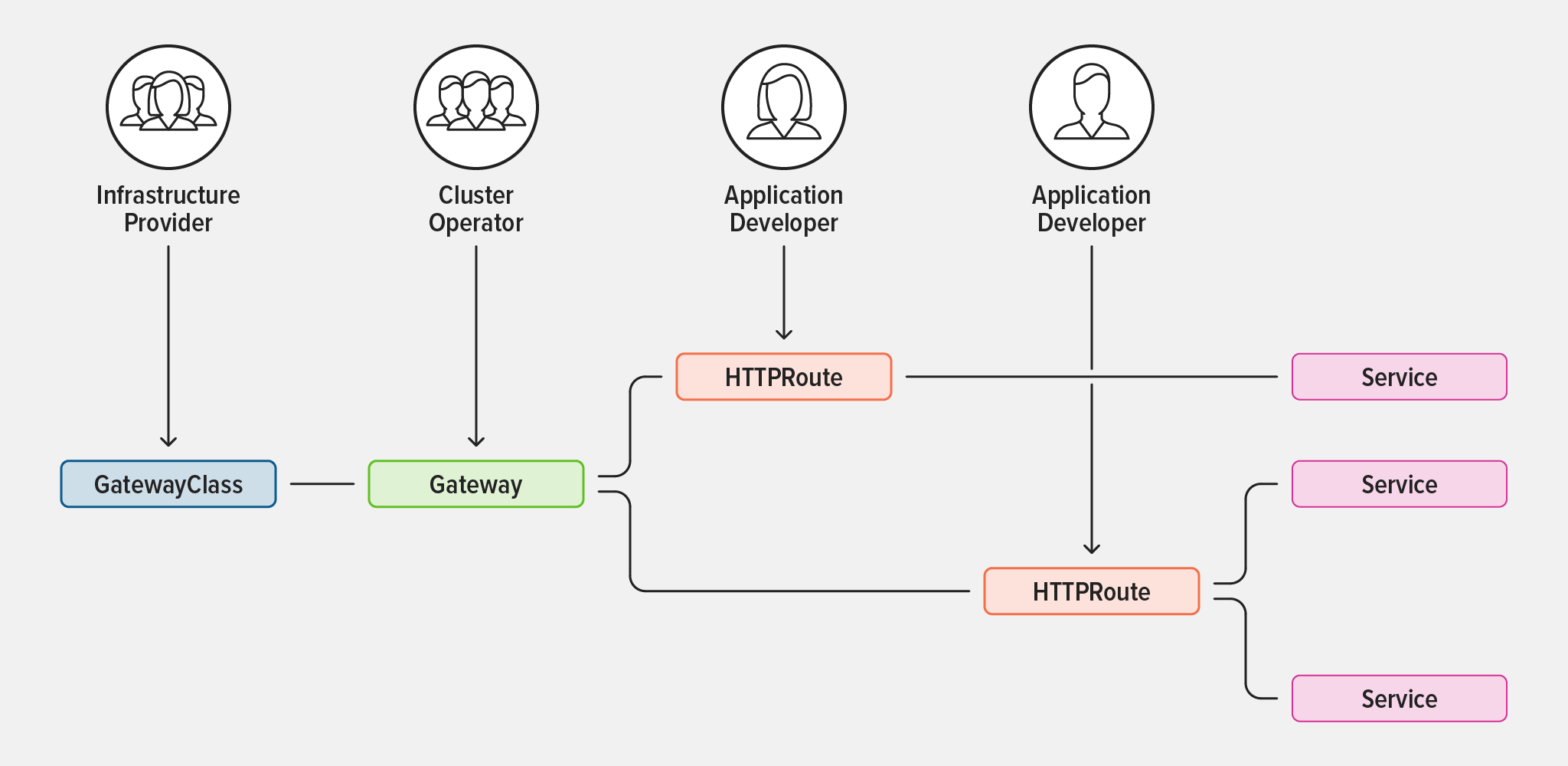

NGINX Gateway Fabric standardizes on three primary Gateway API resources (GatewayClass, Gateway, and Routes) with role‑based access control (RBAC) mapping to the associated roles (infrastructure providers, cluster operators, and application developers).

Clearly defining the scope of responsibility and separation for different roles streamlines and simplifies administration. Specifically, infrastructure providers define GatewayClasses for Kubernetes clusters while cluster operators deploy and configure Gateways within a cluster, including policies. Application developers are then free to attach Routes to Gateways to expose their applications externally.
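
The division of responsibility described above might look roughly like the following three manifests, one per role. This is a sketch under stated assumptions: it uses the `gateway.networking.k8s.io/v1` API version, the `controllerName` value is an assumption that should be checked against the NGINX Gateway Fabric documentation for your release, and every resource name, hostname, and Service is a hypothetical placeholder.

```yaml
# Infrastructure provider: defines the class of Gateways available in the cluster.
apiVersion: gateway.networking.k8s.io/v1
kind: GatewayClass
metadata:
  name: nginx
spec:
  # Assumed controller name; verify against the documentation for your release.
  controllerName: gateway.nginx.org/nginx-gateway-controller
---
# Cluster operator: deploys a Gateway of that class and configures its listeners.
apiVersion: gateway.networking.k8s.io/v1
kind: Gateway
metadata:
  name: gateway
spec:
  gatewayClassName: nginx
  listeners:
  - name: http
    port: 80
    protocol: HTTP
---
# Application developer: attaches a Route to the Gateway to expose an application.
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  name: tea
spec:
  parentRefs:
  - name: gateway
  hostnames:
  - "cafe.example.com"
  rules:
  - matches:
    - path:
        type: PathPrefix
        value: /tea
    backendRefs:
    - name: tea              # hypothetical Service name
      port: 80
```

Because each resource is a separate Kubernetes object, standard RBAC rules can grant each role write access to only the kind it owns.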

In addition, NGINX Gateway Fabric simplifies deployment and management of service networking in Kubernetes environments by standardizing built‑in core functionality for most use cases, thereby reducing the need for CRDs. The implementation of NGINX Gateway Fabric is focused on delivering Ingress controller functionality with Layer 7 (HTTP and HTTPS) routing. Future capabilities, including Layer 4 routing, will be driven by community feedback and use cases. The long‑term roadmap for Gateway API and NGINX Gateway Fabric is to eventually deliver a superset of the features offered by Ingress controllers.

How Is NGINX Gateway Fabric Different from NGINX Ingress Controller?

F5 NGINX Ingress Controller implements the Ingress API specification to deliver core functionality, using custom annotations, CRDs, and NGINX Ingress resources for expanded capabilities. NGINX Gateway Fabric conforms to the Gateway API specification, simplifies implementation, and aligns better with the organizational roles that deal with service networking configurations.

The following table compares the key high‑level features of the standard Ingress API, NGINX Ingress Controller with CRDs, and Gateway API to illustrate their capabilities.

| Feature | Standard Ingress API | NGINX Ingress Controller with CRDs | Gateway API |
|---|---|---|---|
| API specification | Ingress API | Ingress API + CRDs | Gateway API |
| Multi-user management | ❌ | ✅ | ✅ |
| Layer 7 protocols (HTTP/HTTPS, gRPC) | ✅ | ✅ | ✅ |
| Layer 7 load balancing | ✅ | ✅ | Custom policy |
| Request routing | ✅ | ✅ | ✅ |
| Request manipulation | Limited | ✅ | ✅ |
| Response manipulation | Limited | ✅ | ❌ |
| Layer 4 protocols (TLS, TCP, UDP) | ❌ | ✅ | ✅ |
| Layer 4 load balancing | ❌ | ✅ | Custom policy |
| Allow/deny lists | ❌ | ✅ | Custom policy |
| Certificate validation | ❌ | ✅ | Custom policy |
| Authentication (OIDC) | ❌ | ✅ | Custom policy |
| Rate limiting | ❌ | ✅ | Custom policy |

Is NGINX Gateway Fabric Going to Replace NGINX Ingress Controller?

NGINX Gateway Fabric is not replacing NGINX Ingress Controller. Rather, it is an emerging technology based on the first generally available release of the Gateway API specification. NGINX Ingress Controller is a mature, stable technology used in production by many customers. It can be tailored for specific use cases through custom annotations and CRDs. For example, to implement the role‑based approach, NGINX Ingress Controller uses NGINX Ingress resources, including VirtualServer, VirtualServerRoute, TransportServer, and Policy.

We don’t expect NGINX Gateway Fabric to replace NGINX Ingress Controller any time soon – if that transition does happen, it’s likely to be years away. NGINX Ingress Controller will continue to play a critical role in managing north‑south network traffic for a wide variety of environments and use cases, including load balancing, traffic limiting, traffic splitting, and security.

Is NGINX Gateway Fabric an API Gateway?

While it’s reasonable to think something named “Gateway API” is an “API gateway”, this is not the case. As we discuss in How Do I Choose? API Gateway vs. Ingress Controller vs. Service Mesh, “API gateway” describes a set of use cases that can be implemented via different types of proxies – most commonly an ADC or load balancer and reverse proxy, and increasingly an Ingress controller or service mesh. That said, much like NGINX Ingress Controller, NGINX Gateway Fabric can be used for API gateway use cases, including routing requests to specific microservices, implementing traffic policies, and enabling canary and blue‑green deployments. This release is focused on processing HTTP/HTTPS traffic. More protocols and use cases are planned for future releases.

How Do I Get Started?

Ready to try this exciting new technology? Get the release of NGINX Gateway Fabric.

For deployment instructions, see the README.

For detailed information on the Gateway API specifications, refer to the Kubernetes Gateway API documentation.

We encourage you to submit feedback, feature requests, use cases, and any other suggestions so that we can help you solve your challenges and succeed. Please share your feedback at our GitHub repo.