Running the public‑facing website for any organization is no easy task. Considered by the business to be mission critical, a website requires 100% uptime and flawless stability. And yet, the same website is also expected to be updated frequently to meet the needs of the organization. This dichotomy of requirements between stability and constant change is one of the key challenges facing operations teams. But perhaps a bigger challenge is defending against bad actors, hackers, and “script kiddies”.

Homepage defacement is the digital equivalent of graffiti across your storefront or headquarters. It is a constant threat, and when it happens it attracts unwanted attention and causes considerable embarrassment. For those responsible for running the website, it can also lead to a difficult discussion with the CEO, or even become a career‑limiting event.

Security requires a multi‑layered approach. In this blog post, we’ll discuss how NGINX Plus can be used as part of a deep security stack that might also include firewalls, intrusion detection systems, and robust account management. We use the active health check capabilities of NGINX Plus to proactively monitor the website homepage for unexpected changes and to automatically serve the previous version if any such changes are detected.

Note: Active health checks with NGINX Plus are typically used for testing the general availability and end‑to‑end application health of backend systems. In this blog post, we’ll look at a security use case which can be used to supplement existing health checks. For more information on configuring health checks, see the NGINX Plus Admin Guide.

Detecting Changes with ETag

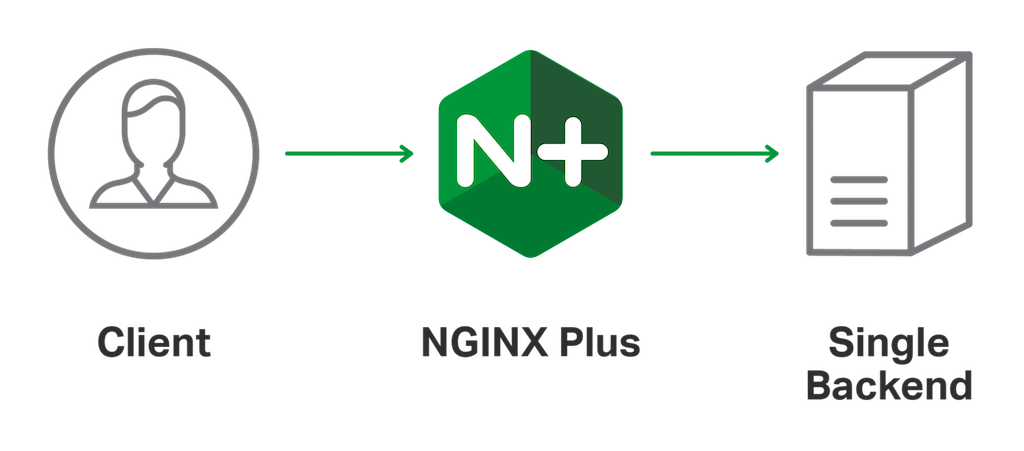

To explore how NGINX Plus can be used to detect homepage defacement, we’ll use a simple test environment that represents the key components of a public‑facing website.

This simple solution uses the HTTP ETag of the homepage resource on the backend web server. The ETag is typically used for detecting changes to cached resources: by definition, its value changes whenever the resource changes. We can obtain the ETag value by examining the response headers for the homepage.

    $ curl -i http://10.0.0.1/
    HTTP/1.1 200 OK
    Date: Fri, 21 Jul 2017 15:05:25 GMT
    Content-Type: text/html
    Content-Length: 612
    ETag: "58ad6e69-264"

We then define a health check that passes, provided that the same ETag is returned by the backend web server.

The match block defines the conditions that a response must meet for the health_check directive to pass. Here we require that the HTTP header ETag exactly matches the value obtained from the backend web server. By default, the health_check directive tests the root URI (/), so we need no further configuration to check the homepage.
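As a minimal sketch of such a configuration (placed in the http context), assuming a single backend at 10.0.0.1 as in the curl example: the names homepage_etag and backend and the zone size are illustrative assumptions, not values taken from the original configuration.

```nginx
# Sketch only: block and upstream names are illustrative assumptions.
match homepage_etag {
    # Passes only while the backend returns exactly this ETag (quotes included)
    header ETag = '"58ad6e69-264"';
}

upstream backend {
    zone backend 64k;          # shared memory zone, needed for active health checks
    server 10.0.0.1:80;
}

server {
    listen 80;

    location / {
        proxy_pass http://backend;
        # NGINX Plus directive; requests the root URI (/) by default
        health_check match=homepage_etag;
    }
}
```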

This configuration effectively prevents any unauthorized changes to the homepage from being served. If the ETag changes, the health check fails, and the backend web server is considered unavailable. Clearly, this level of protection can have undesirable consequences. A site that is offline is arguably just as bad as one that has been defaced. Furthermore, routine and planned changes cause an outage until the ETag value is updated in the NGINX configuration.

To address these issues, we enable content caching within NGINX and configure it so that when the health check fails, we continue to serve the last known good version. This configuration has the additional benefit of improving overall website performance by having NGINX serve cached content directly, instead of continually fetching it from the backend web server.

The proxy_cache_path directive defines the location for the cached content. The keys_zone parameter defines a shared memory zone for the cache index (called hc_cache) and allocates 1 MB. The max_size parameter defines the point at which NGINX Plus starts to remove the least recently requested resources from the cache to make room for new cached items. The inactive parameter defines how long NGINX keeps cached content on disk after it was last requested, regardless of Cache-Control attributes received from the backend web server.

Within the location block, the proxy_cache directive enables caching for this location by specifying the name of the shared memory zone used for storing the cache keys (hc_cache). The proxy_cache_valid directive tells NGINX how long it can serve a resource from cache before it’s considered to have expired. This value is overridden by any Cache-Control attributes received from the backend web server.

To meet the requirement of serving the last known good version of the homepage while the backend web server is considered unhealthy, we use the proxy_cache_use_stale directive to allow expired content to be served even when the backend is unavailable.
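The caching directives described above might be combined as in the following sketch. The hc_cache zone name and its 1 MB size come from the text; the cache path, max_size, inactive, and proxy_cache_valid values, as well as the homepage_etag and backend names, are assumptions chosen for illustration.

```nginx
# Sketch only: path, sizes, and timings are illustrative assumptions.
proxy_cache_path /var/cache/nginx keys_zone=hc_cache:1m max_size=100m inactive=60m;

match homepage_etag {
    header ETag = '"58ad6e69-264"';
}

upstream backend {
    zone backend 64k;
    server 10.0.0.1:80;
}

server {
    listen 80;

    location / {
        proxy_pass http://backend;
        health_check match=homepage_etag;

        proxy_cache hc_cache;      # use the shared memory zone defined above
        proxy_cache_valid 5m;      # applies when the backend sends no caching headers

        # Serve the cached copy when no backend can be selected or reached
        proxy_cache_use_stale error timeout;
    }
}
```

The error parameter is what matters here: it permits a stale cached response to be used when a proxied server cannot be selected, which is the situation once the only backend has failed its health check.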

Scaling Out Change Detection with JavaScript Hashing

[Editor – The following use case is just one of many for the NGINX JavaScript module. For a complete list, see Use Cases for the NGINX JavaScript Module.]

The code in this section is updated as follows to reflect changes to the implementation of NGINX JavaScript since the blog was originally published:

- To use the refactored session (s) object for the Stream module, which was introduced in NGINX JavaScript 0.2.4.
- To use the js_import directive, which replaces the js_include directive in NGINX Plus R23 and later. For more information, see the reference documentation for the NGINX JavaScript module – the Example Configuration section shows the correct syntax for NGINX configuration and JavaScript files.

Detecting changes to the ETag is a simple and effective solution, but it doesn’t scale from reverse proxying to load balancing. In a load balancing environment, a single instance of NGINX Plus might receive different ETag values from each of the backend web servers even though the content is the same.
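One way to see why ETag values can differ for identical content: assuming the backends are NGINX serving static files, the ETag is built from the file's last-modified time and its size, both in hexadecimal, so two servers that received the same file at different times report different values. The sketch below decodes the ETag from the earlier curl output under that assumption; parseNginxEtag is a hypothetical helper, and other web servers use other schemes.

```javascript
// Hypothetical helper: split an NGINX-style static-file ETag into its parts.
// Assumes the "<mtime in hex>-<size in hex>" layout; returns null otherwise.
function parseNginxEtag(etag) {
    const m = /^(?:W\/)?"([0-9a-f]+)-([0-9a-f]+)"$/i.exec(etag);
    if (!m) {
        return null;
    }
    return {
        modified: new Date(parseInt(m[1], 16) * 1000).toISOString(),
        size: parseInt(m[2], 16)
    };
}

// ETag taken from the curl output shown earlier
const first = parseNginxEtag('"58ad6e69-264"');
console.log(first.size);   // 612, the same as the Content-Length header

// Same size but a modification time one second later (made-up value):
// the content could be identical, yet the ETag no longer matches.
const second = parseNginxEtag('"58ad6e6a-264"');
console.log(first.size === second.size, first.modified === second.modified);
```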

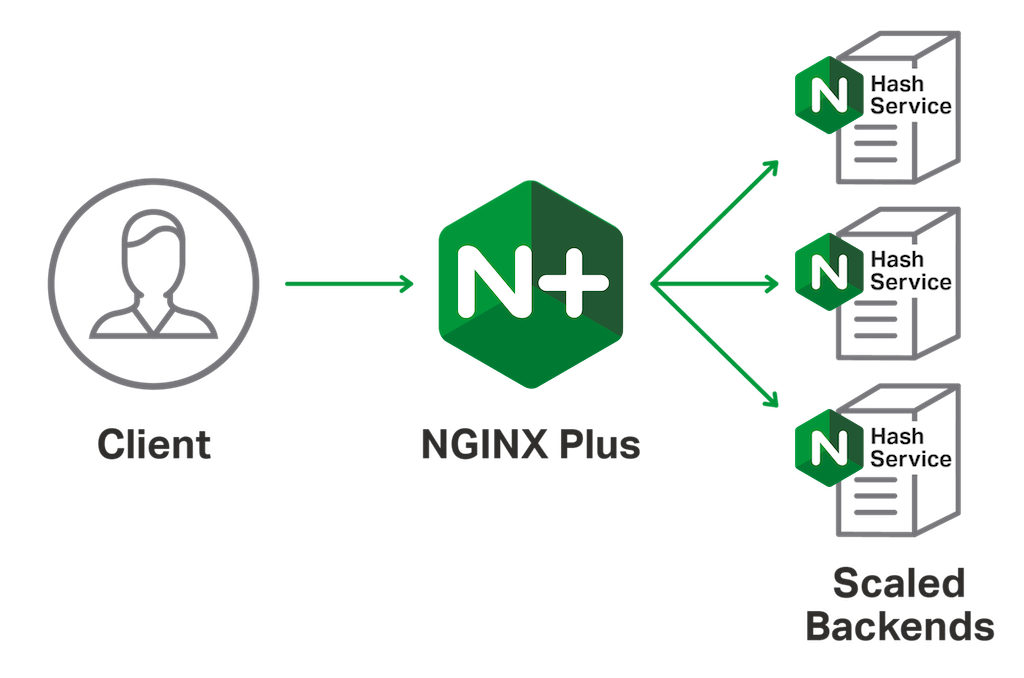

Instead of relying on the ETag, a more robust way of detecting unexpected changes is by using a cryptographic hash of the actual content served from each backend web server. To do this, we provide an adjacent web service to each backend web server that provides the SHA‑256 hash value for any given URL. This adjacent web service, our hash service, will be used only by the NGINX Plus health check to obtain a hash value for the current homepage content on each backend web server.

The backend web server doesn’t need to run NGINX or NGINX Plus in order to provide the hash service. NGINX can be installed alongside the existing web server software for this purpose, and requires very little configuration.

The hash service is implemented as a simple TCP proxy within the Stream context and uses the NGINX JavaScript module to perform the hash operation on the HTTP response received from the local web server on port 80. For more information, see Harnessing the Power and Convenience of JavaScript for Each Request with the NGINX JavaScript Module. The code for the hash service appears below.
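As a rough illustration of the hashing step only, the following plain JavaScript sketch takes a raw HTTP response, hashes the body, and builds the minimal reply. It is not the Stream-context service itself: extractBody and buildHashReply are hypothetical helpers, and the sketch assumes the whole response is available as a single string. It runs under Node.js; the NGINX JavaScript crypto module offers a similar createHash interface.

```javascript
const crypto = require('crypto');

// Hypothetical helper: return the body of a raw HTTP/1.x response,
// that is, everything after the first blank line.
function extractBody(rawResponse) {
    const sep = rawResponse.indexOf('\r\n\r\n');
    return sep === -1 ? '' : rawResponse.slice(sep + 4);
}

// Hypothetical helper: build a minimal reply that carries only the
// SHA-256 hash of the body, as a single Hash header.
function buildHashReply(rawResponse) {
    const digest = crypto.createHash('sha256')
        .update(extractBody(rawResponse))
        .digest('hex');
    return 'HTTP/1.1 204 No Content\r\nHash: ' + digest + '\r\n\r\n';
}

// Made-up sample response standing in for the local web server's output
const sample = 'HTTP/1.1 200 OK\r\nContent-Type: text/html\r\n\r\n<h1>Hello</h1>\n';
console.log(buildHashReply(sample));
```

Hashing only the body, rather than the full response, keeps the result independent of headers such as Date that change on every request.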

With this configuration in place, we obtain the SHA‑256 hash of the homepage by querying the hash service or by sending the homepage output to an external hashing library. Here we use OpenSSL to check if the results of the hash service are correct.

    $ curl -i http://127.0.0.1:4256/
    HTTP/1.1 204 No Content
    Hash: 38ffd4972ae513a0c79a8be4573403edcd709f0f572105362b08ff50cf6de521

    $ curl -s http://127.0.0.1/ | openssl dgst -sha256
    (stdin)= 38ffd4972ae513a0c79a8be4573403edcd709f0f572105362b08ff50cf6de521

The hash service returns a minimal HTTP response, with only the hash value as a single HTTP header. The NGINX Plus configuration can now be modified to use the hash service for its health check.

The match block now defines a healthy response as one that has a status code of 204 and a Hash header whose value matches the one obtained from the hash service. For NGINX Plus to use the hash service on each backend web server, the health_check directive specifies the port number used by the hash service, which overrides the port specified in each server directive in the upstream group.
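A sketch of the modified health check might look as follows. The port 4256 and the hash value come from the curl output above; the homepage_hash and backend names, the zone size, and the second and third server addresses are assumptions made for illustration, and the caching directives are omitted for brevity.

```nginx
# Sketch only: names and the additional server addresses are illustrative assumptions.
match homepage_hash {
    status 204;
    header Hash = 38ffd4972ae513a0c79a8be4573403edcd709f0f572105362b08ff50cf6de521;
}

upstream backend {
    zone backend 64k;
    server 10.0.0.1:80;
    server 10.0.0.2:80;
    server 10.0.0.3:80;
}

server {
    listen 80;

    location / {
        proxy_pass http://backend;
        # Health checks go to the hash service port on each backend,
        # while client traffic still uses port 80
        health_check port=4256 match=homepage_hash;
    }
}
```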

We can expect the homepage content to be identical for each backend web server, so NGINX Plus removes a backend server from the upstream group if the homepage content changes. However, NGINX Plus continues to serve the last known good content from the cache so that users are unaffected. The NGINX Plus dashboard can then be used to highlight any backend servers that have failed health checks – another way in which NGINX Plus helps you deliver your site with performance, security, reliability, and scale.