Application delivery is changing fast. TechTarget recently published a great article on the topic, ‘Load balancers’ to ‘ADP’: The latest on app delivery.

In this piece, John Burke of Nemertes Research highlights how the technology for making your applications fast and always available has evolved:

- First, load balancers – Purpose‑built hardware devices with code embedded in firmware

- Then, application delivery controllers (ADCs) – Purpose‑built hardware devices with more capabilities, such as SSL offload, proxying, and reverse proxying

- Today, application delivery platforms (ADPs) – Flexible, programmable, software‑based offerings which provide a wide range of services

In the TechTarget piece, Burke spells out four key characteristics an ADP needs to meet today’s requirements for application delivery: flexible form factor, scalability, manageability, and extensibility.

NGINX has seen this same evolution, and we have specifically architected our software to exceed those requirements. Below is a short summary of how NGINX functions as an ADP for the modern Web.

Flexible Form Factor

In early load balancers, the software was inextricably married to the hardware. A typical argument given by hardware ADC vendors in favor of this approach is that specialized hardware components, such as a cryptographic module, perform better than a software‑only solution. This might have been true when ADCs first became widely available, but processor speeds have improved dramatically since then. A modern ADP is completely independent of hardware, without any noticeable loss of performance or capability.

When NGINX is running as a software application delivery solution on off‑the‑shelf hardware, it performs at least as well as a hardware ADC for a fraction of the cost. In fact, because it’s easier to add features, a software ADC is often well ahead on capability.

According to Burke, a further advantage of software is that the ADP can “follow a workload if the workload gets moved”. NGINX and NGINX Plus offer the ultimate flexibility in deployment: the same software runs on commodity hardware and in cloud deployments – instead of (or in conjunction with) cloud‑native services such as AWS Elastic Load Balancer. With NGINX handling load balancing across multiple platforms, applications can be moved between clouds, from physical servers to the cloud, and so on, without changing the load‑balancing approach. With NGINX, your team can “learn once, use everywhere” instead of having to keep track of differences between the on‑premises and cloud versions of proprietary ADC software.

Scalability

Today’s ADPs have to deal with huge amounts of traffic from different kinds of clients, along with growing “east‑west” traffic among backend services. (Traffic amongst servers is termed east-west, while client-server traffic is known as north-south, according to quora.com.) To keep up, you might need to scale your ADP vertically (buy a bigger box), horizontally (buy more boxes), or, more typically, both.

NGINX natively supports multiprocessor environments with a shared memory‑based architecture. NGINX can run a “worker process” per core to fully utilize even the most vertically scaled hardware. (For details, see Owen Garrett’s blog post on the design of NGINX.) Core features such as session persistence, caching, and rate limiting rely on shared memory, so they behave consistently across worker processes in a multiprocessor architecture.

The first step in enabling scalability is often the deployment of NGINX as a reverse proxy server. Putting NGINX up front buffers application servers from direct exposure to web traffic and allows more servers to be deployed as needed. Common responses can be cached by NGINX to reduce load on application servers.
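To make the caching idea concrete, here is a minimal Python sketch of a proxy layer that answers repeated requests from a cache instead of calling the application server each time. It is a conceptual illustration only, not how NGINX implements caching; the `CachingProxy` class, the `backend` callable, and the `ttl` parameter are hypothetical names chosen for this example.

```python
import time
from typing import Callable, Dict, Tuple


class CachingProxy:
    """Toy reverse proxy: serves cached responses until they expire."""

    def __init__(self, backend: Callable[[str], str], ttl: float,
                 clock: Callable[[], float] = time.monotonic):
        self.backend = backend      # stands in for an application server
        self.ttl = ttl              # seconds a cached response stays fresh
        self.clock = clock          # injectable so the sketch is testable
        self._cache: Dict[str, Tuple[float, str]] = {}

    def get(self, path: str) -> str:
        now = self.clock()
        entry = self._cache.get(path)
        if entry is not None and entry[0] > now:
            return entry[1]         # cache hit: backend is not contacted
        body = self.backend(path)   # cache miss or expired entry
        self._cache[path] = (now + self.ttl, body)
        return body


# Hypothetical backend that counts how often it is actually called.
backend_calls = []

def app_server(path: str) -> str:
    backend_calls.append(path)
    return f"response for {path}"

fake_time = [0.0]
proxy = CachingProxy(app_server, ttl=60.0, clock=lambda: fake_time[0])
first = proxy.get("/index.html")
second = proxy.get("/index.html")   # served from cache
fake_time[0] = 61.0
third = proxy.get("/index.html")    # entry expired, backend called again
```

In this sketch the backend is contacted twice for three requests; the longer the freshness window relative to request frequency, the more load is kept off the application servers.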

With NGINX in place, it’s easy to scale both vertically – putting bigger boxes or instances in place – and horizontally, sharing loads across multiple physical devices, virtual instances, or cloud instances. This is why NGINX is used by so many of the world’s busiest sites and web applications.

Manageability

The old approach to manageability was for each vendor to offer specialized software that worked only with its own load balancer, with a separate management view for each physical server behind it. The new approach is to use open source tools flexibly across physical devices, virtual instances, and cloud instances.

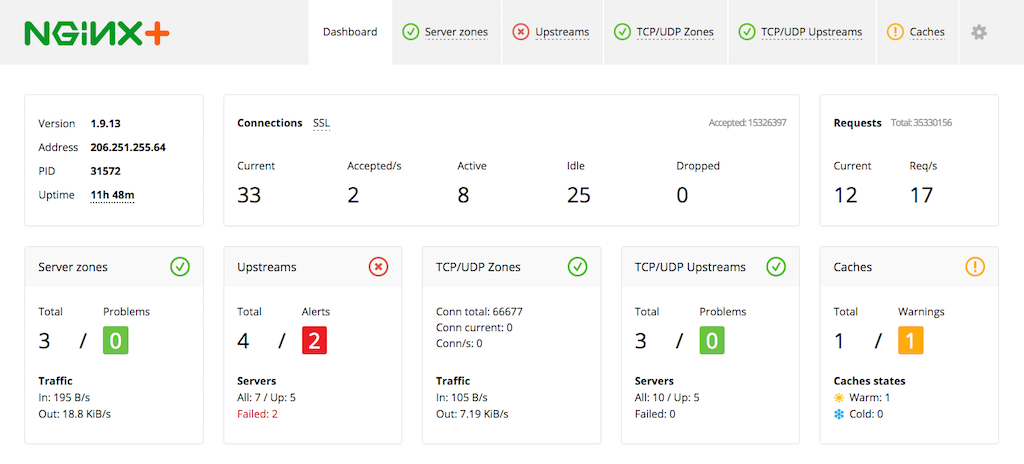

NGINX Plus includes advanced monitoring and management tools, offering what Burke calls a “single pane of glass” interface to the NGINX software and all upstream devices.

NGINX also integrates readily with Ansible, Chef, and Puppet for configuration management. These tools can be used to fully manage configuration of NGINX instances in both production and staging environments. By using tools such as these, you get a common interface for deploying and configuring both NGINX and other pieces of your application infrastructure.

NGINX Plus also provides live activity monitoring data in JSON format, making it easy to export critical stats, such as requests/sec and bandwidth usage, to your tool of choice. There are prebuilt plug‑ins for AppDynamics, Datadog, and New Relic. Just as with configuration, the use of NGINX Plus gives you a common monitoring interface for NGINX along with other pieces of your application infrastructure.
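As a rough illustration of how JSON monitoring data can feed an external tool, the Python sketch below derives a requests/sec figure from two status snapshots. The payloads and field names (`timestamp` in milliseconds, `requests.total`) are assumptions made for this example; check the NGINX Plus documentation for the actual schema before relying on them.

```python
import json


def requests_per_second(earlier: str, later: str) -> float:
    """Average request rate between two JSON status snapshots."""
    a = json.loads(earlier)
    b = json.loads(later)
    elapsed_ms = b["timestamp"] - a["timestamp"]
    if elapsed_ms <= 0:
        raise ValueError("snapshots must be in chronological order")
    # The counter is cumulative, so the difference is the number of
    # requests handled during the interval.
    handled = b["requests"]["total"] - a["requests"]["total"]
    return handled / (elapsed_ms / 1000.0)


# Hypothetical snapshots taken ten seconds apart.
snapshot_1 = '{"timestamp": 1000000, "requests": {"total": 5000}}'
snapshot_2 = '{"timestamp": 1010000, "requests": {"total": 6500}}'
rate = requests_per_second(snapshot_1, snapshot_2)
print(rate)
```

A collector along these lines could poll the monitoring endpoint on a schedule and forward the derived rate to whichever dashboard or alerting tool you already use.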

NGINX also works with Docker, Kubernetes, and other high‑level containerization and orchestration platforms. NGINX is one of the most popular apps on Docker Hub.

Extensibility

An ADP has to work with a wide range of clients and provide a wide range of services, contributing strongly to the mix of servers and services that together host and deliver the web application.

NGINX Plus, in particular, provides all the functionality of a complete ADP – not only load balancing, but caching for static files, SSL/TLS and HTTP/2 termination, IP geolocation of clients, security improvements, manageability, and more.

NGINX’s IP Geolocation feature is a real plus in an ADP. NGINX can route clients from different countries to the language‑appropriate site for them. Users from France will automatically be directed to the French language site, US users to the US English site, and so on. You can also leverage this feature to block users from a particular country or location, for example if export or copyright restrictions prohibit making resources accessible to users outside of your own country.
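The routing and blocking decisions described above can be sketched in a few lines of Python. This is a conceptual model, not NGINX configuration: it assumes the client's country code has already been resolved from its IP address, and the site URLs, the `BLOCKED_COUNTRIES` set, and the placeholder code `XX` are all hypothetical.

```python
from typing import Optional

# Hypothetical mapping from ISO country code to a localized site.
SITE_BY_COUNTRY = {
    "FR": "https://fr.example.com",
    "US": "https://us.example.com",
}
DEFAULT_SITE = "https://www.example.com"

# Placeholder code for a country that must not be served.
BLOCKED_COUNTRIES = {"XX"}


def route(country_code: str) -> Optional[str]:
    """Return the site for a client's country, or None if blocked."""
    code = country_code.upper()
    if code in BLOCKED_COUNTRIES:
        return None                     # caller would reject the request
    return SITE_BY_COUNTRY.get(code, DEFAULT_SITE)


french_site = route("fr")
fallback_site = route("DE")
blocked = route("XX")
```

Keeping the mapping in a single table makes it straightforward to add a new localized site or restriction without touching the routing logic.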

NGINX Plus provides HTTP‑based API gateway services, including the ability to rate limit API calls to match the capacity of servers that provide them, and caching support for static files.
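To show what rate limiting API calls to match server capacity can look like, here is a minimal token-bucket sketch in Python. It illustrates the general technique rather than NGINX's own implementation; the `TokenBucket` class and its `rate` and `burst` parameters are hypothetical names for this example.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow up to `rate` calls per second, with bursts up to `burst`."""

    def __init__(self, rate: float, burst: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate                # tokens added per second
        self.capacity = float(burst)    # maximum tokens held
        self.tokens = float(burst)      # start with a full bucket
        self.clock = clock              # injectable for testing
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, capped at capacity.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0          # spend one token for this call
            return True
        return False                    # over the limit: reject or delay


# Usage with a fake clock: 2 calls/sec, burst of 2.
fake_time = [0.0]
bucket = TokenBucket(rate=2.0, burst=2, clock=lambda: fake_time[0])
results = [bucket.allow(), bucket.allow(), bucket.allow()]
fake_time[0] = 0.5                      # half a second later: one token back
results.append(bucket.allow())
```

Setting `rate` to roughly what the backend API servers can sustain keeps a burst of client calls from overwhelming them.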

Market Changes

The thesis of Burke’s article is that IT professionals are moving from hardware‑based load balancers with a narrow range of functionality to software‑based ADPs with a wide range of capabilities. A recent Gartner report, the Application Delivery Controller Magic Quadrant, shows how the move from hardware to software is affecting the load-balancing market. For device makers like F5 and Citrix, growth is stalling; the excitement is in the app-centric (that is, DevOps‑oriented) world of small, fast‑moving companies. In recognition of this, NGINX was named as one of Gartner’s “Cool Vendors” for Web Scale Platforms.

DevOps buyers, and those moving to microservices, favor software‑based approaches, with load balancing available as part of a “Swiss Army knife” of capabilities and services. More fundamentally, a software‑based approach is critical for the move to both public and private cloud infrastructure. Software‑defined application delivery gives you room to adapt to market changes in a way that traditional hardware‑centric approaches do not.

Conclusion

Burke’s article helps organize what could seem like a profusion of buzzwords into a logical, evolutionary progression – from load balancers, to application delivery controllers (ADCs), and now to application delivery platforms (ADPs). The key attributes to look for in an ADP are flexible form factor, scalability, manageability, and extensibility.

NGINX meets and surpasses the article’s requirements for an advanced ADP, with caching and the ability to terminate SSL/TLS and HTTP/2 connections. These and other features are described in our comprehensive blog post, 10 Tips for 10x Application Performance. It discusses the core concepts and how‑tos for making web apps run faster, including load balancing, caching, and monitoring.

NGINX Plus offers a wide range of load balancing features and monitoring capabilities, plus support and service – highly valued in complex, multivendor IT environments.

The software can be used on premises, where NGINX is the #1 server at the world’s 1 million busiest websites, and in the cloud – NGINX is also the leading web server for Amazon Web Services. Once NGINX is part of an application delivery solution, it can be reused in on‑premises servers, in virtual machines, in private clouds, and in public clouds.

Burke’s analysis captures the broad sweep of development, from the first, hardware‑based load balancers to today’s highly capable, flexible, software‑ and service‑based ADPs. We recommend it as a framework for evaluating your own needs as you continue to expand and deepen your application portfolio.