This post is adapted from a presentation at nginx.conf 2016 by Nathan Moore of StackPath. This is the third of three parts of the adaptation. In Part 1, Nathan described SPDY and HTTP/2, proxying under HTTP/2, HTTP/2’s key features and requirements, and NPN and ALPN. In Part 2, he talked about implementing HTTP/2 in NGINX, running benchmarks, and more. This part includes the conclusions, a summary of criticisms of HTTP/2, closing thoughts, and a Q&A.

You can view the complete presentation on YouTube.

Table of Contents

| Time | Section |
| --- | --- |
| Part 1 | |
| Part 2 | |
| 22:15 | Conclusions |
| 24:05 | Second-System Effect |
| 24:43 | Criticism of HTTP/2 |
| 25:32 | W. Edwards Deming |
| 25:40 | Theory of Constraints |
| 26:40 | W. Edwards Deming, Revisited |
| 26:57 | Theory of Constraints, Revisited |
| 28:07 | Buzzword Compliance |
| 28:21 | Questions |

22:15 Conclusions

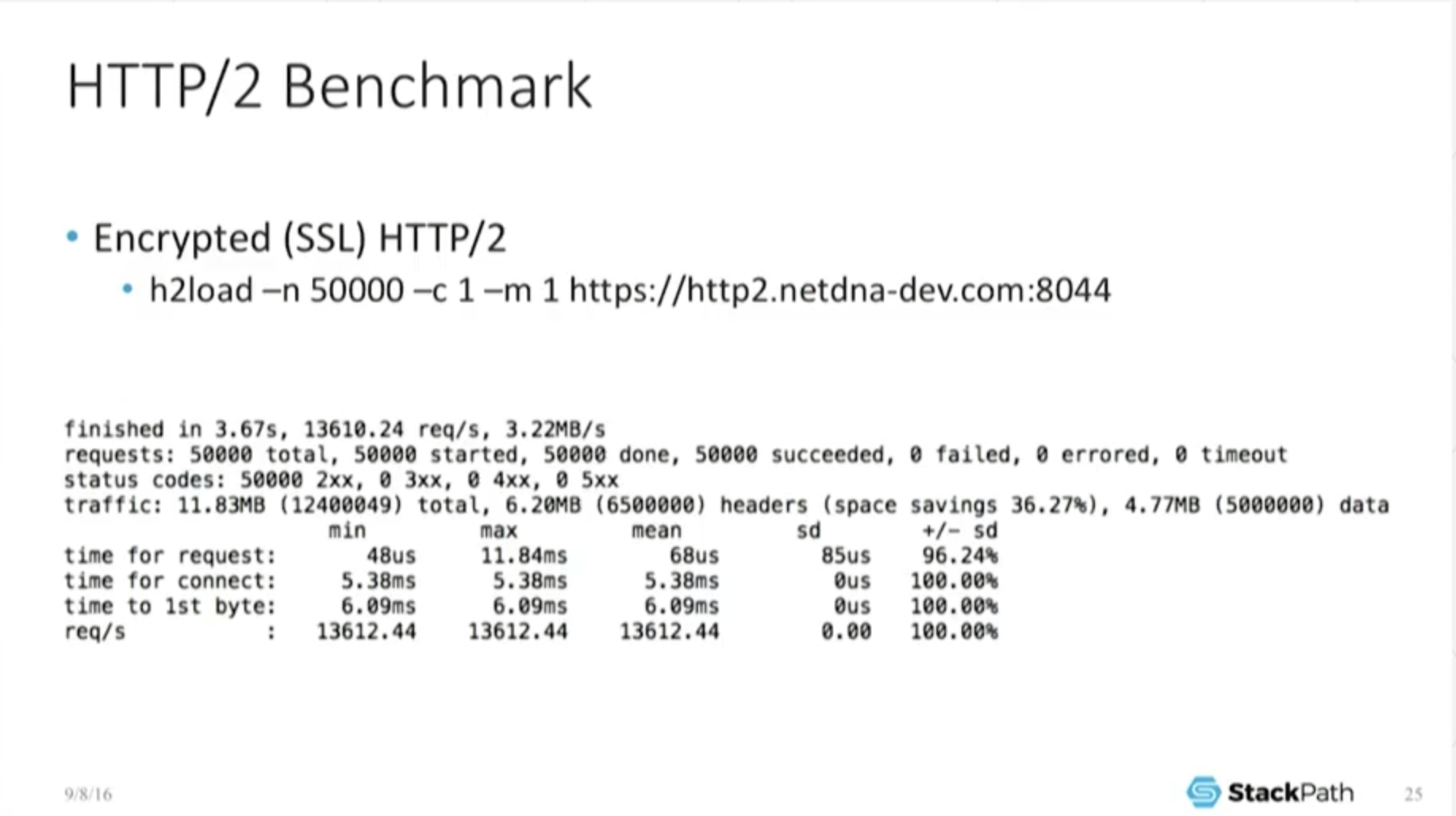

One thing I really want to emphasize here is that engineering is always done with numbers. The moment someone comes out with “I think this, I think that,” well, that’s great, but that’s opinion.

You need some sort of numbers. You really need to take the time to understand what your app is doing. You need to take the time to benchmark, make sure this actually works for you.

You really, really have to optimize. You really have to make sure that you’re playing to [HTTP/2’s] strengths. If you [need a feature] like header compression, it’s there, but you’d better make sure that’s really your use case and that it actually works for you.

If you look a little more globally in terms of future proofing, H2 is not going away. Now it’s been ratified as a standard [RFC 7540]. Reference implementations are out [in the field]. It’s now been pulled into NGINX and other mainline code bases, including Apache. It’s very common. Support for this is basically uniform. It sits pretty much everywhere, so the protocol itself isn’t going to disappear. You need to make sure that it’s working correctly for you.

The last thing I wanted to mention in terms of future proofing is: did you notice that it reduced the number of connections? If you are an app developer, you probably don’t care so much about this because this is really your SRE’s responsibility, making sure there are enough compute resources available for it. That’s not really your problem.

But if you’re in the SRE [site reliability engineering] role . . . [acting] as a cache, [you] adore this. [You can] run a server with 50,000 simultaneous connections, 100,000 simultaneous connections, and not break a sweat.

If I have a protocol which reduces [100,000] down to [somewhere between] 25,000 and 50,000, that’s a major benefit to me. Maybe not to you, but it certainly benefits me. A lot of pros and cons are associated with it. You have to be very, very careful in evaluating it; you cannot just push the button.

[Editor – Although Nathan does not talk about it at this point, the slide mentions HTTP/2 Push, also called Server Push. It was introduced in NGINX Open Source 1.13.9 and NGINX Plus Release 15 in 2018, well over a year after Nathan made this presentation.]

24:05 Second-System Effect

I still have a little bit of time here, and I want to go into some of the criticisms [of HTTP/2]. In the academic world, also, there have been some criticisms of the protocol itself. There really is a thing called the second‑system effect, and this has been noted by a lot of other guys. I’m just stealing from Wikipedia here: [it’s] “the tendency of small, elegant, and successful systems to have elephantine, feature‑laden monstrosities as their successors due to inflated expectations”.

This is unfortunately very common. IPv4 versus IPv6 is left as an exercise for the reader. This is absolutely something that we’ve seen in the past.

24:43 Criticism of HTTP/2

There is a gentleman by the name of Poul‑Henning Kamp. He is one of the developers behind Varnish (a competitor to NGINX), and he wrote this piece, which got published by the ACM in ACM Queue.

This [publication] is very high up in the academic world, about as high up as you can go publicly. . . . He has this wonderful, wonderful quote which I’ve just ripped off shamelessly: “HTTP/2 – The IETF is Phoning It In: Bad protocol, bad politics.” The part I love is the ending: “Uh-oh, Second Systems Syndrome.” . . . “If that sounds underwhelming, it’s because it is.”

There are very legitimate complaints about what’s going on with this. Again, I have to go back to my earlier comment that you have to benchmark. You have to know it’s right for you. You have to know that the use case works for you.

25:32 W. Edwards Deming

I’m not that cynical. And the reason why I’m not that cynical about it is. . . an older concept I’m going to rip off shamelessly from Deming, which is his definition of a system.

And his definition of a system is a “network of. . . interdependent components that work together to try to accomplish the aim of the system”. So Linux, NGINX, your network card – those are your network of interdependent components and they all work together to try to accomplish the aim of the system, which is to serve out a web page, to serve out an HTTP object. That sure looks like a system to me.

25:40 Theory of Constraints

And if I have a system, then I can apply the Theory of Constraints.

A guy named Dr. [Eliyahu] Goldratt came up with this and wrote a book about it [The Goal] in the 1980s. . . . It’s a methodology for identifying the bottleneck [in a system]. Theory of Constraints says that given a system, if you’re interested in performance, you’re always looking for the bottleneck; you’re looking for the slowest part of the system.

Then you go alleviate the bottleneck, you rerun your benchmarks, find out where the new bottleneck is. Lather, rinse, repeat. And you continue this iterative cycle until you get something that meets your minimum specified criteria.

26:40 W. Edwards Deming, Revisited

[That iterative cycle is] also known as the Shewhart cycle: Plan‑Do‑Study‑Act. You Plan: I think the bottleneck is here. You Do: you alleviate that. You Study: did it actually work? And Act: If it did? Great. Move on to the next one. If it didn’t, then I have to review and go back and replan how I’m going to affect this particular problem.
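[Editor – To make the iterative cycle concrete, here is a minimal Python sketch of the Theory of Constraints loop run as Plan‑Do‑Study‑Act. It models the system as a serial pipeline whose throughput is capped by its slowest stage, which is a simplification. The stage names, timings, and the `alleviate` function are hypothetical, not taken from Nathan’s benchmarks.]

```python
from typing import Callable, Dict

# Hypothetical model: stage name -> service time per request, in milliseconds.
Stages = Dict[str, float]


def throughput(stages: Stages) -> float:
    """Requests per second of a serial pipeline, which its slowest stage caps."""
    return 1000.0 / max(stages.values())


def find_bottleneck(stages: Stages) -> str:
    """The constraint is the stage with the longest service time."""
    return max(stages, key=lambda name: stages[name])


def improve_until(stages: Stages, target_rps: float,
                  alleviate: Callable[[str, float], float],
                  max_rounds: int = 20) -> Stages:
    """Plan-Do-Study-Act loop: fix the bottleneck, re-measure, repeat."""
    current = dict(stages)
    for _ in range(max_rounds):
        if throughput(current) >= target_rps:
            break                                   # meets the minimum criteria
        name = find_bottleneck(current)             # Plan: "the bottleneck is here"
        trial = dict(current)
        trial[name] = alleviate(name, trial[name])  # Do: alleviate it
        # Study: did the stage get faster without hurting overall throughput?
        improved = (trial[name] < current[name]
                    and throughput(trial) >= throughput(current))
        if not improved:
            break                                   # Act: no gain, go back and replan
        current = trial                             # Act: keep it, find the next one
    return current


if __name__ == "__main__":
    # Made-up stage timings; "alleviate" here simply halves a stage's time.
    start = {"tls": 4.0, "disk": 2.5, "network": 1.0}
    result = improve_until(start, target_rps=500.0, alleviate=lambda _n, t: t / 2)
    print(result, throughput(result))
```

[With these made‑up numbers, the loop first halves `tls`, then `disk`, and stops once the pipeline reaches the 500 requests‑per‑second target. In practice the Study step is a rerun of your real benchmark, not a formula.]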

26:57 Theory of Constraints, Revisited

Is H2 the bottleneck in your system? And that’s where you’re stuck – you have to do the benchmarking work. You can’t just take my word for it, you can’t take anybody else’s word for it, you have to make sure that you are playing to its strengths, that it works for you.

You have to put the leg work in on this one, you cannot just phone it in. To go back to the very first story that I started out with: as I said, I was supposed to give this talk with a couple of other gentlemen who unfortunately bailed on me.

What they were really interested in [exploring], when we sat down to talk about it, was [how] H2 was probably a feature that a lot of customers were asking for, but in our own benchmarking and testing, we didn’t really see a major benefit to doing it, for most people.

On the other hand, it didn’t seem to cost us an awful lot in order to offer it, which is one of the reasons why we went ahead and did it. We were responding to the customers, but we were kind of indifferent. It didn’t really do much of anything for us.

What do you call it when you have the situation [where] sales and marketing want you. . . to add a feature, and it doesn’t really do anything but it doesn’t really hurt? What do you call that?

28:07 Buzzword Compliance

Well, that’s kind of the definition of buzzword compliance, which was the original topic of the talk.

28:21 Questions

[The first question is asked at 28:45.]

Q: So is StackPath implementing Server Push?

A: We’re talking about it. For the initial product launch, the answer is no.

Q: Do you think in a reasonable time you could have come up with a benchmark that would have actually demonstrated the performance improvement of HTTP/2, let’s say, by interleaving?

A: . . . Interleaving and the ability to do multiple requests over one connection. . . it’s totally possible to come up with a benchmark that does that.

I didn’t, for the obvious reason: I was trying to show the difference [between HTTP/2, encrypted HTTP/1, and unencrypted HTTP/1] there. But you’re absolutely right, there are such benchmarks. There are use cases where H2 outperforms the older protocols, especially in encrypted scenarios.

Q: In your benchmarks, was it a single SSL connection and you were just putting multiple requests on it, or was it multiple sessions as well?

A: Just one connection. This particular benchmark is a very, very stupid one because I’m asking for the same object over and over.

Even if I tried to interleave these, it didn’t matter because it was the exact same object. It looked the same whether I requested them in the order 1234 or 1324.

[There is a pause before the next question at 30:38.]

Q: So for the batching of multiple requests into one connection, it feels like it would have a significant improvement on the performance for the SSL side.

Is that what you would expect in an actual deployment, in a CDN deployment, or would you still expect that the number of connections required would still, for other reasons, have to go large?

A: My expectation is that the number of connections is going to decrease and that therefore I should have more CPU and other resources available to handle the encryption overhead. But a lot of this comes down to systems design because the moment the connection bottleneck is alleviated, I can start to afford to spend CPU resources on other things.

Ideally, I can offload even encryption from the CPU. That will result in lower latencies, which is ultimately what I’m very, very deeply interested in. So I want to get to the point where I can run incredibly high‑clock‑speed CPUs with a minimal number of cores. I can do that if I can offload the SSL operations to a secondary card.

. . . [With] TCP, the packets come in, it’s basically a queue, and the faster I can pull things off of that queue – the faster I can satisfy the request – the lower my total object latency time is. And unfortunately, that correlates very, very tightly with CPU speed, not so much with the number of CPUs. . . . CDNs largely compete on latencies. . . . That’s our value proposition. Anything I can do to [improve performance by] even a millisecond is a major, major win.
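[Editor – Nathan’s point that latency tracks CPU speed more than core count can be illustrated with textbook queueing formulas. This Python sketch compares one fast server (M/M/1) against four servers each a quarter as fast (M/M/4, via the Erlang C formula) at the same total capacity. It assumes Poisson arrivals and exponentially distributed service times, and the 800 requests‑per‑second load is hypothetical; real CDN traffic will not match these assumptions exactly.]

```python
import math


def mm1_time_in_system(arrival_rate: float, service_rate: float) -> float:
    """Mean time a request spends queued plus in service, one server (M/M/1)."""
    if arrival_rate >= service_rate:
        raise ValueError("queue is unstable: arrivals outpace service")
    return 1.0 / (service_rate - arrival_rate)


def mmc_time_in_system(arrival_rate: float, service_rate: float, servers: int) -> float:
    """Same quantity with `servers` identical servers sharing one queue (M/M/c)."""
    offered = arrival_rate / service_rate          # offered load, in Erlangs
    utilization = offered / servers
    if utilization >= 1.0:
        raise ValueError("queue is unstable: arrivals outpace service")
    # Erlang C formula: probability that an arriving request has to wait.
    idle_terms = sum(offered ** k / math.factorial(k) for k in range(servers))
    busy_term = offered ** servers / math.factorial(servers) / (1.0 - utilization)
    p_wait = busy_term / (idle_terms + busy_term)
    mean_wait = p_wait / (servers * service_rate - arrival_rate)
    return mean_wait + 1.0 / service_rate          # waiting time plus service time


if __name__ == "__main__":
    # Hypothetical load: 800 req/s against 1,000 req/s of total capacity either way.
    fast_core = mm1_time_in_system(800.0, 1000.0)
    four_slow_cores = mmc_time_in_system(800.0, 250.0, 4)
    print(f"1 fast core:  {fast_core * 1000:.2f} ms")
    print(f"4 slow cores: {four_slow_cores * 1000:.2f} ms")
```

[Under these assumptions the single fast core averages about 5.0 ms per request and the four slower cores about 7.0 ms, largely because each request is served at the full speed of the fast core but at only a quarter of the total capacity on a slow one.]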

Q: Yesterday it was mentioned that one of the criticisms [of HTTP/2 is that] you can still have head‑of‑line blocking in HTTP/2, and I’m wondering if you can explain. . . what he was mentioning there versus no head‑of‑line blocking that the protocol is supposed to –

A: That was an excellent, excellent point, and one [aspect] of it is: NGINX has worker processes – and I’m sure Valentin can fill in more details if I get something wrong – you have multiple worker processes. A given worker process now has to handle the HTTP/2 pipeline.

Within that one pipeline, the HTTP/2 requests can be interleaved. There’s no head‑of‑line blocking within that pipeline, but the pipeline itself can block. So if it’s stalled out on something, then forget about it. It doesn’t matter how many things are queued up within the H2 connection; those are all now blocked because the process itself is blocked.
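[Editor – The following Python asyncio sketch is an analogy for the process‑level blocking Nathan describes, not NGINX code (NGINX is written in C and runs its own event loop in each worker). One event loop stands in for a worker process, and coroutines stand in for interleaved HTTP/2 streams on one connection; the function names are hypothetical. When one handler makes a blocking call, every other stream on that loop stalls with it.]

```python
import asyncio
import time


async def stream(name: str, finished: dict, start: float) -> None:
    """A well-behaved stream: yields to the event loop while it 'waits on I/O'."""
    await asyncio.sleep(0.01)
    finished[name] = time.monotonic() - start


async def stalled_stream(blocking: bool) -> None:
    """A stream whose handler either blocks the whole loop or awaits politely."""
    if blocking:
        time.sleep(0.2)           # blocking call: nothing else on this loop can run
    else:
        await asyncio.sleep(0.2)  # non-blocking: the other streams keep progressing


async def serve_connection(blocking: bool) -> dict:
    """Interleave several streams on one event loop (standing in for one worker)."""
    finished: dict = {}
    start = time.monotonic()
    await asyncio.gather(
        *(stream(f"stream-{i}", finished, start) for i in range(1, 4)),
        stalled_stream(blocking),
    )
    return finished


if __name__ == "__main__":
    for blocking in (False, True):
        done = asyncio.run(serve_connection(blocking))
        slowest = max(done.values())
        print(f"blocking={blocking}: fast streams done after {slowest * 1000:.0f} ms")
```

[With `blocking=True`, the three fast streams cannot finish until the blocking call returns after 200 ms, even though each needed only about 10 ms.]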

Q: Why are many connections a performance problem?

A: Each connection chews up a fair amount of memory. One of the things that we found, especially comparing different SSL terminators, was that. . . if you have too many connections, [the total memory reserved for all those connections] winds up blowing past a reasonable amount.

. . . In a given caching server, we have to reserve some amount of the memory for the actual objects we’re interested in, and we have to use some amount for the networking stack. We tend to push our buffers really, really big because we’re really interested in performance and we want to make sure that our server itself, and certainly our network, is never the bottleneck.

As a result. . . we allow our congestion windows to run up really, really high and we allow our TCP windows to go incredibly, incredibly big. Unfortunately that just chews up constant, huge amounts of memory. And it correlates with the number of connections. So the fewer connections we have, the less memory we use; the more connections we have, the more memory we need.
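[Editor – A back‑of‑the‑envelope Python sketch of why memory tracks connection count: if every connection can grow its socket buffers to the same ceiling, the worst case scales linearly with the number of connections. The 256 KiB receive and send ceilings are hypothetical, not StackPath’s settings, and the connection counts come from Nathan’s earlier example of going from 100,000 connections to 25,000–50,000. Real kernels autotune buffer sizes (on Linux, within the `net.ipv4.tcp_rmem` and `net.ipv4.tcp_wmem` limits), so actual usage is usually well below this ceiling.]

```python
KIB = 1024
GIB = 1024 ** 3

# Hypothetical per-connection socket buffer ceilings, not real production values.
RCVBUF = 256 * KIB
SNDBUF = 256 * KIB


def worst_case_buffer_bytes(connections: int, rcvbuf: int, sndbuf: int) -> int:
    """Upper bound on buffer memory if every connection fills both of its buffers."""
    if min(connections, rcvbuf, sndbuf) < 0:
        raise ValueError("inputs must be non-negative")
    return connections * (rcvbuf + sndbuf)


if __name__ == "__main__":
    for conns in (100_000, 50_000, 25_000):
        total = worst_case_buffer_bytes(conns, RCVBUF, SNDBUF)
        print(f"{conns:,} connections -> up to {total / GIB:.1f} GiB of socket buffers")
```

[Under these assumptions the ceiling drops from about 48.8 GiB at 100,000 connections to about 12.2 GiB at 25,000.]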

Q: So is it about memory or cache?

A: Well, those are the same answer, right? Because we use it for both purposes.

Q: When you talk about encryption, you’re talking about symmetric encryption. Modern processors. . . have specialized instructions for that. Why do you think the specialized card will do better?

A: One of the things you’re referring to is Intel’s AES-NI instructions.

A little quick side story about that: I’ve actually taken receipt of servers which, for some bizarre reason, had AES-NI disabled in the BIOS. I’ve actually made the mistake of putting in servers which I thought were configured correctly but came from the manufacturer incorrectly configured. I was wondering: why is my CPU load so high? What’s wrong with these servers? It took a little bit of diving down into it to realize that the reason. . . was the AES-NI instructions had been disabled. By enabling them, I was luckily able to bring our CPU load back down into line with what we were expecting to see.
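[Editor – A minimal Python sketch for catching the misconfiguration Nathan describes on Linux x86 servers: the kernel lists AES‑NI support as the `aes` flag in `/proc/cpuinfo`, and when the feature is disabled in the BIOS the flag is typically absent. The helper names are hypothetical; a quick `grep -w aes /proc/cpuinfo` does the same job.]

```python
from pathlib import Path


def cpu_flags(cpuinfo_text: str) -> set:
    """Collect the feature flags listed on the 'flags' lines of /proc/cpuinfo."""
    flags = set()
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        if sep and key.strip() == "flags":
            flags.update(value.split())
    return flags


def has_aes_ni(cpuinfo_text: str) -> bool:
    """True if the x86 'aes' flag (AES-NI) is present."""
    return "aes" in cpu_flags(cpuinfo_text)


if __name__ == "__main__":
    cpuinfo = Path("/proc/cpuinfo")
    if cpuinfo.exists():
        print("AES-NI reported by the kernel:", has_aes_ni(cpuinfo.read_text()))
    else:
        print("/proc/cpuinfo not found (this check assumes Linux)")
```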

Currently there are a couple of competitors in the space for SSL‑offload boards. Intel and other companies like Cavium have them. . . . The promise of these boards is the ability not only to offload encryption overhead from the CPU, but also [to do] other things like compression.

You can play some other games with these things, and anything that we can do to decrease the cycle time. . . ([when] a content request comes in, goes into a queue, has to get satisfied, and [the response] goes back out) and to increase our throughput. . . is of tremendous interest. So that’s sort of a very broad answer to why we would be interested in looking at a card.

Q: Do you guys use H2 to deliver videos and if so, what’s the performance like?

A: . . . I’m going to talk from the MaxCDN side, from the legacy business. It’s used to. . . negotiate either HLS or HDS content, so we’re not using it for a lot of others.

Little quick side note: at one point, we took advantage of NGINX’s Slice module and wrote a little package we call Ranger. It is designed to assist with video delivery by taking a very large video MP4 file and slicing it into smaller chunks which we can then deliver, taking advantage of range requests.
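[Editor – This is not Ranger’s code, just a Python sketch of the idea behind NGINX’s Slice module (`ngx_http_slice_module`): a client’s byte range is mapped onto fixed‑size, aligned slices, each fetched from upstream with its own `Range` request, so every slice can be cached as a separate object and reused by later requests for overlapping ranges. The 1 MiB slice size and the function names are hypothetical.]

```python
from typing import List, Tuple


def slice_ranges(start: int, end: int, slice_size: int) -> List[Tuple[int, int]]:
    """Inclusive byte ranges of the aligned, fixed-size slices covering [start, end]."""
    if slice_size <= 0 or start < 0 or end < start:
        raise ValueError("invalid byte range or slice size")
    first = (start // slice_size) * slice_size  # round down to a slice boundary
    return [(lo, lo + slice_size - 1) for lo in range(first, end + 1, slice_size)]


def range_header(lo: int, hi: int) -> str:
    """Value of the Range header used to fetch one slice from upstream."""
    return f"bytes={lo}-{hi}"


if __name__ == "__main__":
    SLICE = 1024 * 1024  # hypothetical 1 MiB slice size
    # A client asks for bytes 1,500,000 through 3,200,000 of a large MP4 file.
    for lo, hi in slice_ranges(1_500_000, 3_200_000, SLICE):
        print(range_header(lo, hi))
```

[The last slice may extend past the end of the file; HTTP range semantics let the server return only the bytes that exist.]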

So from that perspective, . . . we’re not getting complaints about it. When you actually start hitting it with benchmarks, it performs well enough that it’s not the bottleneck. So again, the protocol here (at least for us) is not yet the bottleneck for this. We have bottlenecks elsewhere in the system, so we haven’t really noticed a difference.

Q: So obviously it’s trivial to look at the H1 wire protocol through just netcat or tcpdump or something like that – just read the ASCII protocol and see what’s going on. You can’t do that with H2 because it’s binary, and because it’s encrypted, since browsers require encryption for it.

Have you guys done any work on building Wireshark plug‑ins or doing any custom work to do any wire debugging for your inbound traffic?

A: We haven’t monkeyed much with Wireshark for that particular purpose. Obviously we use it elsewhere, tremendously. However, . . . if you have the key. . . there are ways to use Wireshark. . . to go ahead and decrypt the contents that are contained within the packet.
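[Editor – One common way to decrypt traffic you control in Wireshark is a TLS key log file rather than the server’s private key; the private‑key method works only for non‑forward‑secret RSA key exchange, not ECDHE. This Python sketch, assuming Python 3.8+ built against OpenSSL 1.1.1 or later, creates a client context that offers HTTP/2 via ALPN and writes session secrets to a hypothetical log path. It is not a description of StackPath’s tooling.]

```python
import os
import ssl
import tempfile


def debuggable_client_context(keylog_path: str) -> ssl.SSLContext:
    """Client TLS context that offers h2 via ALPN and logs TLS session secrets."""
    ctx = ssl.create_default_context()          # certificate verification stays on
    ctx.set_alpn_protocols(["h2", "http/1.1"])  # prefer HTTP/2, fall back to HTTP/1.1
    # Needs Python 3.8+ with OpenSSL 1.1.1+. Anyone holding this file can decrypt
    # the captured sessions, so protect it like a private key.
    ctx.keylog_filename = keylog_path
    return ctx


if __name__ == "__main__":
    # Hypothetical path; capture traffic made with this context, then load the
    # file into Wireshark to decrypt it.
    path = os.path.join(tempfile.gettempdir(), "tls-keys.log")
    context = debuggable_client_context(path)
    print("Logging TLS secrets to", context.keylog_filename)
```

[Point Wireshark’s TLS protocol preference “(Pre)-Master-Secret log filename” at the same file. Many browsers write the same format when the `SSLKEYLOGFILE` environment variable is set.]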

. . . As a little side note: when I’m using Wireshark. . . [and] running tcpdump, I tend to set the snaplen really, really tiny because I actually don’t want the content. I’m trying to find out: what’s the metadata? How is it actually performing? Am I dropping packets? Where is the delay actually occurring? Am I getting timeouts? . . . Fortunately, metadata is not encrypted, and so that stuff I can actually read. But no, no, we haven’t played much with it yet.
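[Editor – Even after decryption, HTTP/2 is not readable like HTTP/1.x ASCII, because every message travels in binary frames. This Python sketch decodes the fixed 9‑octet frame header defined in RFC 7540, section 4.1 (a 24‑bit length, an 8‑bit type, 8 bits of flags, 1 reserved bit, and a 31‑bit stream identifier). The helper names are hypothetical.]

```python
import struct
from typing import NamedTuple

# Frame type codes defined in RFC 7540, section 6.
FRAME_TYPES = {
    0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
    0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
    0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION",
}


class FrameHeader(NamedTuple):
    length: int      # payload length in octets (24 bits)
    frame_type: str  # decoded type name
    flags: int       # type-specific flag bits
    stream_id: int   # 31-bit stream identifier; 0 means the whole connection


def parse_frame_header(data: bytes) -> FrameHeader:
    """Decode the fixed 9-octet header that starts every HTTP/2 frame."""
    if len(data) < 9:
        raise ValueError("an HTTP/2 frame header is 9 octets")
    # Big-endian: 1 + 2 octets of length, then type, flags, and a 4-octet stream field.
    len_hi, len_lo, type_code, flags, stream_field = struct.unpack(">BHBBI", data[:9])
    length = (len_hi << 16) | len_lo
    stream_id = stream_field & 0x7FFF_FFFF   # drop the reserved high bit
    name = FRAME_TYPES.get(type_code, f"UNKNOWN(0x{type_code:02x})")
    return FrameHeader(length, name, flags, stream_id)


if __name__ == "__main__":
    # A SETTINGS frame with the ACK flag set: 9 opaque bytes on the wire.
    print(parse_frame_header(bytes.fromhex("000000040100000000")))
```

[Header blocks inside HEADERS frames are additionally HPACK‑compressed, so reading them also requires an HPACK decoder.]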

Q: A lot of HTTP queries go through transparent proxies on the Internet from various services. Do you know which percentage of those might support HTTP/2 and whether it’s a problem [if they don’t]? Will it result in a lot of downgrading to HTTP/1?

A: You’re absolutely right. . . . In practice, this is what we have to do with NGINX. Yes, if NGINX is that transparent proxy, you can only do H2 on the client‑facing side [that is, between client and proxy]. My mod_proxy [ngx_http_proxy_module] [does HTTP] 1.1 and 1.0 only.

There will be a lot of these games going on where we have to switch the protocol version. Luckily since the object is the same, we can bounce it across the protocols. At least the end user still gets the exact same content; he gets what he requests.
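[Editor – A minimal Python sketch of what “bouncing” a request across protocol versions involves on the proxy: rebuilding an HTTP/1.1 request head from decoded HTTP/2 header fields. Per RFC 7540, section 8.1.2.3, an intermediary converting to HTTP/1.1 must add a `Host` header from `:authority` if none is present. This is not NGINX’s implementation; it skips header validation, cookie‑field joining, and request bodies, and the function name is hypothetical.]

```python
from typing import List, Tuple


def h2_to_http11(fields: List[Tuple[str, str]]) -> bytes:
    """Rebuild an HTTP/1.1 request head from decoded HTTP/2 request header fields."""
    pseudo = {name: value for name, value in fields if name.startswith(":")}
    regular = [(name, value) for name, value in fields if not name.startswith(":")]
    # :method and :path become the request line; :scheme is simply dropped here.
    lines = [f"{pseudo[':method']} {pseudo[':path']} HTTP/1.1"]
    # RFC 7540 8.1.2.3: create Host from :authority when no Host field was sent.
    if ":authority" in pseudo and not any(name == "host" for name, _ in regular):
        lines.append(f"Host: {pseudo[':authority']}")
    lines += [f"{name}: {value}" for name, value in regular]
    return ("\r\n".join(lines) + "\r\n\r\n").encode("ascii")


if __name__ == "__main__":
    request = h2_to_http11([
        (":method", "GET"), (":scheme", "https"),
        (":authority", "example.com"), (":path", "/video.mp4"),
        ("accept", "*/*"),
    ])
    print(request.decode("ascii"))
```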

But, you’re absolutely right, it’s going to be stuck passing through multiple protocol versions which means that you have to now optimize for those middle layers as well as for the edge layer.

When you start looking at how you want your topology to look, and how you can take advantage of things like keepalives or. . . very big TCP buffers that [mean] you can get very, very fast responses between your midtier level and the edge, then. . . maybe you can ameliorate some of the issues.

But you’re absolutely right, you are going to see protocol renegotiations going on and you’re going to see different connections.

Q: Do you have any numbers to dish out, like: if you had 10 million unique HTTP users, do you know which percentage would actually manage to get HTTP/2 end‑to‑end and which percentage would need to be downgraded?

A: [With respect to] end‑to‑end, anybody who’s talking directly to an NGINX origin can negotiate the full H2 connection. So you’ve actually kind of asked two questions. . . one of which is what percentage of websites actually offer H2 negotiation right now? And that’s surprisingly small. Let’s call it 20, 30, 40 percent, if that. This is from Google statistics.

However, the other question you’re asking is whether a request can go end‑to‑end, which matters because CDNs are so common and serve so much of the world’s traffic.

In practice. . . a very, very tiny percentage is actually fully end‑to‑end because most guys are flowing through a transparent proxy. . . . For most guys, you’re only gonna be able to do the H2 termination from the edge on. Everything else internally is going to be H1.1.
