Balancing cost and risk is top of mind for enterprises today. But without sufficient visibility, it is impossible to know if resources are being used effectively or consistently.

Kubernetes enables complex deployments of containerized workloads, which are often transient and consume variable amounts of cluster resources. That makes cloud environments a great fit for Kubernetes, because they offer pricing models where you pay only for what you use instead of having to overprovision in anticipation of peak loads. Of course, cloud vendors charge a premium for that convenience. What if you could unlock the dynamic load balancing of public cloud without the cost? And what if you could use the same solution for your on‑premises and public cloud deployments?

Now you can. Kubecost and NGINX are helping Kubernetes users reduce complexity and costs in a wide range of deployments. Used together, they give you high performance along with detailed visibility into that performance and its associated costs.

With the insight from Kubecost, you can dramatically reduce the cost of your Kubernetes deployments while increasing performance and security. Examples of what you can achieve with Kubecost include:

- Identify misconfigurations, such as a pod generating significant egress traffic to a storage bucket in another region.

- Consolidate load balancer and Ingress controller tooling across a multi‑cluster Kubernetes footprint to reduce costs and improve performance.

- Understand how your containers are performing so you can size them correctly and reduce costs without adding risk.

NGINX Delivers the Performance You Need

NGINX Ingress Controller is one of the most widely used Ingress technologies – with more than a billion pulls on Docker Hub to date – and is synonymous with high‑performance, scalable, and secure modern apps running in production.

NGINX Ingress Controller runs alongside NGINX Open Source or NGINX Plus instances in a Kubernetes environment. It monitors standard Kubernetes Ingress resources and NGINX custom resources to discover requests for services that require Ingress load balancing. NGINX Ingress Controller then automatically configures NGINX or NGINX Plus to route and load balance traffic to these services.
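To make the translation from Ingress resources to NGINX configuration more concrete, here is a toy sketch in Python. It is not how NGINX Ingress Controller is implemented: the dictionary layout only loosely mirrors the `spec.rules` structure of a Kubernetes Ingress, and the function `render_nginx_config`, the `endpoints` mapping (standing in for pod addresses the real controller discovers from the Kubernetes API), and the upstream naming scheme are all hypothetical. The configuration the real controller generates is far more involved.

```python
from typing import Dict, List


def render_nginx_config(ingress: dict, endpoints: Dict[str, List[str]]) -> str:
    """Translate a simplified Ingress object into NGINX upstream and server blocks.

    `endpoints` (hypothetical) maps a Service name to its ready pod addresses
    ("ip:port"), standing in for what a real controller reads from the API.
    """
    namespace = ingress["metadata"]["namespace"]
    upstreams: Dict[str, List[str]] = {}
    servers: List[str] = []

    for rule in ingress["spec"]["rules"]:
        lines = ["server {", "    listen 80;", f"    server_name {rule['host']};"]
        for path in rule["http"]["paths"]:
            svc = path["backend"]["service"]
            # Hypothetical naming scheme: one upstream per namespace/Service/port.
            upstream = f"{namespace}-{svc['name']}-{svc['port']['number']}"
            pods = endpoints.get(svc["name"], [])
            lines.append(f"    location {path['path']} {{")
            if pods:
                # Load balance this path across all ready pods of the Service.
                upstreams[upstream] = pods
                lines.append(f"        proxy_pass http://{upstream};")
            else:
                # No ready pods: answer directly rather than emit an empty upstream,
                # which NGINX would reject.
                lines.append("        return 503;")
            lines.append("    }")
        lines.append("}")
        servers.append("\n".join(lines))

    blocks = [
        "upstream {} {{\n{}\n}}".format(name, "\n".join(f"    server {p};" for p in pods))
        for name, pods in upstreams.items()
    ]
    return "\n\n".join(blocks + servers)


if __name__ == "__main__":
    cafe = {
        "metadata": {"name": "cafe", "namespace": "default"},
        "spec": {"rules": [{
            "host": "cafe.example.com",
            "http": {"paths": [
                {"path": "/tea", "backend": {"service": {"name": "tea", "port": {"number": 80}}}},
                {"path": "/coffee", "backend": {"service": {"name": "coffee", "port": {"number": 80}}}},
            ]},
        }]},
    }
    print(render_nginx_config(cafe, {"tea": ["10.0.0.11:8080", "10.0.0.12:8080"]}))
```

When the set of ready pods changes, a controller following this pattern would regenerate the configuration and have NGINX apply it; the sketch covers only the rendering step.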

NGINX Ingress Controller can be used as a universal tool to combine API gateway, load balancer, and Ingress controller functions, simplifying operations and reducing cost and complexity.

Kubecost Reveals the True Cost of Network Operations

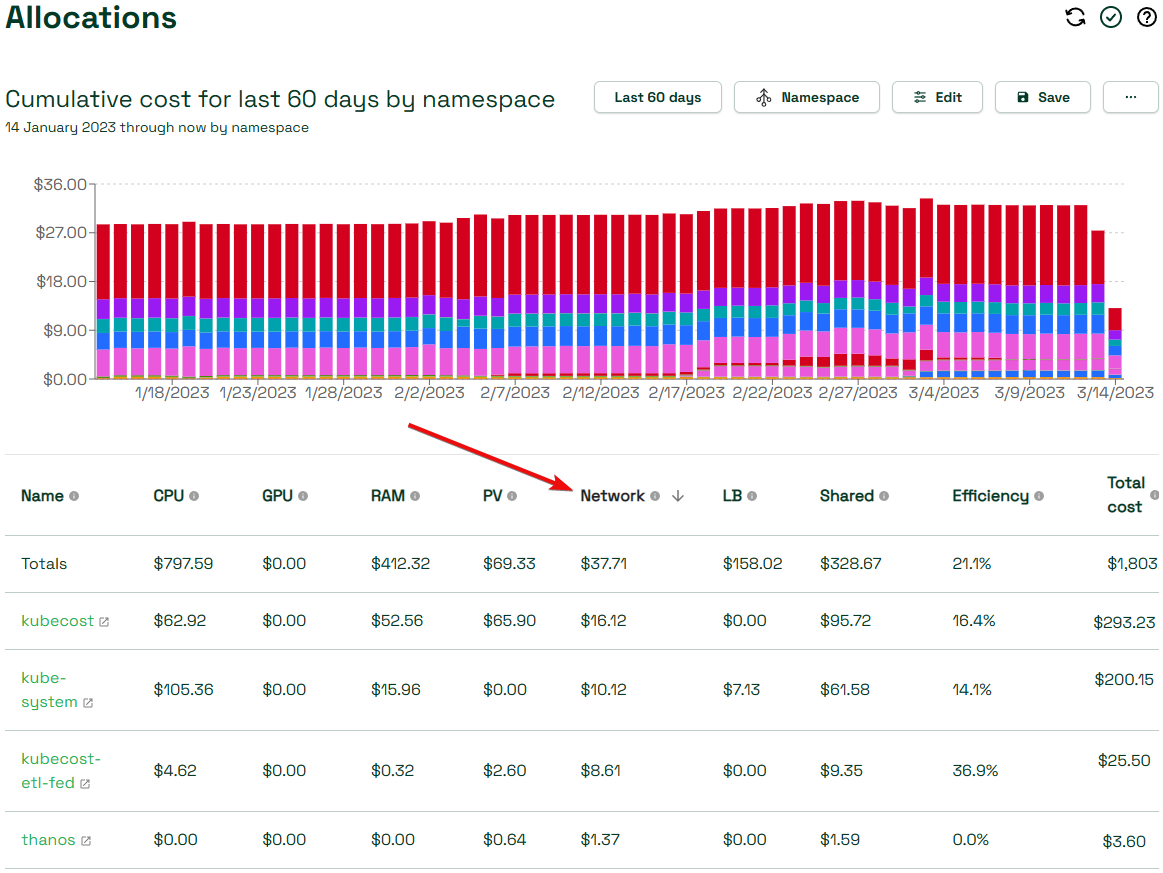

Kubecost gives Kubernetes users visibility into the cost of running each container in their clusters. This includes the obvious CPU, memory, and storage costs on each node. But Kubecost goes beyond those basics to reveal per‑pod network transfer costs, which cloud providers typically charge for data egress.

Kubecost offers two configuration options, and the one you choose determines how accurately network costs are allocated to the workloads that actually incur them.

The first option is integrated cloud billing. Kubecost pulls billing data from the cloud provider, including the network transfer costs associated with the node that handled the traffic. Kubecost then distributes this cost among the pods on that node in proportion to each pod's share of the node's container traffic.

While the total reported network cost is accurate, this method is not ideal. For many pods, the only significant traffic stays within their own zone (and is therefore typically free), yet this method still attributes network costs to those workloads.
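The proportional split, and why it misattributes cost, can be seen in a minimal Python sketch. It assumes per-pod traffic byte counts for a single node are available; the function name `allocate_node_network_cost` and the numbers in the usage example are hypothetical, not Kubecost's code or real billing data.

```python
from typing import Dict


def allocate_node_network_cost(node_cost: float, pod_bytes: Dict[str, int]) -> Dict[str, float]:
    """Split one node's billed network cost across its pods by share of traffic.

    Only the volume of each pod's traffic matters here; where that traffic
    went (same zone, another region, the internet) is not considered.
    """
    total = sum(pod_bytes.values())
    if total == 0:
        # No traffic observed on the node: nothing to attribute.
        return {pod: 0.0 for pod in pod_bytes}
    return {pod: node_cost * b / total for pod, b in pod_bytes.items()}


if __name__ == "__main__":
    # Hypothetical node billed $12.00 for network transfer. Suppose the "cache"
    # pod only talks to clients in its own zone; it is still charged for its
    # share of the node's total traffic.
    shares = allocate_node_network_cost(12.0, {"api": 6_000, "worker": 3_000, "cache": 3_000})
    print(shares)
```

The shares always sum to the node's bill, which is why the total is accurate, but a pod with only in-zone traffic (here, `cache`) receives a nonzero share.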

The second option, network cost configuration, addresses this limitation of cloud billing integration by looking at the source and destination of all traffic, so that costs are attributed to the pods that generate billable traffic rather than spread across every pod on a node. The Kubecost Allocations dashboard displays the proportion of spend across multiple categories, including Kubernetes concepts – like namespace, label, and service – and organizational divisions like team, product, project, department, and environment.
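To illustrate why source and destination matter, here is a minimal Python sketch that classifies each traffic flow as in-zone, cross-zone, cross-region, or internet-bound and prices only the billable categories. The `Flow` type, the zone and region fields, and the per-GB prices are hypothetical placeholders, not Kubecost's data model or any provider's actual rates.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional

# Hypothetical per-GB prices, for illustration only; real rates vary by provider and region.
PRICE_PER_GB = {"in-zone": 0.00, "cross-zone": 0.01, "cross-region": 0.02, "internet": 0.09}


@dataclass
class Flow:
    pod: str
    src_zone: str
    src_region: str
    dst_zone: Optional[str]    # None when the destination is outside the cloud
    dst_region: Optional[str]  # None when the destination is outside the cloud
    gb: float


def classify(flow: Flow) -> str:
    """Label a flow by where its destination sits relative to its source."""
    if flow.dst_region is None:
        return "internet"
    if flow.dst_region != flow.src_region:
        return "cross-region"
    if flow.dst_zone != flow.src_zone:
        return "cross-zone"
    return "in-zone"


def network_cost_by_pod(flows: List[Flow]) -> Dict[str, float]:
    """Sum each pod's cost using the price of its own traffic categories."""
    costs: Dict[str, float] = {}
    for f in flows:
        costs[f.pod] = costs.get(f.pod, 0.0) + f.gb * PRICE_PER_GB[classify(f)]
    return costs


if __name__ == "__main__":
    flows = [
        Flow("api", "us-east-1a", "us-east-1", None, None, 100.0),                     # internet egress
        Flow("worker", "us-east-1a", "us-east-1", "eu-west-1b", "eu-west-1", 50.0),    # bucket in another region
        Flow("cache", "us-east-1a", "us-east-1", "us-east-1a", "us-east-1", 3.0),      # same zone
    ]
    print(network_cost_by_pod(flows))
```

Unlike a proportional split of the node's bill, the in-zone `cache` pod is charged nothing here, while the `worker` pod's cross-region traffic shows up against that pod specifically.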

Get All the Details at Our Upcoming Webinar

Join us on April 11 at 10:00 a.m. Pacific Time for a joint webinar, Managing Kubernetes Cost and Performance with NGINX & Kubecost. In live demos and how‑tos, we’ll show you how to implement the Kubecost configuration options mentioned here to reduce the cost and optimize the performance of your Kubernetes deployments.