Building Customized Upstream Selection with JavaScript Code

[Editor – This post is one of several that explore use cases for the NGINX JavaScript module. For a complete list, see Use Cases for the NGINX JavaScript Module.

Author – As mentioned when this post was published at the release of F5 NGINX Plus R10, we’re continuing to extend and enhance the NGINX JavaScript module. We have updated the configuration of the frontend service and the name of the JavaScript function in this post to reflect updates to the module in NGINX Plus R11 (NGINX 1.11.5).

The code in this post is updated to use the js_import directive, which replaces the js_include directive in NGINX Plus R23 and later. For more information, see the reference documentation for the NGINX JavaScript module – the Example Configuration section shows the correct syntax for NGINX configuration and JavaScript files.]

With the launch of NGINX Plus R10, we introduced a preview release of our next‑generation programmatic configuration language: the NGINX JavaScript module, formerly called nginScript. The module is a unique JavaScript implementation for NGINX and NGINX Plus, designed specifically for server‑side use cases and per‑request processing.

One of the key benefits of NGINX JavaScript is the ability to read and set NGINX configuration variables. The variables can then be used to make custom routing decisions unique to the needs of the environment. This means that you can use the power of JavaScript to implement complex functionality that directly impacts request processing.

This post applies to both NGINX Open Source and NGINX Plus, and the term NGINX represents both.

The Use Case – Transitioning to a New Application Server

In this blog post we show how to use NGINX JavaScript to implement a graceful switchover to a new application server. Instead of taking a disruptive “big‑bang approach” where everything is transitioned all at once, we define a time window during which all clients are progressively transitioned to the new application server. In this way, we can gradually – and automatically – increase traffic to the new application server.

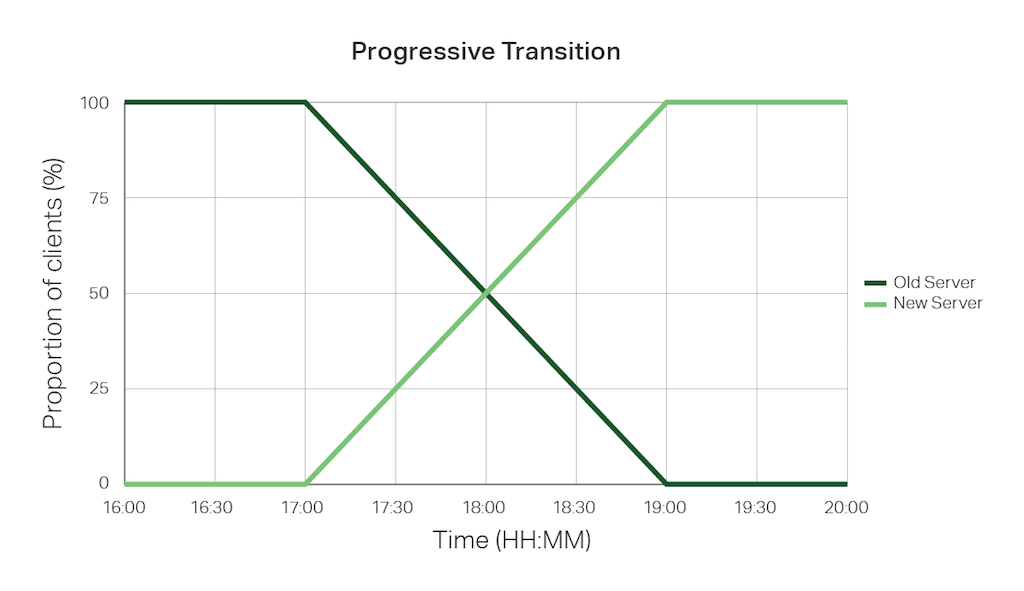

We’re defining a two‑hour window during which we want our progressive switchover to take place, in this example from 5 to 7 p.m. After the first 12 minutes we expect 10% of clients to be directed to the new application server, then 20% of clients after 24 minutes, and so on. The following graph illustrates the transition.

One important requirement of this “progressive transition” configuration is that transitioned clients don’t revert to the original server – once a client has been directed to the new application server, it continues to be directed there for the remainder of the transition window (and afterward, of course).

We will describe the complete configuration below, but in brief, when NGINX processes a new request that matches the application that is being transitioned, it follows these rules:

- If the transition window has not started, direct the request to the old application server.

- If the transition window has elapsed, direct the request to the new application server.

- If the transition is in progress:

  - Calculate the current (time) position in the transition window.

  - Calculate a hash for the client IP address.

  - Calculate the position of the hash in the range of all possible hash values.

  - If the hash position is less than the current position in the transition window, direct the request to the new application server; otherwise direct the request to the old application server.
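The rules above can be sketched as a small pure function. This is only an illustration: the helper name chooseUpstream and the return values "old" and "new" are assumptions made for this sketch, and the hash position is passed in as a number between 0 and 1 rather than being computed from an IP address.

```javascript
// Hypothetical helper: every argument is a plain number so that the rules can
// be checked in isolation. Times are in milliseconds; hashPos is in the range 0-1.
function chooseUpstream(timeNow, windowStart, windowEnd, hashPos) {
    if (timeNow < windowStart) {
        return "old";                       // transition window has not started
    }
    if (timeNow > windowEnd) {
        return "new";                       // transition window has elapsed
    }
    // Fraction of the window that has elapsed (0 at the start, 1 at the end)
    var timePos = (timeNow - windowStart) / (windowEnd - windowStart);
    // A client moves over once the elapsed fraction passes its hash position
    return hashPos < timePos ? "new" : "old";
}

// Two-hour window, with minutes converted to milliseconds
var start = 0;
var end = 120 * 60 * 1000;
var client = 0.25;                          // this client's hash position

console.log(chooseUpstream(12 * 60 * 1000, start, end, client)); // "old" (10% elapsed)
console.log(chooseUpstream(36 * 60 * 1000, start, end, client)); // "new" (30% elapsed)
console.log(chooseUpstream(90 * 60 * 1000, start, end, client)); // "new" (75% elapsed)
```

Because the elapsed fraction only increases while a client's hash position stays fixed, a client that has been moved to the new server is never sent back to the old one.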

Let’s get started!

NGINX and NGINX Plus Configuration for HTTP Applications

In this example we are using NGINX as a reverse proxy to a web application server, so all of our configuration is in the http context. For details about the configuration for TCP and UDP applications in the stream context, see below.

First, we define separate upstream blocks for the sets of servers that host our old and new application code respectively. Even with our progressive transition configuration, NGINX will continue to load balance between the available servers during the transition window.

Next we define the frontend service that NGINX presents to clients.

We are using NGINX JavaScript to determine which upstream group to use, so we need to specify where the NGINX JavaScript code resides. In NGINX Plus R11 (NGINX 1.11.5) and later, all NGINX JavaScript code must be located in a separate file and the js_import directive specifies its location.

The js_set directive sets the value of the $upstream variable. Note that the directive does not instruct NGINX to call the NGINX JavaScript function transitionStatus. NGINX variables are evaluated on demand, that is, at the point during request processing that they are used. So the js_set directive tells NGINX how to evaluate the $upstream variable when it is needed.

The server block defines how NGINX handles incoming HTTP requests. The listen directive tells NGINX to listen on port 80 – the default for HTTP traffic, although a production configuration normally uses SSL/TLS to protect data in transit.

The location block applies to the entire application space (/). Within this block we use the set directive to define the transition window with two new variables, $transition_window_start and $transition_window_end. Note that dates can be specified in RFC 2822 format (as in the snippet) or ISO 8601 format (with milliseconds). Both formats must include their respective local time zone designator. This is because the JavaScript Date.now function returns the number of milliseconds elapsed since midnight UTC on January 1, 1970, a value that does not depend on the local time zone, and so an accurate time comparison is possible only if the time zone of the configured dates is specified.
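To illustrate why the time zone designator matters, the following sketch parses the same instant in both formats and checks it against the UTC-based scale that Date.now uses. The date values are illustrative assumptions, not values taken from the original configuration.

```javascript
// Illustrative values only: 5 p.m. local time in a UTC+1 time zone.
var rfc2822 = new Date("Wed, 31 Aug 2016 17:00:00 +0100");
var iso8601 = new Date("2016-08-31T17:00:00.000+01:00");

// Both strings name the same instant: 16:00:00 UTC on 31 August 2016.
var expected = Date.UTC(2016, 7, 31, 16, 0, 0); // months are zero-based

console.log(rfc2822.getTime() === expected);
console.log(iso8601.getTime() === expected);

// Date.now() is on the same scale (milliseconds since the Unix epoch),
// so it can be compared directly with either parsed value.
console.log(Date.now() > rfc2822.getTime());
```

Without the designator, the parsed instant would depend on the time zone of the machine running NGINX, and the window could start or end at an unintended time.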

The proxy_pass directive directs the request to the upstream group that is calculated by the transitionStatus function.

Finally, the error_log directive enables NGINX JavaScript logging of events with severity level info and higher (by default only events at level warn and higher are logged). By placing this directive inside the location block and naming a separate log file, we avoid cluttering the main error log with all info messages.
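Putting the pieces described in this section together, the configuration might look like the following sketch. The upstream group names (old and new), the server addresses, the dates, and the log file path are placeholders chosen for illustration. The js_set line assumes the default js_import namespace, which is the file name without its extension, and it assumes that the JavaScript file exports the transitionStatus function.

```nginx
# Placeholder upstream groups for the old and new application servers
upstream old {
    server 10.0.0.1;
    server 10.0.0.2;
}

upstream new {
    server 10.0.0.9;
    server 10.0.0.10;
}

# Location of the NGINX JavaScript code, and the function that
# evaluates $upstream whenever the variable is used
js_import /etc/nginx/progressive_transition.js;
js_set $upstream progressive_transition.transitionStatus;

server {
    listen 80;

    location / {
        # Transition window, with an explicit time zone designator
        set $transition_window_start "Wed, 31 Aug 2016 17:00:00 +0100";
        set $transition_window_end   "Wed, 31 Aug 2016 19:00:00 +0100";

        # $upstream evaluates to the name of one of the upstream groups
        proxy_pass http://$upstream;

        # Separate log file for info-level messages (path is an assumption)
        error_log /var/log/nginx/transition.log info;
    }
}
```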

NGINX JavaScript Code for HTTP Applications

We assume that you have already enabled the NGINX JavaScript module. Instructions appear at the end of this article.

We put our NGINX JavaScript code in /etc/nginx/progressive_transition.js as specified by the js_import directive. All of our functions appear in this file.

A function must be defined before any function that calls it, so we start by defining a function that returns a hash of the client’s IP address. If our application server is predominantly used by users on the same LAN then all of our clients have very similar IP addresses, so we need the hash function to return an even distribution of values even for a small range of input values.

In this example we are using the FNV‑1a hash algorithm, which is compact, fast, and has reasonably good distribution characteristics. Its other advantage is that it returns a positive 32‑bit integer, which makes it trivial to calculate the position of each client IP address in the output range. The following code is a JavaScript implementation of the FNV‑1a algorithm.
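A minimal sketch of a 32‑bit FNV‑1a implementation is shown here, written in ES5 style. The function name fnv32a matches the name used later in this post; the body is one common way to write the algorithm and is not necessarily identical to the original listing.

```javascript
// FNV-1a, 32-bit variant: XOR each input character code into the hash, then
// multiply by the FNV prime, keeping the result within 32 bits. For IP
// addresses every character is ASCII, so a character code equals a byte.
function fnv32a(str) {
    var hval = 2166136261;                  // FNV offset basis (0x811c9dc5)
    for (var i = 0; i < str.length; i++) {
        hval ^= str.charCodeAt(i);
        // Multiply by the FNV prime 16777619 (2^24 + 2^8 + 2^7 + 2^4 + 2 + 1)
        // using shifts and adds; the next bitwise operation wraps to 32 bits
        hval += (hval << 1) + (hval << 4) + (hval << 7) + (hval << 8) + (hval << 24);
    }
    return hval >>> 0;                      // reinterpret as an unsigned integer
}

// Neighboring addresses on the same LAN produce unrelated hash values
console.log(fnv32a("192.168.1.10"));
console.log(fnv32a("192.168.1.11"));
```

The final unsigned right shift is what guarantees a non‑negative result, because JavaScript bitwise operators otherwise work on signed 32‑bit values.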

Next we define the function, transitionStatus, that sets the $upstream variable in the js_set directive in our NGINX configuration.

The transitionStatus function has a single parameter, req, which is the JavaScript object representing the HTTP request. The variables property of the request object contains all of the NGINX configuration variables, including the two we set to define the transition window, $transition_window_start and $transition_window_end.

The outer if/else block determines whether the transition window has started, finished, or is in progress. If it’s in progress, we obtain the hash of the client IP address by passing req.remoteAddress to the fnv32a function.

We then calculate where the hashed value sits within the range of all possible values. Because the FNV‑1a algorithm returns a 32‑bit integer we can simply divide the hashed value by 4,294,967,295 (2^32 − 1, the largest value an unsigned 32‑bit integer can hold).

At this point we invoke req.log() to log the hash position and the current position of the transition time window. This is logged at the info level to the error_log defined in our NGINX configuration and produces log entries such as the following example. The js: prefix serves to denote a log entry resulting from JavaScript code.

2016/09/08 17:44:48 [info] 41325#41325: *84 js: timepos = 0.373333, hashpos = 0.840858

Finally, we compare the hashed value’s position within the output range with our current position within the transition time window, and return the name of the corresponding upstream group.
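The steps described in this section might be assembled as in the following sketch. It is an approximation under stated assumptions rather than the original listing: the upstream group names "old" and "new" are placeholders, the optional second argument is a hypothetical hook for testing that NGINX never supplies, and a compact copy of the hash function is repeated so that the block runs on its own.

```javascript
// Compact copy of the FNV-1a hash so that this sketch is self-contained
function fnv32a(str) {
    var hval = 2166136261;
    for (var i = 0; i < str.length; i++) {
        hval ^= str.charCodeAt(i);
        hval += (hval << 1) + (hval << 4) + (hval << 7) + (hval << 8) + (hval << 24);
    }
    return hval >>> 0;
}

// req is the request object passed in by js_set. The now argument is a
// hypothetical testing hook; when it is omitted, Date.now() is used.
function transitionStatus(req, now) {
    // Read the transition window from the NGINX configuration variables
    var windowStart = new Date(req.variables.transition_window_start).getTime();
    var windowEnd = new Date(req.variables.transition_window_end).getTime();
    var timeNow = (now === undefined) ? Date.now() : now;

    if (timeNow < windowStart) {
        return "old";                       // window has not started
    } else if (timeNow > windowEnd) {
        return "new";                       // window has elapsed
    }

    // Transition in progress: relative position in the window (0-1)
    var timepos = (timeNow - windowStart) / (windowEnd - windowStart);
    // Position of the client's hash in the output range (0-1)
    var hashpos = fnv32a(req.remoteAddress) / 4294967295;
    req.log("timepos = " + timepos + ", hashpos = " + hashpos);

    return (timepos > hashpos) ? "new" : "old";
}

// With js_import, the njs file would also need to expose the function, e.g.:
//   export default { transitionStatus };

// Stand-in for the request object, for trying the function outside NGINX
var mockReq = {
    variables: {
        transition_window_start: "Wed, 31 Aug 2016 17:00:00 +0100",
        transition_window_end: "Wed, 31 Aug 2016 19:00:00 +0100"
    },
    remoteAddress: "10.0.0.1",
    log: function (msg) { console.log("js: " + msg); }
};

console.log(transitionStatus(mockReq));     // window is in the past, so "new"
```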

NGINX and NGINX Plus Configuration for TCP and UDP Applications

The sample configuration for HTTP applications in the previous section is appropriate when NGINX acts as a reverse proxy for an HTTP application server. We can adapt the configuration for TCP and UDP applications by moving the entire configuration snippet to the stream context.

Just one change and one check are required:

- Define the $transition_window_start and $transition_window_end variables in the transitionStatus function instead of with the set directive in the NGINX configuration, because the set directive is not yet supported in the stream context.

- Verify that the NGINX JavaScript module for Stream traffic is enabled with the following load_module directive in the top‑level (“main”) context of the nginx.conf configuration file. Step 2 in the instructions referenced below for NGINX Plus and shown below for NGINX Open Source shows this directive along with the one for the HTTP module.

  load_module modules/ngx_stream_js_module.so;

  Then reload the NGINX software as directed in Step 3.

Summary

We’ve presented the progressive transition use case in this blog post as an illustration of the type of complex programmatic configuration you can achieve with NGINX JavaScript. Deploying custom logic to control the selection of the most appropriate upstream group is one of a large number of solutions now possible with NGINX JavaScript.

We will continue to extend and enhance the capabilities of NGINX JavaScript to support ever‑more useful programmatic configuration solutions for NGINX and NGINX Plus. In the meantime, we would love to hear about the problems you are solving with NGINX JavaScript – please add them to the comments section below.

To try out NGINX JavaScript with NGINX Plus yourself, start your free 30-day trial today or contact us to discuss your use cases.

Enabling NGINX JavaScript for NGINX and NGINX Plus

- Loading the NGINX JavaScript Module for NGINX Plus

- Loading the NGINX JavaScript Module for NGINX Open Source

- Compiling NGINX JavaScript as a Dynamic Module for NGINX Open Source

Loading the NGINX JavaScript Module for NGINX Plus

NGINX JavaScript is available as a free dynamic module for NGINX Plus subscribers. For loading instructions, see the NGINX Plus Admin Guide.

Loading the NGINX JavaScript Module for NGINX Open Source

The NGINX JavaScript module is included by default in the official NGINX Docker image. If your system is configured to use the official prebuilt packages for NGINX Open Source and your installed version is 1.9.11 or later, then you can install NGINX JavaScript as a prebuilt package for your platform.

1. Install the prebuilt package.

   - For Ubuntu and Debian systems:

     $ sudo apt-get install nginx-module-njs

   - For RedHat, CentOS, and Oracle Linux systems:

     $ sudo yum install nginx-module-njs

2. Enable the module by including a load_module directive for it in the top‑level ("main") context of the nginx.conf configuration file (not in the http or stream context). This example loads the NGINX JavaScript modules for both HTTP and TCP/UDP traffic.

   load_module modules/ngx_http_js_module.so;
   load_module modules/ngx_stream_js_module.so;

3. Reload NGINX to load the NGINX JavaScript modules into the running instance.

   $ sudo nginx -s reload

Compiling NGINX JavaScript as a Dynamic Module for NGINX Open Source

If you prefer to compile an NGINX module from source:

- Follow these instructions to build either or both the HTTP and TCP/UDP NGINX JavaScript modules from the open source repository.

- Copy the module binaries (ngx_http_js_module.so, ngx_stream_js_module.so) to the modules subdirectory of the NGINX root (usually /etc/nginx/modules).

- Perform Steps 2 and 3 in Loading the NGINX JavaScript Module for NGINX Open Source.