We are pleased to announce that the fifteenth version of NGINX Plus, our flagship product, is now available. Since our initial release in 2013, NGINX Plus has grown tremendously, in both its feature set and its commercial appeal. There are now more than 1,500 NGINX Plus customers and 350 million users of NGINX Open Source.

Based on NGINX Open Source, NGINX Plus is the only all-in-one load balancer, content cache, web server, and API gateway. NGINX Plus includes exclusive enhanced features designed to reduce complexity in architecting modern applications, along with award‑winning support.

NGINX Plus R15 introduces new gRPC support, HTTP/2 server push, improved clustering support, enhanced API gateway functionality, and more:

- Native gRPC support – gRPC is the new remote procedure call (RPC) standard developed by Google. It’s a lightweight and efficient way for clients and servers to communicate. With this new functionality NGINX Plus can SSL‑terminate, route, and load balance gRPC traffic to your backend servers.

- HTTP/2 server push – With HTTP/2 server push, NGINX Plus can send resources before clients actually request them, improving performance and reducing round trips.

- State sharing across a cluster – With this release, the shared‑memory data used for Sticky Learn session persistence can be shared across all NGINX Plus instances in a cluster. The next few releases of NGINX Plus will build on our clustering capabilities and introduce new cluster‑aware features.

- OpenID Connect integration – You can now provide single sign‑on (SSO) to any web application with NGINX Plus, using the OpenID Connect Authorization Code Flow and issuing JSON Web Tokens (JWTs) to clients. NGINX Plus integrates with CA Single Sign‑On (formerly SiteMinder), ForgeRock OpenAM, Keycloak, Okta, OneLogin, Ping Identity, and other popular identity providers.

- NGINX JavaScript (njs) module enhancements – The njs modules (formerly nginScript) enable you to run JavaScript code during NGINX Plus request processing. The njs module for HTTP now provides support for issuing HTTP subrequests that are independent from, and asynchronous to, the client request. This is helpful in API gateway use cases, giving you the flexibility to modify and consolidate API calls using JavaScript. The njs modules for both HTTP and TCP/UDP also now include crypto libraries enabling implementation of common hash functions.

Rounding out the release are more new features, including a new ALPN variable, updates to multiple dynamic modules, and more.

Changes in Behavior

- NGINX Plus R13 saw the introduction of the all‑new NGINX Plus API, which enables functions that were previously implemented in separate APIs, including dynamic configuration of upstream groups and extended metrics. The previous APIs, configured with the upstream_conf and status directives, are deprecated. We encourage you to check your configuration for these directives and convert to the new api directive as soon as is practical (for details, see Announcing NGINX Plus R13 on our blog). Starting with the next release, NGINX Plus R16, the deprecated APIs will no longer be shipped.

- The NGINX Plus API is updated to version 3 at this release. Previous versions are still supported. If you are considering updating your API clients, consult the API compatibility documentation.

- NGINX Plus packages available at the official repository now have a new numbering scheme. The NGINX Plus package and all dynamic modules now indicate the NGINX Plus release number. Each package version now corresponds to the NGINX Plus version, to make it clearer which version is installed and to simplify module dependencies. This change is transparent unless you are using automated systems that reference packages by version number. In that case, please first test your upgrade process on a non‑production environment.
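As a rough illustration of the migration away from the deprecated upstream_conf and status directives, the following sketch enables the NGINX Plus API with the api directive. The port, location name, and access rules are assumptions for illustration, not recommended values.

```nginx
server {
    listen 8080;                 # hypothetical management port

    location /api {
        # Replaces the deprecated upstream_conf and status directives
        api write=on;            # write=on also permits configuration changes

        # Assumption: restrict API access to the local host
        allow 127.0.0.1;
        deny  all;
    }
}
```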

NGINX Plus R15 Features in Detail

gRPC Support

With this release, NGINX Plus can proxy and load balance gRPC traffic, which many organizations are already using for communication with microservices. gRPC is an open source, high‑performance remote procedure call (RPC) framework designed by Google for highly efficient, low‑latency service-to-service communication. gRPC mandates HTTP/2, rather than HTTP 1.1, as its transport mechanism because the features of HTTP/2 – flow control, multiplexing, and bidirectional traffic streaming with low latency – are ideally suited to connecting microservices at scale.

Support for gRPC was introduced in NGINX Open Source 1.13.10 and is now included in NGINX Plus R15. You can now inspect and route gRPC method calls, enabling you to:

- Apply NGINX Plus features – including HTTP/2 TLS encryption, rate limiting, IP‑address‑based access control lists, and logging – to published gRPC services.

- Publish multiple gRPC services through a single endpoint by inspecting and proxying gRPC connections to internal services.

- Scale your gRPC services when in need of additional capacity by load balancing gRPC connections to upstream backend pools.

- Use NGINX Plus as an API gateway for both gRPC and RESTful endpoints.
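The capabilities listed above might be combined as in the following sketch, which terminates TLS and routes gRPC calls by service name. The service names, addresses, and certificate paths are hypothetical.

```nginx
upstream grpc_backend {
    zone grpc_backend 64k;
    server 10.0.0.10:50051;      # hypothetical gRPC servers
    server 10.0.0.11:50051;
}

server {
    listen 443 ssl http2;        # gRPC requires HTTP/2

    ssl_certificate     /etc/nginx/ssl/example.crt;   # hypothetical paths
    ssl_certificate_key /etc/nginx/ssl/example.key;

    # gRPC request URIs have the form /package.Service/Method,
    # so a prefix location selects a whole service
    location /helloworld.Greeter {
        grpc_pass grpc://grpc_backend;       # load balanced across the group
    }

    location /routeguide.RouteGuide {
        grpc_pass grpc://10.0.0.20:50052;    # a second service, same endpoint
    }
}
```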

To learn more, see Introducing gRPC Support with NGINX 1.13.10 on our blog.

HTTP/2 Server Push

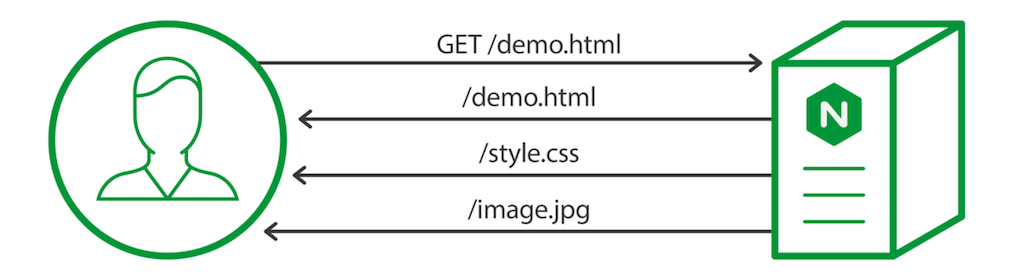

First impressions are important, and page load time is a critical factor in determining whether users will revisit your website. One way to provide faster responses to users is with HTTP/2 server push, which reduces the number of round trips (each one a request followed by its response) that the user has to wait for.

HTTP/2 server push was a highly requested and anticipated feature from the NGINX open source community, introduced in NGINX Open Source 1.13.9. Now included in NGINX Plus R15, it allows a server to preemptively send data that is likely to be required to complete a previous client‑initiated request. For example, a browser needs a whole range of resources – style sheets, images, and so on – to render a website page, so it may make sense to send those resources immediately when a client first accesses the page, rather than waiting for the browser to request them explicitly.

In the configuration example below, we use the http2_push directive to prime a client with style sheets and images that it will need to render the demo web page:
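A minimal sketch of such a configuration follows. The certificate paths, document root, and file names are assumptions for illustration.

```nginx
server {
    listen 443 ssl http2 default_server;

    ssl_certificate     ssl/certificate.pem;   # hypothetical paths
    ssl_certificate_key ssl/key.pem;

    root /var/www/html;

    # Push these resources along with the response for the demo page
    location = /demo.html {
        http2_push /style.css;
        http2_push /image1.jpg;
        http2_push /image2.jpg;
    }
}
```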

In some cases, however, it isn’t possible to determine the exact set of resources needed by clients, making it impractical to list specific files in the NGINX configuration file. In these cases, NGINX can intercept HTTP Link headers and push the resources that are marked preload in them. To enable interception of Link headers, set the http2_push_preload directive to on.
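As a sketch of the preload approach, the configuration below proxies to an application that is assumed to return a header such as Link: </style.css>; as=style; rel=preload. The upstream name, address, and certificate paths are hypothetical.

```nginx
upstream app_backend {
    server 10.0.0.5:8080;        # hypothetical application server
}

server {
    listen 443 ssl http2;

    ssl_certificate     ssl/certificate.pem;   # hypothetical paths
    ssl_certificate_key ssl/key.pem;

    location / {
        proxy_pass http://app_backend;

        # Push every resource that the backend marks with rel=preload
        # in a Link response header
        http2_push_preload on;
    }
}
```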

To learn more, see Introducing HTTP/2 Server Push with NGINX 1.13.9 on our blog.

State Sharing Across a Cluster

Configuring multiple NGINX Plus servers into a high‑availability cluster makes your applications more resilient and eliminates single points of failure in your application stack. NGINX Plus clustering is designed for mission‑critical, production deployments where resilience and high availability are paramount. There are many solutions for deploying high availability clustering with NGINX Plus.

Clustering support was introduced in previous releases of NGINX Plus, providing two tiers of clustering:

- Network resiliency using the keepalived package – Handles failover if an NGINX Plus server goes down.

- Configuration synchronization using the nginx-sync package – Ensures configuration is in sync across all NGINX Plus servers.

NGINX Plus R15 introduces a third tier of clustering – state sharing during runtime, allowing you to synchronize data in shared memory zones among cluster nodes. More specifically, the data stored in shared memory zones for Sticky Learn session persistence can now be synchronized across all nodes in the cluster using the new zone_sync module for TCP/UDP traffic.

New zone_sync Module

With state sharing, there is no primary node – all nodes are peers and exchange data in a full mesh topology. Additionally, the state‑sharing clustering solution is independent from the high availability solution for network resiliency. Therefore, a state‑sharing cluster can span physical locations.

An NGINX Plus state‑sharing cluster must meet three requirements:

- Network connectivity between all cluster nodes

- Synchronized clocks

- Configuration on every node enables the zone_sync module, as in the following:
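A minimal sketch of this configuration is shown here. The resolver address, port, and cluster hostname are assumptions for illustration.

```nginx
stream {
    resolver 10.0.0.53 valid=20s;    # hypothetical DNS server

    server {
        listen 9000;                 # hypothetical synchronization port

        zone_sync;
        # One hostname that resolves to every node in the cluster
        zone_sync_server nginx-cluster.example.com:9000 resolve;
    }
}
```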

The zone_sync directive enables synchronization of shared memory zones in a cluster. The zone_sync_server directive identifies the other NGINX Plus instances in the cluster. NGINX Plus supports DNS service discovery so cluster members can be identified by hostname, and so the configuration is identical for each cluster member.

The minimal configuration above lacks the security controls necessary to protect synchronization data in a production deployment. The following configuration snippet employs several such safeguards:

- SSL/TLS encryption of synchronization data

- Client certificate authentication, so each cluster node identifies itself to the others (mutual TLS)

- Access control lists (ACLs) using IP addresses, so that only NGINX Plus nodes on the same physical network can connect for synchronization
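The three safeguards listed above could be sketched as follows. All addresses, hostnames, and certificate paths are hypothetical, and the certificate layout (one certificate per node, signed by a shared cluster CA) is an assumption.

```nginx
stream {
    resolver 10.0.0.53 valid=20s;

    server {
        listen 10.0.0.1:9000 ssl;

        # Server side: present this node's certificate, require one from peers
        ssl_certificate        /etc/ssl/nginx-1.example.com.crt;
        ssl_certificate_key    /etc/ssl/nginx-1.example.com.key;
        ssl_verify_client      on;
        ssl_client_certificate /etc/ssl/cluster_ca.crt;

        # ACL: only nodes on the cluster subnet may connect
        allow 10.0.0.0/24;
        deny  all;

        zone_sync;
        zone_sync_server nginx-cluster.example.com:9000 resolve;

        # Client side: encrypt outgoing connections and verify the peer
        zone_sync_ssl                     on;
        zone_sync_ssl_certificate         /etc/ssl/nginx-1.example.com.crt;
        zone_sync_ssl_certificate_key     /etc/ssl/nginx-1.example.com.key;
        zone_sync_ssl_verify              on;
        zone_sync_ssl_trusted_certificate /etc/ssl/cluster_ca.crt;
    }
}
```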

State Sharing for Sticky Learn Session Persistence

The first supported NGINX Plus feature that uses state data shared across a cluster is Sticky Learn session persistence. Session persistence means that requests from a client are always forwarded to the server that fulfilled the client’s first request, which is useful when session state is stored at the backend.

In the following configuration, the sticky learn directive defines a shared memory zone called sessions. The sync parameter enables cluster‑wide state sharing by instructing NGINX Plus to publish messages about the contents of its shared memory zone to the other nodes in the cluster.
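A sketch of such an upstream block is shown here. The upstream name, server addresses, and the session cookie name are assumptions for illustration.

```nginx
upstream my_backend {
    zone my_backend 64k;

    server 10.0.0.11;            # hypothetical backend servers
    server 10.0.0.12;

    # Learn the session from the backend's Set-Cookie header, look it up
    # in the client's Cookie header, and share the zone across the cluster
    sticky learn zone=sessions:1m
                 create=$upstream_cookie_session
                 lookup=$cookie_session
                 sync;
}

server {
    listen 80;

    location / {
        proxy_pass http://my_backend;
    }
}
```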

Note that for synchronization of state data to work correctly, the configuration on every NGINX Plus node in the cluster must include the same upstream block with the sticky learn directive, as well as the directives that enable the zone_sync module (described above).

Note: Clustering support and Sticky Learn session persistence are exclusive to NGINX Plus.

OpenID Connect Integration

Many enterprises use identity and access management (IAM) solutions to manage user accounts and provide a Single Sign‑On (SSO) environment for multiple applications. They often look to extend SSO across new and existing applications to minimize complexity and cost.

NGINX Plus R10 introduced support for validating OpenID Connect tokens. In this release we extend that capability so that NGINX Plus can also control the Authorization Code Flow for authentication with OpenID Connect 1.0, communicating with the identity provider and issuing the access token to the client. This enables integration with most major identity providers, including CA Single Sign‑On (formerly SiteMinder), ForgeRock OpenAM, Keycloak, Okta, OneLogin, and Ping Identity. The newly extended capability also lets you:

- Extend SSO to legacy applications without modifying or modernizing those applications

- Integrate SSO into new applications without implementing SSO or authentication in the application code

- Eliminate vendor lock‑in: you get standards‑based SSO without having to deploy proprietary IAM vendor agent software with the application

OpenID Connect integration with NGINX Plus is available as a reference implementation on GitHub. The GitHub repo includes sample configuration with instructions on installation, configuration, and fine‑tuning for specific use cases.

Enhancements to the NGINX JavaScript Module

[Editor – The following examples are just some of the many use cases for the NGINX JavaScript module. For a complete list, see Use Cases for the NGINX JavaScript Module.

The code in this section is updated as follows to reflect changes to the implementation of NGINX JavaScript since the blog was originally published:

- To use the refactored session (s) object for the Stream module, which was introduced in NGINX JavaScript 0.2.4.

- To use the js_import directive, which replaces the js_include directive in NGINX Plus R23 and later. For more information, see the reference documentation for the NGINX JavaScript module – the Example Configuration section shows the correct syntax for NGINX configuration and JavaScript files.

]

With the NGINX JavaScript module, njs (formerly nginScript), you can include JavaScript code within your NGINX configuration so it is evaluated at runtime, as HTTP or TCP/UDP requests are processed. This enables a wide range of potential use cases such as gaining finer control over traffic, consolidating JavaScript functions across applications, and defending against security threats.

NGINX Plus R15 includes two significant updates to njs: subrequests and hash functions.

Subrequests

You can now issue simultaneous HTTP requests that are independent of, and asynchronous to, the client request. This enables a multitude of advanced use cases.

The following sample JavaScript code issues an HTTP request to two different backends simultaneously. The first response is sent to NGINX Plus for forwarding to the client and the second response is ignored.
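A sketch of what such code might look like is shown here. The flag variable and callback name are illustrative, and reading the subrequest body from responseText assumes a recent njs version.

```javascript
// fastest_wins.js (sketch): r is the njs HTTP request object.
function sendFastest(r) {
    var answered = false;

    // Shared callback: only the first subrequest to complete replies.
    function done(reply) {
        if (!answered) {
            answered = true;
            r.return(reply.status, reply.responseText);
        }
    }

    // Issue both subrequests, passing along the original query string.
    var args = r.variables.args || '';
    r.subrequest('/server_one', args, done);
    r.subrequest('/server_two', args, done);
}

// When the file is loaded with js_import, also export the handler:
//   export default { sendFastest };
```

The export statement is left as a comment so that the function can also be exercised outside njs with a mock request object.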

The js_import directive in the corresponding NGINX Plus configuration reads in the JavaScript code from fastest_wins.js. All requests matching the root location (/) are passed to the sendFastest() function, which generates subrequests to the /server_one and /server_two locations. The original URI with query parameters is passed to the corresponding backend servers. Both subrequests execute the done callback function. However, since this function includes r.return(), only the subrequest that completes first sends its response to the client.
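A sketch of the configuration described above follows. The backend addresses are hypothetical.

```nginx
js_import fastest_wins.js;       # imported under the name fastest_wins

server {
    listen 80;

    location / {
        js_content fastest_wins.sendFastest;
    }

    location /server_one {
        proxy_pass http://10.0.0.1$request_uri;   # pass the original URI
    }

    location /server_two {
        proxy_pass http://10.0.0.2$request_uri;
    }
}
```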

Hash Functions

The njs modules for both HTTP and TCP/UDP traffic now include a crypto library with implementations of:

- Hash functions: MD5, SHA‑1, SHA‑256

- HMAC using: MD5, SHA‑1, SHA‑256

- Digest formats: Base64, Base64URL, hex

A sample use case for hash functions is to add data integrity to application cookies. The following njs code sample includes a signCookie() function to add a digital signature to a cookie and a validateCookieSignature() function to validate signed cookies.
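One way such functions could be written is sketched below, using HMAC with SHA‑256 and a hex digest. The secret, the session cookie name, the value.signature cookie layout, and the helper functions are all assumptions for illustration.

```javascript
var crypto = require('crypto');

// Assumption: a shared secret; load it from a safer place in practice.
var SECRET = 'replace-with-a-real-secret';

// HMAC-SHA-256 of a string, hex encoded.
function hmac(value) {
    return crypto.createHmac('sha256', SECRET).update(value).digest('hex');
}

// Append the signature to the value as "value.signature".
function signValue(value) {
    return value + '.' + hmac(value);
}

// Recompute the signature and compare it with the one supplied.
function isValid(signed) {
    var i = signed.lastIndexOf('.');
    return i > 0 && hmac(signed.slice(0, i)) === signed.slice(i + 1);
}

// js_set handler: signed form of the backend's "session" cookie.
function signCookie(r) {
    var value = r.variables.upstream_cookie_session;
    return value ? signValue(value) : '';
}

// js_set handler: "1" if valid, "0" if invalid, "" if no cookie was sent.
function validateCookieSignature(r) {
    var value = r.variables.cookie_session;
    if (!value) {
        return '';
    }
    return isValid(value) ? '1' : '0';
}

// When the file is loaded with js_import, also export the handlers:
//   export default { signCookie, validateCookieSignature };
```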

The following NGINX Plus configuration utilizes the njs code to validate cookie signatures in incoming HTTP requests and return an error message to the client if validation fails. NGINX Plus proxies the request if validation succeeds or no cookie is present.
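A sketch of such a configuration is shown here. It assumes the njs code is saved as cookie_signing.js and that validateCookieSignature() returns "0" for an invalid signature. The file name, upstream name, and address are hypothetical.

```nginx
js_import cookie_signing.js;
js_set $cookie_valid cookie_signing.validateCookieSignature;

upstream my_backend {
    server 10.0.0.1;             # hypothetical backend
}

server {
    listen 80;

    location / {
        # Reject only when a cookie is present and its signature is wrong
        if ($cookie_valid = "0") {
            return 403 "Cookie signature is invalid\n";
        }

        proxy_pass http://my_backend;
    }
}
```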

Additional New Features in NGINX Plus R15

ALPN Variable for the Stream Modules

Application Layer Protocol Negotiation (ALPN) is an extension to TLS that enables a client and server to negotiate during the TLS handshake which protocol will be used for the connection being established, avoiding additional round trips which might incur latency and degrade the user experience. The most common use case for ALPN is automatically upgrading connections from HTTP to HTTP/2 when both client and server support HTTP/2.

The new NGINX variable $ssl_preread_alpn_protocols, first introduced in NGINX Open Source 1.13.10, captures the list of protocols advertised by the client in its ClientHello message at the ALPN preread phase. Given the configuration below, an XMPP client can introduce itself through ALPN such that NGINX Plus routes XMPP traffic to the xmpp_backend upstream group, gRPC traffic to grpc_backend, and all other traffic to http_backend, all through a single endpoint.

To enable NGINX Plus to extract information from the ClientHello message and populate the $ssl_preread_alpn_protocols variable, you must also include the ssl_preread on directive.
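The routing described above might be sketched as follows. The backend addresses are hypothetical, and matching the h2 protocol ID for gRPC is an assumption based on gRPC running over HTTP/2.

```nginx
stream {
    # Choose an upstream group from the ALPN protocol IDs the client offers
    map $ssl_preread_alpn_protocols $upstream {
        ~\bxmpp-client\b  xmpp_backend;
        ~\bh2\b           grpc_backend;
        default           http_backend;
    }

    upstream xmpp_backend { server 10.0.0.10:5223; }   # hypothetical servers
    upstream grpc_backend { server 10.0.0.20:443;  }
    upstream http_backend { server 10.0.0.30:443;  }

    server {
        listen 443;

        ssl_preread on;          # read the ClientHello without terminating TLS
        proxy_pass  $upstream;
    }
}
```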

To learn more, see the documentation for the ngx_stream_ssl_preread module.

Queue Time Variable

NGINX Plus supports upstream queueing so that client requests do not have to be rejected immediately when no server in the upstream group is available to accept new requests.

A typical use case for upstream queueing is to protect backend servers from overload without having to reject requests immediately if all servers are busy. You can define the maximum number of simultaneous connections for each upstream server with the max_conns parameter to the server directive. The queue directive then puts requests in a queue when there are no backends available, either because they have reached their connection limit or because they have failed a health check.

In this release, the new NGINX variable $upstream_queue_time, first introduced in NGINX Open Source 1.13.9, captures the amount of time a request spends in the queue. The configuration below includes a custom log format that captures various timing metrics for each request; the metrics can then be analyzed offline as part of performance tuning. We limit the number of queued requests for the my_backend upstream group to 20. The timeout parameter sets how long requests are held in the queue before an error is returned to the client (502 according to the documentation for the queue directive). Here we set it to 5 seconds (the default is 60 seconds).
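A sketch of such a configuration follows. The log format name, log path, server addresses, and max_conns values are assumptions for illustration.

```nginx
# Custom log format with per-request timing metrics, including queue time
log_format timing '$remote_addr - $remote_user [$time_local] "$request" '
                  '$status $body_bytes_sent '
                  'rt=$request_time uct=$upstream_connect_time '
                  'uht=$upstream_header_time urt=$upstream_response_time '
                  'uqt=$upstream_queue_time';

upstream my_backend {
    zone my_backend 64k;

    server 10.0.0.1 max_conns=50;    # hypothetical servers and limits
    server 10.0.0.2 max_conns=50;

    queue 20 timeout=5s;             # hold at most 20 requests, 5 seconds each
}

server {
    listen 80;

    access_log /var/log/nginx/timing.log timing;

    location / {
        proxy_pass http://my_backend;
    }
}
```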

To learn more, see the documentation for the queue directive.

Access Logs Without Escaping

You can now disable escaping in the NGINX Plus access log. The new escape=none parameter to the log_format directive, first introduced in NGINX Open Source 1.13.10, specifies that no escaping is applied to special characters in variables.
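For illustration, a log format with escaping disabled might look like the following sketch. The format name and log path are hypothetical.

```nginx
# escape=none writes variable values exactly as received, so special
# characters in them are not escaped
log_format raw escape=none '$remote_addr [$time_local] "$request" '
                           '$status "$http_user_agent"';

access_log /var/log/nginx/raw.log raw;
```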

Update to the LDAP Auth Reference Implementation

Our reference implementation for authenticating users using an LDAP authentication system has been updated to address issues and fix bugs. Check it out on GitHub.

Transparent Proxying Without root Privilege

You can use NGINX Plus as a transparent proxy by including the transparent parameter to the proxy_bind directive. Worker processes can now inherit the CAP_NET_RAW Linux capability from the master process, so they no longer need to run as root for transparent proxying.
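A sketch of a transparent proxy configuration with unprivileged worker processes is shown below. The worker user name and backend address are assumptions, and the network routing that returns backend traffic through NGINX Plus is not shown.

```nginx
user nginx;                      # assumption: workers run as an unprivileged user

events {}

http {
    server {
        listen 80;

        location / {
            # Connect to the backend using the client's own IP address
            proxy_bind $remote_addr transparent;
            proxy_pass http://10.0.0.1;      # hypothetical backend
        }
    }
}
```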

Note: This feature applies only to Linux platforms.

JWT Grace Period

If a JWT includes a time‑based claim – nbf (not before date), exp (expiry date), or both – NGINX Plus’ validation of the JWT includes a check that the current time is within the specified time interval. If the identity provider’s clock is not synchronized with the NGINX Plus instance’s clock, however, tokens might expire unexpectedly or appear to start in the future. To set the maximum acceptable skew between the two clocks, use the auth_jwt_leeway directive.
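As an illustration, the following sketch allows up to 30 seconds of clock skew. The realm, key file path, location, and upstream name are hypothetical.

```nginx
upstream my_backend {
    server 10.0.0.1;             # hypothetical backend
}

server {
    listen 80;

    location /api/ {
        auth_jwt          "API";
        auth_jwt_key_file conf/keys.jwk;     # hypothetical key file
        auth_jwt_leeway   30s;               # tolerate 30s skew for nbf and exp

        proxy_pass http://my_backend;
    }
}
```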

Cookie Flag Dynamic Module

The third‑party module for setting cookie flags is now available as the Cookie-Flag dynamic module in the NGINX repository and is covered by your NGINX Plus support agreement. You can use your package management tool to install it:

$ apt-get install nginx-plus-module-cookie-flag # Ubuntu/Debian

$ yum install nginx-plus-module-cookie-flag # Red Hat/CentOS

NGINX ModSecurity WAF Module Update

NGINX ModSecurity WAF, a dynamic module for NGINX Plus based on ModSecurity 3.0, has been updated with the following enhancements:

- Performance improvements in libmodsecurity

- Memory leak fixes in ModSecurity-nginx

[Editor – NGINX ModSecurity WAF officially went End-of-Sale as of April 1, 2022 and is transitioning to End-of-Life effective March 31, 2024. For more details, see F5 NGINX ModSecurity WAF Is Transitioning to End-of-Life on our blog.]

Upgrade or Try NGINX Plus

NGINX Plus R15 includes improved authentication capabilities for your client applications, additional clustering capabilities, njs enhancements, and notable bug fixes.

If you’re running NGINX Plus, we strongly encourage you to upgrade to Release 15 as soon as possible. You’ll pick up a number of fixes and improvements, and upgrading will help us to help you when you need to raise a support ticket. Installation and upgrade instructions for NGINX Plus R15 are available at the customer portal.

Please carefully review the new features and changes in behavior described in this blog post before proceeding with the upgrade.

If you haven’t used NGINX Plus, we encourage you to try it out – for web acceleration, load balancing, and application delivery, or as a fully supported web server with enhanced monitoring and management APIs. You can get started today with a free 30-day evaluation. See for yourself how NGINX Plus can help you deliver and scale your applications.