We’re really pleased to announce the availability of NGINX Plus Release 5 (R5). This release brings together the features recently released in the NGINX Open Source distribution and a number of features available in NGINX Plus only.

The major new feature is load balancing for general TCP‑based protocols, such as database, RPC, and chat protocols. The related TCP Load Balancing in NGINX Plus R5 blog post provides complete details.

NGINX Plus R5 also includes a number of improvements to load balancing and caching.

Consider NGINX Plus if you are looking for a web acceleration, load balancing, or application delivery solution, or a fully supported web server with additional monitoring and management APIs.

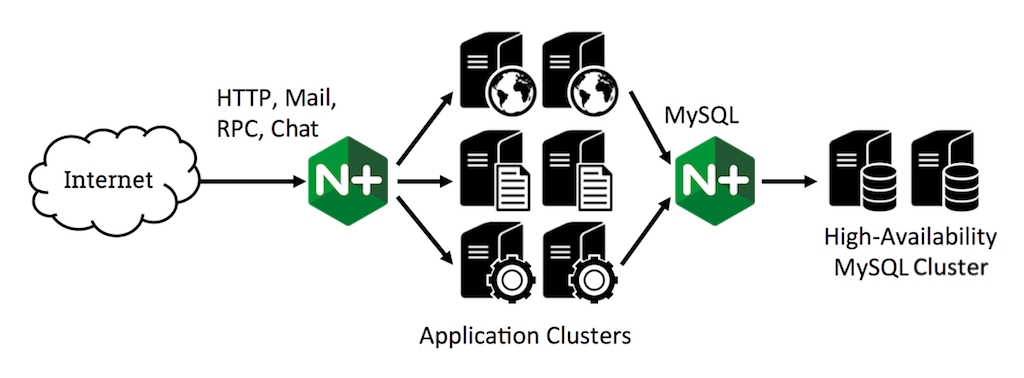

TCP Load Balancing

NGINX Plus R5 introduces load balancing for TCP connections, implemented in the stream module. You can load balance a wide range of non‑HTTP connections, such as MySQL and SSL/TLS (without decryption). You can even load balance and manage mail protocols (SMTP, POP3, IMAP) by combining the existing mail proxy module with the new stream module.

This release provides a range of load‑balancing methods (Round Robin, Least Connections, Hash, IP Hash), control over connection parameters, high availability with inline health checks, slow start for recovered servers, and the ability to manually designate servers as active, backup, or down.
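As a rough illustration of how these pieces fit together, the sketch below load balances MySQL connections with the Least Connections method. The group name, addresses, ports, and timeout values are hypothetical placeholders, not taken from the release notes; adjust them for your environment.

```nginx
# Minimal sketch, assuming three hypothetical MySQL servers.
stream {
    upstream mysql_backends {
        least_conn;                                         # Least Connections method
        server 10.0.0.11:3306 max_fails=2 fail_timeout=30s; # inline (passive) health check
        server 10.0.0.12:3306 slow_start=30s;               # ramp up gradually after recovery
        server 10.0.0.13:3306 backup;                       # used only when the others are unavailable
    }

    server {
        listen 3306;
        proxy_connect_timeout 5s;   # time allowed to connect to a backend
        proxy_timeout 10m;          # idle timeout between reads or writes
        proxy_pass mysql_backends;
    }
}
```

Marking a server with the `down` parameter instead of `backup` takes it out of rotation entirely.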

For more information, check out TCP Load Balancing in NGINX Plus R5 on our blog and TCP Load Balancing in the NGINX Plus Admin Guide. This feature is unique to NGINX Plus.

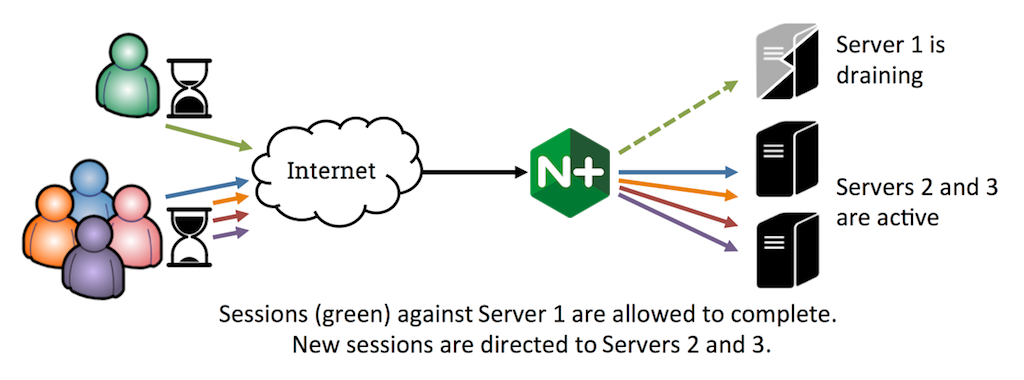

Better Control of Load‑Balanced User Sessions

Sometimes you need to take an upstream node down for maintenance or upgrade. With the new session draining feature in Release 5, you can signal NGINX Plus not to send new connections to that node, but to maintain established sessions on it until they complete.

You can use live activity monitoring to monitor traffic on the drained node, waiting to take it offline until you’re confident that user sessions have completed:

    # Return the Unix epoch time (in milliseconds) when
    # server 1 in upstream group 'backends' was last used
    $ curl http://localhost:8080/status/upstreams/backends/1/selected

    # Calculate how long the server has been idle (in milliseconds)
    $ expr `date +%s000` - `curl -s http://localhost:8080/status/upstreams/backends/1/selected`

[Editor – The preceding commands use the NGINX Plus Status module (enabled by the status directive). That module is replaced and deprecated by the NGINX Plus API in NGINX Plus Release 13 (R13) and later, and will not be available after NGINX Plus R15.]

The sticky cookie mechanism for tracking user sessions has been updated so that the expiry time applies to the most recent request in the session, not the first request. This means that sessions are tracked more accurately.
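For reference, a sticky cookie configuration might look like the following sketch. The upstream name, server addresses, cookie name, and one-hour expiry are assumptions chosen for illustration.

```nginx
# Minimal sketch, assuming two hypothetical application servers.
# This block belongs in the http context.
upstream backends {
    server 10.0.0.21:8080;
    server 10.0.0.22:8080;

    # Pin each client to one server with a cookie named srv_id (hypothetical name).
    # The one-hour expiry is the value affected by the change described above.
    sticky cookie srv_id expires=1h path=/;
}
```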

The session‑draining and sticky‑cookie features are available only in NGINX Plus.

Improved Control of Traffic if a Node Fails

When a server in an upstream group fails to respond to a request, NGINX Plus automatically retries the request on other servers in the group. The new proxy_next_upstream_tries and proxy_next_upstream_timeout directives give you more control over this behavior, by limiting the number of retries and how long NGINX can continue retrying, respectively.

This feature was released in NGINX 1.7.5, and applies to proxying of HTTP, FastCGI, uWSGI, SCGI, and memcached traffic.
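The two directives could be combined as in the sketch below. The upstream group, addresses, and limits are illustrative assumptions, not recommended values.

```nginx
# Minimal sketch, assuming three hypothetical HTTP backends (http context).
upstream backends {
    server 10.0.0.21:8080;
    server 10.0.0.22:8080;
    server 10.0.0.23:8080;
}

server {
    listen 80;

    location / {
        proxy_pass http://backends;
        proxy_next_upstream error timeout;  # conditions that trigger a retry
        proxy_next_upstream_tries 2;        # limit the number of tries
        proxy_next_upstream_timeout 5s;     # stop passing the request on after 5 seconds
    }
}
```

Setting either directive to 0 (the default) turns off the corresponding limit.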

HTTP Vary Header is Supported for Cached Content

Some web servers deliver different versions of a resource depending on the type of client that is requesting it. For example, when a browser requests a website’s home page, the server delivers a version with high‑resolution images, but it delivers a version with no images when the client is a mobile device. Such a server can set the Vary header in its responses to tell caching proxies which headers in the client request it is using to determine the version to send (and by implication, which headers the proxy needs to use when determining which version of a cached resource to send).

A common use case is to differentiate between compressed and uncompressed versions of the same resource; in this case, the Vary: Accept-Encoding header in the server response tells the cache to use the value of the Accept-Encoding header in the client’s request to determine which version to deliver.

NGINX Plus now fully supports the Vary header to correctly cache multiple variants of the same resource. This feature was introduced in NGINX 1.7.7.
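No dedicated directive is needed to turn this on; a standard caching setup honors Vary. The sketch below assumes a hypothetical origin hostname, cache path, and zone name.

```nginx
# Minimal sketch of a caching proxy (http context); names and paths are hypothetical.
proxy_cache_path /var/cache/nginx keys_zone=app_cache:10m;

server {
    listen 80;

    location / {
        proxy_pass http://origin.example.com;
        proxy_cache app_cache;
        proxy_cache_valid 200 10m;

        # Responses carrying "Vary: Accept-Encoding" are cached per variant.
        # To opt out and ignore the header, uncomment the next line:
        # proxy_ignore_headers Vary;
    }
}
```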

Improved Support for Serving Byte Ranges from Cache

A client can fetch a certain part of a file – for example, a segment in a video download or a page in a PDF document – by specifying the appropriate byte range in its request. NGINX Plus can comply with these requests and deliver byte ranges from cached assets to clients, even if the origin server for the content does not support byte ranges.

The first time NGINX Plus receives a request for a file (either the full file or a byte range), it requests the entire file from the origin server and caches it. NGINX Plus then satisfies byte‑range requests from the cache. This reduces the load on upstream (origin) servers.

This feature was introduced in NGINX 1.7.7, and is enabled with the proxy_force_ranges directive.
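A configuration along these lines would enable the behavior for a media location. The origin hostname, cache path, zone name, and location prefix are assumptions for the example.

```nginx
# Minimal sketch (http context); names and paths are hypothetical.
proxy_cache_path /var/cache/nginx keys_zone=media_cache:10m;

server {
    listen 80;

    location /media/ {
        proxy_pass http://origin.example.com;
        proxy_cache media_cache;
        proxy_cache_valid 200 1h;

        # Serve byte ranges even if the origin's responses
        # do not advertise range support.
        proxy_force_ranges on;
    }
}
```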

More Control over Upstream Bandwidth

The new proxy_limit_rate directive limits how quickly NGINX Plus reads data from an upstream server. This prevents one large request from consuming all of the bandwidth between NGINX and the origin server. When caching is enabled, it effectively controls the rate at which content is written to the disk cache, which is useful if the disks exhibit high latency for writes.

This directive was introduced in NGINX 1.7.7.
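As a sketch, the directive might be applied to a location that serves large downloads. The location prefix, origin hostname, and rate are hypothetical; the rate is given in bytes per second and applies per request.

```nginx
# Minimal sketch (inside an http server block); values are illustrative.
location /downloads/ {
    proxy_pass http://origin.example.com;
    proxy_buffering on;       # the default; the limit works only with buffering enabled
    proxy_limit_rate 512k;    # read at most about 512 KB per second from the upstream
}
```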

Other Changes in NGINX Plus R5

The third‑party RTMP module has been added to the NGINX Plus Extras package.

NGINX Plus is now available for Ubuntu 14.10, for ARMv8 (aarch64) on Ubuntu 14.04, and for SUSE Linux Enterprise Server 12.

Upgrade or Try NGINX Plus

We strongly encourage our NGINX Plus customers to update to Release 5 as soon as possible. You’ll pick up a number of fixes and improvements, and it will help us to help you if you need to raise a support ticket. Installation and upgrade instructions can be found at the customer portal.

If you haven’t tried NGINX Plus, start your free 30-day trial today and learn how NGINX Plus can help you scale out and deliver your applications.