Enterprises rely on Red Hat OpenShift’s industry‑acclaimed Kubernetes platform solution for its comprehensive feature set, robust architecture, and enterprise‑grade support. It’s no surprise that these organizations are also seeking enterprise‑grade traffic control capabilities coupled with automation to enhance their Kubernetes platform and achieve a faster cadence in their application development and deployment cycles.

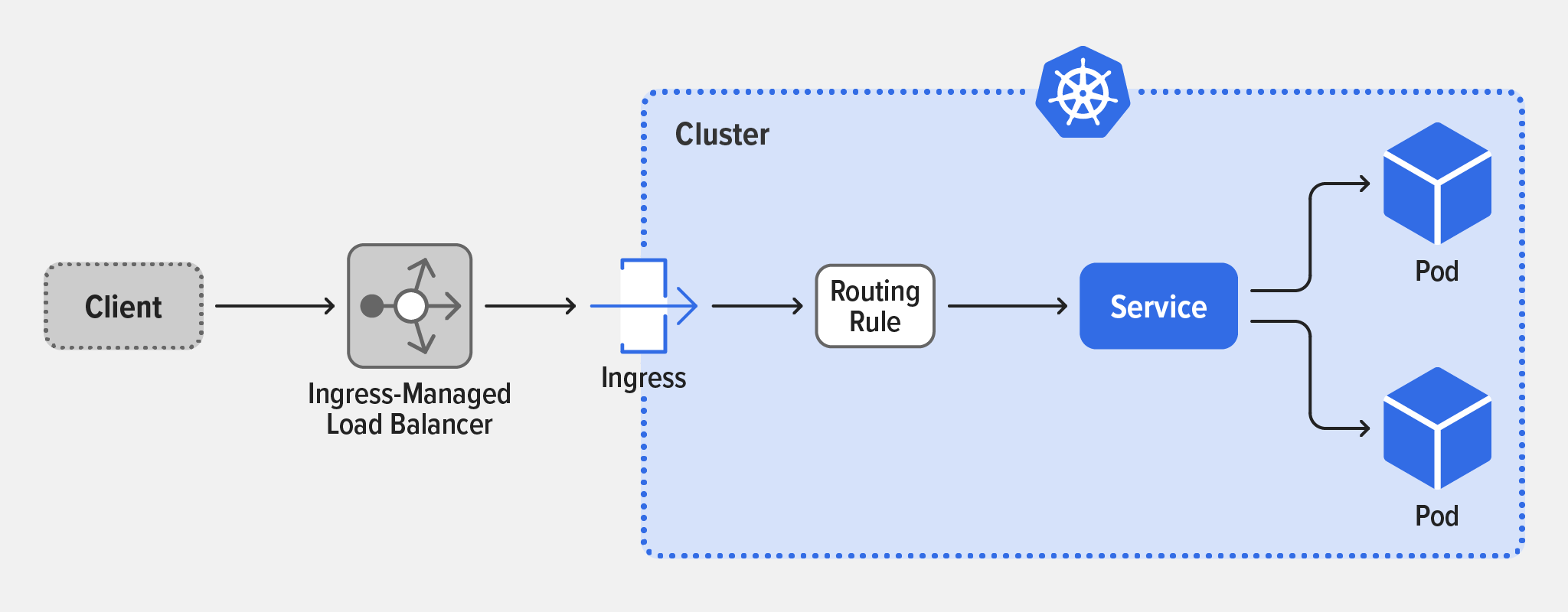

Kubernetes provides the Ingress API to handle external traffic coming into a cluster. In practice, external clients accessing Kubernetes applications communicate through a gateway that exposes traffic at Layers 4 through 7 to Kubernetes services within the cluster.

To work, the traffic‑routing rules in an Ingress resource need to be implemented by an Ingress controller. Without an Ingress controller, the Ingress can’t do anything useful. In this diagram, the Ingress controller sends all external traffic to a single Kubernetes service.

(adapted from Kubernetes documentation)

NGINX Ingress Controller

NGINX Ingress Controller is an Ingress controller implementation that controls ingress and egress traffic for Kubernetes applications, enhancing the capabilities of the NGINX load balancer with automated software configuration. It provides the robust traffic‑management features that are vital to production Kubernetes deployments, going beyond the basic functionality offered by OpenShift’s default Ingress controller.

NGINX Ingress Controller is available in two editions, one with the capabilities of NGINX Open Source and the other with the capabilities of NGINX Plus. NGINX Open Source Ingress Controller is free, while NGINX Plus Ingress Controller is a commercially supported implementation with advanced, enterprise‑grade features.

In general, Ingress controller implementations support only HTTP and HTTPS, but the NGINX implementations also support TCP, UDP, gRPC, and WebSocket, a much broader set of protocols that extends Ingress support to many new application types. They also support TLS Passthrough, an important enhancement that lets the Ingress controller route TLS‑encrypted connections without having to decrypt them or access TLS certificates and keys.
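As a rough illustration of TLS Passthrough, the sketch below uses the TransportServer custom resource with the built‑in `tls-passthrough` listener. The resource name, hostname, service name, and port are hypothetical. The sketch assumes a release that serves TransportServer under `k8s.nginx.org/v1` (older releases used a different version) and assumes TLS Passthrough has been enabled on the controller.

```yaml
# Minimal sketch, not a production configuration.
# Assumed names: secure-app, secure-app-svc, and port 8443 are placeholders.
apiVersion: k8s.nginx.org/v1   # may differ in older releases
kind: TransportServer
metadata:
  name: secure-app
spec:
  listener:
    name: tls-passthrough      # built-in listener for passthrough traffic
    protocol: TLS_PASSTHROUGH
  host: app.example.com        # matched against the SNI of the TLS connection
  upstreams:
  - name: secure-app
    service: secure-app-svc
    port: 8443
  action:
    pass: secure-app           # forward the still-encrypted stream to the upstream
```

Because the connection is never decrypted at the Ingress controller, the backend pods hold the certificate and key and terminate TLS themselves.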

Beyond those capabilities, NGINX Ingress Controller allows for fine‑grained customization that can be scoped to specific applications or clusters, as well as the use of policies. Policies can be defined once, then applied as needed to various application areas and by different teams. A policy can be used to limit request rates, to validate mTLS authentication, and to allow or deny traffic based on IP addresses or subnets. JWT validation and WAF policies are also supported. This sample policy limits requests from each client IP address to 1 request per second.

    apiVersion: k8s.nginx.org/v1
    kind: Policy
    metadata:
      name: rate-limit-policy
    spec:
      rateLimit:
        rate: 1r/s
        key: ${binary_remote_addr}
        zoneSize: 10M

Use Cases for NGINX Ingress Controller

NGINX has identified at least three reasons to use NGINX Ingress Controller:

- Deliver production‑grade features

- Secure containerized apps

- Provide total traffic management

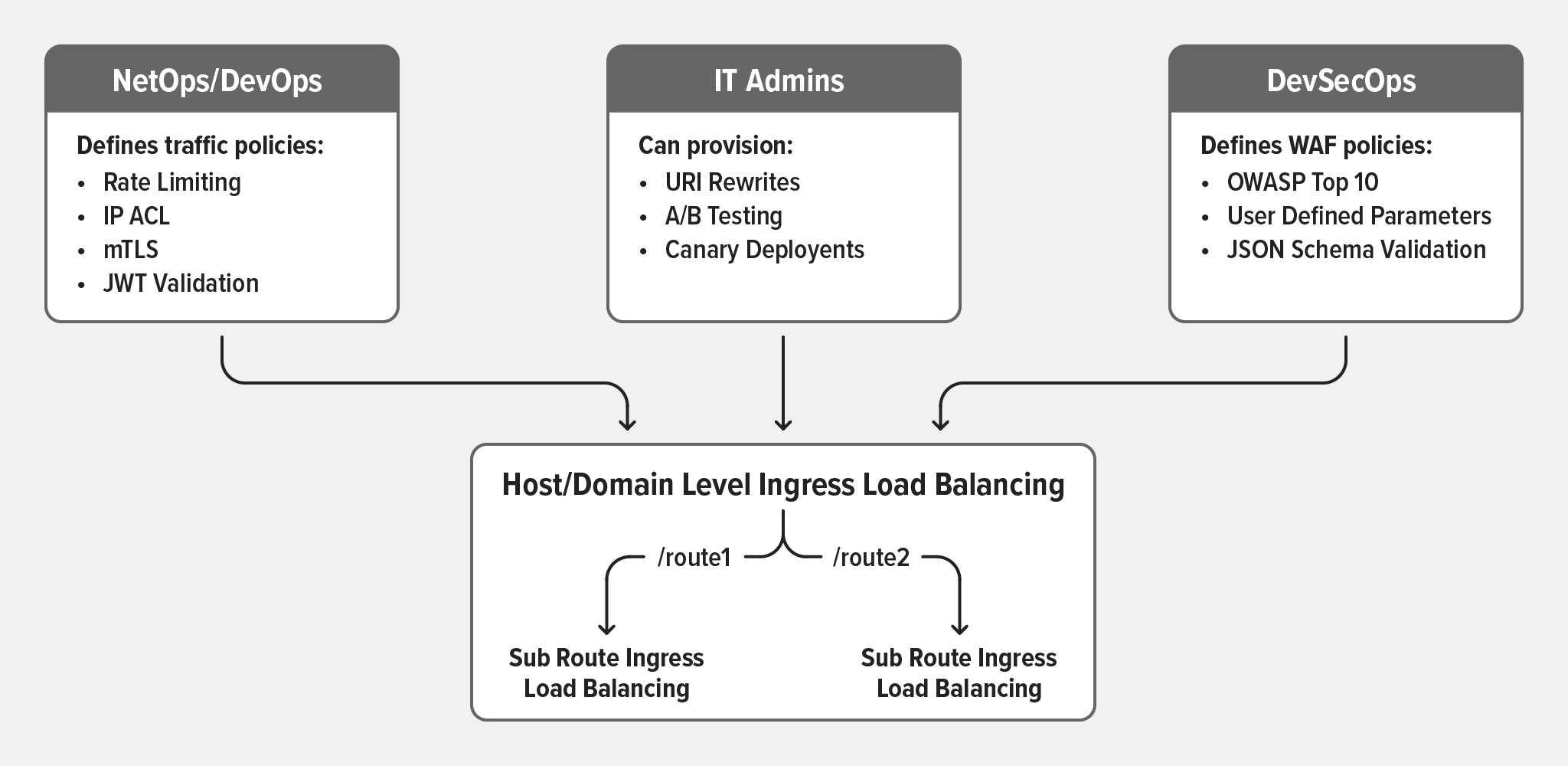

The diagram provides a fuller view of potential use cases, some of which (traffic routing and WAF policies) were discussed in the previous section.
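To make the "define once, apply as needed" idea behind policies more concrete, here is a minimal sketch of an access‑control policy and a VirtualServer that references it alongside the `rate-limit-policy` shown earlier. The names `allow-internal`, `webapp`, and `webapp-svc`, the hostname, and the `10.0.0.0/8` subnet are illustrative assumptions.

```yaml
# Hypothetical access-control policy: only clients in the listed subnet are allowed.
apiVersion: k8s.nginx.org/v1
kind: Policy
metadata:
  name: allow-internal
spec:
  accessControl:
    allow:
    - 10.0.0.0/8
---
# Hypothetical VirtualServer that reuses both policies by name.
apiVersion: k8s.nginx.org/v1
kind: VirtualServer
metadata:
  name: webapp
spec:
  host: webapp.example.com
  policies:
  - name: allow-internal       # IP-based allow list defined above
  - name: rate-limit-policy    # rate limit from the earlier sample
  upstreams:
  - name: webapp
    service: webapp-svc
    port: 80
  routes:
  - path: /
    action:
      pass: webapp
```

Other teams can reference the same Policy objects from their own VirtualServer resources, so a change to the policy takes effect wherever it is referenced.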

An interesting sample use case is the ability to implement blue‑green deployments, where you switch production traffic from the current app version (blue) to a new version (green) and verify that the new version operates correctly. As in the following configuration example, you might begin by directing 90% of the traffic to the blue app (current version) and 10% to the green app (new version).

You then monitor traffic to detect whether users of the green version experience any issues. If they do, you can roll back the configuration, routing all green users back to the blue version. If the new version performs well, you can adjust the traffic split to direct more traffic to the green version and verify how it performs under increased load, eventually routing all traffic to the new green app and decommissioning the old blue app.

    apiVersion: k8s.nginx.org/v1
    kind: VirtualServer
    metadata:
      name: app
    spec:
      host: app.example.com
      upstreams:
      - name: products-v1
        service: products-v1-svc
        port: 80
      - name: products-v2
        service: products-v2-svc
        port: 80
      routes:
      - path: /products
        splits:
        - weight: 90
          action:
            pass: products-v1
        - weight: 10
          action:
            pass: products-v2

There are more examples than we can possibly cover, but NGINX has a dedicated GitHub repository with several samples.
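As one further illustration, the last step of the rollout described above (all traffic going to the green version) might be sketched as follows. This is an assumed end state rather than a configuration taken from the NGINX samples. It reuses the `products-v2` upstream from the split example and drops the `splits` block in favor of a single `action`.

```yaml
# Sketch of the final cutover: every request to /products goes to the green app.
apiVersion: k8s.nginx.org/v1
kind: VirtualServer
metadata:
  name: app
spec:
  host: app.example.com
  upstreams:
  - name: products-v2          # green version only; the blue upstream is removed
    service: products-v2-svc
    port: 80
  routes:
  - path: /products
    action:
      pass: products-v2
```

Intermediate steps would keep both upstreams and only change the two `weight` values (for example 50 and 50), which need to add up to 100.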

Conclusion

Ingress controllers are about much more than load balancing. For simple, early‑stage use cases, a default Ingress controller might suffice, but for organizations and development teams seeking to fully reap the benefit of cloud‑native development models, production‑grade capabilities are essential.

Furthermore, advanced Ingress controllers must provide not only sophisticated traffic management, but also enterprise‑grade security. This is achieved by implementing mutual TLS (mTLS) authentication, encrypted traffic passthrough, and WAF protection.

Finally, NetOps and NetSecOps teams are also impacted by Ingress controllers. As they work hard to automate network configuration and policy‑based traffic control, they cannot allow emerging cloud‑native, OpenShift‑based workloads to become a weak spot where manual configuration activities are required. On the contrary, they seek tools that seamlessly integrate with existing security solutions to ensure consistent configurations across devices and platforms.

NGINX Ingress Controller meets all of these requirements, giving organizations that run their Kubernetes environments on OpenShift a flexible, secure, scalable, and fully supported solution.

Learn more about NGINX and OpenShift: