Customer demand for goods and services over the past two years has underlined how crucial it is for organizations to scale easily and innovate faster, leading many of them to accelerate the move from a monolithic to a cloud‑native architecture. According to the recent F5 report, The State of Application Strategy 2021, the number of organizations modernizing applications increased 133% in the last year alone. Cloud‑enabled applications are designed to be modular and distributed, and to be deployed and managed in an automated way. While it’s possible simply to lift and shift an existing monolithic application, doing so provides no advantage in cost or flexibility. The best way to benefit from the distributed model that cloud computing services afford is to think modular: enter microservices.

Microservices is an architectural approach that enables developers to build an application as a set of lightweight services that are structurally independent of each other and of the underlying platform. Each microservice runs as its own process and communicates with other services through well‑defined, standardized APIs. Each service can be developed and deployed by a small, independent team. This flexibility gives organizations greater benefits in terms of performance, cost, scalability, and the ability to innovate quickly.

Developers are always looking for ways to increase efficiency and expedite application deployment. APIs enable software-to-software communication and provide the building blocks for development. To request data from servers over HTTP, web developers originally used SOAP, which sends the details of each request in an XML document. However, XML documents tend to be large, carry substantial overhead, and take a long time to develop.

Many developers have since moved to REST, an architectural style and set of guidelines for creating stateless, reliable web APIs. A web API that obeys these guidelines is called RESTful. RESTful web APIs are typically based on HTTP methods to access resources via URL‑encoded parameters and use JSON or XML to transmit data. With the use of RESTful APIs, applications are quicker to develop and incur less overhead.

Advances in technology bring new opportunities to improve application design. In 2015 Google developed gRPC, a modern open source remote procedure call (RPC) framework that can run in any environment. While REST is typically built on HTTP/1.1 and uses a request‑response communication model, gRPC uses HTTP/2 for transport and an RPC model in which a client calls methods on a remote server as if they were local. Services and data are described with protocol buffers (protobuf), which serve as the interface description language (IDL). Protobuf serializes structured data and is designed for simplicity and performance. gRPC has been reported to be approximately 7 times faster than REST when receiving data and 10 times faster when sending data, due to the efficiency of protobuf and the use of HTTP/2. gRPC also supports streaming communication and can multiplex many simultaneous requests over a single connection.
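Part of protobuf's efficiency comes from its wire format: fields are identified by small numbers declared in the schema rather than by names, and integers are packed into variable-length "varints". The sketch below hand-encodes one message to show this, assuming a hypothetical `Order` message with an integer field 1 and a string field 2, and compares its size with the equivalent JSON. Real services would use code generated by `protoc` rather than encoding bytes by hand.

```python
import json

def encode_varint(value: int) -> bytes:
    """Encode a non-negative integer as a protobuf base-128 varint."""
    if value < 0:
        raise ValueError("this sketch only handles non-negative values")
    out = bytearray()
    while True:
        low_bits = value & 0x7F          # take the lowest 7 bits
        value >>= 7
        if value:
            out.append(low_bits | 0x80)  # high bit set: more bytes follow
        else:
            out.append(low_bits)         # high bit clear: last byte
            return bytes(out)

def encode_varint_field(field_number: int, value: int) -> bytes:
    # Each field starts with a key: (field_number << 3) | wire_type.
    # Wire type 0 means VARINT.
    return encode_varint((field_number << 3) | 0) + encode_varint(value)

def encode_string_field(field_number: int, text: str) -> bytes:
    # Wire type 2 (length-delimited): key, byte length, then the UTF-8 bytes.
    data = text.encode("utf-8")
    return encode_varint((field_number << 3) | 2) + encode_varint(len(data)) + data

# Hypothetical schema: message Order { int32 id = 1; string item = 2; }
proto_bytes = encode_varint_field(1, 150) + encode_string_field(2, "book")
json_bytes = json.dumps({"id": 150, "item": "book"}).encode("utf-8")

print(proto_bytes.hex())                  # 0896011204626f6f6b
print(len(proto_bytes), len(json_bytes))  # 9 27
```

The 9-byte protobuf encoding carries the same data as the 27-byte JSON document because the field names live only in the shared schema.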

Developers find building microservices with gRPC an attractive alternative to using RESTful APIs due to its low latency, support for multiple languages, compact data representation, and real‑time streaming, all of which make it especially suitable for communication among microservices and over low‑power, low‑bandwidth networks. gRPC has increased in popularity because it makes it easier to build new services rapidly and efficiently, with greater reliability and scalability, and with language independence for both clients and servers.

Although the open nature of the gRPC protocol offers several benefits, the standard doesn’t provide any protection against the impact a DoS attack can have on an application. A gRPC application can suffer from the same types of DoS attacks as a traditional application.

Why Identifying a DoS Attack on a gRPC App Is Challenging

While microservices and containers give developers more freedom and autonomy to release new features to customers rapidly, they also introduce new vulnerabilities and opportunities for exploitation. One type of cyberattack that has gained in popularity is the denial-of-service (DoS) attack. In recent years, DoS vulnerabilities have accounted for a growing number of Common Vulnerabilities and Exposures (CVEs), many of them caused by improper handling of gRPC requests. Layer 7 DoS attacks on applications and APIs have spiked by 20% in recent years, while their scale and severity of impact have risen by nearly 200%.

A DoS attack commonly sends large amounts of traffic that appears legitimate in order to exhaust the application’s resources and make it unresponsive. In a typical DoS attack, a bad actor floods a website or application with so much traffic that the servers become overwhelmed by all the requests, causing them to stall or even crash. DoS attacks are designed to slow or completely disable machines or networks, making them inaccessible to the people who need them. Until the attack is mitigated, services that depend on the machine or network – such as e‑commerce sites, email, and online accounts – are unusable.

We are seeing more and more DoS attacks that use HTTP and HTTP/2 requests or API calls to attack at the application layer (Layer 7), in large part because Layer 7 attacks can bypass traditional defenses that are not designed to protect modern application architectures. Why have attackers switched from traditional volumetric attacks at the network layers (Layers 3 and 4) to Layer 7 attacks? They are following the path of least resistance. Infrastructure engineers have spent years building effective defense mechanisms against Layer 3 and Layer 4 attacks, making them easier to block and less likely to succeed. That makes such attacks more expensive to launch, in terms of both money and time, and so attackers have moved on.

Detecting DoS attacks on gRPC applications is extremely hard, especially in modern environments where scaling out is performed automatically. A gRPC service may not be designed to handle high‑volume traffic, which makes it an easy target for attackers to take down. gRPC services are also vulnerable to HTTP/2 flood attacks launched with tools such as h2load. Additionally, attackers can easily target gRPC services by exploiting data definitions, even ones that are properly declared in a protobuf specification.

A typical, if unintentional, misuse of a gRPC service is when a bug in a script causes it to produce excessive requests to the service. For example, suppose an automation script issues an API call when a certain condition occurs, which the designers expect to happen every two seconds. Due to a mistake in the definition of the condition, however, the script issues the call every two milliseconds, creating an unexpected burden on the backend gRPC service.
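The arithmetic behind this example is worth spelling out: a call every two seconds is 0.5 calls per second, while a call every two milliseconds is 500 calls per second, a thousandfold increase in load. The sketch below simulates a script that checks its trigger condition once per millisecond. The `should_call` condition, the `count_calls` helper, and modeling the bug as a threshold written in the wrong unit are all hypothetical choices made for illustration.

```python
INTENDED_THRESHOLD_MS = 2000   # designers expect a call every two seconds
BUGGY_THRESHOLD_MS = 2         # hypothetical bug: "2" written where 2000 ms was meant

def should_call(elapsed_ms: int, threshold_ms: int) -> bool:
    """Hypothetical trigger condition: fire once enough time has passed."""
    return elapsed_ms >= threshold_ms

def count_calls(threshold_ms: int, duration_ms: int, tick_ms: int = 1) -> int:
    """Simulate a script that checks its condition every `tick_ms` milliseconds."""
    calls, last_call_ms = 0, 0
    for now_ms in range(tick_ms, duration_ms + 1, tick_ms):
        if should_call(now_ms - last_call_ms, threshold_ms):
            calls += 1            # one API call to the backend gRPC service
            last_call_ms = now_ms
    return calls

# Over ten seconds the intended script makes 5 calls; the buggy one makes 5,000.
print(count_calls(INTENDED_THRESHOLD_MS, 10_000), count_calls(BUGGY_THRESHOLD_MS, 10_000))
```

Nothing about the buggy traffic looks malicious on a per-request basis, which is why this kind of misuse is hard to tell apart from a deliberate flood.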

Other examples of DoS attacks on a gRPC application include:

- The insertion of a malicious field in a gRPC message may cause the application to fail.

- A Slow POST attack sends partial gRPC requests. Anticipating the arrival of the remainder of each request, the application or server keeps the connection open. The concurrent connection pool might become full, causing the server to reject additional connection attempts from clients.

- An HTTP/2 Settings Flood (CVE-2019-9515), in which the attacker sends empty SETTINGS frames to the gRPC service, consumes NGINX resources, making it unable to serve new requests.
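The Settings Flood works because, under the HTTP/2 specification (RFC 7540), every SETTINGS frame without the ACK flag obliges the receiver to send back an acknowledgment. The sketch below parses the standard 9-byte HTTP/2 frame header and counts non-ACK SETTINGS frames per connection. The `SettingsFloodDetector` class, its per-window threshold, and the connection IDs are hypothetical; this is a generic illustration, not how NGINX or NGINX App Protect DoS implements its defenses.

```python
from collections import defaultdict

FRAME_HEADER_LEN = 9   # every HTTP/2 frame starts with a 9-byte header
SETTINGS_TYPE = 0x4    # frame type code for SETTINGS
ACK_FLAG = 0x1         # SETTINGS frames with this flag are acknowledgments

def parse_frame_header(header: bytes):
    """Split an HTTP/2 frame header into (length, type, flags, stream_id)."""
    if len(header) != FRAME_HEADER_LEN:
        raise ValueError("an HTTP/2 frame header is exactly 9 bytes")
    length = int.from_bytes(header[0:3], "big")                  # 24-bit payload length
    frame_type = header[3]
    flags = header[4]
    stream_id = int.from_bytes(header[5:9], "big") & 0x7FFFFFFF  # drop the reserved bit
    return length, frame_type, flags, stream_id

class SettingsFloodDetector:
    """Hypothetical per-connection counter of non-ACK SETTINGS frames."""

    def __init__(self, max_settings_per_window: int = 100):
        self.max_settings = max_settings_per_window   # assumed threshold
        self.counts = defaultdict(int)                # connection id -> SETTINGS count

    def observe(self, conn_id: str, header: bytes) -> bool:
        """Record one frame; return True once the connection exceeds the limit."""
        _, frame_type, flags, _ = parse_frame_header(header)
        if frame_type == SETTINGS_TYPE and not (flags & ACK_FLAG):
            self.counts[conn_id] += 1   # each one obliges the server to send an ACK
        return self.counts[conn_id] > self.max_settings

    def reset_window(self) -> None:
        """Call at the start of each time window (for example, every second)."""
        self.counts.clear()

# An empty SETTINGS frame: length 0, type 0x4, no flags, stream 0.
empty_settings = bytes([0, 0, 0, SETTINGS_TYPE, 0, 0, 0, 0, 0])
detector = SettingsFloodDetector(max_settings_per_window=3)
results = [detector.observe("conn-1", empty_settings) for _ in range(5)]
print(results)  # [False, False, False, True, True]
```

A fixed per-connection threshold like this only addresses the Settings Flood; it does nothing against floods of well-formed gRPC requests, which is where behavioral approaches come in.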

Unleash the Power of Dynamic User and Site Behavior Analysis to Mitigate gRPC DoS Attacks with NGINX App Protect DoS

Securing applications from today’s DoS attacks requires a modern approach. To protect complex and adaptive applications, you need a solution that provides highly precise, dynamic protection based on user and site behavior and removes the burden from security teams while supporting rapid application development and competitive advantage.

F5 NGINX App Protect DoS is a lightweight software module for NGINX Plus, built on F5’s market‑leading WAF and behavioral protection. Designed to defend against even the most sophisticated Layer 7 DoS attacks, NGINX App Protect DoS uses unique algorithms to create a dynamic statistical model that provides adaptive machine learning and automated protection. It continuously measures mitigation effectiveness, adapts to changing user and site behavior, and performs proactive server health checks. For details, see How NGINX App Protect Denial of Service Adapts to the Evolving Attack Landscape on our blog.

Behavioral analysis is provided for both traditional HTTP apps and modern apps that use HTTP/2. NGINX App Protect DoS mitigates attacks based on both signatures and bad‑actor identification. In the initial signature‑mitigation phase, NGINX App Protect DoS profiles the attributes associated with anomalous behavior to create dynamic signatures that then block requests matching this behavior going forward. If the attack persists, NGINX App Protect DoS moves into the bad‑actor mitigation phase.
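To make the idea of a dynamic signature concrete, here is a minimal sketch that compares how often each request attribute value appears during an attack versus during normal traffic, and keeps the values that are common in the attack but rare in the baseline. This frequency-ratio rule, its thresholds, and the request attributes shown are assumptions chosen for illustration; NGINX App Protect DoS's actual algorithms are not described here.

```python
from collections import Counter

def attribute_shares(requests):
    """Fraction of requests carrying each (attribute, value) pair."""
    counts = Counter()
    for req in requests:
        counts.update(req.items())
    return {pair: n / len(requests) for pair, n in counts.items()}

def build_signature(baseline, attack, min_share=0.5, min_ratio=5.0):
    """Keep pairs that are common during the attack and rare in the baseline."""
    normal = attribute_shares(baseline)
    during = attribute_shares(attack)
    signature = {}
    for (attr, value), share in during.items():
        normal_share = normal.get((attr, value), 0.0)
        if share >= min_share and share >= min_ratio * normal_share:
            signature[attr] = value
    return signature

def matches(signature, request):
    """A request matches if it carries every attribute value in the signature."""
    return bool(signature) and all(request.get(a) == v for a, v in signature.items())

# Hypothetical request attributes for a gRPC shopping service.
baseline = [
    {"path": "/shop.Cart/GetCart", "user_agent": "grpc-go/1.40", "content_type": "application/grpc"},
    {"path": "/shop.Cart/AddItem", "user_agent": "grpc-java/1.41", "content_type": "application/grpc"},
    {"path": "/shop.Cart/GetCart", "user_agent": "grpc-python/1.39", "content_type": "application/grpc"},
    {"path": "/shop.Cart/AddItem", "user_agent": "grpc-go/1.40", "content_type": "application/grpc"},
]
attack = [{"path": "/shop.Search/Find", "user_agent": "bot/0.1",
           "content_type": "application/grpc"}] * 9 + baseline[:1]

signature = build_signature(baseline, attack)
print(signature)  # {'path': '/shop.Search/Find', 'user_agent': 'bot/0.1'}
```

Note that `content_type` is excluded even though every attack request carries it, because legitimate traffic carries it just as often; blocking on it would deny service to everyone.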

Based on statistical anomaly detection, NGINX App Protect DoS identifies bad actors by source IP address and TLS fingerprint, enabling it to generate and deploy dynamic signatures that automatically identify and mitigate these specific patterns of attack traffic. Unlike traditional DoS solutions on the market, which detect an attack when a volumetric threshold is exceeded, NGINX App Protect DoS can block attacks in which requests look completely legitimate and each attacker might even generate less traffic than the average legitimate user.
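The sketch below illustrates why identifying clients by the pair (source IP address, TLS fingerprint) does not depend on traffic volume. It groups requests by that pair and flags clients whose requests are mostly anomalous, so an attacker sending fewer requests than a legitimate user is still caught, and two clients behind the same IP address are told apart. The `is_anomalous` callback, the thresholds, and the request fields are hypothetical; the IP addresses come from the documentation ranges.

```python
from collections import defaultdict

def find_bad_actors(requests, is_anomalous, min_share=0.8, min_requests=3):
    """Group requests by (source IP, TLS fingerprint) and flag clients whose
    traffic is mostly anomalous, regardless of how much traffic they send."""
    totals = defaultdict(int)
    anomalous = defaultdict(int)
    for req in requests:
        client = (req["src_ip"], req["tls_fp"])
        totals[client] += 1
        if is_anomalous(req):
            anomalous[client] += 1
    return {
        client
        for client, total in totals.items()
        if total >= min_requests and anomalous[client] / total >= min_share
    }

def looks_like_attack(req):
    # Stand-in for a signature match; the "ua" field is hypothetical.
    return req["ua"] == "bot/0.1"

traffic = (
    [{"src_ip": "198.51.100.7", "tls_fp": "fp-browser", "ua": "app/2.1"}] * 10  # legitimate user
    + [{"src_ip": "203.0.113.9", "tls_fp": "fp-script", "ua": "bot/0.1"}] * 4  # attacker
    + [{"src_ip": "198.51.100.7", "tls_fp": "fp-script", "ua": "bot/0.1"}] * 3  # attacker behind the same IP
)
print(sorted(find_bad_actors(traffic, looks_like_attack)))
# [('198.51.100.7', 'fp-script'), ('203.0.113.9', 'fp-script')]
```

Both attackers send fewer requests than the legitimate user, so a per-client volume threshold would miss them, and blocking by IP address alone would also block the legitimate user at 198.51.100.7.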

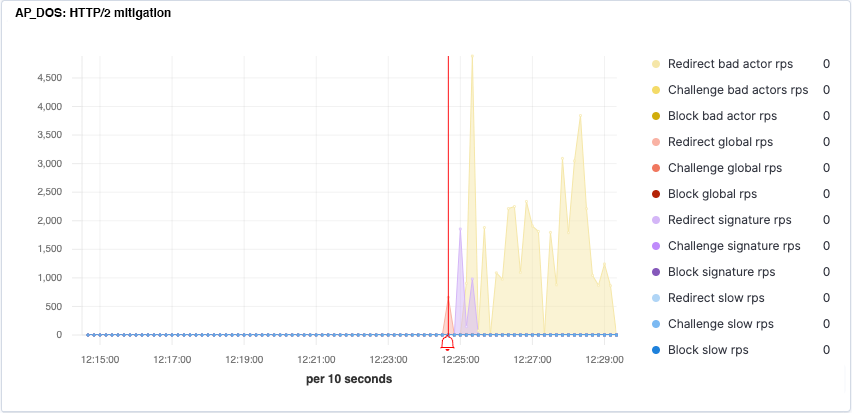

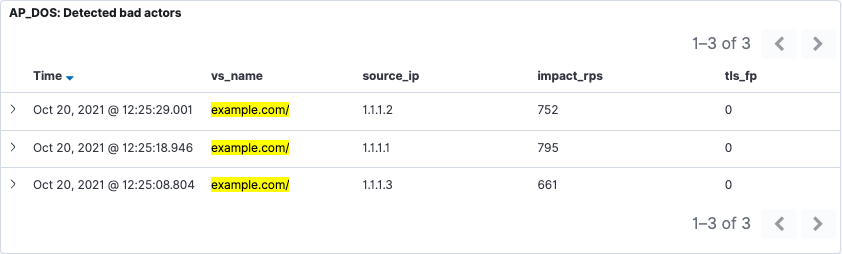

The following Kibana dashboards show how NGINX App Protect DoS quickly and automatically detects and mitigates a DoS flood attack on a gRPC application.

Figure 1 displays a gRPC application experiencing a DoS flood attack. In the context of gRPC, the critical metric is datagrams per second (DPS), which corresponds to the rate of messages per second. In this image, the yellow curve represents the learning process: when the Baseline DPS prediction converges toward the Incoming DPS value (in blue), NGINX App Protect DoS has learned what “normal” traffic for this application looks like. The sharp rise in DPS at 12:25:00 indicates the start of an attack. The red alarm bell marks the point at which NGINX App Protect DoS is confident that an attack is in progress and starts the mitigation phases.
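The relationship between the Baseline DPS and Incoming DPS curves can be sketched with a simple exponentially weighted moving average (EWMA): the baseline learns from each sample during normal operation, and an alarm fires when incoming DPS far exceeds it. The EWMA model, the `alpha`, `warmup`, and `factor` parameters, and the sample values are assumptions for illustration; NGINX App Protect DoS's statistical model is more sophisticated than a single moving average.

```python
def detect_attack(dps_series, alpha=0.1, warmup=20, factor=3.0):
    """Return the index of the first sample where incoming DPS exceeds
    `factor` times the learned baseline, or None if no alarm fires."""
    baseline = None
    for i, dps in enumerate(dps_series):
        if baseline is None:
            baseline = float(dps)   # seed the baseline with the first sample
            continue
        if i >= warmup and dps > factor * baseline:
            return i                # alarm: traffic far above "normal"
        # Learn: move the baseline a fraction alpha toward the incoming value.
        baseline = alpha * dps + (1 - alpha) * baseline
    return None

normal = [100, 104, 97, 101, 99] * 6   # 30 samples of ordinary traffic
flood = [1200] * 5                     # sudden spike in messages per second
print(detect_attack(normal + flood))   # 30
print(detect_attack(normal))           # None
```

The baseline is not updated with the sample that raises the alarm, so the attack traffic does not immediately redefine what "normal" looks like.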

Figure 2 shows NGINX App Protect DoS in the process of detecting anomalous behavior and thwarting a gRPC DoS flood attack using a phased mitigation approach. The red spike indicates the number of HTTP/2 redirections sent to all clients during the global rate‑mitigation phase. The purple graph represents the redirections sent to specific clients when their requests match a signature that models the anomalous behavior. The yellow graph represents the redirections sent to specific detected bad actors identified by IP address and TLS fingerprint.
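During the global rate‑mitigation phase shown in Figure 2, excess requests from all clients are turned away until finer‑grained mitigation takes over. A token bucket is one common way to cap an aggregate request rate while allowing short bursts, and the sketch below uses it purely as an illustration of the idea. Whether NGINX App Protect DoS uses a token bucket is not stated here, and the rate, burst size, and timing values are hypothetical.

```python
class TokenBucket:
    """Caps the aggregate request rate at `rate_per_s`, allowing short bursts."""

    def __init__(self, rate_per_s: float, burst: int):
        self.rate = rate_per_s
        self.capacity = float(burst)
        self.tokens = float(burst)   # start full
        self.last = None             # time of the previous request, in seconds

    def allow(self, now_s: float) -> bool:
        if self.last is not None:
            # Refill in proportion to elapsed time, never beyond the burst size.
            self.tokens = min(self.capacity, self.tokens + (now_s - self.last) * self.rate)
        self.last = now_s
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False                 # over the limit: reject or redirect

# Flood: 1,000 requests in one second against a learned baseline of 100 per second.
bucket = TokenBucket(rate_per_s=100, burst=10)
accepted = sum(bucket.allow(i / 1000) for i in range(1000))
print(accepted)
```

Roughly 110 of the 1,000 requests get through (the burst of 10 plus one second of refill). A global limit like this is blunt because legitimate and malicious clients are throttled alike, which is why signature and bad‑actor mitigation follow it.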

Figure 3 shows a dynamic signature created by NGINX App Protect DoS that is powered by machine learning and profiles the attributes associated with the anomalous behavior from this gRPC flood attack. The signature blocks requests that match it during the initial signature‑mitigation phase.

Figure 4 shows how NGINX App Protect DoS moves from signature‑based mitigation to bad‑actor mitigation when an attack persists. By analyzing user behavior, NGINX App Protect DoS has identified bad actors by the source IP addresses and TLS fingerprints shown here. Instead of inspecting every request for signatures that indicate anomalous behavior, NGINX App Protect DoS now denies service to specific attackers. This enables generation of dynamic signatures that identify these specific attack patterns and mitigate them automatically.

With gRPC APIs, you use the gRPC interface to set security policy in the type library (IDL) file and the proto definition files for the protobuf. This provides a zero‑touch security policy solution – you don’t have to rely on the protobuf definition to protect the gRPC service from attacks. gRPC proto files are frequently used as part of CI/CD pipelines, aligning security and development teams by automating protection and enabling security-as-code for full CI/CD pipeline integration. NGINX App Protect DoS ensures consistent security by seamlessly integrating protection into your gRPC applications so that they are always protected by the latest security policies.
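Because gRPC maps each method to an HTTP/2 request path of the form `/package.Service/Method`, a proto file already enumerates every legitimate endpoint of a service. The sketch below shows how a CI/CD step might derive an allowlist of paths from a proto file. It is a regex‑based illustration only, not how NGINX App Protect DoS consumes proto files: it assumes a single `package` statement, ignores comments and imports, and a production pipeline would more likely work from `protoc` descriptors. The `grpc_paths` and `is_allowed` helpers and the `shop.v1` service are hypothetical.

```python
import re

PACKAGE_RE = re.compile(r"^\s*package\s+([\w.]+)\s*;", re.MULTILINE)
SERVICE_RE = re.compile(r"\bservice\s+(\w+)\s*\{")
RPC_RE = re.compile(r"\brpc\s+(\w+)\s*\(")

def grpc_paths(proto_text: str) -> list:
    """List the HTTP/2 :path values ("/package.Service/Method") a proto file defines."""
    pkg = PACKAGE_RE.search(proto_text)
    prefix = pkg.group(1) + "." if pkg else ""
    paths = []
    for svc in SERVICE_RE.finditer(proto_text):
        # Walk forward to the service's matching closing brace, so that
        # option blocks such as `rpc X(...) returns (...) {}` are handled.
        depth, i = 1, svc.end()
        while depth and i < len(proto_text):
            if proto_text[i] == "{":
                depth += 1
            elif proto_text[i] == "}":
                depth -= 1
            i += 1
        body = proto_text[svc.end():i]
        for rpc in RPC_RE.finditer(body):
            paths.append(f"/{prefix}{svc.group(1)}/{rpc.group(1)}")
    return paths

CART_PROTO = """
syntax = "proto3";
package shop.v1;

service Cart {
  rpc GetCart (GetCartRequest) returns (CartReply);
  rpc AddItem (AddItemRequest) returns (CartReply) {}
}
"""

ALLOWED_PATHS = frozenset(grpc_paths(CART_PROTO))

def is_allowed(path: str) -> bool:
    """Hypothetical policy check: only methods declared in the proto file pass."""
    return path in ALLOWED_PATHS

print(sorted(ALLOWED_PATHS))                 # ['/shop.v1.Cart/AddItem', '/shop.v1.Cart/GetCart']
print(is_allowed("/shop.v1.Cart/Checkout"))  # False
```

Regenerating the allowlist whenever the proto file changes keeps the policy in step with the service definition without manual edits.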

While gRPC provides the speed and flexibility developers need to design and deploy modern applications, the inherent open nature of its framework makes it highly vulnerable to DoS attacks. To stay ahead of severe Layer 7 DoS attacks that can result in performance degradation, application and website outages, abandoned revenue, and damage to customer loyalty and brand, you need a modern defense. That’s why NGINX App Protect DoS is essential for your modern gRPC applications.

To try NGINX App Protect DoS with NGINX Plus for yourself, start your free 30-day trial today or contact us to discuss your use cases.

For more information, check out our whitepaper, Securing Modern Apps Against Layer 7 DoS Attacks.