InkaBinka is revolutionizing the way news is delivered and consumed on the Internet, by distilling full‑length news articles into four essential bullet points that readers can absorb in 20 seconds or less, accompanied by animated imagery. InkaBinka’s news aggregator engine is growing rapidly and needs a web infrastructure that can hyperscale while delivering an outstanding customer experience.

In this recap of my talk at nginx.conf 2014, I describe how InkaBinka has deployed HP Moonshot and NGINX Plus to achieve better performance and enable rapid, modular expansion of our web infrastructure. I also share insights on best practices to help you deploy your own web infrastructure in a box.

InkaBinka delivers news content on our website and via mobile apps for iOS and Android. On the backend, a combination of third‑party and proprietary software handles web scraping, image selection and enhancement, and natural language processing (NLP) to find news stories and distill each one into four bullet points with associated images. Our focus on attracting quality users as opposed to a large quantity of them seems to be working. A common way to measure an app’s success is user retention rate: the percentage of people who have downloaded an app and actually use it on a given day. InkaBinka’s user retention rate is about twice the industry average of 11%, and we rank among the 100 top news apps in 21 countries.

Migrating from the Cloud to HP Moonshot

Like many startups, we initially deployed InkaBinka in the cloud. But as we added more database nodes, we started to experience latency issues. Our backend is fairly complex and requires precise coordination among the components. Because cloud compute and network resources are so dynamic, the cloud simply couldn’t deliver performance and latency consistent enough for us to count on. For example, I would get an alert saying that a specific database node was down. When I logged in, I found that it was not down, but the replication and synchronization of the database nodes were causing hiccups that resulted in a lot of errors.

At about the same time, we were introduced to HP Moonshot. It wasn’t even officially released yet, so we got some of the first units. It has delivered blazingly fast performance and let us scale out easily, and it paid for itself in only four months compared to the cost of the cloud. My CEO, Kevin McGushion, forecasts that we can reduce latency by a factor of 4 to 5 compared to the cloud.

In terms of cost, our cloud bill was substantial because of all the image processing, web scraping, and natural language processing going on in the backend. We’ve gotten a four‑month ROI, so you could say it’s almost “fiscally irresponsible” not to go with Moonshot. The best part is that I have complete control over my infrastructure and we’re not competing with other tenants. I call Moonshot my own “cloud in a box.”

The InkaBinka Stack: Couchbase, Node.js, and NGINX Plus

Couchbase Is the Database

As we developed InkaBinka, I was initially looking for a caching layer on top of Microsoft SQL Server. I came across Couchbase, which is a blend of memcached and CouchDB (a NoSQL document database). It runs in RAM and persists to disk. Super fast and a great admin interface. I chose it partly because I think it’s important to consider performance at the start of the design phase, rather than having to figure out how to achieve good performance only after your site is getting substantial traffic. Been there, done that…not fun.

We run four Couchbase server nodes with one replica copy. Couchbase distributes the data across the pool of servers (in our case, four) and keeps a replica of each node’s data elsewhere in the pool. If a node goes down, the replica of its data is activated immediately and is ready to serve requests. The result has been zero downtime. We also use cross‑data center replication (XDCR) to replicate to the cloud. When performing maintenance on our network, I can use DNS to redirect traffic to the cloud without affecting the user experience.
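A toy model makes the one-replica idea concrete. The sketch below is a simplification and not Couchbase's actual placement logic: the node names, the partition count, and every helper function are made up for illustration. It gives each partition an active copy on one node and a replica on a different node, then promotes the replicas when a node fails.

```python
# Toy model of one-replica data placement across four nodes.
# Assumption: node names, PARTITIONS, and all helpers are hypothetical;
# this is not Couchbase's real placement algorithm.
import zlib

NODES = ["node-a", "node-b", "node-c", "node-d"]
PARTITIONS = 16


def build_map(nodes, partitions):
    """Give each partition an active node and a replica on the next node."""
    placement = {}
    for p in range(partitions):
        active = nodes[p % len(nodes)]
        replica = nodes[(p + 1) % len(nodes)]
        placement[p] = {"active": active, "replica": replica}
    return placement


def partition_for(key, partitions):
    """Hash a document key to a partition number."""
    return zlib.crc32(key.encode("utf-8")) % partitions


def fail_over(placement, dead_node):
    """Promote the replica of every partition whose active copy was on dead_node."""
    for entry in placement.values():
        if entry["active"] == dead_node:
            entry["active"], entry["replica"] = entry["replica"], None
        elif entry["replica"] == dead_node:
            entry["replica"] = None
    return placement


def node_for(key, placement):
    """Return the node currently serving the given key."""
    return placement[partition_for(key, len(placement))]["active"]


placement = build_map(NODES, PARTITIONS)
fail_over(placement, "node-b")
serving = {entry["active"] for entry in placement.values()}
print(sorted(serving))  # ['node-a', 'node-c', 'node-d']
```

Every partition still has an active copy after the failure, which is the property that keeps the data available while the failed node is repaired or replaced.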

To scale up with Couchbase, all I have to do is allocate another Moonshot cartridge, provision from our library of custom images, and add the node to the cluster. Couchbase begins evenly redistributing the data in real time across the now‑increased available pool while still serving data at ridiculous speeds.
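To see why adding a node does not require reshuffling everything, here is a minimal sketch of a rebalance. It assumes a made-up cluster of 20 partitions on four cartridges; the names and the `rebalance` helper are hypothetical and far simpler than what Couchbase really does. Only enough partitions move to give the new node its fair share.

```python
# Toy rebalance: move the minimum number of partitions onto a newly added node.
# Assumption: cartridge names, partition count, and rebalance() are illustrative only.
from collections import Counter


def rebalance(owner_of, new_node):
    """Shift partitions to new_node until it holds its fair share."""
    counts = Counter(owner_of.values())
    counts[new_node] = 0
    fair_share = len(owner_of) // len(counts)
    moved = []
    while counts[new_node] < fair_share:
        # Take from whichever existing node currently holds the most partitions.
        donor = max((n for n in counts if n != new_node), key=lambda n: counts[n])
        partition = min(p for p, n in owner_of.items() if n == donor)
        owner_of[partition] = new_node
        counts[donor] -= 1
        counts[new_node] += 1
        moved.append(partition)
    return moved


cartridges = ["cart-1", "cart-2", "cart-3", "cart-4"]
owner_of = {p: cartridges[p % 4] for p in range(20)}

moved = rebalance(owner_of, "cart-5")
print(moved)  # [0, 1, 2, 3]
print(sorted(Counter(owner_of.values()).items()))
```

In this toy run only 4 of the 20 partitions change owner, and the other 16 keep serving from where they were.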

Node.js Serves the API

We use Node.js to serve our API. Its event‑driven model provides fast performance even during floods of requests. We’re running 4 Node.js processes on each of 3 servers, for a total of 12 listeners. For now, this is way more than necessary, but I purposely overbuilt the system to avoid future growing pains. (As a reference point, even a site as big as LinkedIn needs only 3 servers for Node, down from 30.) With extensive tuning to the backend, most of our routes return data to the user in under 10 milliseconds.

When the InkaBinka app loads on a mobile device, it calls the API to fetch news stories. The delivered content is customized to the specific device size. For example, there’s a backend process that generates a separate version of every image for 74 device sizes, portrait and landscape. I also track device usage. Popular device form factors are moved to the top of the processing queue so that InkaBinka delivers images that are better sized for them.
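The "popular devices first" idea maps naturally onto a priority queue. The following is a minimal sketch, assuming invented usage counts and device sizes (none of this is real InkaBinka data), that queues a portrait and a landscape variant of each size and processes the most-used sizes first.

```python
# Priority queue sketch: resize images for the most-used device sizes first.
# Assumption: DEVICE_USAGE values and both helper functions are hypothetical.
import heapq

DEVICE_USAGE = {
    (320, 568): 120,
    (768, 1024): 45,
    (375, 667): 300,
    (1080, 1920): 80,
}


def build_resize_queue(usage):
    """Queue a portrait and a landscape job per device size, keyed by popularity."""
    heap = []
    for (width, height), count in usage.items():
        for size in ((width, height), (height, width)):
            # heapq is a min-heap, so negate the count to pop the most popular first.
            heapq.heappush(heap, (-count, size))
    return heap


def drain(heap):
    """Pop every job and return the sizes in processing order."""
    order = []
    while heap:
        _, size = heapq.heappop(heap)
        order.append(size)
    return order


order = drain(build_resize_queue(DEVICE_USAGE))
print(order[:2])  # [(375, 667), (667, 375)]
```

Updating the counts from tracked usage and rebuilding the queue is enough to change which sizes get processed first.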

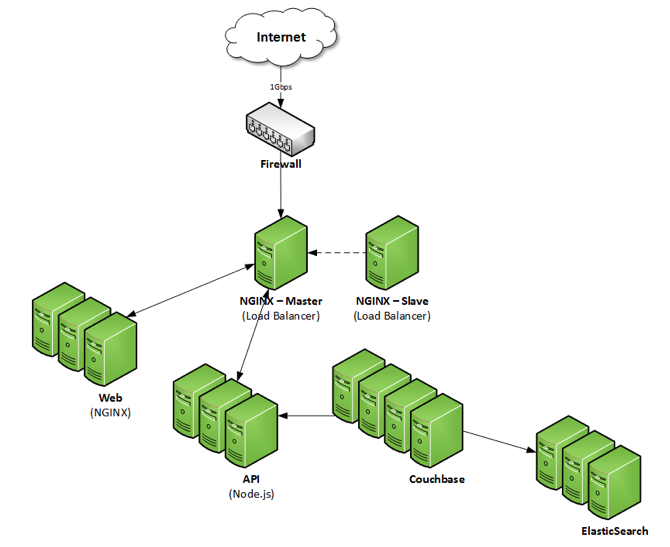

NGINX Plus Serves Static Web Content and Load Balances

We use NGINX Plus as a web server and load balancer. We have really enjoyed the enhanced features in NGINX Plus, which I describe as “ridiculously cheap for what you get”.

About 90% of InkaBinka traffic is generated by mobile users, but we have a website too. We actually deployed it first to encapsulate the mobile web code, which we then wrapped for iOS and Android. Our frontend framework, called Famo.us, allows us to “write once, run anywhere,” but that is a different blog post altogether. I have three NGINX Plus instances serving the static content (HTML, CSS, and JavaScript) for the website. The web server doesn’t need the full 8 cores and 32 GB of RAM available on a Moonshot cartridge, so I’ve taken 3 cartridges and virtualized each to use half the resources (4 cores and 16 GB) for an NGINX Plus web server and the other half for a Node.js API server.

There are two additional NGINX Plus instances that load balance the web and API servers in an active‑passive configuration. Each load balancer uses the full 8 cores and 32 GB on a cartridge. Being able to perform live config changes with NGINX Plus is AMAZING!

So the processing path on the website works like this: the active NGINX Plus load balancer distributes client requests across the three NGINX Plus web server instances to serve the static content. After the content loads in the browser, calls to the API are load balanced across the three API servers, which communicate with Couchbase. We’re also starting to leverage Elasticsearch more and more for searching. In the case of the mobile app, the static content is already loaded locally, so the app makes API calls as soon as the connection is established.
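The request path can be modeled in a few lines. This is a sketch under stated assumptions rather than our actual configuration: the server names and the `/api/` path prefix are invented, and round robin is assumed because it is the default NGINX load‑balancing method, not because this post specifies one.

```python
# Toy model of the request path: one active load balancer, two server pools.
# Assumption: server names, the "/api/" prefix, and round robin are illustrative.
from itertools import cycle

WEB_SERVERS = ["web-1", "web-2", "web-3"]
API_SERVERS = ["api-1", "api-2", "api-3"]


class LoadBalancer:
    def __init__(self, web_servers, api_servers):
        self._web = cycle(web_servers)
        self._api = cycle(api_servers)

    def route(self, path):
        """Send API calls to the API pool and everything else to the web pool."""
        pool = self._api if path.startswith("/api/") else self._web
        return next(pool)


def pick_balancer(active_healthy):
    """Active-passive: the standby takes traffic only if the active one is down."""
    return "lb-active" if active_healthy else "lb-passive"


lb = LoadBalancer(WEB_SERVERS, API_SERVERS)
paths = ["/index.html", "/app.js", "/api/stories", "/api/stories",
         "/style.css", "/api/stories", "/api/stories"]
routed = [lb.route(path) for path in paths]
print(routed)
# ['web-1', 'web-2', 'api-1', 'api-2', 'web-3', 'api-3', 'api-1']
```

A mobile client skips the static requests entirely, so in this model its traffic would touch only the API pool.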

How Many Users Can InkaBinka Serve?

I was interested in figuring out how many InkaBinka users we can support with one Moonshot chassis. It can be difficult to simulate large numbers of concurrent users – it takes lots of CPU resources to generate threads and high bandwidth. Knowing that 90% of the traffic at InkaBinka is API calls from mobile clients and the most common call is to fetch a fresh batch of news stories, I decided to ask how many users accessing the site within a 1‑second period can be served their stories within 1 second.

It turned out that we ran out of resources for generating demand before NGINX Plus or our backend reached their limits. At that point we were serving 6,500 concurrent users from a single Moonshot chassis. I reviewed past usage data to find the 1 second when the largest number of InkaBinka’s current users accessed the site concurrently. Extrapolating from that number and the simulated 6,500 concurrent users per second, I determined that our current configuration can support a user base of 5.5 million…and we’re currently only using about 40% of our Moonshot cartridges. So it’s really closer to 14 million in a fully allocated Moonshot chassis.
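The extrapolation is simple arithmetic, shown here as a worked example. It uses only the figures quoted above and assumes that capacity scales linearly with the number of cartridges in use, which is an idealization.

```python
# Worked arithmetic for the capacity estimate.
# Assumption: capacity scales linearly with cartridge allocation.
SIMULATED_PEAK_USERS_PER_SECOND = 6_500
SUPPORTED_USER_BASE = 5_500_000
CARTRIDGES_IN_USE_PERCENT = 40

# How many users in the total base each peak-second user represents.
base_per_peak_user = SUPPORTED_USER_BASE / SIMULATED_PEAK_USERS_PER_SECOND

# Scale the supported user base up to a fully allocated chassis.
full_chassis_user_base = SUPPORTED_USER_BASE * 100 // CARTRIDGES_IN_USE_PERCENT

print(round(base_per_peak_user))  # 846
print(full_chassis_user_base)     # 13750000
```

So 13.75 million is where the "closer to 14 million" figure comes from, and the ratio of roughly 846 reflects how few of the total users hit the site in any single second.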

Another way I’m maximizing performance is by using an HP content delivery network (CDN) solution to serve images from regional hubs. As a result, the only traffic at the main InkaBinka data center in California is text (HTML, CSS, JavaScript).

Enhanced Features in NGINX Plus

We’ve been really happy with the features in NGINX Plus:

- Event‑driven architecture – Like Node.js, NGINX Plus is not thread‑bound, so there’s no need to continually add hardware to meet increasing demand. It’s amazing how quickly NGINX Plus accepts requests and offloads them to the appropriate server resource. We have rarely observed CPU usage above 1 to 2%.

- Superfast static content serving – It basically means the website comes up very quickly.

- On‑the‑fly reconfiguration of load‑balanced resources – This is probably my favorite feature. It used to be that changing the configuration of nodes required stopping and restarting processes as quickly as possible to keep the outage short, and usually doing it at 3 AM to disturb as few customers as possible. My fingernails couldn’t grow back fast enough. With the on‑the‑fly reconfiguration feature, it’s a breeze to change the web or API servers in a load‑balanced cluster to match demand. There’s no downtime and it has always worked.

- Live activity monitoring – Very handy for troubleshooting and keeping an eye on things. I’ve caught myself staring at the dashboard in amazement for way longer than I should have.

- Proactive application health checks – Just like a prostate exam, early detection is the best prevention.

- Gzip compression – Pretty common in a good web server, but still critical.

- Upcoming optimizations for video delivery – InkaBinka will eventually serve video, and we’ll use NGINX Plus for that, running on the Moonshot cartridges that are specialized for video.
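To illustrate the idea behind proactive health checks, here is a minimal sketch of the bookkeeping involved. It is loosely modeled on the `fails` and `passes` thresholds of the NGINX Plus `health_check` directive, but the `HealthTracker` class and the threshold values are hypothetical and this is not how NGINX Plus is implemented.

```python
# Toy health tracker: a server changes state only after enough consecutive
# check results disagree with its current state.
# Assumption: HealthTracker and the thresholds (fails=3, passes=2) are illustrative.
class HealthTracker:
    def __init__(self, fails=3, passes=2):
        self.fails_needed = fails
        self.passes_needed = passes
        self.healthy = True
        self._streak = 0  # consecutive results contradicting the current state

    def record(self, check_passed):
        """Record one check result and return whether the server counts as healthy."""
        if check_passed == self.healthy:
            self._streak = 0
            return self.healthy
        self._streak += 1
        needed = self.fails_needed if self.healthy else self.passes_needed
        if self._streak >= needed:
            self.healthy = not self.healthy
            self._streak = 0
        return self.healthy


tracker = HealthTracker(fails=3, passes=2)
results = [True, False, False, False, True, True]
history = [tracker.record(r) for r in results]
print(history)  # [True, True, True, False, False, True]
```

Requiring several consecutive failures before pulling a server, and several passes before restoring it, keeps a single slow response from flapping the server in and out of the pool.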

Try It Out

Download the InkaBinka app and let me know what you think!

To try NGINX Plus, start your free 30-day trial today or contact us for a demo.