This post is adapted from a webinar hosted on April 7, 2016 by Floyd Smith and Michael Hausenblas. This blog post is the first of two parts, and is focused on microservices; the second part focuses on immutable infrastructure. You can also watch a recording of the webinar.

0:00 Introduction

Floyd: Good morning, good afternoon, and good evening to everybody signed onto this webinar around the world. I’m Floyd Smith from NGINX, and I’m here in San Francisco with Michael Hausenblas, a technical expert at Mesosphere and author of a recent book, Docker Networking and Service Discovery.

I’m going to start by talking about NGINX. We’ll be going over Docker, and then we’re going to go into some of the things that come up when you start using Docker at a production level and how container management and immutable infrastructure work together to help you manage complexity, and also create the freedom and opportunity for your apps to do really cool things.

1:48 The Way It Was

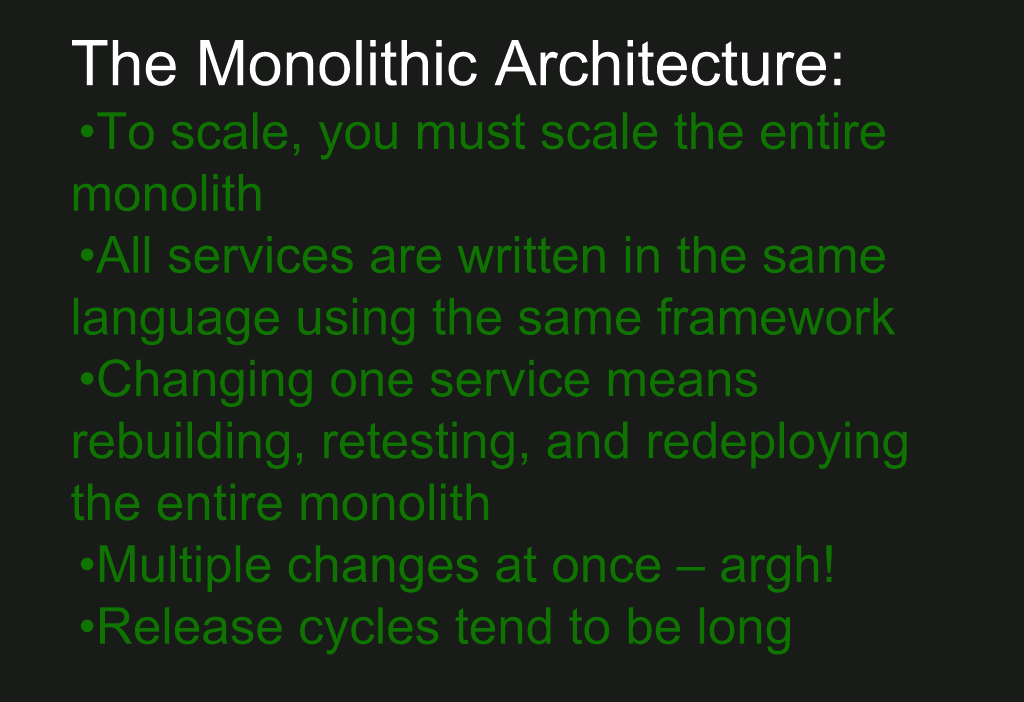

Let’s start with monolithic architecture. We’d love to hear in the comments if you’re running a monolithic application, if you’re moving to microservices, or already on microservices. We recently did a user survey on application development and delivery here at NGINX and we found a real mix, but probably most of what’s out there now is still monolithic, with some of it actively moving toward microservices.

The major problem that comes up with monolithic architecture is that you have to scale everything together.

Since everything’s written in the same language, using the same frameworks, no language ever dies – because there’s a monolith written in that language somewhere and it’s got to be maintained.

Changing one service means rebuilding, retesting, and redeploying the whole monolith. And if there are multiple teams making multiple changes at the same time, you have a combinatorial explosion of possible bugs and a combinatorial explosion of possible communication and coordination problems among your teams. The classic book, The Mythical Man‑Month, talks all about this.

Finally, release cycles tend to be long and a lot of that time is spent talking to one another rather than coding, as we’re all familiar with.

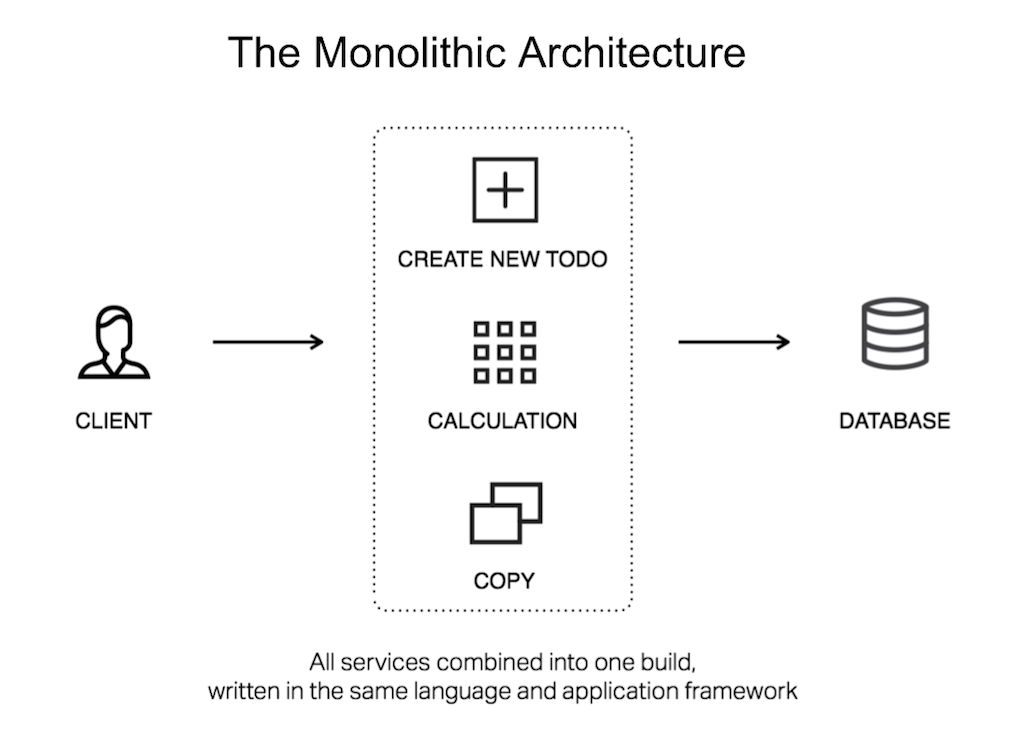

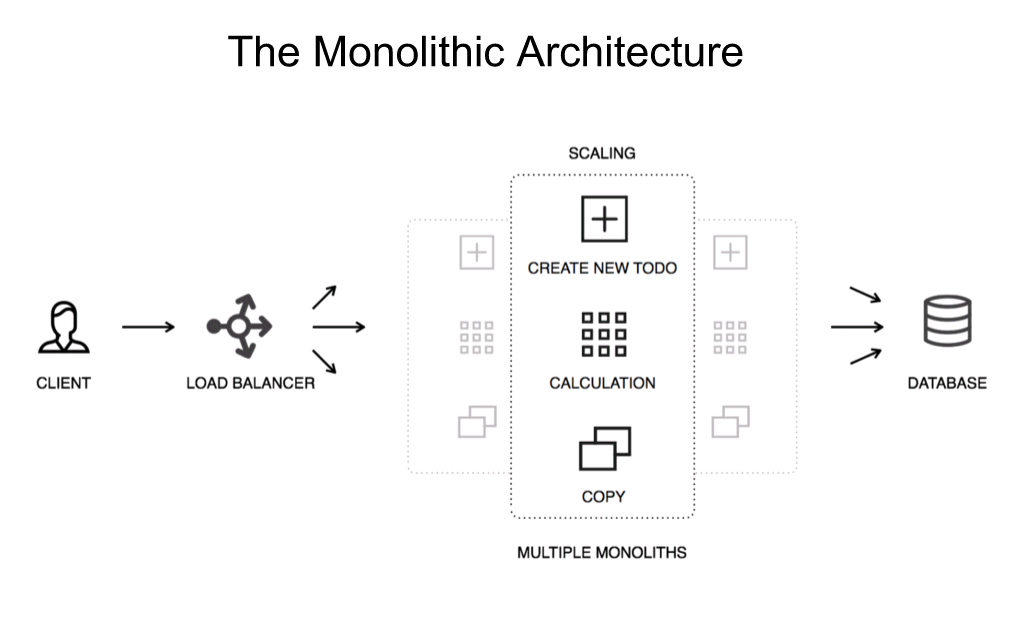

3:02 The Monolithic Architecture

Here you can see a monolithic architecture, and everything’s unitary. If you need to scale your monolith, you need to run multiple copies of the monolith, and you need to get those to coordinate with each other. Alternatively, each copy could maintain its own state and serve its own set of users, but neither of these options is really optimal, and neither gives you the flexibility you want.

A lot of you are no doubt doing this right now and NGINX is a great tool for helping you execute it, but NGINX is also great for pivoting towards the new architecture.

3:43 Microservices Benefits

A microservices architecture gives you continuous delivery, rapid deployment, elasticity, and I would add, flexibility. Each service can scale and be updated independently.

You can use different languages and different frameworks. My favorite example for that is with databases. You may need very different databases for different purposes within an overall app, and it’s great to have the flexibility to use them as needed on a services level.

Each service can be changed, tested, and built independently, and this is where Docker really comes in.

Release cycles can be dramatically shortened, so people are deploying many, many times a day, instead of once every several weeks or months.

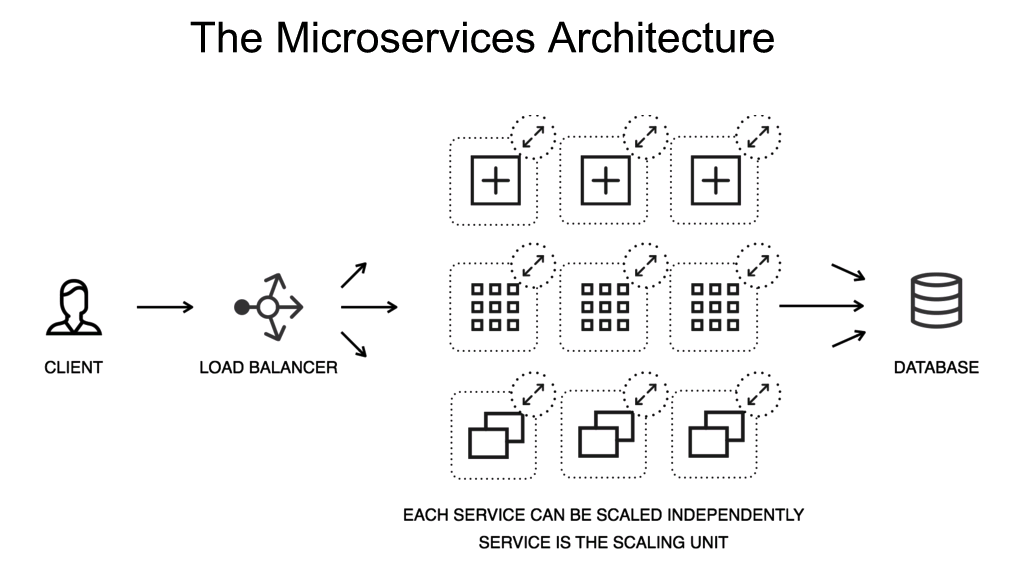

4:40 The Microservices Architecture

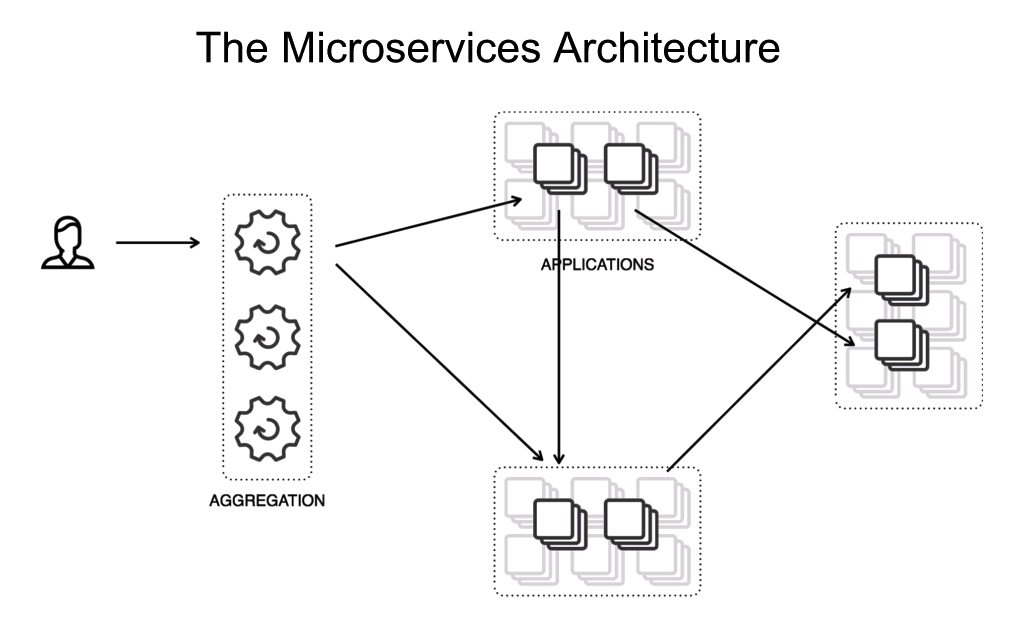

So here’s the microservices architecture, and you can see the pieces of the services talking to each other.

4:50 NGINX Plus with Monoliths

NGINX and NGINX Plus have a lot of capabilities that are great for your existing monolithic application, but also really set you up to pivot to the microservices world. At the end of Part II we’ll be presenting some resources that can help you gradually make the transition. So, if you need more detail, there are plenty of places to follow up.

NGINX and NGINX Plus offer you caching of static content and also microcaching of dynamic content. This makes your monolithic architecture more flexible, and NGINX also helps you do load balancing across multiple instances of your monolith.
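To make the two ideas concrete, here is a minimal Python sketch of microcaching (caching a dynamic response for a very short time) and round-robin load balancing. It only illustrates the concepts and is not how NGINX implements them; the class names `MicroCache` and `RoundRobinBalancer`, the one-second TTL, and the `fetch` callback are all assumptions made for this example.

```python
import itertools
import time


class MicroCache:
    """Cache responses for a very short time-to-live (TTL), e.g. one second."""

    def __init__(self, ttl_seconds=1.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock          # injectable so the cache can be tested
        self._store = {}            # key -> (expiry_time, response)

    def get_or_fetch(self, key, fetch):
        now = self.clock()
        entry = self._store.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]         # still fresh: the backend is not called
        response = fetch(key)       # missing or expired: ask the backend
        self._store[key] = (now + self.ttl, response)
        return response


class RoundRobinBalancer:
    """Hand out backends in turn, one per request."""

    def __init__(self, backends):
        self._cycle = itertools.cycle(list(backends))

    def next_backend(self):
        return next(self._cycle)
```

Even a one-second TTL means that a page requested many times per second reaches the monolith roughly once per second, while users still see content that is at most a second old.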

NGINX, and especially NGINX Plus, can act as an HTTP router. NGINX inspects requests and decides how to satisfy each one. With static file caching, for instance, the app server never sees the request: it gets handled in front of the app server, which doesn’t even have to wake up. Likewise, if you’re running SSL, HTTP/2, or both, all of that can be handled at the load balancer/reverse proxy server level. That takes work off your app server, simplifies your app architecture, and is a step in the direction of microservices.
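The routing decision described here can be sketched in a few lines of Python. This is a conceptual illustration only, not NGINX code: the `STATIC_CACHE` contents, the `handle` function, and the `app_server` callback are hypothetical names chosen for the example.

```python
# Hypothetical cache of static files held at the proxy layer.
STATIC_CACHE = {
    "/static/logo.png": b"<png bytes>",
    "/static/site.css": b"body { margin: 0; }",
}


def handle(path, app_server):
    """Serve cached static files directly; pass everything else upstream.

    Returns a (served_by, body) tuple so the caller can see which
    layer answered the request.
    """
    if path in STATIC_CACHE:
        # The app server never sees this request.
        return ("proxy", STATIC_CACHE[path])
    # Dynamic request: forward it to the application server.
    return ("app", app_server(path))
```

The point of the sketch is the early return: requests that the proxy can answer never reach the application at all.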

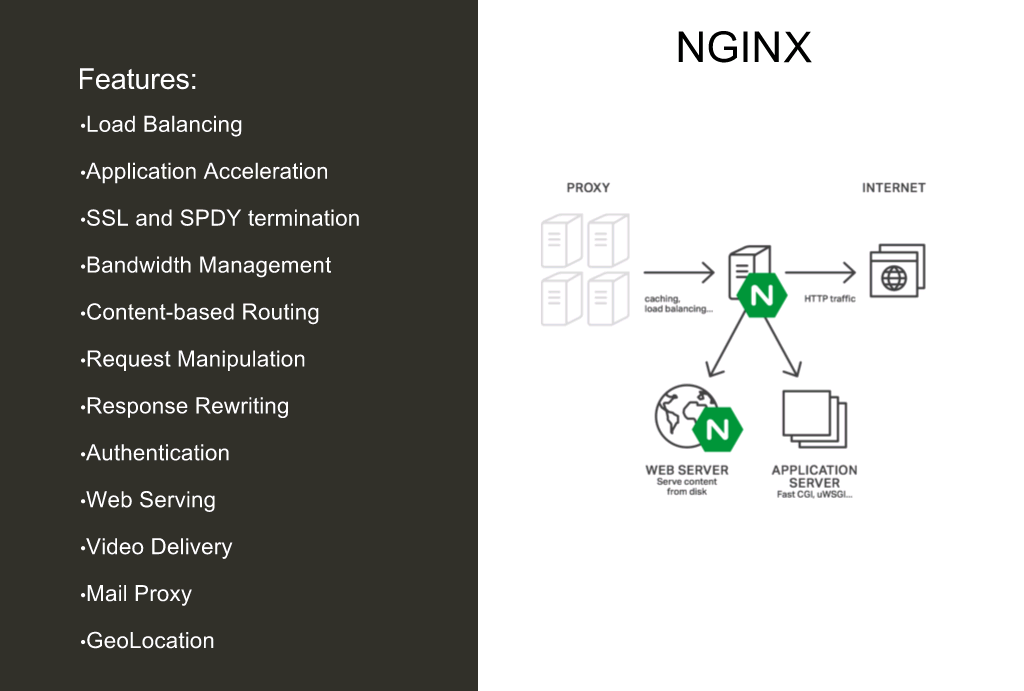

Here are some of the features of NGINX. Check whether anything you need to do right now or in the near future is listed. If so, that’s a great entry point for your project.

6:37 Microservices Architecture Continued

With microservices, everything’s independent. You can scale everything separately. You can update, test, debug, fix things as you need them. So, you can make a same‑day change if there’s a problem in a service, which is incredible and entirely necessary for many apps today.

6:55 NGINX Plus and Microservices

NGINX and NGINX Plus help you step up, so you can easily integrate your app with container environments and DevOps tools.

You can run NGINX Plus in front of your microservices environment. You can run it as an API gateway to your microservices environment. You can run it per microservice.
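As a rough picture of the API gateway role, the sketch below maps URL path prefixes to backend services and picks the longest matching prefix. The route table, the service names, and the `resolve_service` function are all hypothetical; a real gateway would express this in its own configuration rather than in Python.

```python
# Hypothetical route table: URL path prefix -> name of the owning service.
ROUTES = {
    "/users": "user-service",
    "/orders": "order-service",
    "/orders/invoices": "billing-service",
}


def resolve_service(path, routes=ROUTES):
    """Return the service for the longest matching prefix, or None."""
    best = None
    for prefix in routes:
        # Match whole path segments only, so "/ordersX" does not hit "/orders".
        matches = path == prefix or path.startswith(prefix + "/")
        if matches and (best is None or len(prefix) > len(best)):
            best = prefix
    return routes[best] if best is not None else None
```

Clients only ever talk to the gateway, so services behind it can be split, merged, or moved by editing the route table.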

We’re working on a reference architecture that integrates DCOS and NGINX, and we’re very excited about it. We’re thrilled about this piece of the future that we’re seeing, and you will be too as we roll it out.

NGINX Plus gives you a stable, single entry point, and containers are just appearing and disappearing behind it. It looks like chaos at first, but it’s actually kind of lovely.
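One way to picture a stable entry point in front of changing containers is a group of upstream addresses that can be edited while requests keep flowing. The Python sketch below is an assumption-laden illustration, not NGINX Plus code: `UpstreamGroup`, its methods, and the example addresses are invented for this purpose.

```python
class UpstreamGroup:
    """A set of backend addresses that can change while traffic flows."""

    def __init__(self):
        self._servers = []
        self._next = 0

    def add(self, address):
        # Called when a new container starts and registers itself.
        if address not in self._servers:
            self._servers.append(address)

    def remove(self, address):
        # Called when a container stops or fails.
        if address in self._servers:
            self._servers.remove(address)

    def pick(self):
        # Round-robin over whatever servers exist right now.
        if not self._servers:
            raise RuntimeError("no upstream servers available")
        address = self._servers[self._next % len(self._servers)]
        self._next += 1
        return address
```

Clients keep using the same entry point, and only the membership of the group behind it changes as containers come and go.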

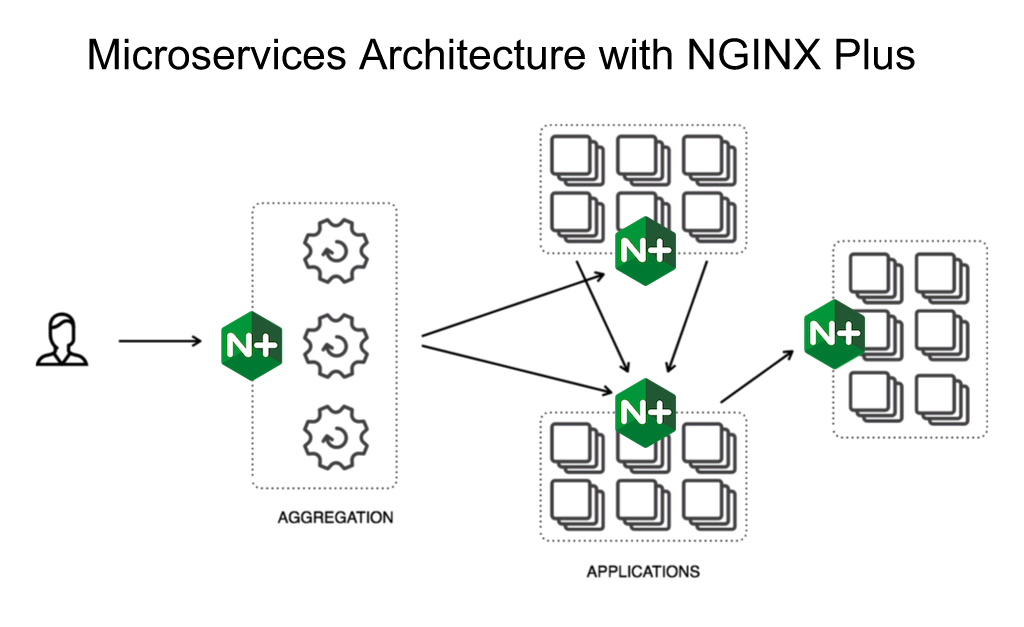

7:56 Microservices Architecture with NGINX Plus

Here’s an example architecture with NGINX Plus in front, at the aggregation level, handling communications with the end user and then handling communications back into your microservices architecture, which is probably cloud‑based.

If you are new to microservices, we recommend reading Introduction to Microservices by Chris Richardson, and continuing on through his complete, seven‑part series on microservices.