This post is adapted from a presentation at nginx.conf 2017 by Rick Nelson, Head of the Sales Engineering Team at NGINX, Inc.

You can view the complete presentation on YouTube.

Introduction

My name is Rick Nelson, and I head up the pre-sales engineering team here at NGINX.

Today, I’m going to talk about some different ideas about how you can use health checks – the active health checks in NGINX Plus – when you need to worry about system resources, and specifically, when you’re running in containers.

I assume, since you’re here at an NGINX conference, that most of you know about NGINX Open Source. You may be aware that NGINX Open Source does have a version of health checks, but the health checks are passive: when NGINX Open Source forwards a request to an upstream server on an existing connection, or tries to open a new connection, and there is an error, NGINX Open Source notices, and can mark the server as down.

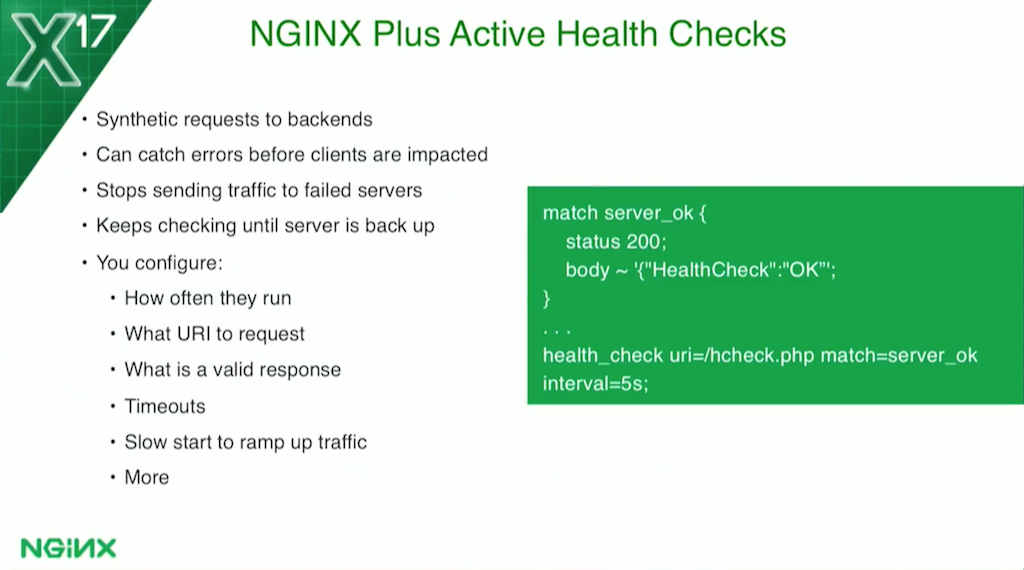

0:47 NGINX Plus Active Health Checks

NGINX Plus brings active health checks. It continually checks the backends to see if they’re healthy. NGINX Plus active health checks are actually synthetic transactions, completely separate from any actual client traffic to the backends.

They’re also very configurable. You can configure what URI to hit, how often you run them, and what a proper response status code is.

For example, if your servers need some warm‑up time when they come back to health, they may respond to the health check even though they’re still warming up caches and other things. If they then get slammed with load [because they responded successfully to a health check], they’ll fall over again. NGINX Plus health checks have a slow‑start feature where you can tell NGINX Plus to ramp the load up slowly so servers don’t get hammered when they first come back to life.

Once a health check fails, the server gets marked as down. NGINX Plus will stop sending any actual traffic to it, but it’ll keep checking, and it won’t send traffic to it again until it has actually verified that the server is up.

1:37 Preventing Server Overload

For the use cases today, we’re looking at services running in a container. You have a service where you’re very concerned about CPU utilization, or maybe it’s memory utilization, or maybe you have some really heavy requests and your backend can only handle so many requests at a time. These are the three use cases I’m going to talk about today.

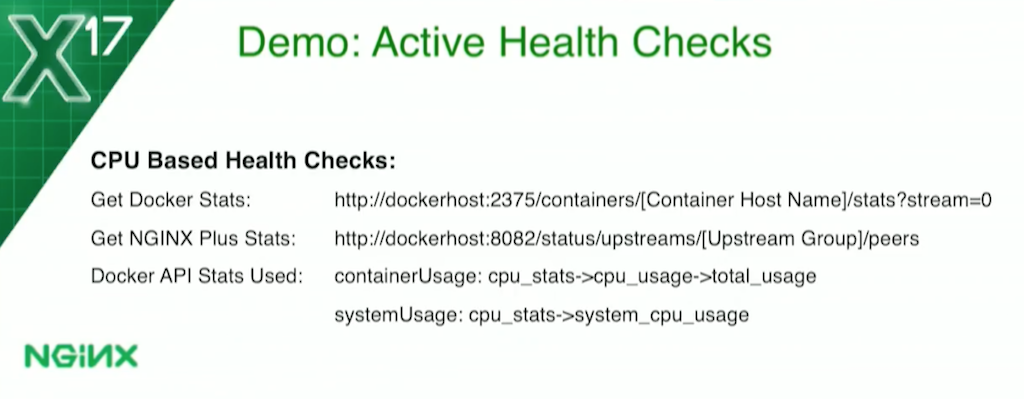

When it comes to getting system stats in a Docker container, you’ll find that you can’t really get them very easily from the container itself, so you use the Docker API. I’m using that for both my CPU‑based and memory‑based health checks.

I’m also using the NGINX Plus status API because, when I’m looking at the CPU utilization, I actually need to know how many containers there are. I’ll talk about that in a little more detail in a minute.

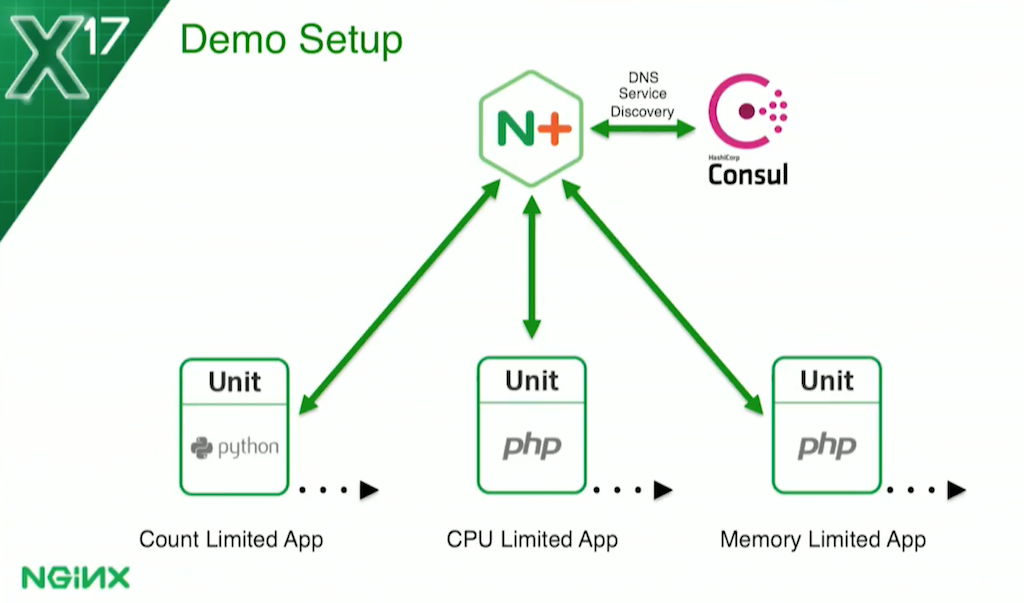

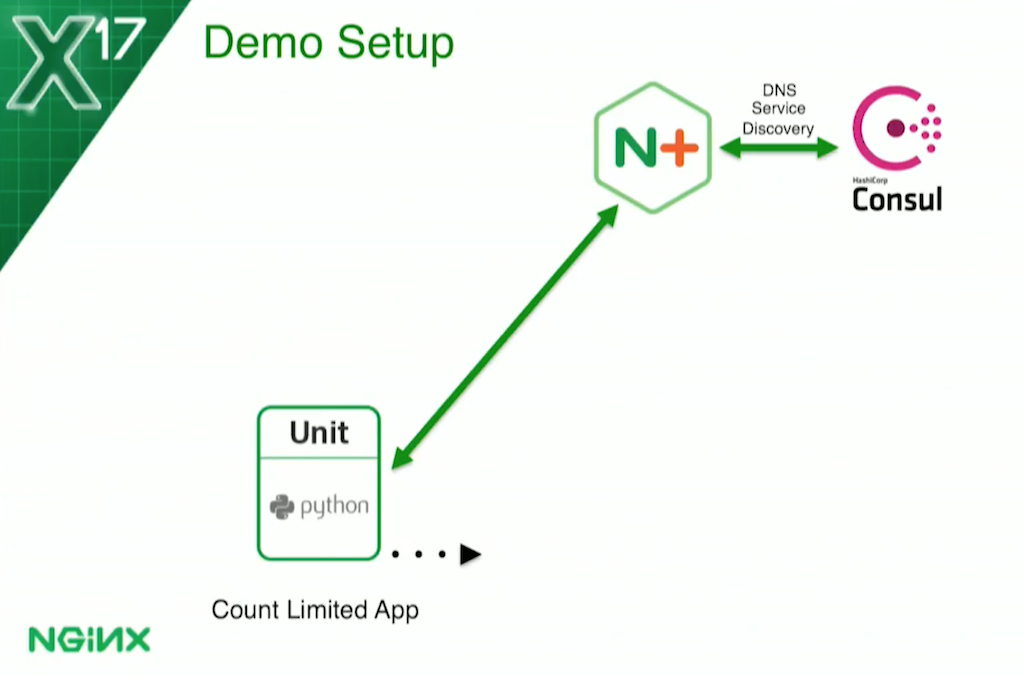

2:27 Base Topology for Demo

I’ll be showing you a demo in a few minutes.

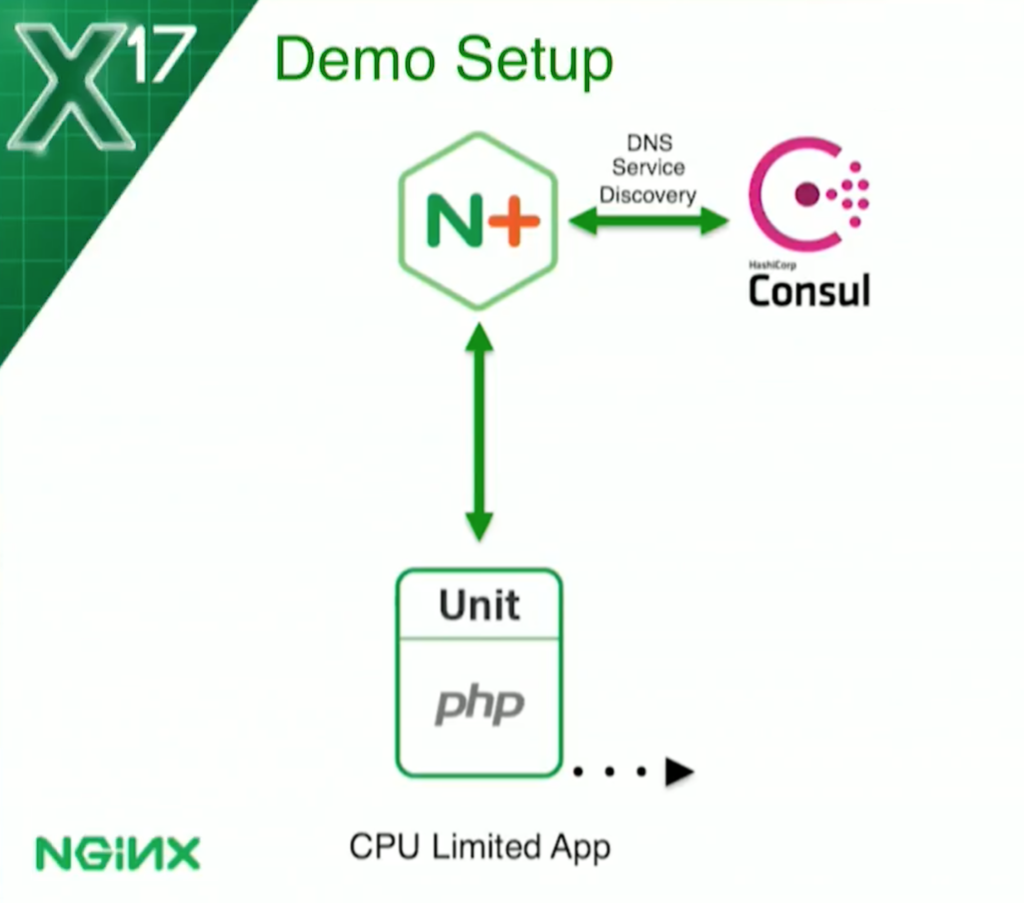

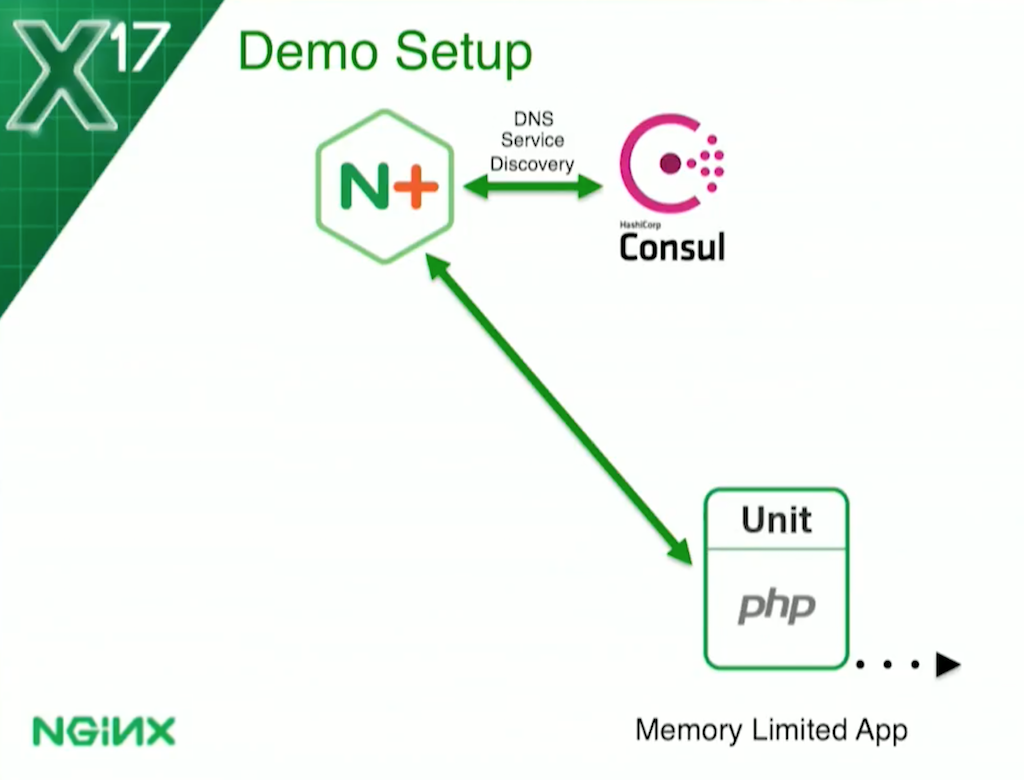

This is the basic config. I’ve got NGINX Plus at the frontend, load balancing three sets of upstreams. They’re all running NGINX Unit, which you’ve been hearing about over the last couple of days.

For the count‑based health check – the one limited by how many requests can be processed at a time – the app is written in Python. The other two, for my CPU and memory utilization checks, are written in PHP. They’re all running NGINX Unit.

For service discovery – so NGINX Plus knows how many containers to load balance – I’m using Consul. At an earlier presentation today, Cassiano Aquino from Zendesk was talking about Consul. It’s a very common thing to integrate Consul and NGINX, and especially NGINX Plus, when you’re doing service discovery.

3:08 Topology for the Three Types of Health Check

For the count‑based health check, the service is so heavyweight that it can only handle one request at a time. I have to come up with a solution so that, basically, when the service is processing a request, it’s considered unhealthy. That will cause NGINX Plus to stop sending any requests to it. When it’s done, it becomes healthy again and NGINX Plus brings it back into the load‑balancing rotation.

For the CPU‑based health check, I’ve set a threshold: the application as a whole is allowed to use up to 70% of the Docker host’s CPU capacity.

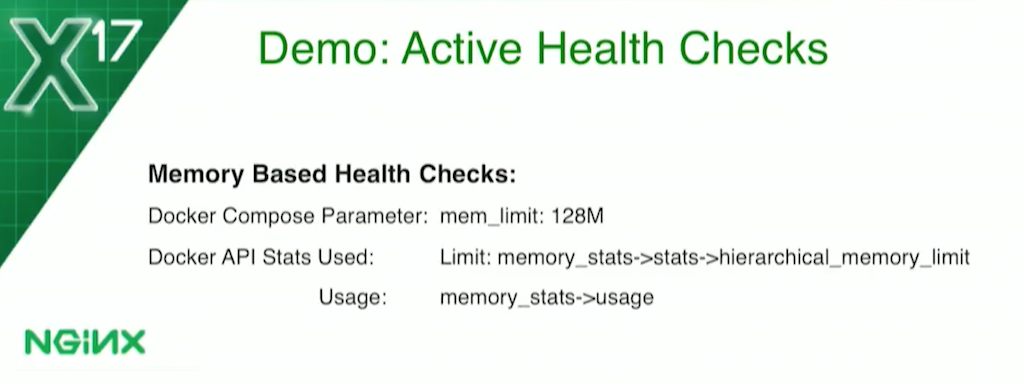

For the memory‑based health check, I’m actually limiting each container to 128 MB. Then I tell the health check that if the container is using more than 70% of that, it is unhealthy.

3:51 Expected Responses to Health Checks

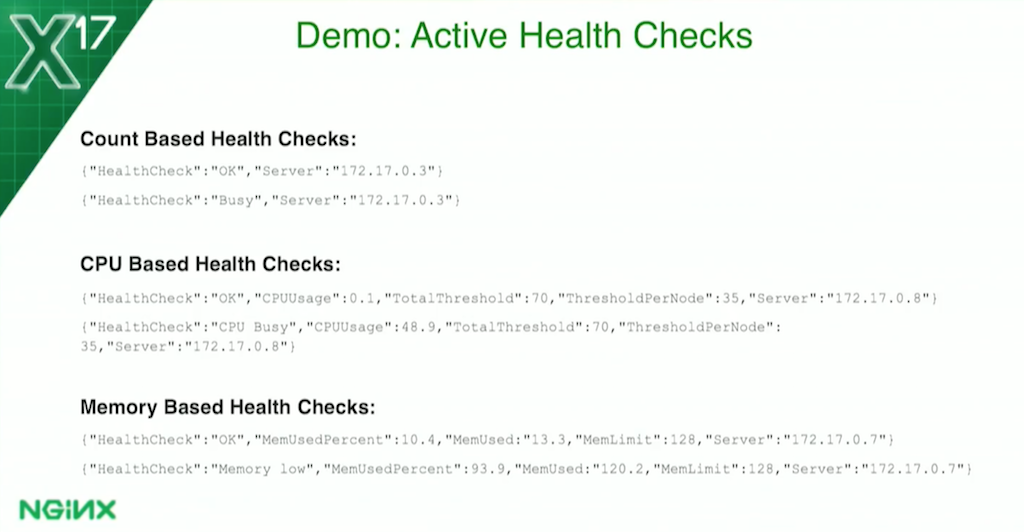

A little more detail: all three types of health check return JSON data. They return "HealthCheck":"OK" if they’re successful. If they’re not successful:

- The count‑based health check says "HealthCheck":"Busy"

- The CPU‑based health check says "HealthCheck":"CPUBusy"

- The memory‑based health check says "HealthCheck":"Memorylow"

The CPU‑based and memory‑based health checks also report the measured usage, the threshold it was compared against, and so forth. But anything that’s not "OK" is a failure. "OK" is a success.

4:22 Implementation Details

Let’s go into a little more detail. For the count‑based health check, I’m using a simple semaphore file. When a request is received, the application will create the file /tmp/busy. The health check will look for the existence of that file. If it sees it, it marks the application as unhealthy. When the application finishes processing the request, it removes the file. The health check now sees the file is not there, and the application comes back to health. It’s very straightforward, very simple.
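As a rough illustration of the semaphore idea, here is a minimal Python sketch. The function names and the `busy_file` parameter are hypothetical and not taken from the demo code; only the `/tmp/busy` path and the `HealthCheck` field come from the talk.

```python
import json
import os

# Semaphore path mentioned in the talk; everything else here is illustrative.
BUSY_FILE = "/tmp/busy"


def health_check(busy_file=BUSY_FILE):
    """Return the health check JSON: "Busy" while the semaphore file exists."""
    status = "Busy" if os.path.exists(busy_file) else "OK"
    return json.dumps({"HealthCheck": status}, separators=(",", ":"))


def handle_request(work, busy_file=BUSY_FILE):
    """Create the semaphore, run the slow work, and always remove the file."""
    open(busy_file, "w").close()
    try:
        return work()
    finally:
        os.remove(busy_file)
```

Removing the file in a `finally` clause means a request that raises an error does not leave the server permanently marked as busy.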

The CPU‑based health check is a little more complicated. I don’t know if you’ve ever run the top command in a Docker container, but you’ll find out that you’re seeing results from the Docker host. You can run the command in all five containers, and you see that they all say the same thing.

When I first went to do this [prepare the demo], I discovered that I’d never looked carefully at the results before. I said, “Oh, that’s not good.” But the Docker API does allow you to get [per‑container statistics]. The container calls the host via the Docker API, and the host can gather statistics about that container.

The statistics are about that container’s usage of the Docker host. For each container, it says, “This container is using this much of the Docker host.” I set my threshold at 70% to say, “This whole application is allowed 70% of the Docker host.”

Again, I use the Docker API to get the CPU utilization for the container, making two calls, one second apart, to gather the data. I use the NGINX Plus status API to find how many containers there are. I divide the threshold by the number of containers, which tells me how much each container can have. For example, if I have one container, it can use 70% of the host, but if I have two containers, then each one can have 35%, and so on. As the app scales by spinning up additional containers, each one is allowed a little less CPU.
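The arithmetic can be sketched in Python as follows. This is not the demo's code: the function names are hypothetical, and the formula (container CPU time delta divided by host CPU time delta) is an assumption about how usage is derived from two samples. The field names `cpu_usage.total_usage` and `system_cpu_usage` follow the `cpu_stats` object in the Docker Engine API stats response, and the `"CPU Busy"` string follows the demo output.

```python
def cpu_percent(first, second):
    """Percent of host CPU time the container used between two samples."""
    cpu_delta = (second["cpu_usage"]["total_usage"]
                 - first["cpu_usage"]["total_usage"])
    system_delta = second["system_cpu_usage"] - first["system_cpu_usage"]
    if system_delta <= 0:
        return 0.0  # samples too close together or counters reset
    return 100.0 * cpu_delta / system_delta


def per_container_threshold(total_threshold, container_count):
    """Split the application-wide threshold evenly across containers."""
    return total_threshold / max(container_count, 1)


def cpu_health(first, second, container_count, total_threshold=70):
    """Build a health check payload shaped like the one shown in the demo."""
    usage = cpu_percent(first, second)
    limit = per_container_threshold(total_threshold, container_count)
    return {
        "HealthCheck": "OK" if usage <= limit else "CPU Busy",
        "CPUUsage": round(usage, 1),
        "TotalThreshold": total_threshold,
        "ThresholdPerNode": limit,
    }
```

With a 70% total threshold, a container at 60% passes when it is the only one (limit 70) and fails when there are two (limit 35 each).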

For the memory‑based health check, it’s a little bit different. I’m actually limiting it – because Docker makes this easy – to 128 MB. Again, I get the data from the Docker status API, to tell me how much memory it’s using. And again, I chose 70% as my threshold. If the usage goes above 70%, it marks it unhealthy; when it goes below 70%, it becomes healthy again.
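A similar hedged sketch for the memory check, assuming the usage and limit are available in bytes (the Docker stats response exposes them as `memory_stats.usage` and `memory_stats.limit`). The function name is hypothetical, the output keys mirror the fields visible in the demo output, and the `"Memory low"` string follows that output.

```python
MIB = 1024 * 1024


def memory_health(used_bytes, limit_bytes, threshold_percent=70):
    """Mark the container unhealthy when usage exceeds the threshold."""
    used_percent = 100.0 * used_bytes / limit_bytes
    return {
        "HealthCheck": "OK" if used_percent <= threshold_percent else "Memory low",
        "MemUsedPercent": round(used_percent, 1),
        "MemUsed": round(used_bytes / MIB, 1),
        "MemLimit": round(limit_bytes / MIB, 1),
        "Threshold": threshold_percent,
    }
```

For a 128 MB limit, 64 MB in use is 50% and passes, while 96 MB in use is 75% and fails.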

6:17 NGINX Plus Configuration for Upstream Servers, Health Checks, and Status

I’m going to briefly go through the configuration here; this will all be in [GitHub] after the conference. I’m not going to read through every line. If you know NGINX and NGINX Plus, this one is fairly straightforward and quite minimal.

You’ll notice I haven’t followed all the best practices. If you ran the configuration through NGINX Amplify, it would object to my configs and say that I’m missing server names, keepalives, and all that kind of stuff. But I’ve intentionally kept it to as few lines as possible.

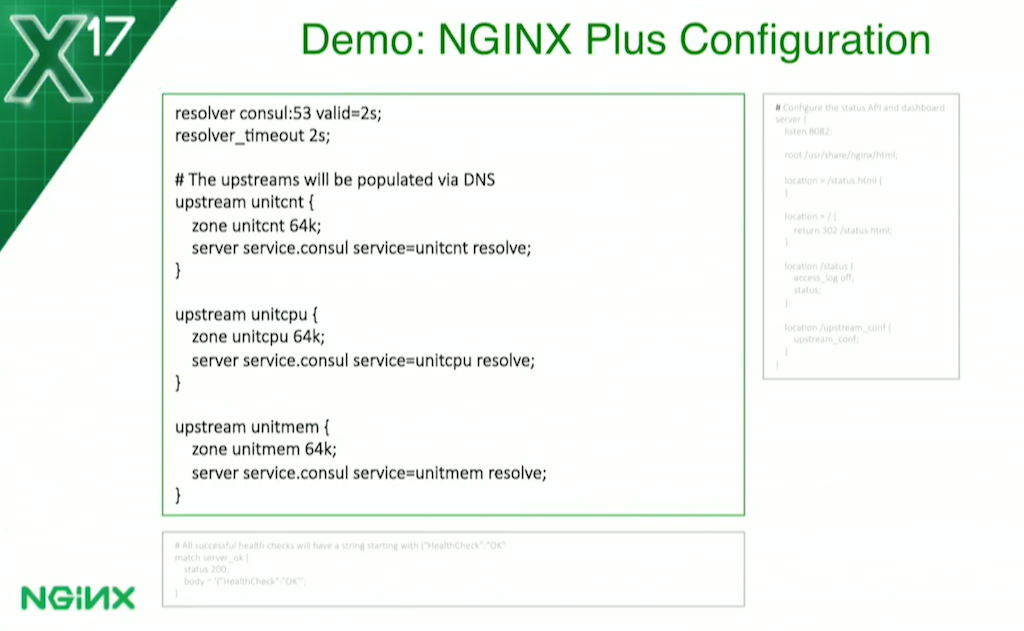

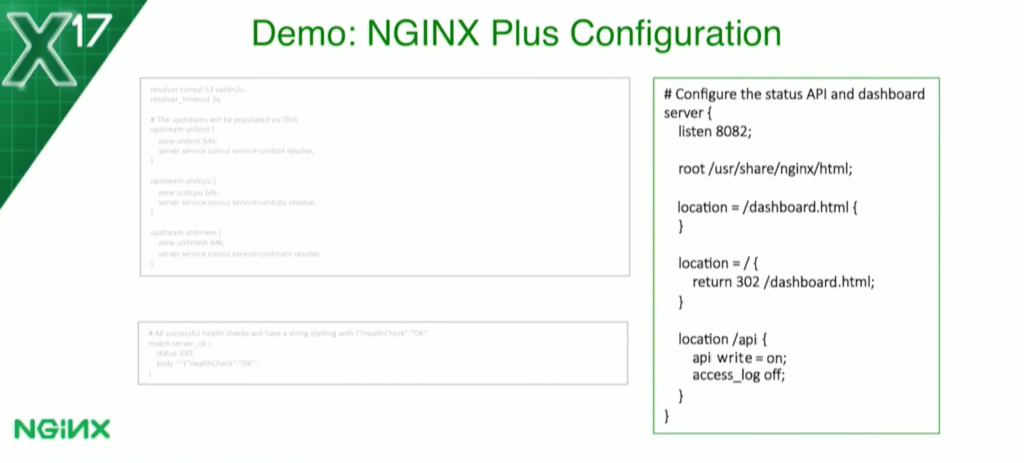

Here’s where I define my upstreams. The resolver directive at the top tells NGINX Plus to use Consul as the DNS server and dynamically re‑resolve all domain names every 2 seconds:

resolver consul:53 valid=2s;

I’m ignoring the time‑to‑live specified in the DNS response and saying to re‑resolve every 2 seconds. I could make it 1 second as well, but I thought 2 was good enough for this.

You’ll see in each upstream block I have the server directive with the service parameter. That again ties us into Consul and gives us SRV record support.

I’m using standard Docker containers, so the ports are mapped dynamically. With SRV records, DNS gives us port numbers, not just IP addresses.

The lower rectangle on the slide is a match block. This tells the NGINX Plus health check what a valid response is. I’ve told it I want status code 200 and I want to see the body starting with "HealthCheck":"OK" because, as I showed you a couple of slides ago, that’s the response for a successful health check. That’s what NGINX Plus is going to look for. If it doesn’t see that, it marks it unhealthy.
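To make the pass/fail rule concrete, here is a Python analogue of what the match block checks. It is not NGINX configuration syntax and not how NGINX Plus implements matching; the function name and the exact regular expression are assumptions for illustration.

```python
import re

# The JSON body begins with an opening brace followed by the HealthCheck field.
OK_BODY = re.compile(r'^\{"HealthCheck":"OK"')


def passes_health_check(status, body):
    """True only for a 200 response whose body starts with HealthCheck OK."""
    return status == 200 and OK_BODY.match(body) is not None
```

Any other status code, or any body reporting something other than "OK", counts as a failed check.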

[Editor – The slide above and the following text has been updated to refer to the NGINX Plus API, which replaces and deprecates the separate status module originally discussed here. As such, the text differs from the recorded presentation.]

On the right side of the slide, I’ve configured the NGINX Plus API – which, again, I’m using to get the count of the number of containers. I listen to port 8082, and requests to the API will get me the raw JSON, which is what my program is using. [Editor – The NGINX Plus API does not provide the raw JSON for the entire set of metrics at a single endpoint as the deprecated status module did; for information about the available endpoints, see the reference documentation.] Requests for dashboard.html get us the NGINX Plus live activity monitoring dashboard page, which some of you may have seen, and which I’ll show you in a minute.
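Counting containers from the API output could look roughly like the sketch below. It assumes the JSON returned for a single upstream group contains a `peers` array whose entries carry a `state` field; the function name and the sample payload in the usage note are made up, and the talk does not say whether the demo counts all peers or only healthy ones.

```python
import json


def count_peers(upstream_json, only_state=None):
    """Count the servers in one upstream group, optionally by state."""
    upstream = json.loads(upstream_json)
    peers = upstream.get("peers", [])
    if only_state is not None:
        peers = [peer for peer in peers if peer.get("state") == only_state]
    return len(peers)
```

For example, a payload with one peer in state `up` and one in state `unhealthy` gives `count_peers(payload)` of 2 and `count_peers(payload, only_state="up")` of 1.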

8:14 NGINX Plus Configuration for Virtual Servers

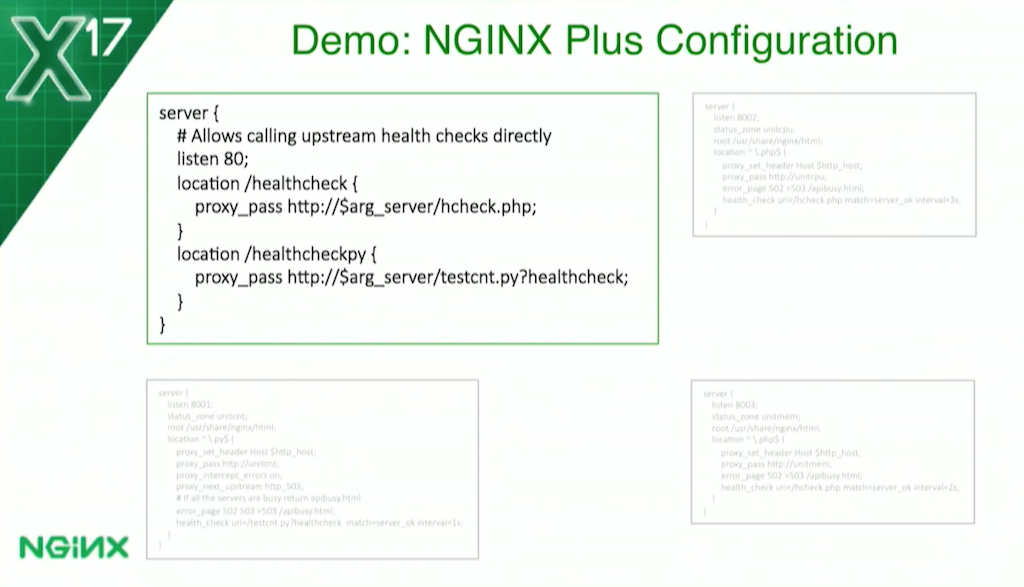

Now, for my server blocks, I’m going to go into detail here. This one in the upper left is a special one I put in just for the demo. I wanted to be able to show you an unsuccessful health check, but it turns out that with regular server blocks I can never do that, because as soon as the server fails and goes unhealthy, NGINX Plus won’t send a request to it anymore because it’s down. So I have this check going directly to the IP address and port so I can use a special URL to do that.

These other rectangles are the three applications. I’ve got my Python application [for the count‑based health check] in the lower left, and my PHP applications [for the CPU‑based and memory‑based health checks] on the right. You’ll notice that they’re all very similar, and the PHP ones are virtually identical except for the upstream group specified in the proxy_pass directives and the status_zone names.

I do have the intervals [Editor – Rick says “durations” but is referring to the interval parameter] on the health_check directives to be a bit different:

- For the count‑based health check, I have a 1‑second [interval], because I really want to get that one quickly.

- For the memory‑based health check, I have 2 seconds.

- For the CPU‑based health check, I used 3 seconds, because that one takes a bit of time: I have to make two requests to the API a second apart, and then do a calculation.

Now, in production, you might increase these to be quite a bit longer. But again, for the demo, I want things to be very quick.

The Python server block is a little bit different [from the PHP ones] because with Python and Unit, I have one Python program on each listener. (In the PHP ones – you can’t see it here, but you’ll see it in a minute during the demo – I have one program for health checks and one program as the application.) Here [with the Python server], I’m using one program, and based on the query parameter NGINX Plus knows whether to do the health check or whether to run the application.

I’ve also added the proxy_next_upstream http_503 directive [for the Python application but not the PHP ones]. With the count‑based health check, there’s a case where a request comes in and, before the 1‑second interval expires and the next health check runs, NGINX Plus sends another request to the same server because it still thinks it’s healthy.

I’ve programmed the application so that if it gets another request while it’s processing one, it returns 503. The proxy_next_upstream directive tells NGINX Plus to try another server in that case.
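A single Python program that serves both the health check and the application, and answers 503 when it is already busy, might be sketched as the WSGI app below. This is an assumption-laden illustration rather than the demo's code: `make_app`, the path-based dispatch on `/healthcheck` (modeled on the demo URLs), the 503 response body, and the use of an exclusive file create are all choices made for this sketch.

```python
import json
import os
import time
from urllib.parse import parse_qs


def make_app(busy_file="/tmp/busy"):
    """Build a WSGI callable; busy_file is the semaphore path."""

    def respond(start_response, status, payload):
        body = json.dumps(payload).encode("utf-8")
        start_response(status, [("Content-Type", "application/json"),
                                ("Content-Length", str(len(body)))])
        return [body]

    def application(environ, start_response):
        # Health check requests only look at the semaphore; they never take it.
        if environ.get("PATH_INFO", "").endswith("/healthcheck"):
            state = "Busy" if os.path.exists(busy_file) else "OK"
            return respond(start_response, "200 OK", {"HealthCheck": state})

        # O_EXCL makes creation fail if the file already exists, so a request
        # that slips in between health checks is rejected with 503.
        try:
            os.close(os.open(busy_file, os.O_CREAT | os.O_EXCL | os.O_WRONLY))
        except FileExistsError:
            return respond(start_response, "503 Service Unavailable",
                           {"Error": "Busy"})

        try:
            query = parse_qs(environ.get("QUERY_STRING", ""))
            seconds = float(query.get("sleep", ["10"])[0])
            time.sleep(seconds)  # stand-in for the heavyweight work
        finally:
            os.remove(busy_file)
        return respond(start_response, "200 OK",
                       {"Status": f"Count test complete in {seconds:g} seconds"})

    return application


application = make_app()
```

Creating the file with `O_CREAT | O_EXCL` checks for and claims the semaphore in one step, which avoids two near-simultaneous requests both believing the server is free.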

I forgot to mention that all of the server blocks have this line here:

error_page 502 =503 /apibusy.html;

If all the upstreams are busy and failing health checks, NGINX Plus returns a special page to the client, /apibusy.html. It just tells the client there’s nothing available at the requested URI.

10:18 Demo: Spinning Up Containers

Let’s get on with the demo. I do want to make a disclaimer here: these [health check types] have not been tested in production, they haven’t been tested at scale; they’re just some ideas I’ve been playing around with. If you want to use something like them, you’ll need to do a lot more testing than I have.

First, I’ll make sure I have a clean environment. I have no Docker containers here.

root@demo2:~# docker ps

CONTAINER ID IMAGE COMMAND CREATED STA...

I’m using Docker Compose for everything, so I’ll spin up some containers:

root@demo2:~# docker-compose up -d

[The resulting set of containers is] basically the picture I showed you a moment ago, and we should have a bunch of containers now, and we do.

root@demo2:~# docker ps

...output for several containers...

We should be able to see them in the NGINX Plus status dashboard [he opens the dashboard in the left half of the window].

And if we look at our upstreams, we should see three upstreams, each with one server: [the one for] count‑based [health checks], the CPU‑based, and the memory‑based.

I’m going to scale the number of instances of each upstream application to two:

root@demo2:~# docker-compose up --scale unitcnt=2 --scale unitcpu=2 --scale unitmem=2...

We can see how service discovery works with Consul. We’ll see that it’s all automatic. I just hit Return here [in the terminal], and we’ll see in a couple of seconds, they’re [the second instance of each application] going to magically appear on the NGINX Plus dashboard. It’s very quick, and it’s all automatic. I didn’t have to do anything but basically point NGINX Plus at it.

11:33 Demo: Successful Health Checks

Now let’s take a look at the different health checks. If we run a count‑based health check – port 8001 is the count‑based one – we’ll see it return the JSON I showed you earlier. It says it’s "OK".

root@demo2:~# curl https://localhost:8001/testcnt.py/healthcheck

{"HealthCheck":"OK","Host":"cdb601bf181a"}

If we run it again, we’ll see requests are getting load balanced. If you look at the two outputs, you’ll see the Host has changed. That tells us that the health check went to a different server.

root@demo2:~# curl https://localhost:8001/testcnt.py/healthcheck

{"HealthCheck":"OK","Host":"d8dd08e46dc7"}

Next I want to show you the CPU‑based health check. You’ll see that this one takes a while because I’m making an API request, I wait a second, I make another API request, and I do a calculation. But, you see, it says "OK", and you’ll see my CPU utilization is minimal here because nothing’s happening.

root@demo2:~# curl https://localhost:8002/hcheck.php

{"HealthCheck":"OK","CPUUsage":1,"TotalThreshold":70,"ThresholdPerNode":35,"Host":"...

I can also show the memory‑based health check. This one comes back a bit faster, and it tells me that I’m "OK" for the memory check as well. That’s where I wanted to be.

root@demo2:~# curl https://localhost:8003/hcheck.php

{"HealthCheck":"OK","MemUsedPercent":24.5,"MemUsed":31.4,"MemLimit":128,"Threshold"...

12:36 Demo: Failing the Count-Based Health Check

Returning to the count‑based health check, if we want to make one application instance busy, I have this program that I run. It’s going to create that semaphore file I talked about:

root@demo2:~# curl https://localhost:8001/testcnt.py/

{"Status":"Count test complete in 10 seconds","Host":"cdb601bf181a"}

On the NGINX Plus dashboard, we should see one of the servers in the unitcnt group go red pretty quickly [the top one does]. By default, the program keeps the server busy for 10 seconds, so in 10 seconds we’ll see it come back to life.

I had that special URL so I can see these things directly [he’s referring to the first server block he talked about, for seeing a failed health check]. We can pass in the server name for any one of the application servers, so I’ll do the first one listed on the dashboard. [He initially mistypes the URL, resulting in a 404 error. He then corrects the URL with the following result.]

root@demo2:~# curl https://localhost/healthcheckpy?server=172.17.0.1:32804

{"HealthCheck":"OK","Host":"cdb601bf181a"}

There we go. This case is "OK", so I can actually hit it either way.

I’m going to show what happens when I make them [the servers in the unitcnt upstream group] both busy. I run the following command twice and we should see one server go busy, and then the second one’s going to go busy [on the dashboard, the two servers in the unitcnt group turn red].

root@demo2:~# curl https://localhost:8001/testcnt.py?sleep=15&

root@demo2:~# curl https://localhost:8001/testcnt.py?sleep=15&

{"HealthCheck":"OK","Host":"cdb601bf181a"}

If I try again, we’re going to get that special page I talked about, which basically shows that they’re all busy.

root@demo2:~# curl https://localhost:8001/testcnt.py

{"Error":"System busy"}

And I can also run this and see that I get "Busy".

root@demo2:~# curl https://localhost/healthcheckpy?server=172.17.0.1:32804

{"HealthCheck":"Busy","Host":"cdb601bf181a"}

That’s what a failed health check looks like on the count‑based health check, because they’re both busy.

14:22 Demo: Failing the CPU-Based Health Check

Now, I’m going to move on to the CPU‑based health checks. For that, I can actually scale [the number of application servers] back down to one to show you the difference, and what happens as we scale up and down.

root@demo2:~# docker-compose up --scale unitcnt=2 --scale unitcpu=1 --scale unitmem=2 -d

rbusyhealthchecksunit_unitmem_1 is up-to-date

rbusyhealthchecksunit_unitmem_2 is up-to-date

rbusyhealthchecksunit_unitcnt_1 is up-to-date

rbusyhealthchecksunit_unitmem_2 is up-to-date

Stopping and removing rbusyhealthchecksunit_unitcpu_2...

consul is up-to-date

bhc-nginxplus is up-to-date

Stopping and removing rbusyhealthchecksunit_unitcpu_2... done

Starting rbusyhealthchecksunit_unitcpu_1... done

Scaling down with Docker Compose takes a few seconds longer, but we should get it down there in a second. There it goes [on the dashboard, the number of servers in the unitcpu group changes from two to one].

For this, I want to run docker stats so we can see the CPU utilization on these guys.

root@demo2:~# docker stats

CONTAINER CPU % MEM USAGE / LIMIT MEM % N...

d8dd08e46dc7 0.03% 6.395Mib / 1.981GiB 0.32% 9...

...output for 7 more containers...

Right now, I have the threshold set at 70%. I only have one container, so that container can use up to 70% of the CPU without causing a problem.

If we run this command, it should take up 50 to 60% of the CPU.

root@demo2:~# curl https://localhost:8002/testcpu.php

There, you see in the output from docker stats, the CPU utilization for one of the containers goes to 60%:

CONTAINER CPU % MEM USAGE / LIMIT MEM % N...

...output for the first 6 containers...

01957a12aa85 60.76% 12.06Mib / 1.981GiB 0.59% 1...

...output for the last container...

The health check is still going to succeed. It’s going to run again every three seconds, but usage by one server will never hit 70%.

Now, let’s scale [the number of servers in the unitcpu group] back up to two again. [This means that each of the two servers is allowed half of the 70% CPU limit, or 35% each.]

root@demo2:~# docker-compose up --scale unitcnt=2 --scale unitcpu=2 --scale unitmem=2...

...

Creating rbusyhealthchecksunit_unitcpu_2... done

If we run that same program again (I’m going to run it for a little longer this time):

root@demo2:~# curl https://localhost:8002/testcpu.php?timeout=45&

We’re going to see CPU utilization for one container go up again to 60% or so:

CONTAINER CPU % MEM USAGE / LIMIT MEM % N...

...output for the first 7 containers...

3dfb91e421ea 59.55% 11.93Mib / 1.981GiB 0.59% 1...

But now the health check is going to fail. There it goes [on the dashboard, one of the servers in the unitcpu group goes red]. That’s because that container is over the 35% that’s available for each one.

And we can go ahead and run another test that consumes less CPU – 10% to 15% – and it’s going to run just fine.

root@demo2:~# curl https://localhost:8002/testcpu.php?level=2

[While the one server in the unitcpu group is marked unhealthy], we can also see a failed health check by running the health check against it [it’s on port 32808]. This one should come back with "HealthCheck":"CPU Busy", which it does, and that’s why the health check is failing.

root@demo2:~# curl https://localhost/healthcheck?server=172.17.0.1:32808

{"HealthCheck":"CPU Busy","CPUUsage":51.5,"TotalThreshold":70,"ThresholdPerNode":35...

It’s blocking the server that’s using too much CPU and letting requests go through to the one that’s using less.

16:36 Demo: Failing the Memory-Based Health Check

Finally, we have our memory‑based health check:

root@demo2:~# curl https://localhost:8003/testmem.php?sleep-15

For this case, notice the second one from the top [in the output from docker stats] – it’s the one limited to 128 MB, which is how I can identify it. We’re going to see its memory usage shoot up – pretty much almost all of the memory – and that one’s going to go unhealthy as well.

CONTAINER CPU % MEM USAGE / LIMIT MEM % N...

...output for the first container...

2a582a95f032 0.5% 119.1Mib / 128MiB 93.05% 2...

We can see that one say "HealthCheck":"Memory low" as the test finishes.

root@demo2:~# curl https://localhost/healthcheck?server=172.17.0.1:32805

{"HealthCheck":"Memory low","MemUsedPercent":99.9,"MemUsed":127.9,"MemLimit":128,"T...

But in a few seconds the test is going to finish, and the server should go green on the dashboard. When the test finishes, if we send the health check again, we see that it says "OK".

root@demo2:~# curl https://localhost/healthcheck?server=172.17.0.1:32805

{"HealthCheck":"OK","MemUsedPercent":32.1,"MemUsed":41.1,"MemLimit":128,"Threshold"...

That’s it for the demo. I hope that was interesting. Again, these are some ideas I’ve been playing around with. If anybody has any questions, please let me know. If not, then have a great day. If anybody wants to come up afterward, I’ll be here.