This blog post is adapted from a presentation by Valentin V. Bartenev at nginx.conf 2015, held in San Francisco in September.

Table of Contents

| Time | Section |
| --- | --- |
| 0:20 | Overview of the Protocol |
| 1:40 | Key Features of HTTP/2 |
| 3:08 | HTTP/2 Inside – Binary |
| 4:26 | HTTP/2 Inside – Multiplexing |
| 7:09 | Key Features of HTTP/2 – Header Compression |
| 8:40 | Key Features of HTTP/2 – Prioritization |
| 10:06 | History of HTTP/2 |
| 10:16 | Test Page |
| 10:52 | Test Environment |
| 11:02 | DOM Load |
| 11:48 | First Painting |
| 12:45 | Configuration |
| 14:20 | Q&A |

A couple of days ago, we released NGINX’s HTTP/2 module. In this talk, I’m going to give you a short overview of the new module.

0:20 Overview of the Protocol

First of all, I want to debunk some myths around the new protocol.

Many people think of HTTP/2 as a shiny, superior successor to HTTP/1. I do not share this opinion, and here’s why. HTTP/2 is actually just another transport layer for HTTP/1, which isn’t bad because as a result, you can use HTTP/2 without having to change your application – it works with the same headers. You can just switch on HTTP/2 in NGINX and NGINX will gently handle all the protocol stuff for you.

But HTTP/2 isn’t magic. It does have its advantages and disadvantages. There are use cases where it behaves well and also scenarios where it behaves poorly.

1:40 Key Features of HTTP/2

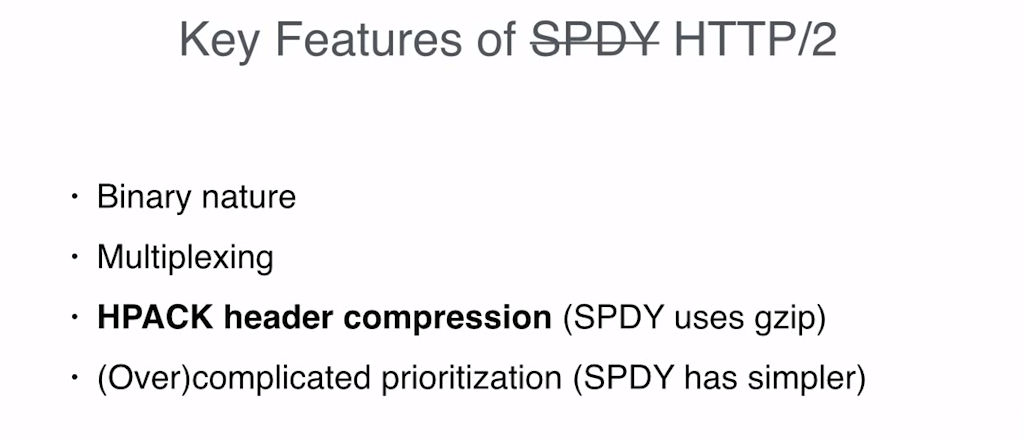

You can think of HTTP/2 as a new version of SPDY because it was based on SPDY and is a very similar protocol. SPDY is a protocol that Google developed a couple of years ago to accelerate web content delivery.

NGINX has supported SPDY for two years already, so you can check out the advantages of HTTP/2 by using the SPDY module. Some people would say HTTP/2 is just a polished version of SPDY 3.1.

If you are not familiar with SPDY, I’m going to go over some key points. These key points are true for HTTP/2 as well, because the two are basically the same protocol with some differences in the details.

The first key point is that the protocol itself is binary. I like binary protocols – they are easier to code and good binary protocols have some performance advantages. However, I also understand the drawbacks of this approach.

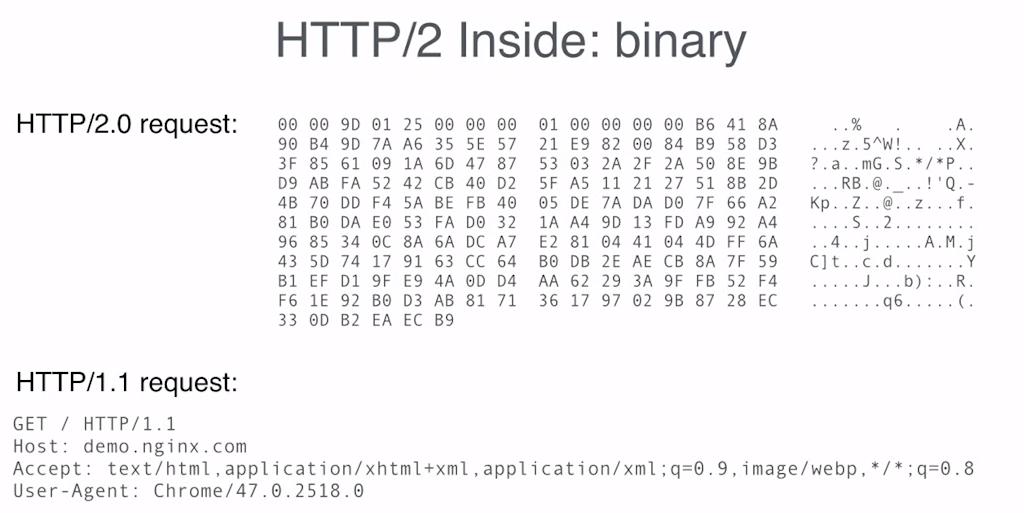

3:08 HTTP/2 Inside – Binary

Here is an HTTP/2 request. It looks pretty cool, and as you can see, it’s very easy to debug. No, I’m just kidding. It’s hard to debug. And that’s one of the disadvantages of binary protocols.

You may have to use a tool to decode the request or… well, sometimes, you may need to debug the binary manually because the tool can be broken, or the tool may not show you all the details hidden in the bits.

Fortunately, most of the time you can just throw HTTP/2 in NGINX and never deal with its binary nature. And fortunately, most of the requests will be handled by machines. Machines are much better at reading binary protocols than humans.

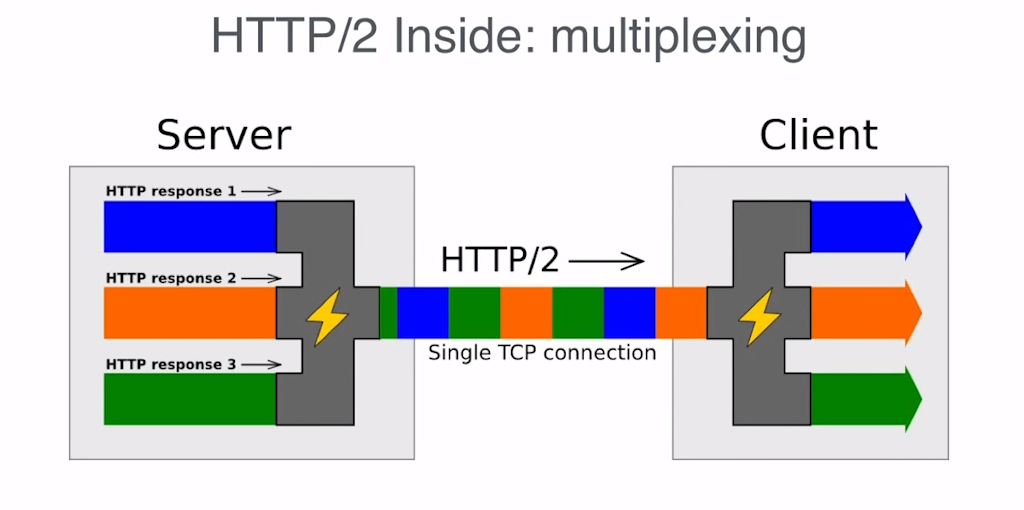

4:26 HTTP/2 Inside – Multiplexing

The next key point of HTTP/2 is multiplexing. Instead of sending and receiving responses and requests as separate streams of data over multiple connections, HTTP/2 multiplexes them over a single stream of bytes on one connection. It slices the data for different requests and responses, and each slice carries its own identifier and size field so that the endpoint can determine which data belongs to which request.
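To make the slicing concrete, here is a minimal Python sketch of how slices from two responses can share one byte stream and be separated again. It assumes the 9-byte frame header layout from the HTTP/2 specification (24-bit length, 8-bit type, 8-bit flags, 31-bit stream identifier). The function names, stream numbers, and payloads are made up for illustration, and this is not how NGINX implements it.

```python
import struct

FRAME_HEADER_LEN = 9  # fixed size of an HTTP/2 frame header
DATA = 0x0            # frame type code for DATA frames


def pack_frame(stream_id: int, payload: bytes, frame_type: int = DATA, flags: int = 0) -> bytes:
    """Prefix a payload with length (24 bits), type, flags, and stream id (31 bits)."""
    length = len(payload)
    header = struct.pack(">BHBBI", length >> 16, length & 0xFFFF,
                         frame_type, flags, stream_id & 0x7FFFFFFF)
    return header + payload


def split_frames(wire: bytes):
    """Yield (stream_id, payload) for each frame in a stream of concatenated frames."""
    offset = 0
    while offset < len(wire):
        high, low, _type, _flags, sid = struct.unpack_from(">BHBBI", wire, offset)
        length = (high << 16) | low
        offset += FRAME_HEADER_LEN
        yield sid & 0x7FFFFFFF, wire[offset:offset + length]
        offset += length


def demultiplex(wire: bytes) -> dict:
    """Reassemble each stream's data from the interleaved frames."""
    streams = {}
    for sid, chunk in split_frames(wire):
        streams[sid] = streams.get(sid, b"") + chunk
    return streams


# Two responses sliced and interleaved on one connection (hypothetical streams 1 and 3).
wire = (pack_frame(1, b"<html>") + pack_frame(3, b"body{") +
        pack_frame(1, b"</html>") + pack_frame(3, b"}"))
streams = demultiplex(wire)
```

The receiver never needs a second connection: the stream identifier in each header is enough to route every slice to the right request.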

The disadvantage here is that since each chunk of data has its own identification and its own fields, some metadata is transferred in addition to the actual data. So, it has some overhead. As a result, if you have just one stream of data, for example if you are watching a movie, then HTTP/2 isn’t a good protocol because all you will get is additional overhead.
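As a rough illustration of that overhead, the sketch below counts the header bytes added when a body is cut into frames. It assumes the 9-byte frame header from the HTTP/2 specification and counts nothing else (no HEADERS, SETTINGS, or flow-control frames). The function name and the sizes are chosen only for the example.

```python
import math

FRAME_HEADER_LEN = 9  # bytes of metadata in front of every frame


def framing_overhead(body_bytes: int, frame_payload: int) -> int:
    """Return the extra header bytes needed to send body_bytes in frames of frame_payload bytes."""
    frames = math.ceil(body_bytes / frame_payload)
    return frames * FRAME_HEADER_LEN


# The same 1,000,000-byte body with large and with small frames.
large_frames = framing_overhead(1_000_000, 16_384)
small_frames = framing_overhead(1_000_000, 1_024)
```

The smaller the slices, the more metadata is sent for the same body, and a single-stream download gets nothing in return for it.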

What are the benefits of multiplexing? The main benefit of multiplexing is that by using only a single connection you can save all the time you would spend with HTTP/1.x on handshaking when you need to create a new request.

Such delays are especially painful when you deal with TLS. That is why most clients now support HTTP/2 only over TLS. As far as I know, there are no plans to support HTTP/2 over plain TCP because there is not much benefit to it. This is because TCP handshakes aren’t as expensive as TLS handshakes so you don’t save much here by avoiding multiple connections.
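A back-of-the-envelope sketch of that difference follows. It assumes a TCP handshake costs one round trip before data can be sent and a full TLS 1.2 handshake adds two more, with no session resumption or False Start. These round-trip counts are assumptions for illustration, not measurements from the talk, and the function name is hypothetical.

```python
TCP_HANDSHAKE_RTTS = 1  # assumed: one round trip before the first request can be sent
TLS_HANDSHAKE_RTTS = 2  # assumed: full TLS 1.2 handshake without resumption


def setup_delay_ms(rtt_ms: float, tls: bool) -> float:
    """Estimate the delay a new connection adds before the first request goes out."""
    rtts = TCP_HANDSHAKE_RTTS + (TLS_HANDSHAKE_RTTS if tls else 0)
    return rtts * rtt_ms


plain = setup_delay_ms(100, tls=False)   # new plain TCP connection at 100 ms RTT
secure = setup_delay_ms(100, tls=True)   # new TLS connection at 100 ms RTT
```

Under these assumptions, every additional TLS connection costs three times as much setup time as a plain TCP one, which is the delay a single multiplexed connection avoids.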

7:09 Key Features of HTTP/2 – Header Compression

The next key point about HTTP/2 is its header compression. If you have very big cookies, this can save you hundreds of bytes per request or response, but most of the time you won’t benefit much from header compression. Even if you think about separate requests, eventually you deal with a packet layer on the network, and it doesn’t matter much whether you send a hundred bytes or a hundred and fifty bytes, because either way it still results in one packet.
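The packet argument can be checked with simple arithmetic. The sketch below assumes a TCP payload limit of 1460 bytes per packet (a 1500-byte Ethernet MTU minus 40 bytes of IPv4 and TCP headers). That figure is an assumption and varies by network.

```python
import math

ASSUMED_MSS = 1460  # assumed TCP payload bytes per packet on a typical Ethernet path


def packets_needed(request_bytes: int, mss: int = ASSUMED_MSS) -> int:
    """Return how many packets a request of the given size occupies."""
    return max(1, math.ceil(request_bytes / mss))


compressed = packets_needed(100)    # headers after compression
uncompressed = packets_needed(150)  # headers without compression
big_cookies = packets_needed(2000)  # a request with very large cookies
```

Both the 100-byte and the 150-byte request fit in one packet, so compression only changes the packet count once the headers are large enough to spill into a second one.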

The drawback of HTTP/2 header compression is that it’s stateful [Editor – For the tables used to store compression/decompression information]. For each connection, servers and clients must keep this state, so each HTTP/2 connection uses much more memory than an HTTP/1 connection.
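The toy sketch below shows why such compression needs per-connection state. It is deliberately not the real HTTP/2 header compression format: there is no static table, no Huffman coding, and no eviction, and the class and header values are invented for the example. It only shows that both peers must hold a table for as long as the connection lives.

```python
class ToyHeaderCodec:
    """One peer's compression state for one connection (toy indexed table only)."""

    def __init__(self):
        self.table = []  # memory that must be kept for the lifetime of the connection

    def encode(self, headers):
        """Replace header fields already seen on this connection with a table index."""
        out = []
        for field in headers:
            if field in self.table:
                out.append(("idx", self.table.index(field)))
            else:
                self.table.append(field)
                out.append(("lit", field))
        return out

    def decode(self, encoded):
        """Rebuild the header list, updating the table exactly as the encoder did."""
        headers = []
        for kind, value in encoded:
            if kind == "lit":
                self.table.append(value)
                headers.append(value)
            else:
                headers.append(self.table[value])
        return headers


client, server = ToyHeaderCodec(), ToyHeaderCodec()
request = [("cookie", "session=abc123"), ("user-agent", "demo")]
first = client.encode(request)   # sent as literals, both tables grow
second = client.encode(request)  # sent as small indices only
decoded_first = server.decode(first)
decoded_second = server.decode(second)
```

The second request shrinks to two indices, but only because the server kept the table built while decoding the first one.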

8:40 Key Features of HTTP/2 – Prioritization

The last key point about HTTP/2 is its prioritization mechanics. This solves the problem that was introduced by multiplexing. When you have only one connection, you have only one pipe, and you should carefully decide which response you are going to put in this pipe next.

Prioritization is optional in HTTP/2, but without it you won’t get much benefit in performance. The HTTP/2 module in NGINX fully supports prioritization, both priority based on weights and priority based on dependencies. From what we have seen so far, we have the fastest implementation of HTTP/2 at the moment. Those are the key points about HTTP/2.
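As a minimal sketch of weight-based priority, the code below splits the capacity of the single pipe among streams in proportion to their weights (HTTP/2 weights range from 1 to 256). It ignores dependencies entirely and is not NGINX's scheduler. The function name, stream numbers, and weights are illustrative assumptions.

```python
def share_bandwidth(weights: dict, budget: int) -> dict:
    """Split a byte budget among streams in proportion to their weights."""
    for weight in weights.values():
        if not 1 <= weight <= 256:
            raise ValueError("HTTP/2 stream weights must be between 1 and 256")
    total = sum(weights.values())
    return {stream_id: budget * weight / total for stream_id, weight in weights.items()}


# Hypothetical page: the stylesheet (stream 1) matters twice as much as two images.
shares = share_bandwidth({1: 200, 3: 100, 5: 100}, budget=16_000)
```

With these weights the stylesheet receives half of the next 16,000 bytes and each image a quarter, so the response that unblocks rendering is not starved by the others.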

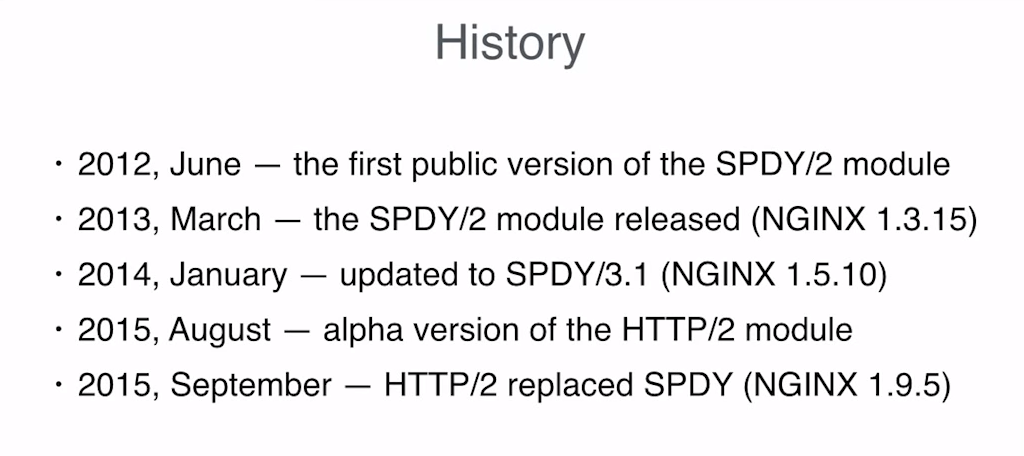

10:06 History of HTTP/2

Here’s a simple slide that goes through the history of HTTP/2. Fairly straightforward. Let’s go on to how HTTP/2 works in practice.

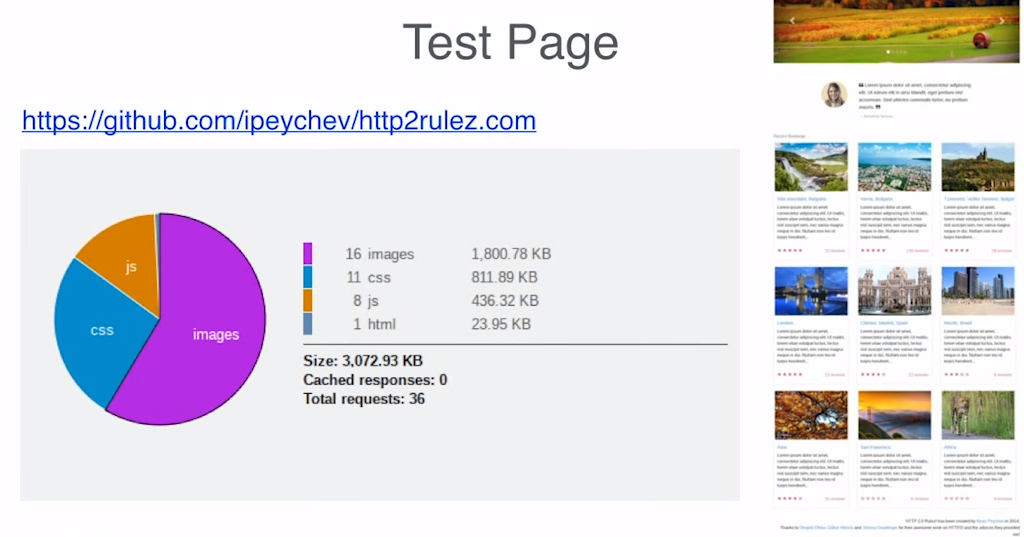

10:16 Test Page

In order to understand how HTTP/2 works under different network conditions, I’ve done some benchmarks on a typical webpage. This is just an HTTP/2 page that I found on GitHub. You can see how many resources it has and you can see how large each resource is. I think it’s fairly representative of a typical site.

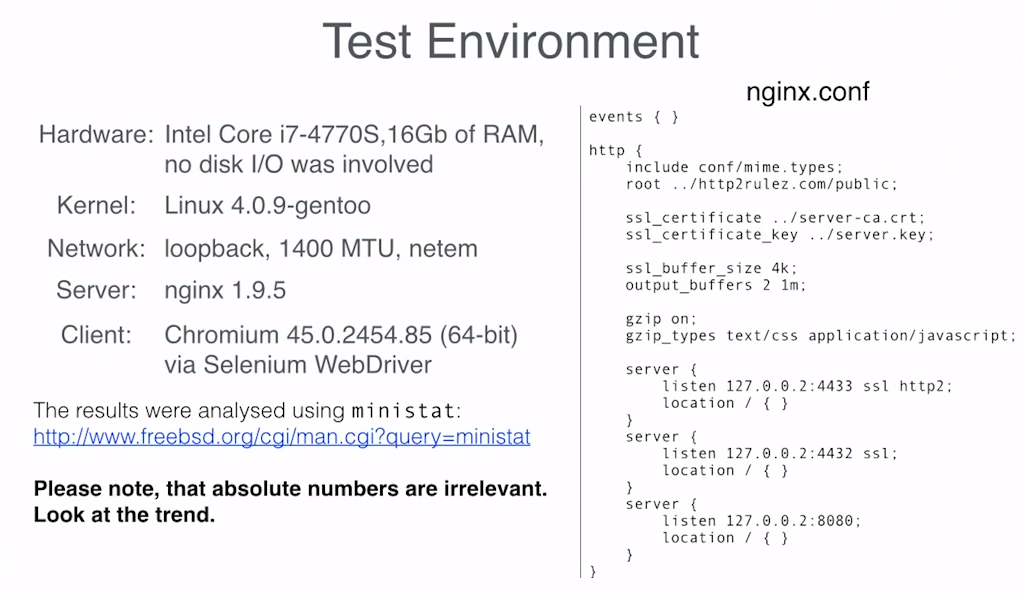

10:52 Test Environment

Here’s my test environment, in case you want to reproduce the test.

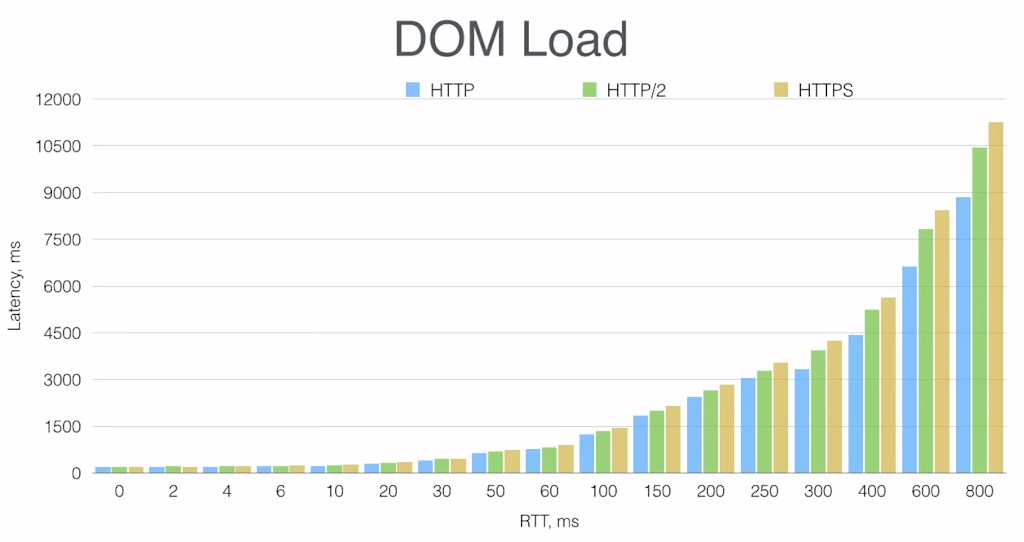

11:02 DOM Load

And here’s the result that I got. The X‑axis shows the network latencies I simulated, as round‑trip times (RTT) in milliseconds, and the Y‑axis shows the download time I measured, also in milliseconds. This test measures page load time as the point when all the resources of the page are fully loaded.

From the graph, you can see an obvious trend that for low latencies, there’s no significant difference between HTTP, HTTPS, and HTTP/2. For higher latencies, you can see that plain HTTP/1.x is the fastest choice. HTTP/2 comes second and HTTPS is the slowest one.

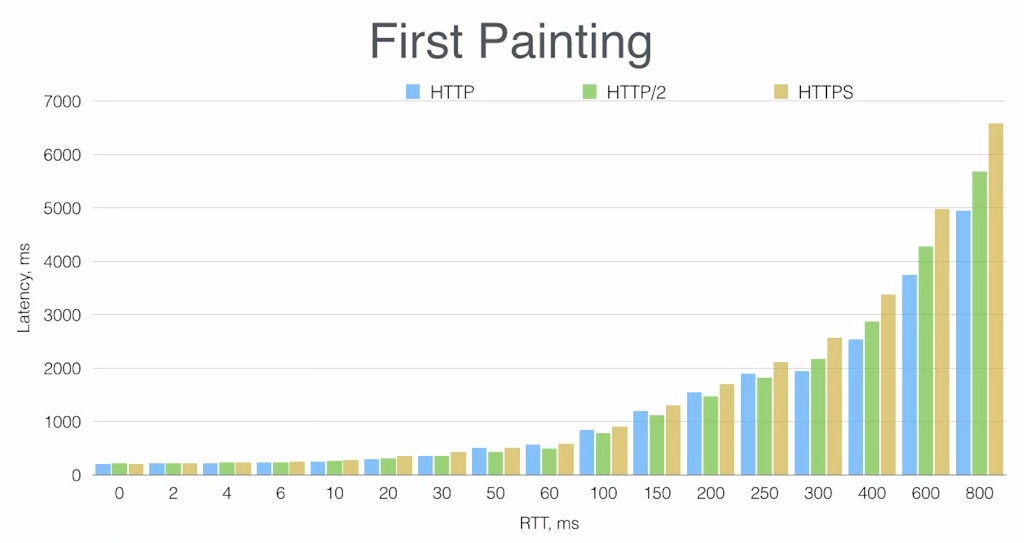

11:48 First Painting

What about “first painting” time? In many cases, it’s the most significant metric from the user’s perspective because this is when they actually see something on their screen. In many cases, it doesn’t matter as much how long it takes for the page to be loaded fully but it matters a lot how long it takes for the user to get to see something.

Here we see the same trend, but the interesting part here in our test is that for latencies in the middle of the range, from 30ms to 250ms, HTTP/2 is a bit faster than plain HTTP, though the difference is very small.

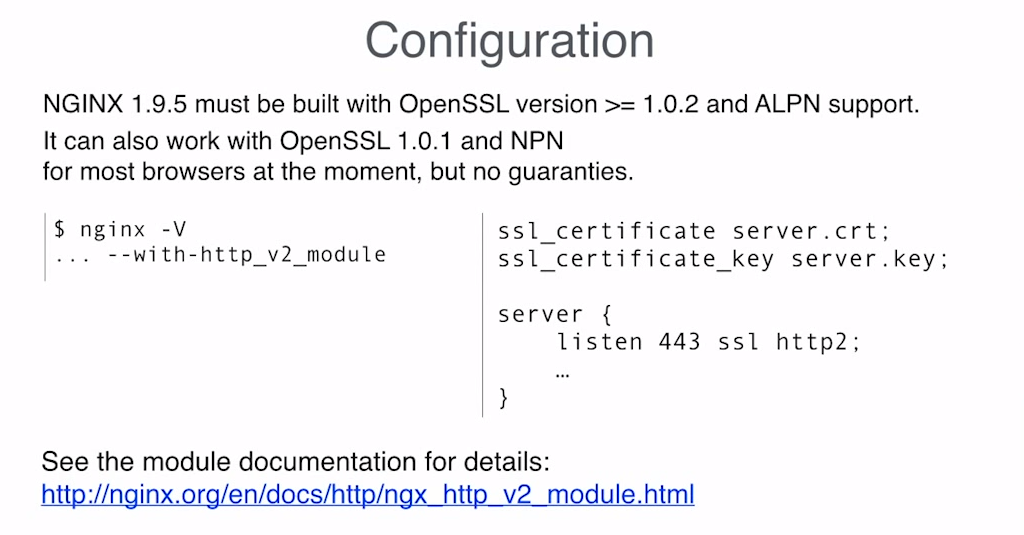

12:45 Configuration

So, those were our benchmarks. Now let’s talk about how to configure HTTP/2 in NGINX.

It’s actually very simple. All you need to do is add the http2 parameter to the listen directive. Probably the most complicated step is getting a recent enough version of OpenSSL, because HTTP/2 requires the ALPN [Application‑Layer Protocol Negotiation] extension, which is supported only in OpenSSL 1.0.2 and later.
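One quick way to see whether an OpenSSL build offers ALPN is to ask a program linked against it. The Python sketch below reports the OpenSSL version and ALPN availability for the library that Python itself uses, which is not necessarily the one your NGINX binary was built with, so treat the result as a hint only. The helper name is hypothetical, and "h2" is the ALPN identifier for HTTP/2 over TLS.

```python
import ssl


def alpn_client_context():
    """Return a TLS client context that offers HTTP/2 via ALPN, or None if ALPN is unavailable."""
    if not ssl.HAS_ALPN:
        return None
    context = ssl.create_default_context()
    # Offer HTTP/2 first and fall back to HTTP/1.1 if the server does not select it.
    context.set_alpn_protocols(["h2", "http/1.1"])
    return context


print("OpenSSL version:", ssl.OPENSSL_VERSION)
print("ALPN available:", ssl.HAS_ALPN)
context = alpn_client_context()
```

After a connection is made with such a context, the `selected_alpn_protocol()` method of the resulting socket reports which protocol the server chose.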

We have also implemented NPN [Next Protocol Negotiation] for HTTP/2, which works with most clients at the moment, but those clients are going to remove support for NPN when SPDY is deprecated in early 2016. That means you can use HTTP/2 with the OpenSSL version that ships with many Linux distributions at the moment, but keep in mind that it will stop working in the near future.

So, that’s all about configuring NGINX for HTTP/2 and that wraps up my presentation. Thank you.

[Reference documentation for HTTP/2 module]

Q&A

Q: Will you support HTTP/2 on the upstream side as well, or only support HTTP/2 on the client side?

A: At the moment, we only support HTTP/2 on the client side. You can’t configure HTTP/2 with proxy_pass. [Editor – In the original version of this post, this sentence was incorrectly transcribed as “You can configure HTTP/2 with proxy_pass.” We apologize for any confusion this may have caused.]

But what is the point of HTTP/2 on the backend side? As you can see from the benchmarks, there’s not much benefit in HTTP/2 for low‑latency networks such as upstream connections.

Also, in NGINX you have the keepalive module, and you can configure a keepalive cache. The main performance benefit of HTTP/2 is to eliminate additional handshakes, but if you do that already with a keepalive cache, you don’t need HTTP/2 on the upstream side.

More HTTP/2 Resources

- HTTP/2 for Web Application Developers – White paper (PDF) covering everything you need to know about HTTP/2

- High Performance Browser Networking – Special edition of the ebook by Ilya Grigorik of Google

- What’s New in HTTP/2? – NGINX webinar describing key features and giving implementation advice

- 7 Tips to Improve HTTP/2 Performance – Blog post

- List of browsers that support HTTP/2 – Can I Use? website
