A load balancer often acts as the single entry into a web application, which makes it a critical component in your application delivery infrastructure: load‑balancer downtime means application downtime. To minimize downtime and the user unhappiness that comes with it, you need to deploy your load balancer in a highly available (HA) manner.

This blog post compares several methods you can use to achieve HA for NGINX Plus as your AWS load balancer. You might already be familiar with the keepalived‑based solution for HA that we provide for on‑premises deployments. However, due to networking limitations in AWS and cloud environments in general, you cannot use that solution as is in the cloud. Nevertheless, AWS offers other options for achieving HA for NGINX Plus. For example, you can modify the on‑premises keepalived solution to work in AWS, as detailed in our deployment guide.

[Editor – There is also a solution that combines a highly available active‑active deployment of NGINX Plus with the AWS Network Load Balancer (NLB).]

The methods discussed in this post apply to both NGINX Open Source and NGINX Plus, but for the sake of brevity we’ll refer only to NGINX Plus for the rest of the blog.

The following table summarizes the four methods that we’ll describe in this post.

| Method | HA Type | IP Address Type | Notes |
|---|---|---|---|
| ELB | Active‑active | Dynamic; requires CNAME delegation | ELB must be configured in TCP mode for WebSocket or HTTP/2 support |
| Route 53 | Active‑active or active‑passive | Static; DNS hosted in Route 53 | See our deployment guide |
| Elastic IP address with keepalived | Active‑passive | Static; DNS hosted anywhere | See our deployment guide |
| Elastic IP address with Lambda | Active‑passive | Static; DNS hosted anywhere | Configured by NGINX Professional Services |

This blog post concentrates on one aspect of NGINX Plus as your AWS load balancer – making it highly available. For detailed information on other AWS topics, see:

- Installing NGINX Plus AMIs on Amazon EC2

- NGINX Plus and Amazon Elastic Load Balancing on AWS

- Choosing the Right Load Balancer on Amazon: AWS Application Load Balancer vs. NGINX Plus

- NGINX Plus on AWS Cloud Quick Start

- Load Balancing AWS Auto Scaling Groups with NGINX Plus

- 5 Tips for Faster AWS Performance

High Availability for NGINX Plus Using ELB

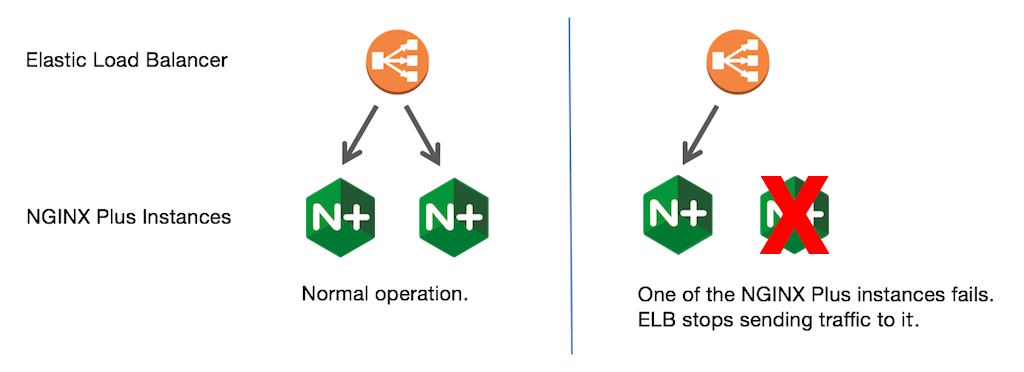

AWS’s basic built‑in load balancer, Elastic Load Balancer (ELB, now officially called Classic Load Balancer), is limited in features but it is highly available. For this reason, you can leverage ELB to make NGINX Plus highly available, as shown in the diagram:

You configure ELB to load balance traffic among all NGINX Plus instances (which then load balance the traffic to your application instances). If one of the NGINX Plus instances fails, ELB detects the failure and stops sending traffic to it. To help ELB quickly detect failure of NGINX Plus instances, it is important to configure ELB health checks.

ELB provides active‑active HA, meaning that all NGINX Plus instances are in use and receive their share of traffic. Not deploying standby NGINX Plus instances reduces the overall cost.

However, ELB deployed as an HTTP load balancer does not support the HTTP/2 or WebSocket protocols. If you need to use those protocols with NGINX Plus, you have to deploy ELB as a TCP (not HTTP) load balancer. In this case, if you need to pass the client IP address from ELB to NGINX Plus, you also have to configure the PROXY protocol in both ELB and NGINX Plus.
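To make the PROXY protocol requirement more concrete, here is a minimal sketch of what the receiving side has to do. In TCP mode with the PROXY protocol enabled, the load balancer prepends a single human‑readable line (PROXY protocol version 1) to each connection, and the receiver reads the client address from that line. NGINX does this for you when you add the `proxy_protocol` parameter to a `listen` directive; the Python function below, including the names `ProxyInfo` and `parse_proxy_v1`, is purely illustrative and is not part of NGINX Plus or of any AWS tooling.

```python
from typing import NamedTuple, Optional


class ProxyInfo(NamedTuple):
    """Fields carried by a PROXY protocol v1 header (illustrative type)."""
    protocol: str                 # "TCP4", "TCP6", or "UNKNOWN"
    client_ip: Optional[str]      # original client (source) address
    client_port: Optional[int]
    dest_ip: Optional[str]        # address the client connected to
    dest_port: Optional[int]


def parse_proxy_v1(header: bytes) -> ProxyInfo:
    """Parse one PROXY protocol v1 line, e.g.
    b"PROXY TCP4 203.0.113.7 10.0.0.5 56324 443\\r\\n".
    """
    if not header.startswith(b"PROXY ") or not header.endswith(b"\r\n"):
        raise ValueError("not a PROXY protocol v1 header")

    fields = header[:-2].decode("ascii").split(" ")

    # "UNKNOWN" means the sender could not determine the addresses;
    # the receiver must ignore anything else on the line.
    if fields[1] == "UNKNOWN":
        return ProxyInfo("UNKNOWN", None, None, None, None)

    if len(fields) != 6 or fields[1] not in ("TCP4", "TCP6"):
        raise ValueError("malformed PROXY protocol v1 header")

    _, proto, src_ip, dst_ip, src_port, dst_port = fields
    return ProxyInfo(proto, src_ip, int(src_port), dst_ip, int(dst_port))


if __name__ == "__main__":
    # Documentation-range example addresses, not real traffic.
    print(parse_proxy_v1(b"PROXY TCP4 203.0.113.7 10.0.0.5 56324 443\r\n"))
```

Without this header, NGINX Plus behind a TCP‑mode ELB sees only the ELB’s own address as the source of every connection, which is why both sides have to agree on the protocol.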

There are several downsides to using ELB for an HA deployment of NGINX Plus:

- ELB does not expose a static IP address, which is a critical requirement for some applications.

- ELB adds an additional tier of load balancing, which increases complexity and cost.

- Because ELB does not support UDP, you cannot use NGINX Plus behind it to load balance UDP traffic.

- ELB doesn’t scale quickly, so large traffic spikes can result in dropped traffic.

- ELB IP addresses are published using a DNS CNAME record; you cannot map a root domain (for example, example.com) to a CNAME unless you delegate all DNS to Route 53.

You can also use the newer native AWS load balancer, Application Load Balancer (ALB), in a similar way. It offers more features and flexibility than ELB, but shares the downsides in the preceding list.

High Availability for NGINX Plus Using Route 53

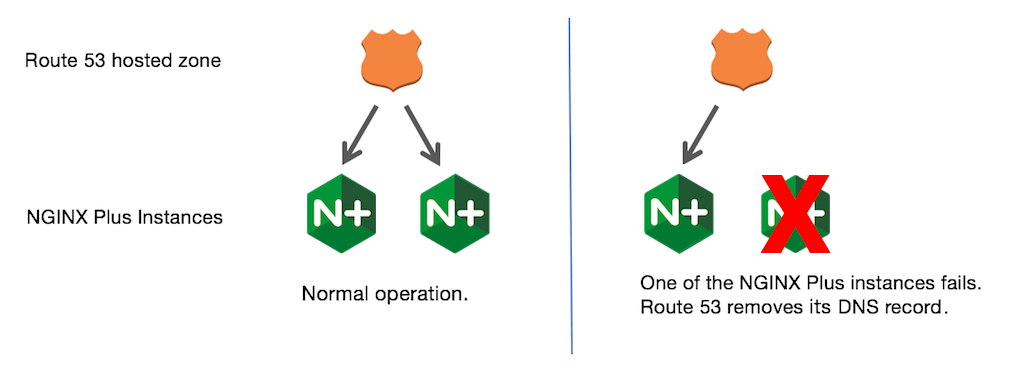

Like ELB, Route 53 allows you to create an active‑active NGINX Plus deployment, as shown in the figure:

You create a hosted zone and add records to enable routing traffic to all NGINX Plus instances. Make sure to configure Route 53 health checks of NGINX Plus instances. If one of the NGINX Plus instances fails, Route 53 excludes the record associated with that instance from its responses to DNS queries. However, clients and intermediate DNS servers cache records in accordance with the time‑to‑live (TTL) value in each record, which is set by the authoritative DNS server. They do not refresh a record until its TTL expires, and so do not immediately notice when the authoritative server removes the record for an NGINX Plus instance from its responses. To limit this delay, set a low TTL value for the NGINX Plus records.
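The effect of the TTL on failover time can be estimated with simple arithmetic. The sketch below assumes a simplified model: a failed instance is detected after a fixed number of consecutive failed health checks, and a client that cached the record just before it was removed keeps using it for one full TTL. The function name and the sample values (a 30‑second or 10‑second check interval and a failure threshold of 3) are assumptions chosen for illustration, and the model ignores resolvers that do not honor the TTL.

```python
def worst_case_dns_failover_seconds(
    check_interval: int, failure_threshold: int, ttl: int
) -> int:
    """Rough upper bound on how long a client may keep hitting a failed instance.

    check_interval    -- seconds between health checks (assumed value)
    failure_threshold -- consecutive failures before the record is withdrawn
    ttl               -- TTL of the DNS record, in seconds
    """
    # Time for the health checker to declare the instance unhealthy.
    detection = check_interval * failure_threshold
    # A resolver that cached the record just before removal serves it for one TTL.
    return detection + ttl


if __name__ == "__main__":
    # 30 s checks, 3 failures, 60 s TTL  -> 150 s
    print(worst_case_dns_failover_seconds(30, 3, 60))
    # 10 s checks, 3 failures, 30 s TTL  -> 60 s
    print(worst_case_dns_failover_seconds(10, 3, 30))
```

Under this model, lowering the TTL shortens the failover window at the cost of more DNS queries, while the detection term depends only on how the health checks are configured.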

For detailed instructions, see our Route 53 deployment guide.

In addition to an active‑active deployment, Route 53 allows you to create an active‑passive deployment or even more complicated failover combinations, as explained in the AWS documentation.

You can also use Route 53 in conjunction with the other methods described in this blog post.

High Availability for NGINX Plus Without ELB or Route 53

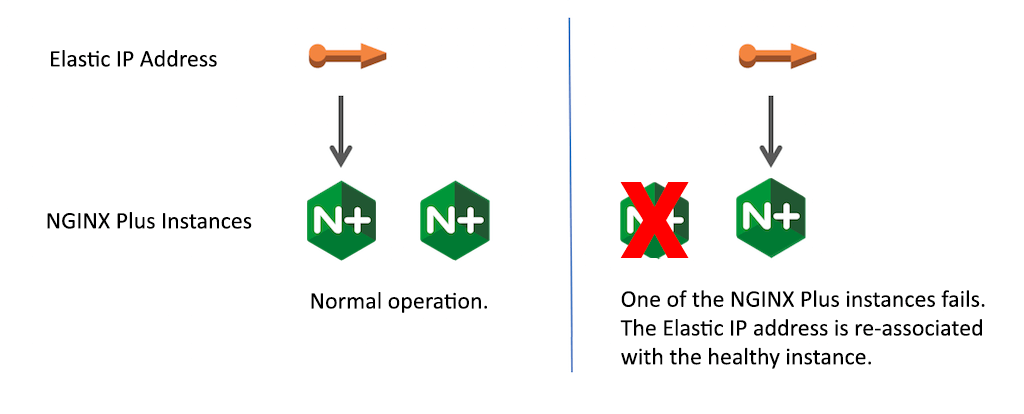

For a number of reasons, you may not want to rely on ELB or Route 53 to make NGINX Plus highly available. One alternative is to use AWS’s Elastic IP address feature in an active‑passive NGINX Plus deployment. Here you expose a static IP address for NGINX Plus and associate it with the primary (active or master) NGINX Plus instance. A second NGINX Plus instance in standby or backup mode does not handle traffic, but is ready to take over when the master NGINX Plus instance fails. During the takeover (failover), the Elastic IP address is reassigned to the second instance, which is now considered the master.

The downsides of the Elastic IP address method are as follows:

- The backup instance is not used most of the time, which increases the cost of your deployment.

- In contrast with a typical on‑premises deployment, re‑association of a static IP address is not instant. Our tests showed that it took up to 6 seconds to re‑associate the IP address with the new master instance.

- The deployment is more complicated than the ELB or Route 53 methods, as you must install and properly configure software that monitors the health of the master NGINX Plus instance and re‑associates the Elastic IP address if the master fails.

NGINX, Inc. provides two solutions that use an Elastic IP address. They differ in how they monitor the health of the master NGINX Plus instance and re‑associate the Elastic IP address.

- keepalived‑based solution. You can modify the keepalived‑based solution for on‑premises deployments to support Elastic IP address re‑association in AWS, as we explain in our deployment guide. As in an on‑premises HA deployment, the keepalived daemon on the backup NGINX Plus instance monitors the health of the master instance. The difference from the on‑premises solution is that when the master fails, keepalived invokes a custom script that calls the AWS API to re‑associate the Elastic IP address. Note that this solution is not covered by your NGINX Plus support contract.

- AWS Lambda‑based solution. It uses a Lambda function to monitor the health of the NGINX Plus master instance and re‑associate the Elastic IP address if the master fails. This solution is available from our Professional Services team, which deploys and configures the solution for you and provides support.
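Both solutions follow the same general pattern: watch the master, and when it is judged to have failed, move the Elastic IP address to the backup. The following is a minimal sketch of that pattern only; it is not the script from our deployment guide, nor the Lambda function that Professional Services deploys. The names `monitor_and_failover`, `check_master`, and `reassociate_eip` are hypothetical, and the health check and the re‑association are passed in as callables so that the sketch stays free of AWS dependencies.

```python
import time
from typing import Callable


def monitor_and_failover(
    check_master: Callable[[], bool],
    reassociate_eip: Callable[[], None],
    failure_threshold: int = 3,
    interval_seconds: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> int:
    """Poll the master until it fails `failure_threshold` times in a row,
    then trigger failover once. Returns the number of checks performed.

    check_master    -- hypothetical probe; returns True while the master is healthy
    reassociate_eip -- hypothetical action; in a real deployment this would call
                       the EC2 AssociateAddress API to move the Elastic IP address
                       to the backup instance (an assumption, not shown here)
    """
    consecutive_failures = 0
    checks = 0
    while True:
        checks += 1
        if check_master():
            # Any successful check resets the counter, so a single
            # dropped probe does not cause an unnecessary failover.
            consecutive_failures = 0
        else:
            consecutive_failures += 1
            if consecutive_failures >= failure_threshold:
                reassociate_eip()
                return checks
        sleep(interval_seconds)


if __name__ == "__main__":
    # Simulated probe results: one transient failure, then a real outage.
    results = iter([True, False, True, False, False, False])
    n = monitor_and_failover(
        check_master=lambda: next(results),
        reassociate_eip=lambda: print("re-associating Elastic IP address"),
        sleep=lambda _seconds: None,  # skip real waiting in the demo
    )
    print(f"failover triggered after {n} checks")
```

Requiring several consecutive failures trades a slightly slower failover for fewer false positives; the detection time adds to the re‑association delay of up to 6 seconds noted above.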

[Editor – There is also a solution that combines a highly available active‑active deployment of NGINX Plus with AWS NLB.]

Summary

High availability is critical for an AWS load balancer. AWS gives you multiple methods for deploying NGINX Plus in a highly available manner, as we discussed in this blog post. The methods based on ELB or Route 53 are good starting points, and for cases when a static IP address is required, use the Elastic IP method based on keepalived or AWS Lambda.

New to NGINX Plus on AWS? Try it free for 30 days. For links to the available AMIs, see Installing NGINX Plus AMIs on Amazon EC2.