Are you thinking about moving to the cloud? There are a number of benefits to moving to the cloud, as well as a few drawbacks. For some enterprises, hosting any data outside of the corporate firewall is a deal breaker. For most, however, the advantages of not having to manage your own hardware far outweigh downsides such as the loss of absolute control. Recent research indicates this is becoming increasingly apparent to IT directors: one third of enterprise IT spending is expected to go to the cloud over the next two years.

If you are looking to move to the cloud, or even if you aren’t, now is a good time to begin thinking about your application architecture. Rather than blindly migrating an application to the cloud along with its well‑known problems, why not update your application stack to work more fluidly within this new type of environment? Your application delivery strategy is a great place to start the reevaluation process. Good application delivery is the difference between a loyal customer for you and a new customer for your competitors.

If you’re hosting your application on premises, you’re most likely using a hardware application delivery controller (ADC). While a hardware ADC might work well within an on‑premises deployment, you can’t take hardware to the cloud. Most legacy hardware ADC vendors do have virtual versions of their products, but if you are moving to the cloud, why not try out a modern ADC that was written with the cloud in mind?

NGINX Plus is an enterprise‑grade software application delivery controller born in the modern web. It is lightweight yet includes all the features you need to deliver your applications flawlessly, without forcing you to pay for features you’ll never use. It is available at a fraction of the cost of your hardware ADC, with no bandwidth or SSL limits to slow you down.

To help you get started on your investigation into cloud computing, here are some cloud architectures that leverage the power of NGINX Plus.

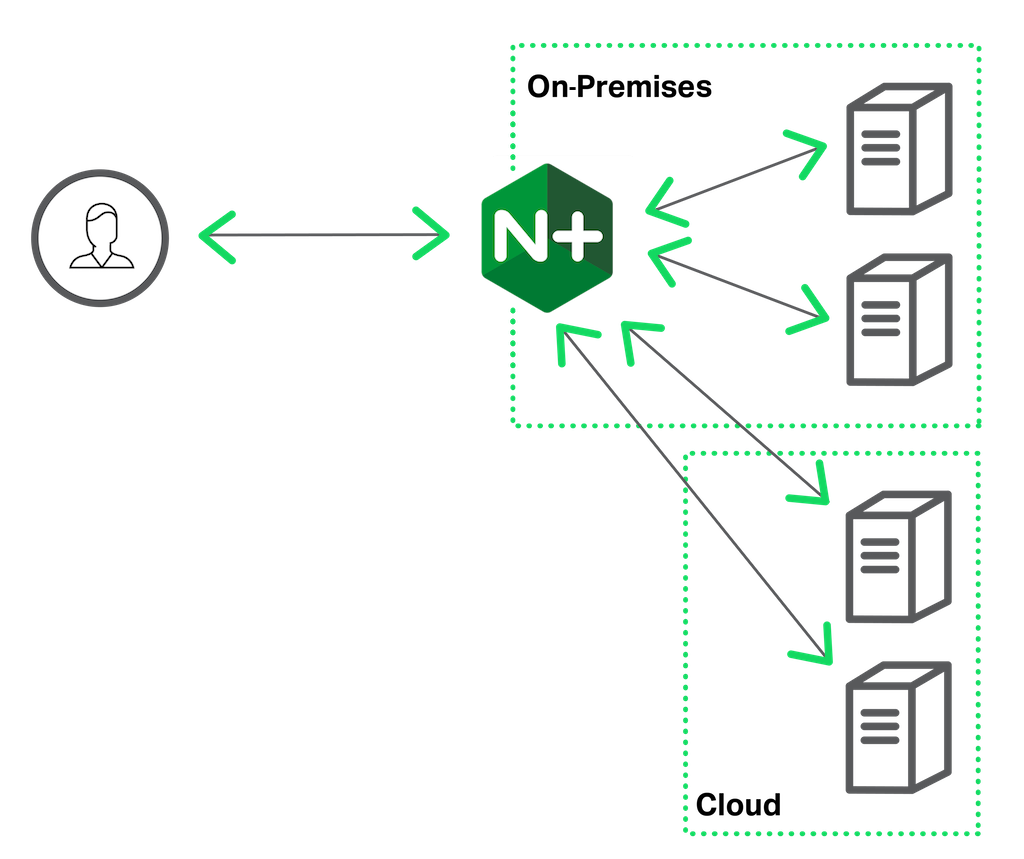

Simple Cloud Bursting

If migrating everything to the cloud at once seems too risky, consider a hybrid deployment as a less disruptive approach. In this type of deployment you use both on‑premises and cloud services to deliver your applications.

With NGINX Plus’ Least Time load-balancing algorithm you can create a “cloud bursting” deployment where traffic is sent to the cloud when all of your on‑premises servers are busy.

With the Least Time algorithm, NGINX Plus mathematically combines two metrics for each server – the current number of active connections and a weighted average response time for past requests – and sends the request to the server with the lowest value. Least Time tends to send more requests to the local servers because they respond faster. When the local servers get loaded to the point where the cloud servers respond more quickly even with the added network latency, NGINX Plus begins directing requests to the cloud servers. When local servers become less loaded again, NGINX Plus goes back to sending most requests to them.
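The selection logic described above can be illustrated with a small Python sketch. It is not the NGINX Plus implementation: the article does not give the actual formula, so the `score` used here (active connections plus one, multiplied by an exponentially weighted average response time) is an assumption, as are the `Server` class, the `alpha` smoothing factor, and the sample timings.

```python
from dataclasses import dataclass


@dataclass
class Server:
    name: str
    active: int = 0        # current number of active connections
    avg_time: float = 0.0  # weighted average response time, in seconds

    def record(self, response_time: float, alpha: float = 0.2) -> None:
        """Fold a new sample into an exponentially weighted moving average."""
        if self.avg_time == 0.0:
            self.avg_time = response_time
        else:
            self.avg_time = (1 - alpha) * self.avg_time + alpha * response_time

    def score(self) -> float:
        # Assumed way of combining the two metrics; lower is better.
        return (self.active + 1) * self.avg_time


def pick(servers):
    """Return the server with the lowest combined score."""
    return min(servers, key=Server.score)


# Hypothetical timings: the cloud server is slower because of network latency.
local = Server("on-prem", avg_time=0.020)
cloud = Server("cloud", avg_time=0.080)

idle_choice = pick([local, cloud]).name   # local servers are lightly loaded

local.active = 10                         # local servers become busy
busy_choice = pick([local, cloud]).name

local.active = 1                          # load on the local servers drops again
recovered_choice = pick([local, cloud]).name
```

With these made-up numbers the idle and recovered cases select the on‑premises server, and the busy case selects the cloud server, which mirrors the bursting behavior described above.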

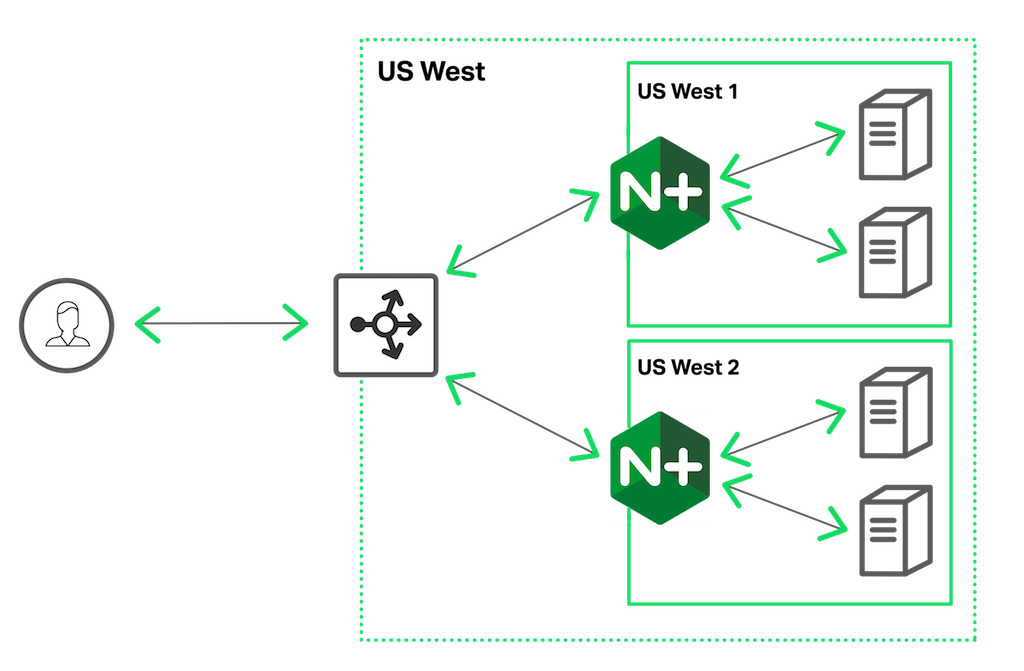

Local Load Balancing

Many public cloud vendors have regional data centers all over the world, and you can host your application in any or all of them. Amazon Web Services, for example, has nine data centers across North America, South America, Europe, and Asia. For fault tolerance, cloud vendors provision “availability zones” in each regional data center. An availability zone has its own physically isolated racks of hardware with separate power, networking, cooling, and so on. If one availability zone fails, for example by losing power, the availability zones that continue to run can pick up the slack. You make your application highly available by replicating it across availability zones.

In the cloud architecture diagram above, the cloud vendor’s native load balancer (ELB in Amazon Web Services, the Load Balancer on the Google Cloud Platform, or Azure Traffic Manager in Microsoft Azure) spreads traffic across the different availability zones. Within each availability zone, NGINX Plus load balances traffic across your application servers. The application behind NGINX Plus can be a monolith, a microservices‑based application, or something in between.
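As a rough illustration of this two‑tier layout, the following Python sketch models a first tier that spreads requests across healthy availability zones and a second tier that spreads them across servers inside the chosen zone. Everything here is an assumption made for illustration: the `Zone` and `CloudLoadBalancer` classes, the zone and server names, and the use of simple round robin at both tiers. Real cloud load balancers and NGINX Plus offer other algorithms and perform their own health checks.

```python
from itertools import cycle


class Zone:
    """One availability zone; the inner rotation stands in for NGINX Plus."""

    def __init__(self, name, servers):
        self.name = name
        self.healthy = True
        self._servers = cycle(servers)  # round robin across application servers

    def route(self):
        return next(self._servers)


class CloudLoadBalancer:
    """Stand-in for the cloud vendor's native load balancer."""

    def __init__(self, zones):
        self.zones = zones
        self._turn = 0

    def route(self):
        # Only zones that are still running receive traffic.
        healthy = [z for z in self.zones if z.healthy]
        if not healthy:
            raise RuntimeError("no healthy availability zone")
        zone = healthy[self._turn % len(healthy)]
        self._turn += 1
        return zone.name, zone.route()


zones = [Zone("zone-a", ["a1", "a2"]), Zone("zone-b", ["b1", "b2"])]
lb = CloudLoadBalancer(zones)

before = [lb.route() for _ in range(4)]  # traffic alternates between zones

zones[0].healthy = False                 # simulate zone-a losing power
after = [lb.route() for _ in range(4)]   # zone-b picks up the slack
```

In this sketch, requests alternate between the two zones until `zone-a` is marked unhealthy, after which every request is served from `zone-b`.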

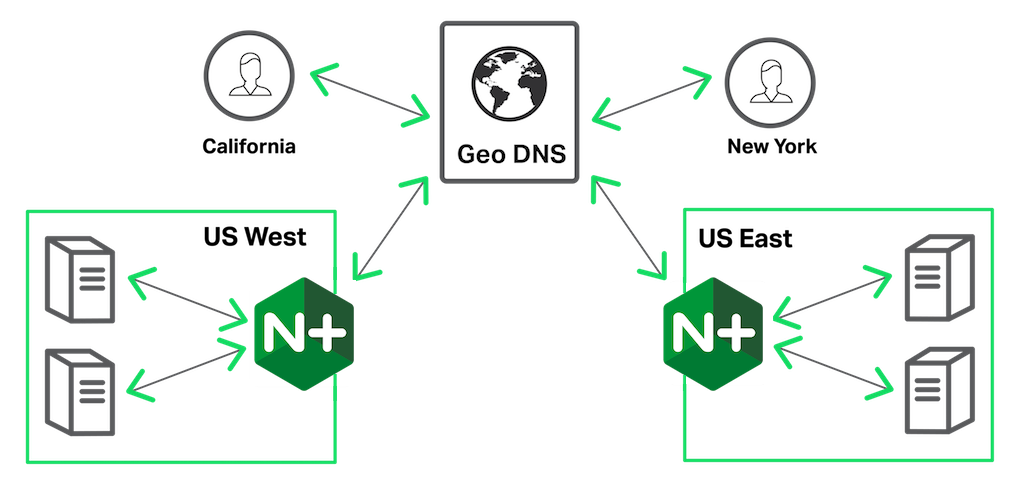

Global Load Balancing

Replicating your application across multiple availability zones provides high availability within a region. The next step is to host your application in multiple regions. Moving the application closer to the user reduces the effects of network latency, leading to a better overall user experience. There are many ways to build a globally distributed application; one common method is using a geographic location‑based DNS (GeoDNS) solution. With a GeoDNS solution, the authoritative DNS server for a domain factors in the location of the client when forming its response, sending clients to the data center closest to them.

There are many GeoDNS solutions available, such as Route 53 from Amazon. By combining one of them with NGINX Plus, you can create a globally distributed and scalable application. In the diagram above, GeoDNS directs users in California to the US West data center, while users in New York are directed to the US East data center. Within each data center, NGINX Plus distributes the incoming traffic across the application servers.
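The "closest data center" decision can be sketched in a few lines of Python. This is only an approximation of what a GeoDNS service does: it assumes the client's coordinates are already known (a real service typically derives them from the resolver or client IP address), it uses great‑circle distance as the only criterion, and the `DATA_CENTERS` names and coordinates are hypothetical.

```python
from math import asin, cos, radians, sin, sqrt

# Hypothetical data center locations as (latitude, longitude) in degrees.
DATA_CENTERS = {
    "us-west": (37.35, -121.96),
    "us-east": (38.95, -77.45),
}

EARTH_RADIUS_KM = 6371.0


def haversine_km(a, b):
    """Great-circle distance between two (lat, lon) points, in kilometers."""
    lat1, lon1, lat2, lon2 = map(radians, (*a, *b))
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_KM * asin(sqrt(h))


def resolve(client_location):
    """Return the name of the data center nearest to the client."""
    return min(
        DATA_CENTERS,
        key=lambda name: haversine_km(client_location, DATA_CENTERS[name]),
    )


california_user = resolve((34.05, -118.24))  # Los Angeles
new_york_user = resolve((40.71, -74.01))     # New York City
```

Here the Los Angeles client resolves to `us-west` and the New York client to `us-east`, matching the routing shown in the diagram. Inside each data center, NGINX Plus would then take over distribution across the application servers.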

Conclusion

If you’re considering moving to the cloud, it makes sense to reconsider your application delivery strategy as well. Hardware ADCs might work well for on‑premises deployments, but they can’t be part of a cloud architecture. Though most hardware ADC vendors offer virtual versions of their appliances, those versions are unnecessarily expensive, forcing you to pay for features you don’t need in the cloud. Moreover, they are usually supported only in select clouds, whereas NGINX Plus runs in any cloud.

NGINX Plus is a software ADC designed to run in the cloud. It’s available at a fraction of the cost of virtual versions of hardware ADCs, but with all the functionality you’ll actually need.

Want to see for yourself how NGINX Plus fits into a cloud architecture? Start a free 30-day trial today.