This post is adapted from a presentation at nginx.conf 2016 by Nathan Moore of StackPath. This is the first of three parts of the adaptation. In this part, Nathan describes SPDY and HTTP/2, proxying under HTTP/2, HTTP/2’s key features and requirements, and NPN and ALPN. In Part 2, Nathan talks about implementing HTTP/2 with NGINX, running benchmarks, and more. Part 3 includes the conclusions and a Q&A.

You can view the complete presentation on YouTube.


Nathan Moore: Good morning ladies and gentlemen, my name is Nathan. I work at a startup called StackPath.

3:56 What Is HTTP/2?

So RFC 7540 was ratified and it’s a new HTTP protocol. It’s supposed to do the same thing as the previous [HTTP] protocols which is: allow the transport of HTTP objects so you can do requests, you can do responses.

4:24 What Was SPDY?

It was built on technology from Google called SPDY. In fact, if you go to a lovely backup on GitHub it explicitly says that after a call for proposals and a selection process, SPDY/2 was chosen as the basis for H2. That’s why it’s a binary protocol, why it supports all the other stuff that SPDY did, and why it looks really, really similar.

SPDY was a Google‑defined protocol from a couple of years ago, designed to assist the delivery of content and to help pages delivered from the server load a little bit faster. But it doesn’t necessarily help other parts of the stack.

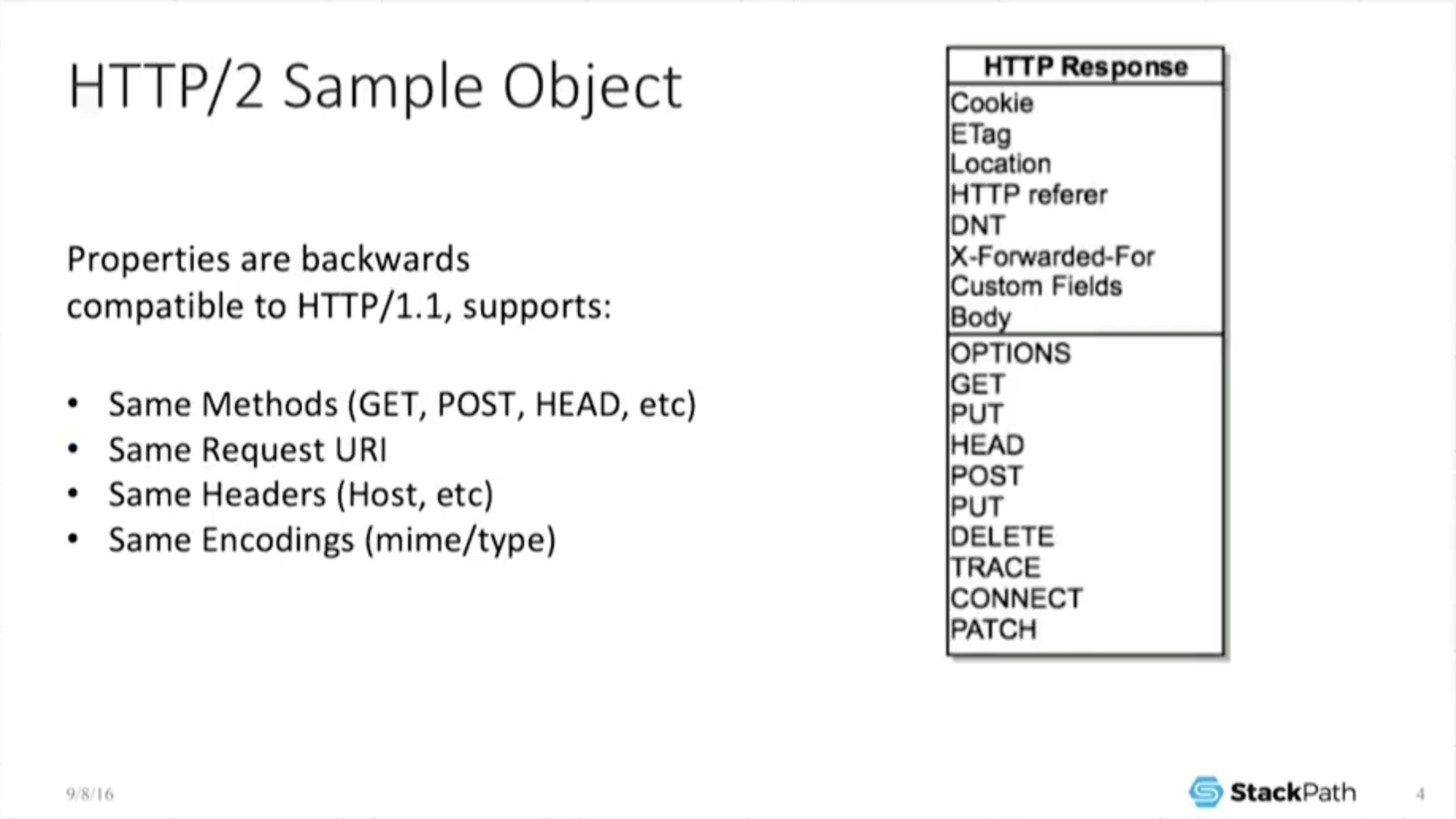

5:05 HTTP/2 Sample Object

So we’re here to [talk about] HTTP objects. “Great. What’s an HTTP object?” Well, under H2, it’s the same as under H1. You have the same methods, you have the same request URIs, the same headers, the same codings. It looks the same.

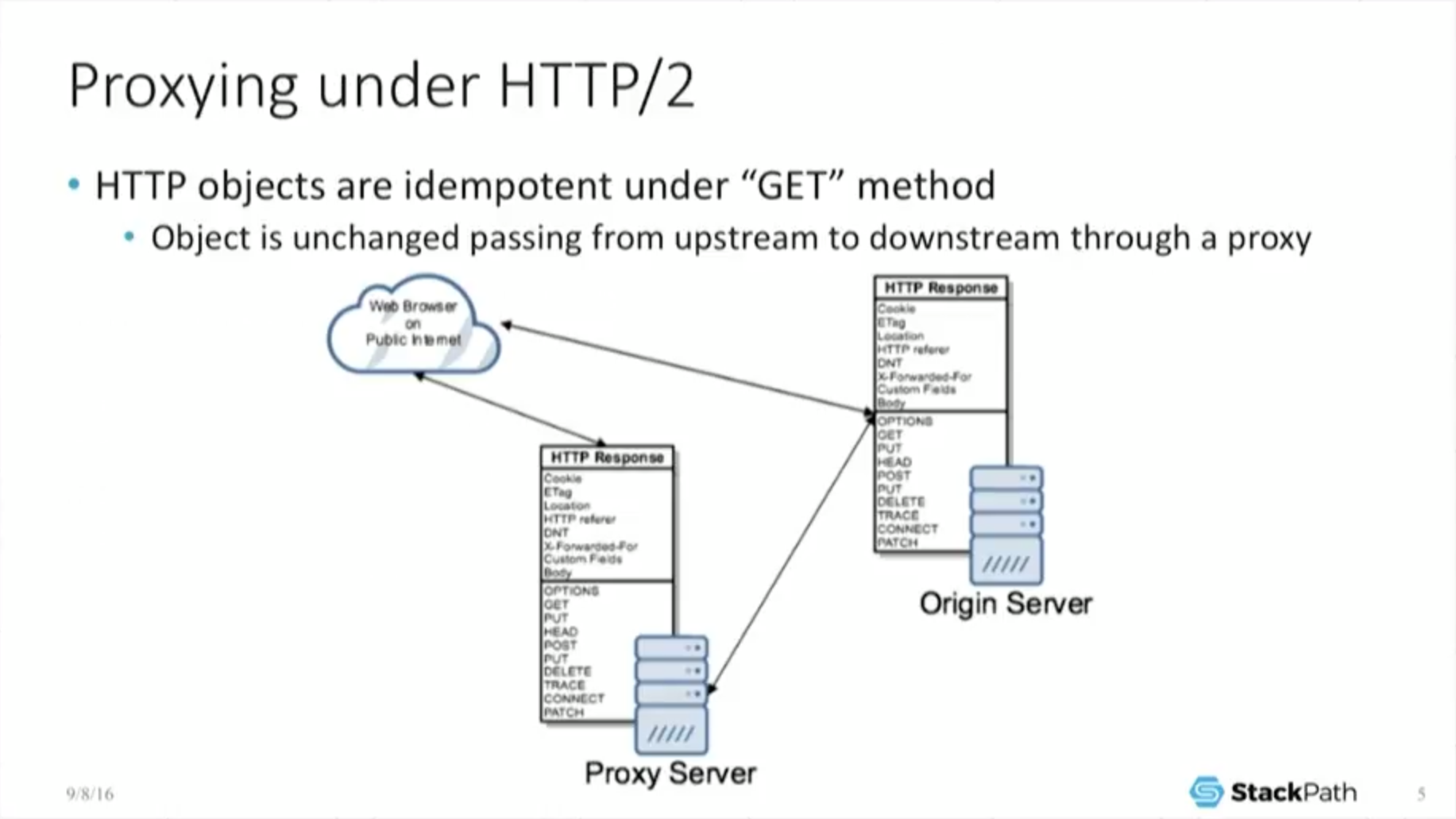

5:25 Proxying Under HTTP/2: GET Method

And that’s good because that’s ultimately how we [perform a] GET operation. So it has to interoperate with the rest of the Web. It cannot break the Web – it’s worthless as a protocol if it does. So if you look at this from a proxy perspective, I’m a cache. I care very deeply about proxying.

What this means is because the HTTP objects are the same, the spec for HTTP forces the object to be idempotent under the GET method which is a very, very fancy way of saying it is not allowed to change. So if I am out there on the public Internet and I am a web browser, if I make a call directly to an origin, I do a GET request and get the object, no problem.

But if I go through a proxy server, [the object must be] idempotent under the GET method, so the proxy server turns around, does its own GET back to the origin, takes that object and returns it back to the end user.

And because it was not allowed to change under the GET method, the object is exactly the same. The end user doesn’t care where he got it from, because he’s always getting the exact same object. And this is as true under H2 as it is under H1, which is great for me. It allows my business to still exist.

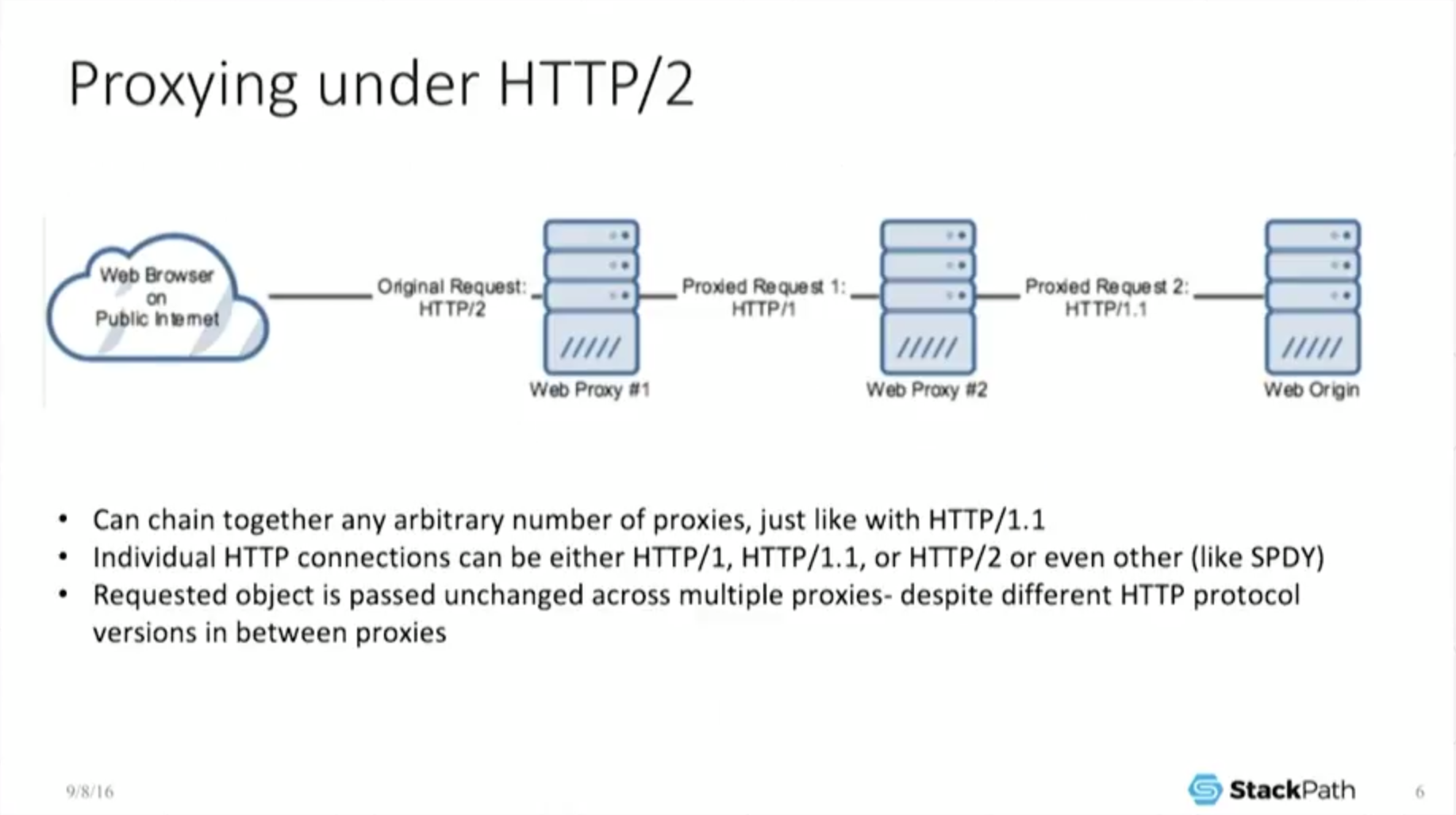

But it gets a little bit more fun than that because I can chain together an arbitrary number of proxies. So I can bounce this object, and because it’s idempotent under every single one of those, it’s always the same.

The end user never sees a different object, he gets the exact same thing back. So this is great. I can chain stuff together. The moment I can chain stuff together, I can now interoperate with previous versions of the protocol because each connection can negotiate to a different protocol. But because the object is the same under either protocol, I don’t care.

7:06 Proxying Under HTTP/2: Load Balancing

Now, I can interoperate under load balancing which is a very standard technology that everyone has been talking about at this conference – NGINX does load balancing very well.

And here’s an example where the web browser talks H2 to the load balancer, but then the load balancer internally talks H1 to some dynamic‑content servers that may [in turn] be doing a persistent 1.1 connection out to some static‑content server, and this is okay.

We’re getting back the object. Everything is getting put together. We look and work the same no matter which protocol we’re under, from a practical perspective, from the web browser’s perspective.

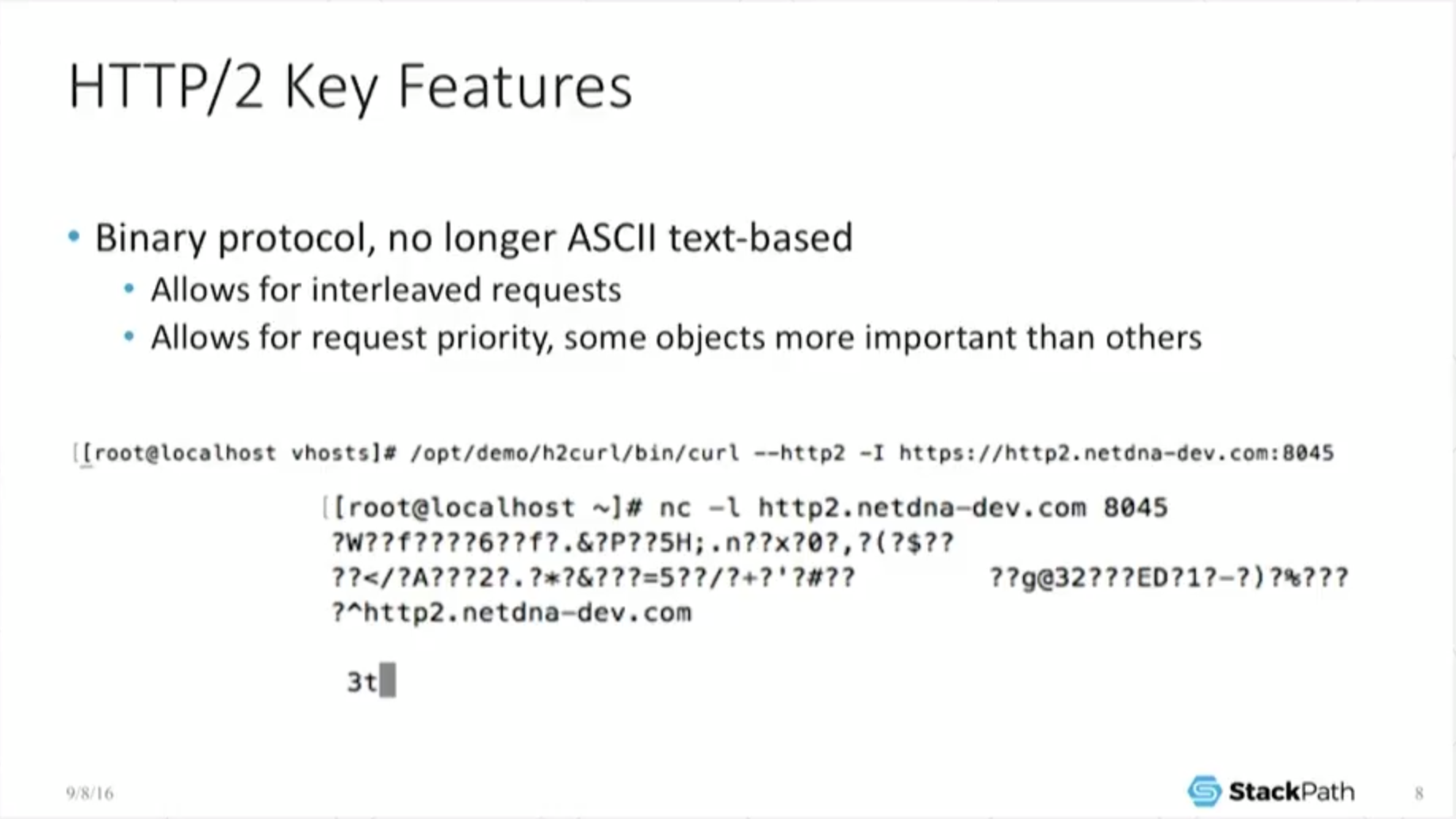

7:39 Key Features: Binary Protocol

So why do it? What are the benefits we’re actually getting out of this? Well, we’ve changed now from an ASCII‑text‑based protocol – one where you can just telnet into the port, do a simple ASCII GET, replicate the headers by hand, get back the expected result – and everything just magically works.

That’s no longer the case because [HTTP/2 is] a binary protocol. So my little stupid example is: I use curl with H2 support to make a call just to Netcat, just to see what it’s doing.

And you can see, well, it’s got all this weird stuff going on because it’s got all these weird binary frames it’s trying to pass in, and all this other stuff under the hood that it’s trying to do. So of course it’s no longer quite as easy.
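[Editor – To make the "weird binary frames" concrete, here is a minimal Python sketch that decodes the fixed 9‑byte header that RFC 7540 puts at the start of every HTTP/2 frame. The function name `parse_frame_header` and the sample bytes are illustrative assumptions, not output captured from the talk's curl and Netcat demo.]

```python
import struct

# Client connection preface defined in RFC 7540, section 3.5.
# An HTTP/2 client sends these 24 bytes before its first frame.
CLIENT_PREFACE = b"PRI * HTTP/2.0\r\n\r\nSM\r\n\r\n"

# Frame type codes from RFC 7540, section 6.
FRAME_TYPES = {
    0x0: "DATA", 0x1: "HEADERS", 0x2: "PRIORITY", 0x3: "RST_STREAM",
    0x4: "SETTINGS", 0x5: "PUSH_PROMISE", 0x6: "PING", 0x7: "GOAWAY",
    0x8: "WINDOW_UPDATE", 0x9: "CONTINUATION",
}


def parse_frame_header(header: bytes) -> dict:
    """Decode the fixed 9-byte header that starts every HTTP/2 frame."""
    if len(header) != 9:
        raise ValueError("an HTTP/2 frame header is exactly 9 bytes")
    # Layout: 24-bit length, 8-bit type, 8-bit flags, 1 reserved bit + 31-bit stream ID.
    length_hi, length_lo, frame_type, flags, stream_id = struct.unpack(">BHBBI", header)
    return {
        "length": (length_hi << 16) | length_lo,
        "type": FRAME_TYPES.get(frame_type, "UNKNOWN"),
        "flags": flags,
        "stream_id": stream_id & 0x7FFFFFFF,  # mask off the reserved top bit
    }


# Hypothetical header of a SETTINGS frame with an 18-byte payload on stream 0.
sample_header = bytes([0x00, 0x00, 0x12, 0x04, 0x00, 0x00, 0x00, 0x00, 0x00])
print(parse_frame_header(sample_header))
```

The stream ID field in this header is what lets the frames of several requests share one connection.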

On the other hand, because it’s a binary protocol, we can do some fun things, like interleave requests. We can set request priority. And so we can actually start playing a lot of games that you can’t play under H1, in particular around avoiding head‑of‑line blocking.

8:32 Key Features: No Head-of-Line Blocking

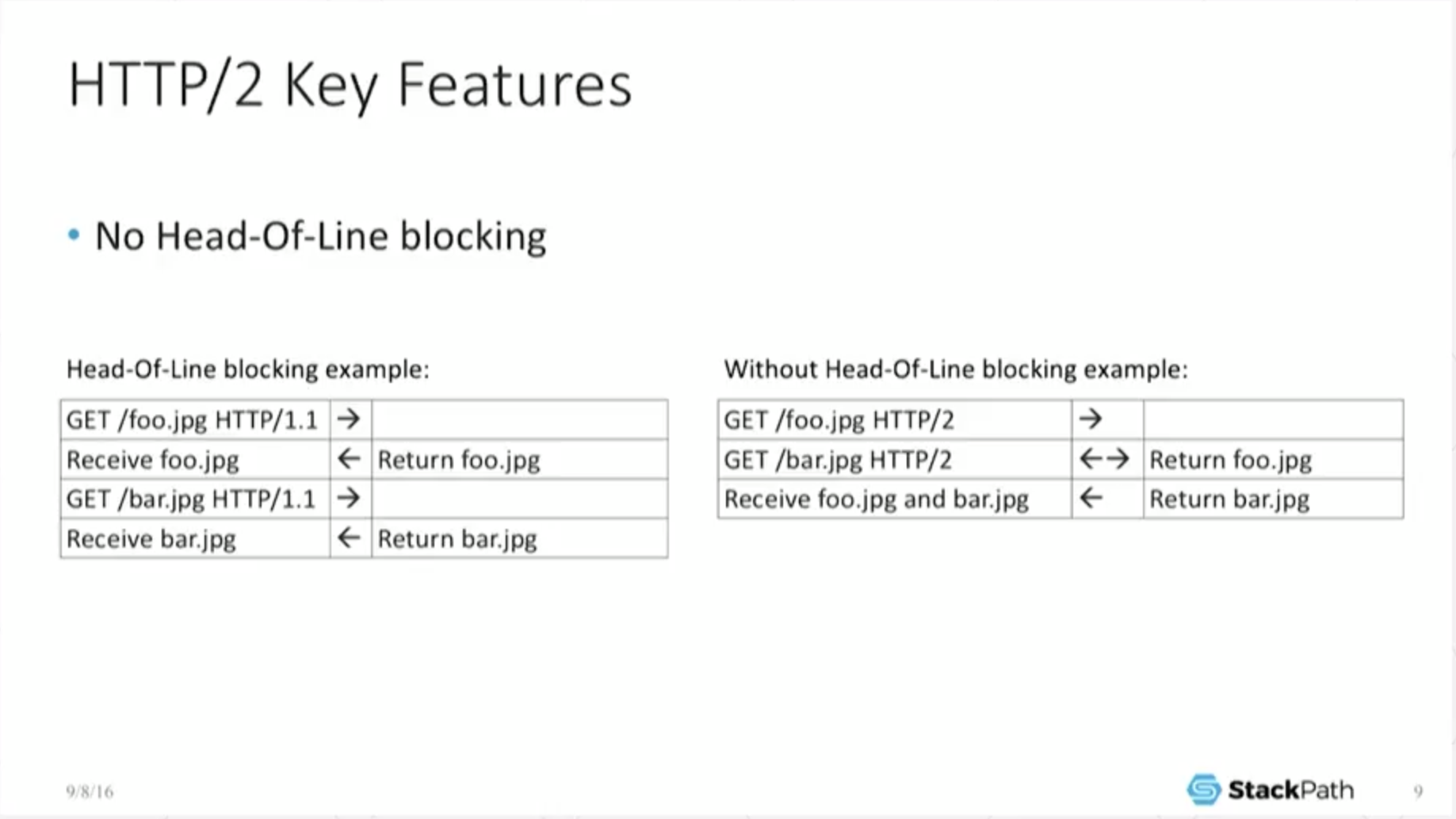

While your process can still block (you can still go through head‑of‑line blocking), within the protocol – down at that layer – there is no head‑of‑line blocking.

In the very, very simple example here, [I send an] H1.1 GET [for foo.jpg], the object has to respond [before] I can do the next one [the GET for bar.jpg], and the object responds. So it’s a FIFO queue.

You don’t have that anymore in the H2. I can actually do multiple GETs at the same time. While something [the first GET] is streaming down, I can issue another GET and start retrieving that at the same time over the same connection because it’s multiplexed and interleaved. So it does do some fun stuff.
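[Editor – The interleaving described here can be sketched as a toy simulation in Python. It assumes a simple round‑robin scheduler and uses `(stream_id, chunk)` tuples as stand‑ins for DATA frames. Real implementations schedule frames according to priorities and flow control, so the exact order on the wire will differ.]

```python
from itertools import zip_longest


def split_into_frames(stream_id: int, body: bytes, max_frame: int):
    """Cut one response body into (stream_id, chunk) stand-ins for DATA frames."""
    return [(stream_id, body[i:i + max_frame]) for i in range(0, len(body), max_frame)]


def interleave(*streams):
    """Round-robin frames from several streams onto one connection."""
    wire = []
    for group in zip_longest(*streams):
        wire.extend(frame for frame in group if frame is not None)
    return wire


def reassemble(wire):
    """Receiver side: regroup frames by stream ID to rebuild each body."""
    bodies = {}
    for stream_id, chunk in wire:
        bodies[stream_id] = bodies.get(stream_id, b"") + chunk
    return bodies


# Client-initiated streams use odd IDs; the bodies here are placeholders.
foo = split_into_frames(1, b"FOO-JPEG-BYTES", 4)
bar = split_into_frames(3, b"BAR-JPG", 4)
wire = interleave(foo, bar)
print([stream_id for stream_id, _ in wire])  # [1, 3, 1, 3, 1, 1]
print(reassemble(wire))
```

Frames for bar.jpg appear on the wire before foo.jpg has finished, yet the receiver still rebuilds both bodies intact because every frame carries its stream ID.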

9:09 Key Features: Only One Connection

Because we have these interleaved requests, all of a sudden we no longer need a flurry of connections to do something. If I have five calls, I only need one connection.

Most end users, they don’t necessarily care about this, and you have to go diving into some level of TCP optimization before it starts to make sense. In particular, [there’s a] discussion about congestion windows and the perils of TCP slow‑start. I have an appendix slide about it, and I’m not going to talk too much about it because I could waste a ton of time on it. But the long and short of it is: TCP slow‑start means that when you start a connection, you’re only allowed to send a little bit of data out. The longer the connection [lasts] and the better and cleaner your connection [is found to be through testing], the bigger that window ramps up and the more information you can keep in flight.

This means that an existing, already open connection with a huge congestion window can handle a huge amount of information on the exact same line. But if you have a brand‑new connection and you’re stuck going through slow‑start, you’re sipping data through a straw.
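[Editor – A rough back‑of‑the‑envelope model of the "sipping through a straw" effect, as a Python sketch. It assumes an idealized slow start in which the congestion window doubles every round trip with no loss, an initial window of 10 segments, and a 1460‑byte segment size. These figures are illustrative assumptions, not measurements from the talk.]

```python
def round_trips_to_send(total_bytes: int, init_cwnd_segments: int = 10, mss: int = 1460) -> int:
    """Count round trips needed to send total_bytes under idealized slow start.

    Assumes the window doubles every round trip and nothing is lost.
    """
    cwnd = init_cwnd_segments * mss  # bytes allowed in flight this round trip
    sent = 0
    trips = 0
    while sent < total_bytes:
        sent += cwnd
        cwnd *= 2
        trips += 1
    return trips


# A brand-new connection delivering a 1 MB object.
print(round_trips_to_send(1_000_000))  # 7

# An already-warm connection whose window has grown to 700 segments (hypothetical).
print(round_trips_to_send(1_000_000, init_cwnd_segments=700))  # 1
```

Under these assumptions the cold connection needs seven round trips for the same payload the warm connection moves in one, which is the case for keeping a single long‑lived connection instead of opening several new ones.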

10:11 Key Features: Server Push

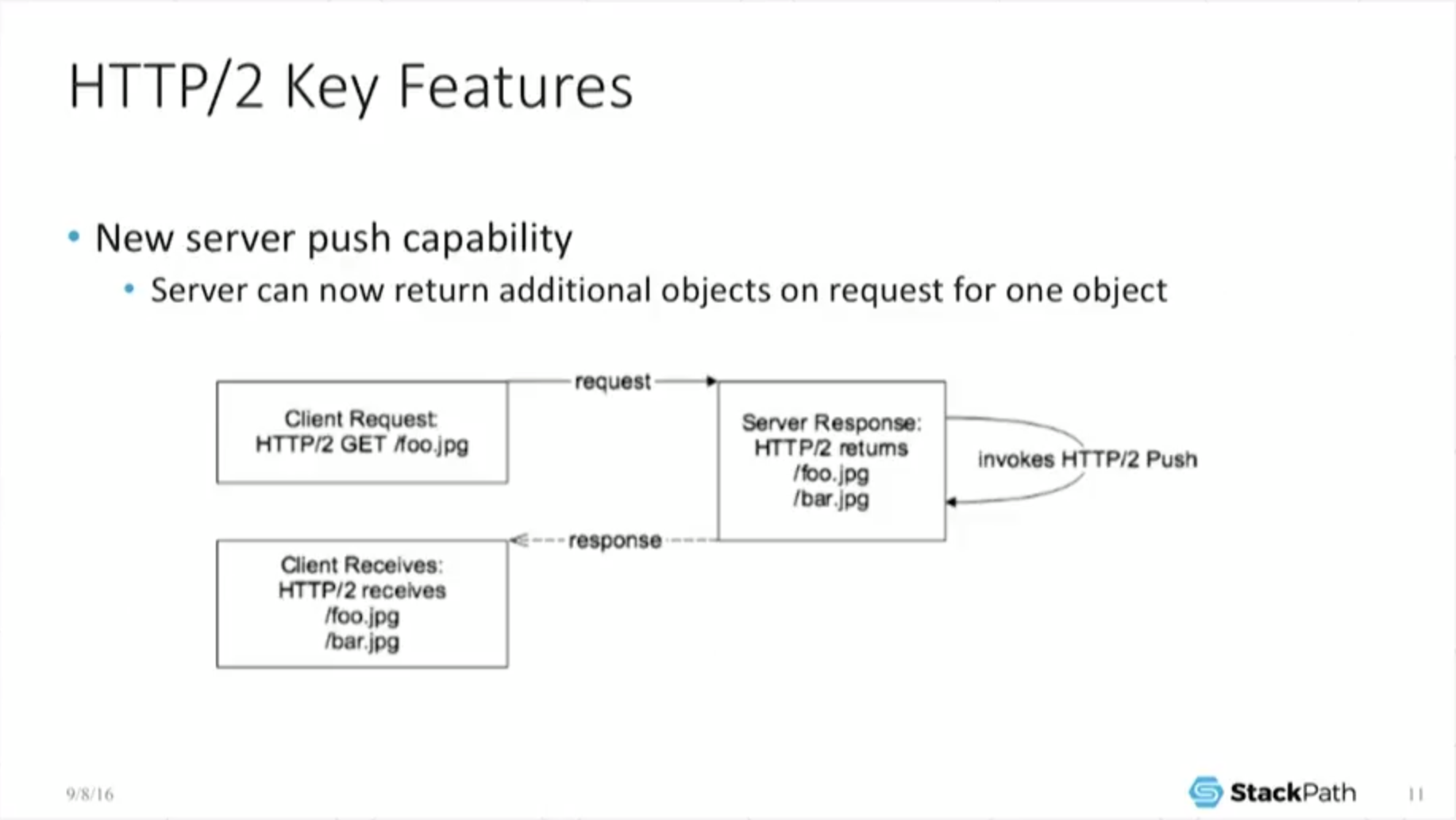

So in addition to all the sort of interleaving, we have another fun thing we can do: server push. And if I’m a content provider – I’m a cache – I love server push because … if I have a request come in for an object … and I’ve done the appropriate data crunching, I know that people who have downloaded foo also want to download bar.

Well, I don’t have to wait for the request for bar; I can actually push that at the exact same time as I’m downloading foo. So now the browser receives two files, even though he only asked for one, and if I’m remotely clever in the backend, I figured out what [the user wants]. A lot of the current push implementations sort of assume that you know exactly what you’re doing and that you can predefine this in your configuration, and it’s not very dynamic.

This is going to change in the future as this becomes a little bit more evolved, and this is one of the places where a lot of the providers are going to start differentiating themselves and offering a greater level of value.

11:04 Key Features: Header Compression

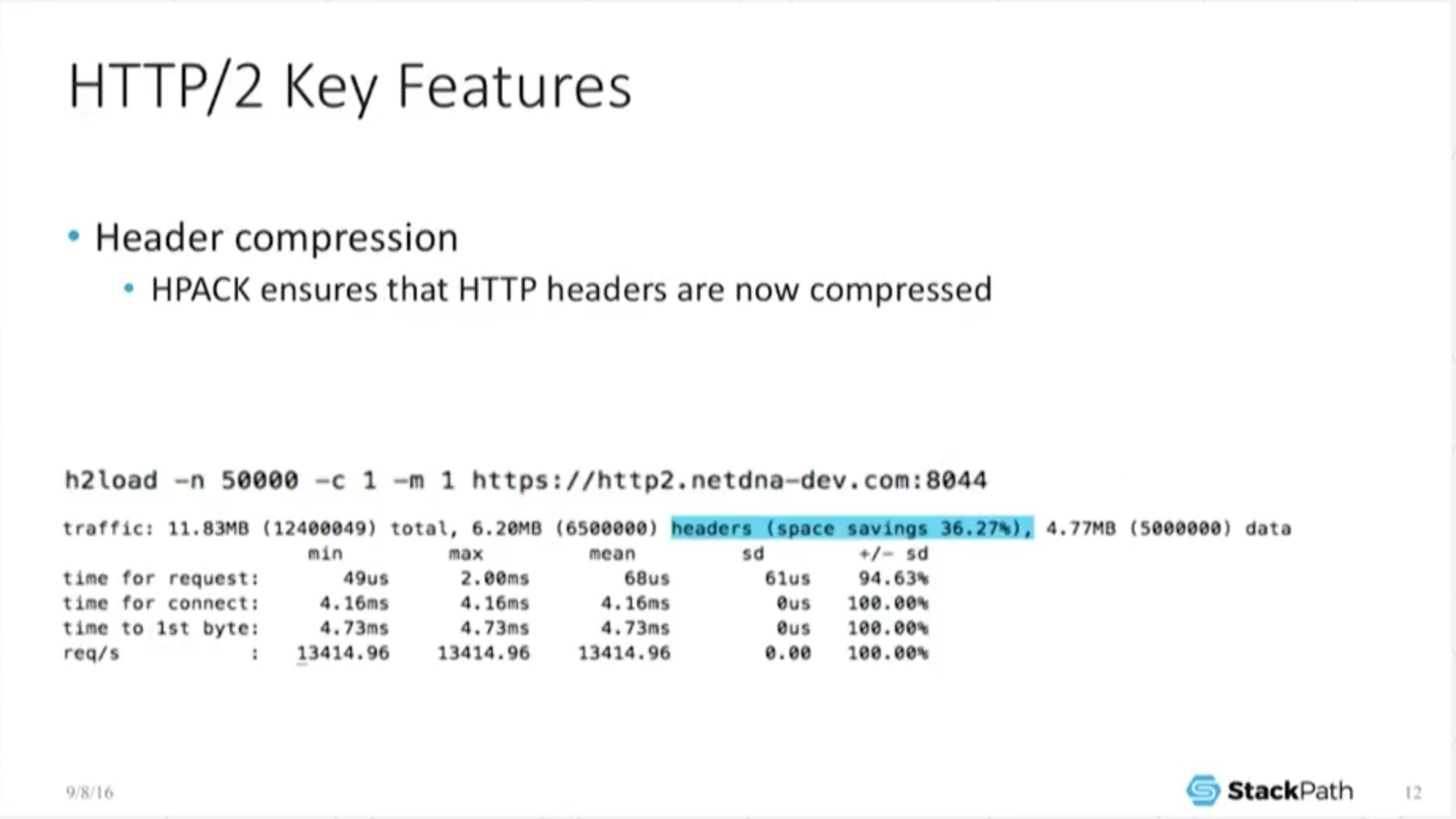

What else does it do? Well, it also does header compression. Anyone who’s familiar with HTTP objects [knows] there are a lot of perils to using custom headers and shoveling huge amounts of data into the header section because under H1, that’s uncompressed data – so all you’ve done is increase the amount of bandwidth required to [do a] download.

For what is basically metadata, if you can hide or encode it in some cleaner or easier way, so much the better. Well, that largely goes away under H2. Sure, it can still be a bottleneck, but because [headers] are now compressed, you can actually start shoveling more stuff in there and not have to worry as much as you did under [HTTP/1].

So this should actually start changing the way you think about a lot of application development. Now you can start making decisions on whatever it is – custom data, metadata – you’ve chosen to shovel into the headers, because you no longer pay such a huge penalty shipping them around.

So again, as a cache, am I happy about this? No, because I bill for bandwidth. Why would I want this?
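[Editor – The Python sketch below illustrates only the indexing idea behind HTTP/2 header compression (HPACK, RFC 7541): a header pair that has already been sent can later be replaced by a short table reference. It is a toy model, not the HPACK wire format. It has no static table, no Huffman coding, and no table eviction, and the class name, header values, and size estimate are all assumptions made for illustration.]

```python
class ToyHeaderTable:
    """Toy model of an indexed header table; NOT the real HPACK encoding."""

    def __init__(self):
        self.index = {}  # (name, value) -> small integer

    def encode(self, headers):
        """Return tokens: an int for a pair seen before, the pair itself otherwise."""
        out = []
        for pair in headers:
            if pair in self.index:
                out.append(self.index[pair])  # repeat: send only the index
            else:
                self.index[pair] = len(self.index)
                out.append(pair)  # first sighting: send the literal pair
        return out


def rough_size(tokens):
    """Approximate bytes on the wire: 1 per index, name + value length per literal."""
    return sum(1 if isinstance(t, int) else len(t[0]) + len(t[1]) for t in tokens)


# Hypothetical headers repeated on every request over one connection.
headers = [
    ("user-agent", "ExampleBrowser/1.0"),
    ("cookie", "session=abcdef0123456789"),
    ("x-custom-metadata", "tenant=42;region=us-east"),
]
table = ToyHeaderTable()
first = rough_size(table.encode(headers))
second = rough_size(table.encode(headers))
print(first, second)
```

In this model the second request costs one byte per header instead of the full text, which is why large custom headers hurt less when they repeat across requests on the same connection.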

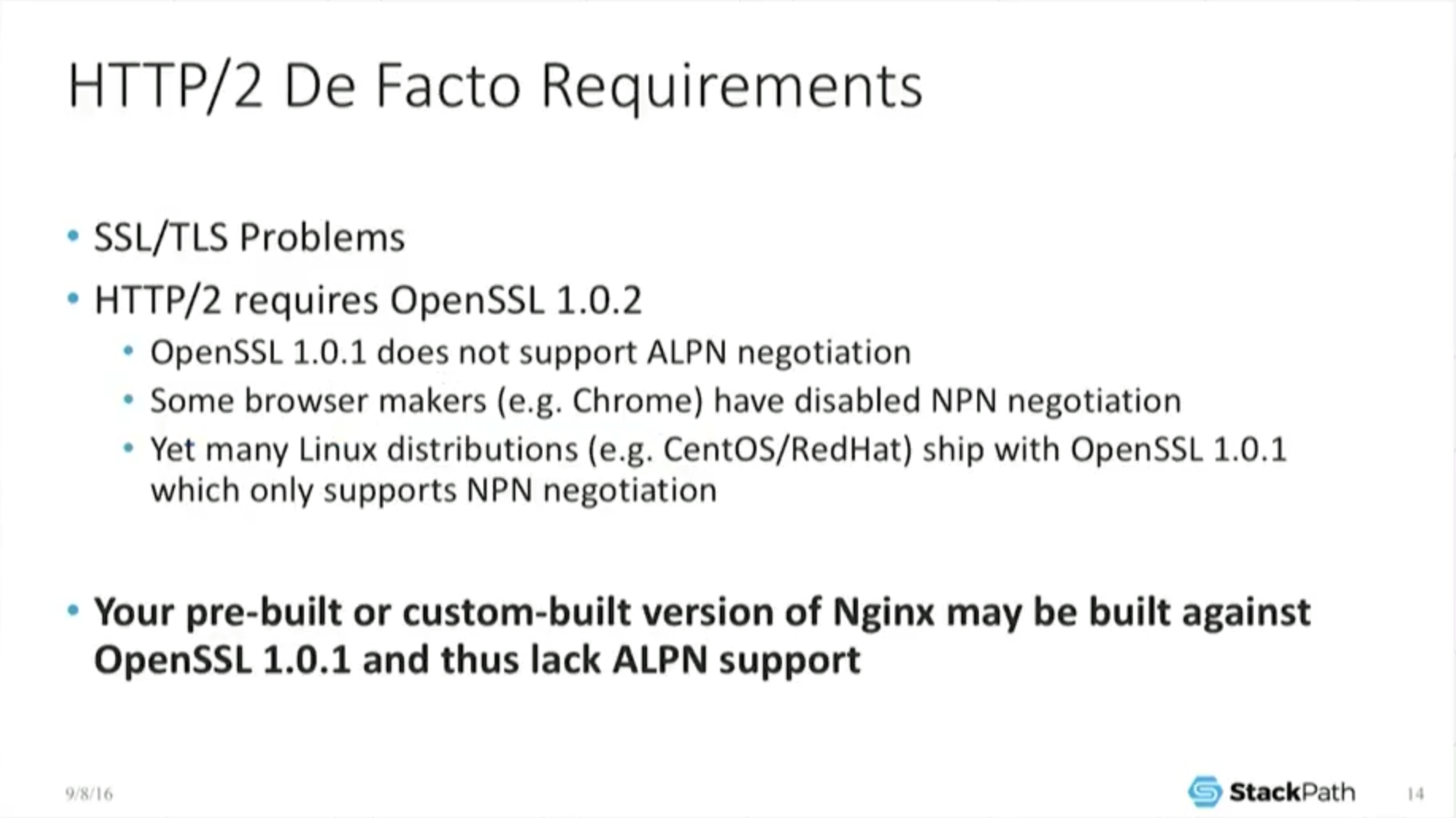

11:58 HTTP/2 De Facto Requirements

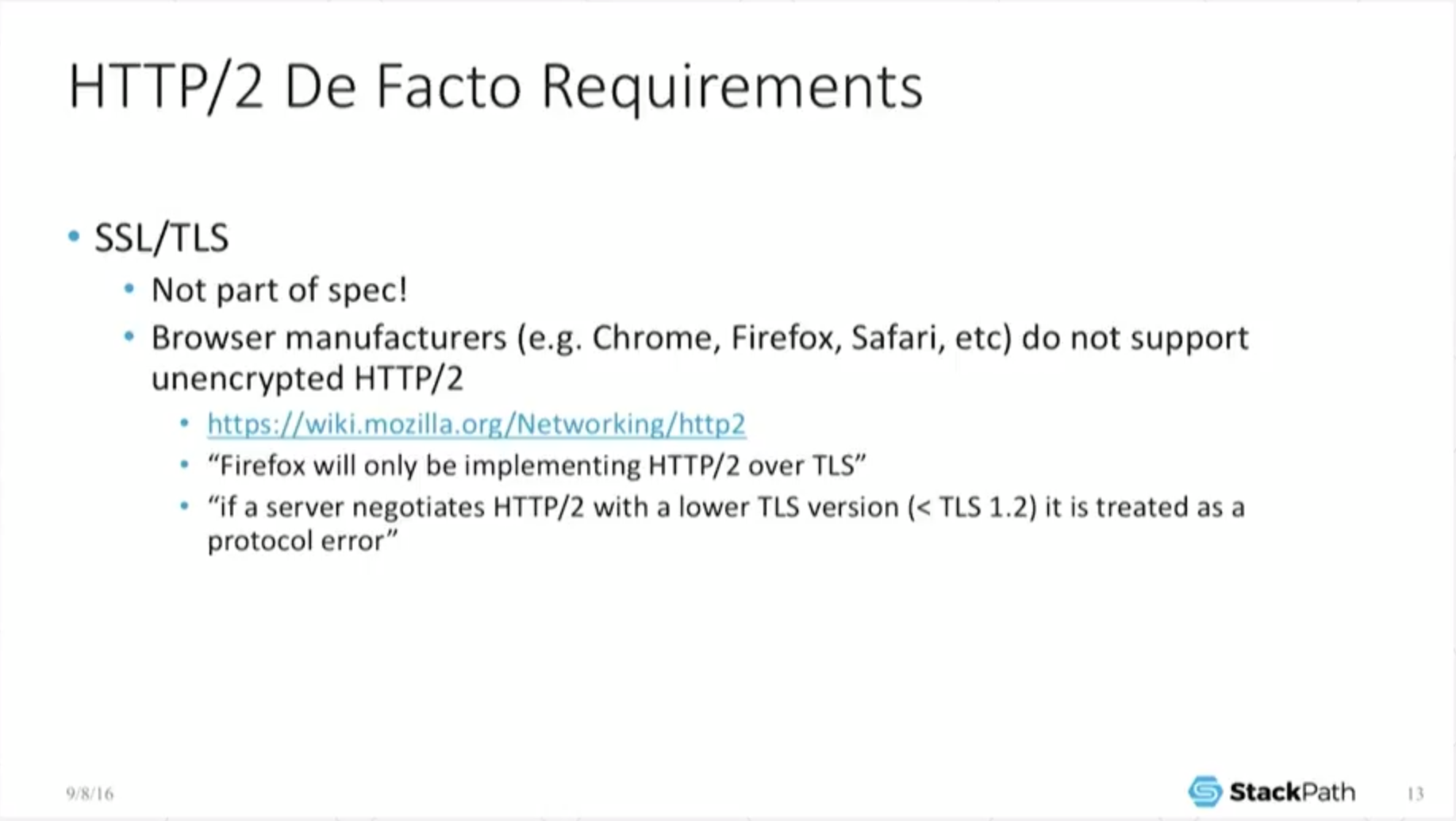

Okay, the real downside to this is that what wound up happening is: in the formal spec nobody could agree on whether to force SSL encryption or not. So all the browser manufacturers got together and decided we’re just not going to support unencrypted H2. So [encryption is] a de facto requirement. It is not part of the spec.

But if you intend to support it [HTTP/2], you have to do it [encryption] anyway. This makes the configuration a little bit more difficult, a little bit different, because now you’re stuck dealing with SSL even for pictures of cats or other things which may not necessarily require SSL encryption. It gets a little bit worse than that, and I quote from [and provide a link to] Mozilla, and you can read that yourself, of course.

It’s not only that Firefox and other major browsers will only be implementing H2 over TLS. [The browsers also have implemented that] if the server negotiates H2 with a TLS version lower than 1.2, that is treated as a protocol error. So now you can’t support TLS 1.1 and expect your Firefox users to be able to negotiate an H2 connection to you.

Now that’s a protocol error, and of course it’s going to be different per browser. Some browsers will let you do it, some won’t.

Now it gets a little bit stranger than that … back in the SPDY days, SPDY implemented something called NPN, Next Protocol Negotiation (that’ll be the next slide). Unfortunately, [support for NPN is] bundled in OpenSSL 1.0.1, but H2 is unhappy with just NPN. Again, that’s not part of the spec – one of the browser manufacturers decided that they want a different protocol [ALPN, which is supported only in OpenSSL 1.0.2 and later].

Why is this a big deal? Well, most Linux distributions – like Red Hat, CentOS, even CentOS 7 – ship with OpenSSL 1.0.1. So, your prebuilt or custom‑built version of NGINX may be built against an old version of OpenSSL and thus lack the needed ALPN support which HTTP/2 de facto requires for some browsers.

[Editor – For more details about the effects of this requirement, see Supporting HTTP/2 for Website Visitors on our blog.]
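[Editor – One way to see which side of the ALPN line a given OpenSSL build falls on is to ask a program linked against it. The sketch below uses Python's standard `ssl` module purely as an illustration. It reports on the OpenSSL that Python was built with, not the one NGINX was built with, and the helper names are assumptions. For NGINX itself, the analogous check is the OpenSSL version reported by `nginx -V`.]

```python
import ssl


def describe_tls_support() -> dict:
    """Report what the OpenSSL linked into this Python offers for HTTP/2."""
    return {
        "openssl_version": ssl.OPENSSL_VERSION,
        "alpn": ssl.HAS_ALPN,  # True when the linked library supports ALPN
    }


def make_h2_server_context() -> ssl.SSLContext:
    """Build a server-side context that refuses TLS below 1.2 and offers h2 via ALPN.

    A real server would also call load_cert_chain(); that is omitted here.
    """
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    if ssl.HAS_ALPN:
        # Listed in order of preference: HTTP/2 first, HTTP/1.1 as the fallback.
        ctx.set_alpn_protocols(["h2", "http/1.1"])
    return ctx


print(describe_tls_support())
ctx = make_h2_server_context()
print(ctx.minimum_version)
```

If `alpn` comes back `False`, the linked OpenSSL predates ALPN support, which is the situation described above for builds against OpenSSL 1.0.1.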

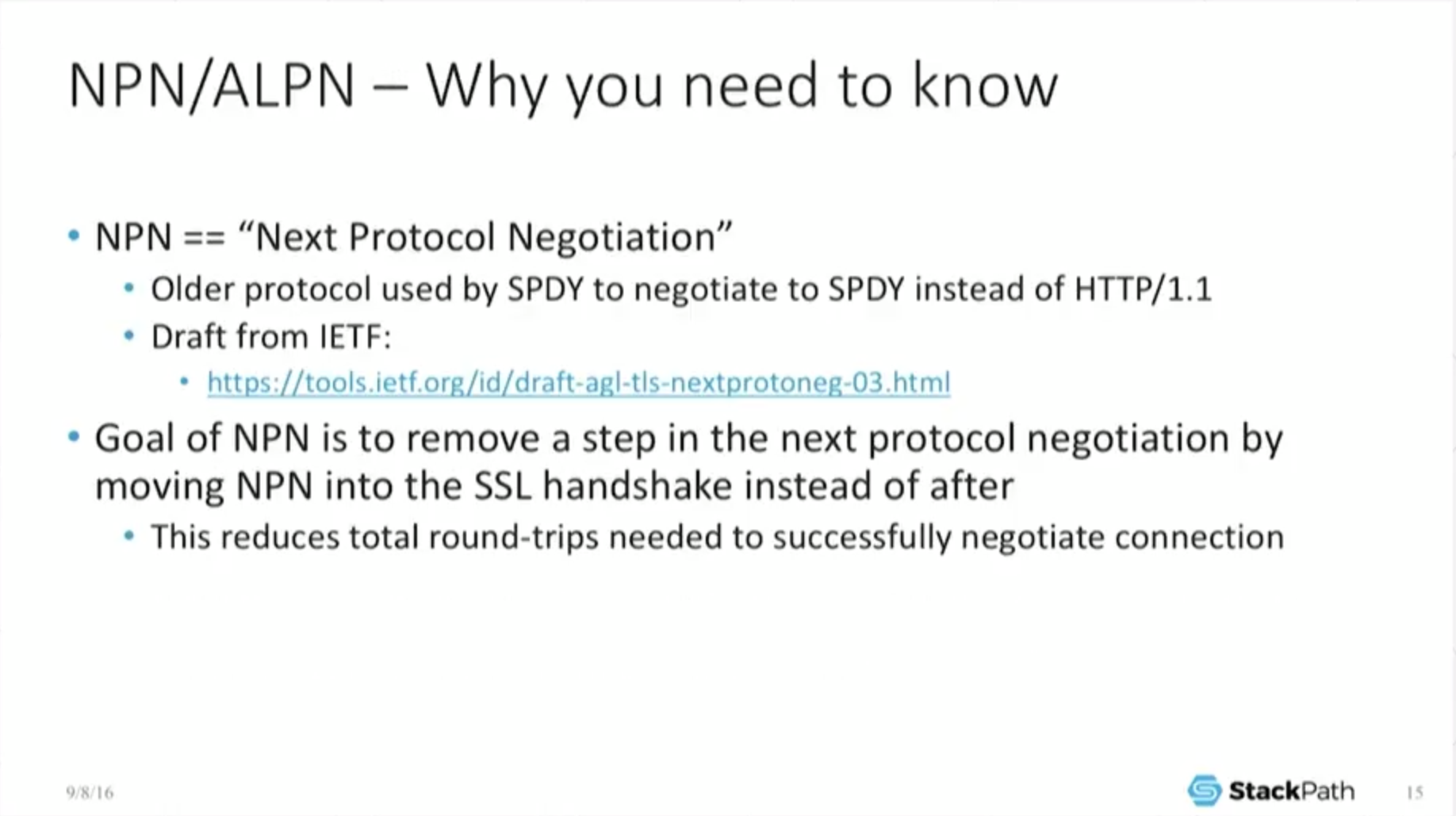

13:56 NPN/ALPN – Why You Need to Know

So NPN, Next Protocol Negotiation, had a very noble beginning. [It was created in recognition of] one of the problems…[with] SSL: …overhead. It takes a while to negotiate it, and the goal was to remove a step in the protocol negotiation by moving the next protocol negotiation into the SSL handshake itself, thus saving at least one, and possibly more, round trips.

So this was done for performance reasons. It’s not just [that NPN’s creators] felt like throwing in additional complexity for its own sake. So SPDY, the earlier protocol, relied on NPN to do [protocol negotiation], but when H2 came along, it was recognized that NPN’s scope was way too narrow. It only applied basically to…SPDY.

What they wanted was something that was much more general, that you could apply to any possible application that may choose to use this in the future, hence Application Layer Protocol Negotiation, which is what ALPN actually stands for.

And [on the slide], I just have the abbreviated handshake, real quick, just to show how it works.
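[Editor – The selection step inside that handshake can be sketched in a few lines of Python. Under ALPN the client lists the protocols it speaks in its ClientHello and the server picks one and returns it in its ServerHello. The function below assumes the server chooses by its own preference order, which is a common policy but not the only possible one, and the function name is hypothetical.]

```python
def select_protocol(client_offers, server_preferences):
    """Return the first server-preferred protocol the client also offered, else None."""
    offered = set(client_offers)
    for proto in server_preferences:
        if proto in offered:
            return proto
    return None  # no overlap between client and server


# A browser that speaks HTTP/2 negotiates h2.
print(select_protocol(["h2", "http/1.1"], ["h2", "http/1.1"]))  # h2

# An older client that offers only HTTP/1.1 falls back cleanly.
print(select_protocol(["http/1.1"], ["h2", "http/1.1"]))  # http/1.1
```

Because the choice rides along in messages the TLS handshake already exchanges, agreeing on the protocol costs no extra round trip.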

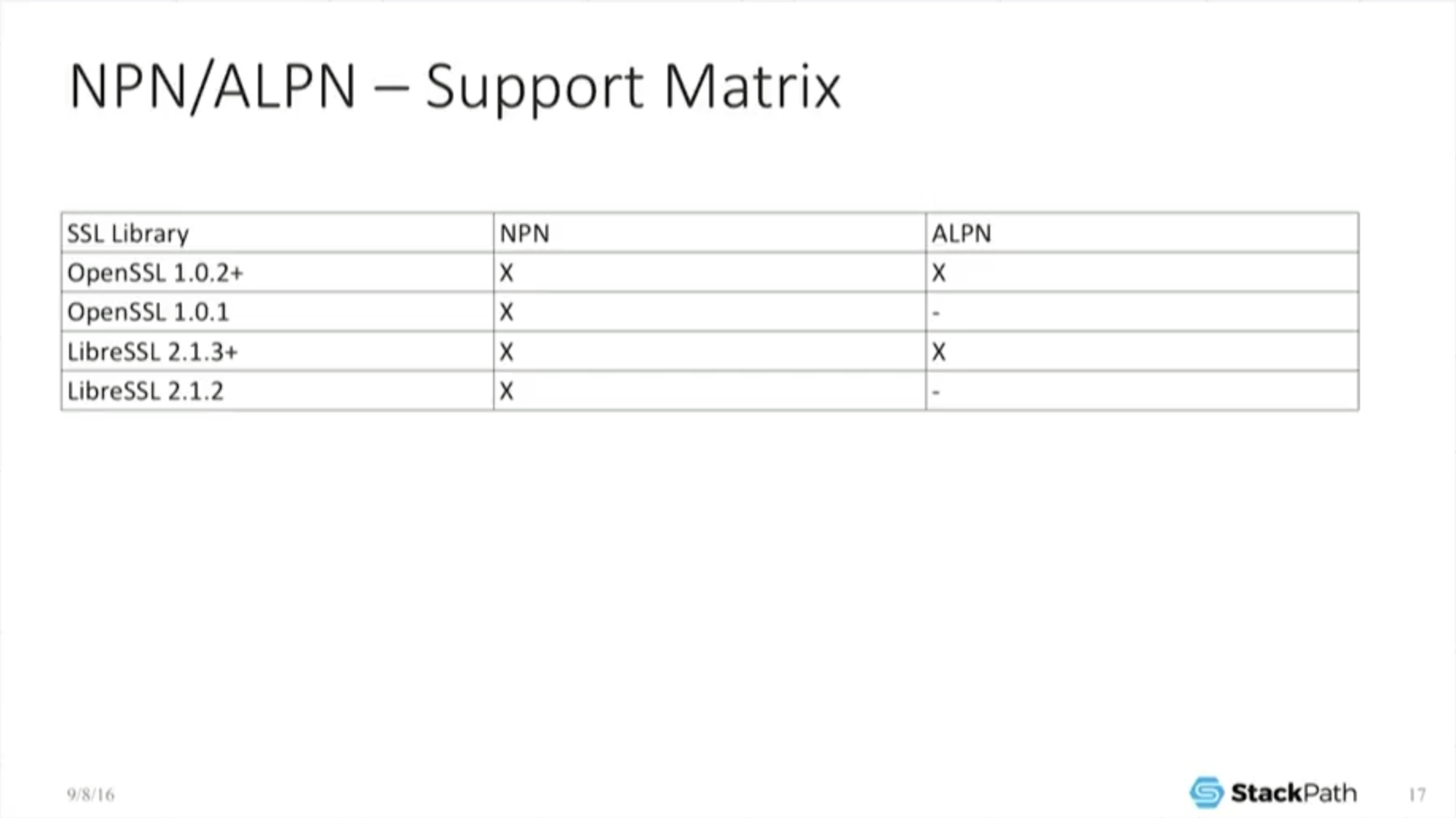

14:55 NPN/ALPN – Support Matrix

The IETF spec associated with NPN is [on the previous slide]. Since I know that a lot of people will be just looking at the slides later, I included the support matrix [on this slide] just to make it clear: you need the newer version of OpenSSL.
