Editor – This post is part of a 10-part series:

- Reduce Complexity with Production-Grade Kubernetes

- How to Improve Resilience in Kubernetes with Advanced Traffic Management

- How to Improve Visibility in Kubernetes

- Six Ways to Secure Kubernetes Using Traffic Management Tools

- A Guide to Choosing an Ingress Controller, Part 1: Identify Your Requirements

- A Guide to Choosing an Ingress Controller, Part 2: Risks and Future-Proofing

- A Guide to Choosing an Ingress Controller, Part 3: Open Source vs. Default vs. Commercial

- A Guide to Choosing an Ingress Controller, Part 4: NGINX Ingress Controller Options (this post)

- How to Choose a Service Mesh

- Performance Testing NGINX Ingress Controllers in a Dynamic Kubernetes Cloud Environment

You can also download the complete set of blogs as a free eBook – Taking Kubernetes from Test to Production.

According to the Cloud Native Computing Foundation’s (CNCF) Survey 2020, NGINX is the most commonly used data plane in Ingress controllers for Kubernetes – but did you know there’s more than one “NGINX Ingress Controller”?

A previous version of this blog, published in 2018 under the title Wait, Which NGINX Ingress Controller for Kubernetes Am I Using?, was prompted by a conversation with a community member about the existence of two popular Ingress controllers that use NGINX.

It’s easy to see why there was (and still is) confusion. Both Ingress controllers are:

- Called “NGINX Ingress Controller”

- Open source

- Hosted on GitHub with very similar repo names

- The result of projects that started around the same time

And of course the biggest commonality is that they implement the same function.

NGINX vs. Kubernetes Community Ingress Controller

For the sake of clarity, we differentiate the two versions like this:

- Community version – Found in the kubernetes/ingress-nginx repo on GitHub, the community Ingress controller is based on NGINX Open Source with docs on Kubernetes.io. It is maintained by the Kubernetes community.

- NGINX version – Found in the nginxinc/kubernetes-ingress repo on GitHub, NGINX Ingress Controller is developed and maintained by F5 NGINX with docs on docs.nginx.com. It is available in two editions:

- NGINX Open Source‑based (free and open source option)

- NGINX Plus-based (commercial option)

There are also a number of other Ingress controllers based on NGINX, such as Kong, but fortunately their names are easily distinguished. If you’re not sure which NGINX Ingress Controller you’re using, check the container image of the running Ingress controller, then compare the Docker image name with the repos listed above.
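For example, you can list the images your pods run with `kubectl get pods --all-namespaces -o jsonpath='{.items[*].spec.containers[*].image}'` and then compare each name against the repos above. The Python sketch below automates that comparison for a single image reference. It is a heuristic, not an official tool. The image paths it recognizes (`ingress-nginx/controller` for the community version, and `nginx/nginx-ingress` and `nginx-plus-ingress` for the two NGINX editions) are assumptions based on commonly published image locations. Private mirrors or renamed images will come back as `unknown`, and you should check those by hand.

```python
def identify_ingress_controller(image: str) -> str:
    """Guess which Ingress controller project a container image belongs to.

    Returns "community", "nginx-oss", "nginx-plus", or "unknown". The path
    patterns checked here are assumptions, not an exhaustive list.
    """
    # Drop a digest ("@sha256:...") if present.
    name = image.split("@", 1)[0]
    # Drop a tag (":v1.2.3"), but not a registry port such as "localhost:5000/".
    if name.rfind(":") > name.rfind("/"):
        name = name[: name.rfind(":")]
    parts = name.lower().split("/")

    if parts[-2:] == ["ingress-nginx", "controller"]:
        return "community"    # kubernetes/ingress-nginx
    if parts[-1] == "nginx-plus-ingress":
        return "nginx-plus"   # nginxinc/kubernetes-ingress, NGINX Plus-based
    if parts[-2:] == ["nginx", "nginx-ingress"]:
        return "nginx-oss"    # nginxinc/kubernetes-ingress, NGINX Open Source-based
    return "unknown"


for image in (
    "registry.k8s.io/ingress-nginx/controller:v1.9.4",
    "nginx/nginx-ingress:3.3.2",
    "private-registry.nginx.com/nginx-ic/nginx-plus-ingress:3.3.2",
):
    print(image, "->", identify_ingress_controller(image))
```

Matching on the last path components rather than the full string keeps the check working regardless of which registry or mirror hosts the image.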

NGINX Ingress Controller Goals and Priorities

One primary difference between NGINX Ingress Controller and the community Ingress controller (along with other Ingress controllers based on NGINX Open Source) lies in their development and deployment models, which in turn reflect differing goals and priorities.

- Development philosophy – Our top priority for all NGINX projects and products is to deliver a fast, lightweight tool with long‑term stability and consistency. We make every possible effort to avoid changes in behavior between releases, particularly any that break backward compatibility. We promise you won’t see any surprises when you upgrade. We also believe in choice, so all our solutions can be deployed on any platform including bare metal, containers, VMs, and public, private, and hybrid clouds.

- Integrated codebase – NGINX Ingress Controller uses a 100% pure NGINX Open Source or NGINX Plus instance for load balancing, applying best‑practice configuration using native NGINX capabilities alone. It doesn’t rely on any third‑party modules or Lua code that have not benefited from our interoperability testing. We don’t assemble our Ingress controller from lots of third‑party repos; we develop and maintain the load balancer (NGINX and NGINX Plus) and Ingress controller software (a Go application) ourselves. We are the single authority for all components of our Ingress controller.

- Advanced traffic management – One of the limitations of the standard Kubernetes Ingress resource is that you must rely on auxiliary mechanisms like annotations, ConfigMaps, and custom templates to add advanced capabilities. NGINX Ingress Resources provide a native, type‑safe, and indented configuration style that simplifies implementation of Ingress load‑balancing capabilities, including TCP/UDP, circuit breaking, A/B testing, blue‑green deployments, header manipulation, mutual TLS authentication (mTLS), and web application firewall (WAF).

- Continual production readiness – Every release is built and maintained to a supportable, production standard. You benefit from this “enterprise‑grade” focus equally whether you’re using the NGINX Open Source‑based or NGINX Plus-based edition. NGINX Open Source users can get their questions answered on GitHub by our engineering team, while NGINX Plus subscribers get best-in-class support. Either way it’s like having an NGINX developer on your DevOps team!
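To illustrate what a type‑safe configuration style buys you over free‑form annotations, here is a hypothetical Python model of a weighted traffic split, the building block of A/B testing and blue‑green deployments. It is not the actual NGINX Ingress Resources schema. The class and field names are illustrative, and the rule that weights must sum to 100 is our assumption, modeled on how VirtualServer splits are documented. The point is that a typed structure can reject a bad configuration the moment it is defined, whereas an annotation is just a string that the controller has to parse and interpret later.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Split:
    """One weighted branch of a traffic split (hypothetical model)."""
    weight: int     # percentage of requests sent to this branch, 0-100
    upstream: str   # name of the backend service that receives them


@dataclass(frozen=True)
class TrafficSplit:
    """A set of branches whose weights must add up to exactly 100."""
    splits: List[Split]

    def __post_init__(self) -> None:
        # Validate when the object is created, the way a schema check would,
        # instead of discovering a typo in an annotation string at reload time.
        if not self.splits:
            raise ValueError("at least one split is required")
        for s in self.splits:
            if isinstance(s.weight, bool) or not isinstance(s.weight, int) \
                    or not 0 <= s.weight <= 100:
                raise ValueError(f"invalid weight {s.weight!r} for {s.upstream}")
        total = sum(s.weight for s in self.splits)
        if total != 100:
            raise ValueError(f"split weights must sum to 100, got {total}")


# A 90/10 canary: valid, so construction succeeds.
canary = TrafficSplit([Split(weight=90, upstream="app-v1"),
                       Split(weight=10, upstream="app-v2")])
```

Changing the second weight to 20 would raise `ValueError` immediately, which is the kind of early feedback a typed resource gives you and an annotation cannot.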

NGINX Open Source vs. NGINX Plus – Why Upgrade to Our Commercial Edition?

And while we’re here, let’s review some of the key benefits you get from the NGINX Plus-based NGINX Ingress Controller. As we discussed in A Guide to Choosing an Ingress Controller, Part 3: Open Source vs. Default vs. Commercial, there are substantial differences between open source and commercial Ingress controllers. If you’re planning for large Kubernetes deployments and complex apps in production, you’ll find our commercial Ingress controller saves you time and money in some key areas.

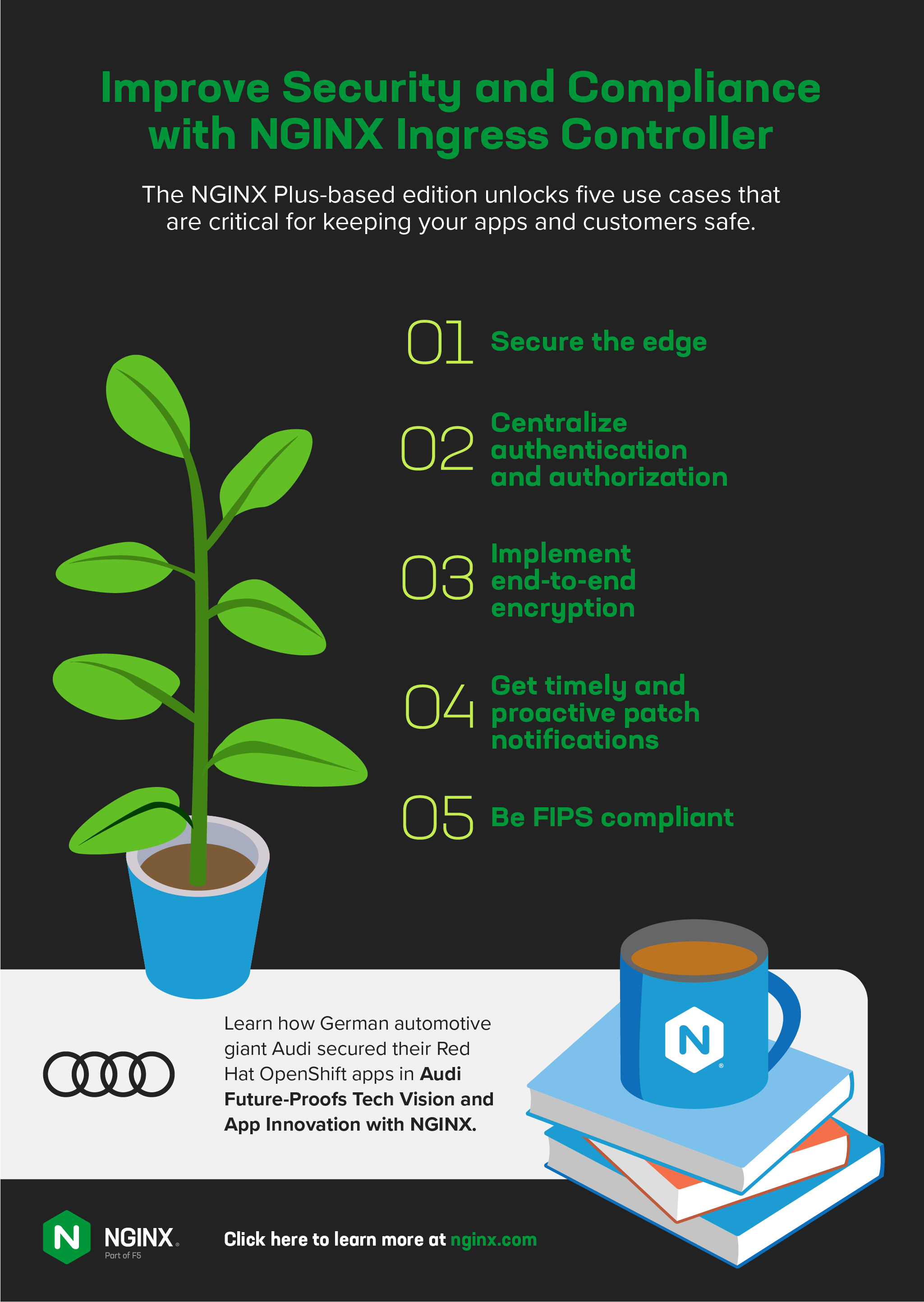

Security and Compliance

One of the main reasons many organizations fail to deliver Kubernetes apps in production is the difficulty of keeping them secure and compliant. The NGINX Plus-based NGINX Ingress Controller unlocks five use cases that are critical for keeping your apps and customers safe.

- Secure the edge – In a well‑architected Kubernetes deployment, the Ingress controller is the only point of entry for data plane traffic flowing to services running within Kubernetes, making it an ideal location for a web application firewall (WAF). NGINX App Protect integrates with NGINX Ingress Controller to protect your Kubernetes apps against the OWASP Top 10 and many other vulnerabilities, ensures PCI DSS compliance, and outperforms ModSecurity.

- Centralize authentication and authorization – You can implement an authentication and single sign‑on (SSO) layer at the point of ingress with OpenID Connect (OIDC) – built on top of the OAuth 2.0 framework – and JSON Web Token (JWT) authentication.

- Implement end-to-end encryption – When you need to secure traffic between services, you’re probably going to look for a service mesh. The always‑free NGINX Service Mesh integrates seamlessly with NGINX Ingress Controller, letting you control both ingress and egress mTLS traffic efficiently, with less latency than other meshes.

- Get timely and proactive patch notifications – When CVEs are reported, subscribers are proactively informed and get patches quickly. They can apply the patches right away to reduce the risk of exploitation, rather than having to watch for updates on GitHub or wait weeks (even months) for a patch to be released.

- Be FIPS compliant – You can enable FIPS mode to ensure clients talking to NGINX Plus are using a strong cipher with a trusted implementation.
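To make the JWT part of centralized authentication concrete, the sketch below shows the core checks an ingress point performs before forwarding a request: verify the token's signature, then reject it once it has expired. It is a minimal standard‑library Python illustration that supports only HS256 with a shared secret, which is our simplifying assumption. It is not how NGINX Plus implements JWT validation (that is configured declaratively with its `auth_jwt` module), and it omits OIDC, key rotation, and audience or issuer checks.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import Optional


def _b64url_decode(segment: str) -> bytes:
    # JWT segments are unpadded base64url, so restore the padding first.
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def verify_hs256_jwt(token: str, secret: bytes, now: Optional[float] = None) -> dict:
    """Return the token's claims if its HS256 signature and expiry are valid.

    Raises ValueError otherwise. Only HS256 is supported in this sketch.
    """
    parts = token.split(".")
    if len(parts) != 3:
        raise ValueError("a JWT must have three dot-separated segments")
    header_b64, payload_b64, signature_b64 = parts

    header = json.loads(_b64url_decode(header_b64))
    if not isinstance(header, dict) or header.get("alg") != "HS256":
        # Never let the token choose its own algorithm (for example "none").
        raise ValueError("unsupported or missing alg")

    # The signature covers the literal "header.payload" text as received.
    signing_input = f"{header_b64}.{payload_b64}".encode("ascii")
    expected = hmac.new(secret, signing_input, hashlib.sha256).digest()
    if not hmac.compare_digest(expected, _b64url_decode(signature_b64)):
        raise ValueError("signature mismatch")

    claims = json.loads(_b64url_decode(payload_b64))
    current = time.time() if now is None else now
    # "exp" is a NumericDate (seconds since the epoch) per RFC 7519.
    if "exp" in claims and current >= claims["exp"]:
        raise ValueError("token has expired")
    return claims
```

Because every request passes through the Ingress controller, running this check once at the edge means the services behind it don't each need their own copy of the logic.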

Learn how German automotive giant Audi secured their Red Hat OpenShift apps in Audi Future‑Proofs Tech Vision and App Innovation with NGINX.

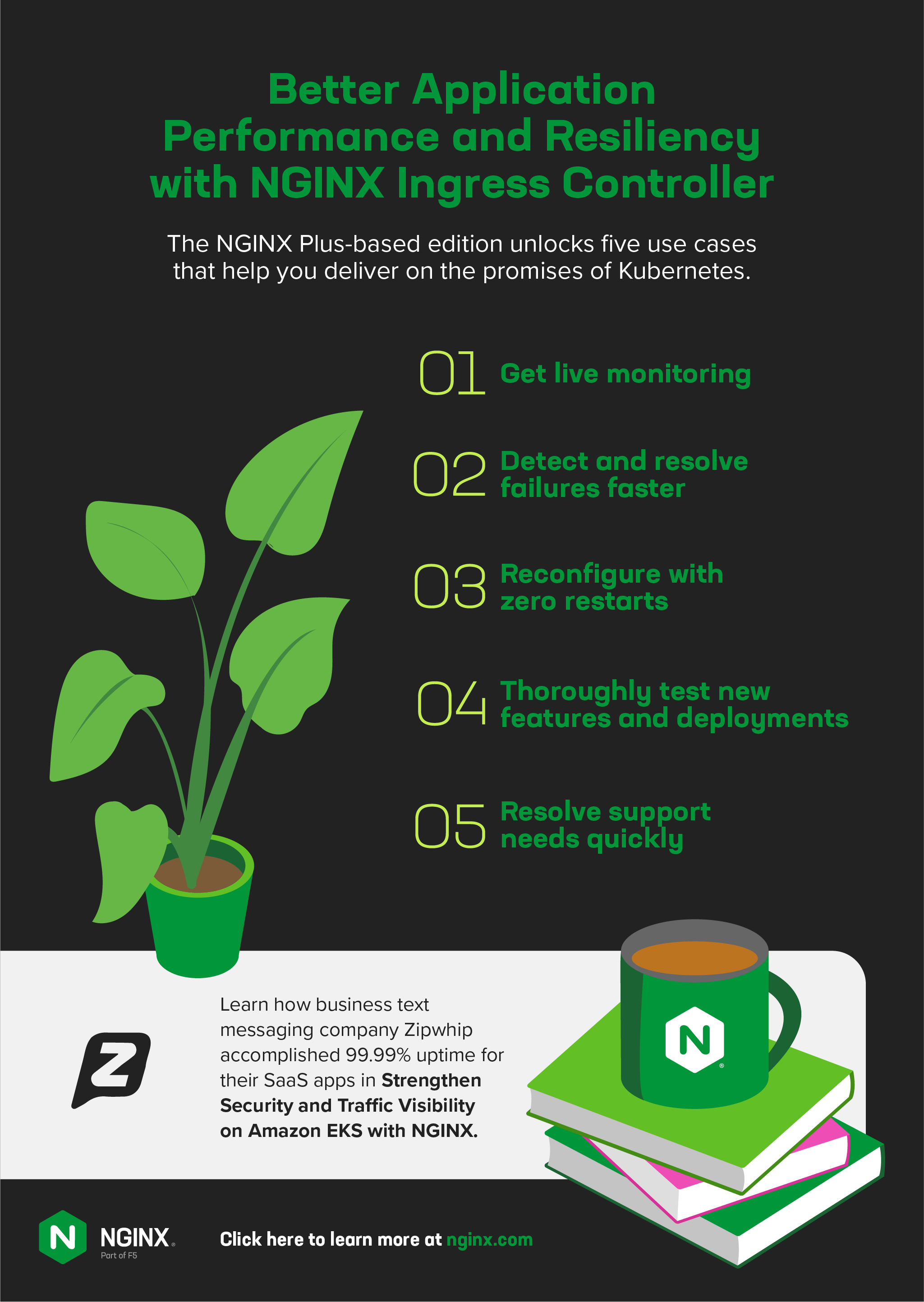

Application Performance and Resiliency

Uptime and app speed are often key performance indicators (KPIs) for developers and Platform Ops teams. The NGINX Plus-based NGINX Ingress Controller unlocks five use cases that help you deliver on the promises of Kubernetes.

- Get live monitoring – The NGINX Plus dashboard displays hundreds of key load and performance metrics so you can quickly troubleshoot the cause of slow (or down!) apps.

- Detect and resolve failures faster – Implement a circuit breaker with active health checks that proactively monitor the health of your TCP and UDP upstream servers.

- Reconfigure with zero restarts – Faster, non‑disruptive reconfiguration lets you deliver applications with consistent performance and resource usage, and with lower latency than open source alternatives.

- Thoroughly test new features and deployments – Make A/B testing and blue‑green deployments easier to execute by leveraging the key‑value store to change the percentages without the need for reloads.

- Resolve support needs quickly – Confidential, commercial support is essential for organizations that can’t wait for the community to answer questions or can’t risk exposure of sensitive data. NGINX support is available in multiple tiers to fit your needs, and covers assistance with installation, deployment, debugging, and error correction. You can even get help when something just doesn’t seem “right”.
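In NGINX Plus, active health checks are configured with the `health_check` directive, whose `fails` and `passes` parameters set how many consecutive failed or passed checks mark a server unhealthy or healthy again. The Python sketch below models that state machine to show how an active health check acts as a circuit breaker. It is illustrative only: the `probe` callable is a hypothetical stand‑in for a real TCP, UDP, or HTTP check, and this is not how NGINX Plus implements health checks internally.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class UpstreamHealth:
    """Health state of one upstream server, driven by consecutive probe results."""
    fails: int = 1   # consecutive failed probes before the server is marked unhealthy
    passes: int = 1  # consecutive passed probes before it is marked healthy again
    healthy: bool = True
    _fail_streak: int = field(default=0, init=False, repr=False)
    _pass_streak: int = field(default=0, init=False, repr=False)

    def record(self, probe_ok: bool) -> None:
        if probe_ok:
            self._pass_streak += 1
            self._fail_streak = 0
            if not self.healthy and self._pass_streak >= self.passes:
                self.healthy = True   # circuit closes: traffic resumes
        else:
            self._fail_streak += 1
            self._pass_streak = 0
            if self.healthy and self._fail_streak >= self.fails:
                self.healthy = False  # circuit opens: server leaves the rotation


def run_probe_round(servers: Dict[str, UpstreamHealth],
                    probe: Callable[[str], bool]) -> List[str]:
    """Probe each server once and return the addresses still eligible for traffic."""
    for address, state in servers.items():
        state.record(probe(address))
    return [address for address, state in servers.items() if state.healthy]
```

With `fails=2`, a single dropped probe doesn't take a server out of rotation, and with `passes=2`, a flapping server has to succeed twice in a row before it gets traffic again. The trade-off is a slightly slower reaction to a genuine outage.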

Learn how business text messaging company Zipwhip accomplished 99.99% uptime for their SaaS apps in Strengthen Security and Traffic Visibility on Amazon EKS with NGINX.

Next Step: Try NGINX Ingress Controller

If you’ve decided that an open source Ingress controller is the right choice for your apps, then you can get started quickly at our GitHub repo.

For large production deployments, we hope you’ll try our commercial Ingress controller based on NGINX Plus. It’s available for a 30-day free trial that includes NGINX App Protect.