Author’s note – This blog post is the first in a series:

- Introducing the NGINX Microservices Reference Architecture (this post)

- MRA, Part 2: The Proxy Model

- MRA, Part 3: The Router Mesh Model

- MRA, Part 4: The Fabric Model

- MRA, Part 5: Adapting the Twelve‑Factor App for Microservices

- MRA, Part 6: Implementing the Circuit Breaker Pattern with NGINX Plus

All six blog posts, plus a post about web frontends for microservices applications, have been collected into a free ebook.

Also check out these other NGINX resources about microservices:

- A very useful and popular series by Chris Richardson about microservices application design

- The Chris Richardson articles collected into a free ebook, with additional tips on implementing microservices with NGINX and NGINX Plus

- Other microservices blog posts

- Microservices webinars

- Microservices Solutions page

- Topic: Microservices

Introduction

NGINX has been involved in the microservices movement from the very beginning. The lightweight, high‑performance, and flexible nature of NGINX is a great fit for microservices.

The NGINX Docker image is the number one downloaded application image on Docker Hub, and most microservices platforms you find on the Web today include a demo that deploys NGINX in some form and connects to the welcome page.

Because we believe moving to microservices is crucial to the success of our customers, we at NGINX have launched a dedicated program to develop features and practices in support of this seismic shift in web application development and delivery. We also recognize that there are many different approaches to implementing microservices, many of them novel and specific to the needs of individual development teams. We think there is a need for models to make it easier for companies to develop and deliver their own microservices‑based applications.

With all this in mind, we here in NGINX Professional Services are developing the NGINX Microservices Reference Architecture (MRA) – a set of models that you can use to create your own microservices applications.

The MRA is made up of two components: a detailed description of each of the three Models, plus downloadable code that implements our example photo‑sharing program, Ingenious. The only difference among the three Models is the configuration code that’s used to configure NGINX Plus for each of them. This series of blog posts will provide overview descriptions of each of the Models; detailed descriptions, configuration code, and code for the Ingenious sample program will be made available later this year.

Our goal in building this Reference Architecture is threefold:

- To provide customers and the industry with ready‑to‑use blueprints for building microservices‑based systems, speeding – and improving – development

- To create a platform for testing new features in NGINX and NGINX Plus, whether developed internally or externally and distributed in the product core or as dynamic modules

- To help us understand partner systems and components so we can gain a holistic perspective on the microservices ecosystem

The Microservices Reference Architecture is also an important part of professional services offerings for NGINX customers. In the MRA, we use features common to both NGINX Open Source and NGINX Plus where possible, and NGINX Plus‑specific features where needed. NGINX Plus dependencies are stronger in the more complex models, as described below. We anticipate that many users of the MRA will benefit from access to NGINX professional services and to technical support, which comes with an NGINX Plus subscription.

An Overview of the Microservices Reference Architecture

We are building the Reference Architecture to be compliant with the principles of the Twelve‑Factor App. The services are designed to be lightweight, ephemeral, and stateless.

The MRA uses industry‑standard components such as Docker containers, a wide range of languages – Java, PHP, Python, Node.js/JavaScript, and Ruby – and NGINX‑based networking.

One of the biggest changes in application design and architecture when moving to microservices is using the network to communicate between functional components of the application. In monolithic apps, application components communicate in memory. In a microservices app, that communication happens over the network, so network design and implementation become critically important.

To reflect this, the MRA has been implemented using three different networking models, all of which use NGINX or NGINX Plus. They range from relatively simple to feature‑rich and more complex:

- Proxy Model – A simple networking model suitable for implementing NGINX Plus as a controller or API gateway for a microservices application. This model is built on top of Docker Cloud.

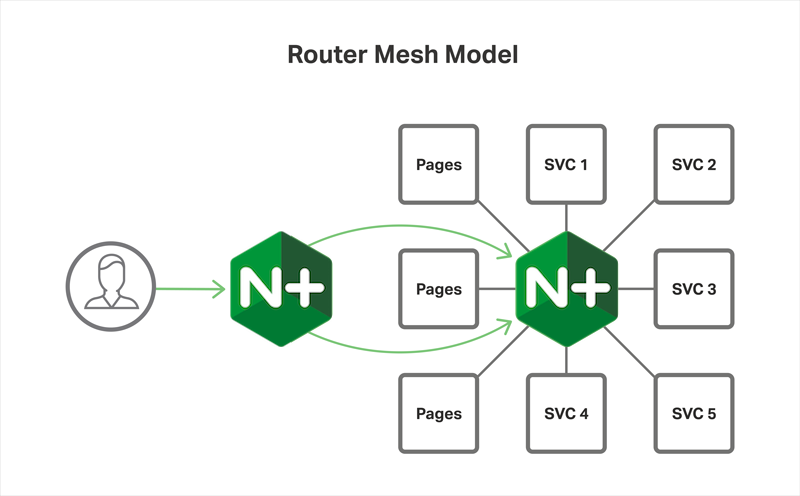

- Router Mesh Model – A more robust approach to networking, with a load balancer on each host and management of the connections between systems. This model is similar to the architecture of Deis 1.0.

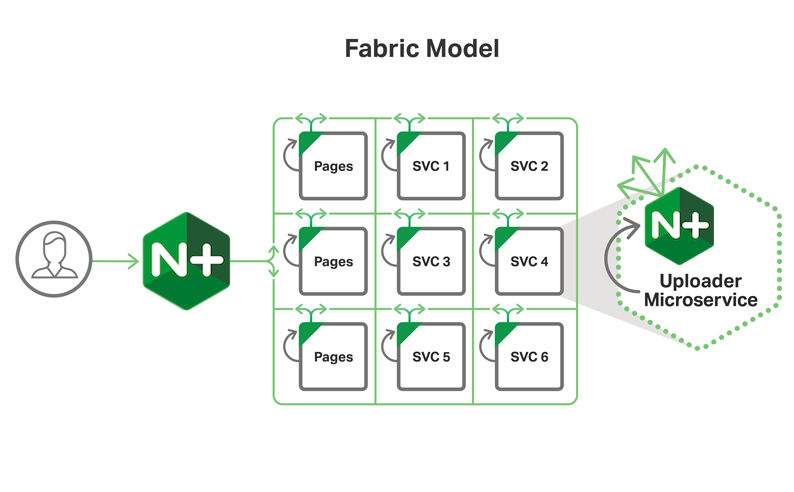

- Fabric Model – The crown jewel of the MRA, the Fabric Model features NGINX Plus in each container, handling all ingress and egress traffic. It works well for high‑load systems and supports SSL/TLS at all levels, with NGINX Plus providing reduced latency, persistent SSL/TLS connections, service discovery, and the circuit breaker pattern across all microservices.

Our intention is that you use these models as a starting point for your own microservices implementations, and we welcome feedback from you as to how to improve the MRA. (You can start by adding to the Comments below.)

A brief description of each model follows; we suggest you read all the descriptions to start getting an idea of how you might best use one or more of the models. Future blog posts will describe each of the models in detail, one per blog post.

The Proxy Model in Brief

The Proxy Model is a relatively simple networking model. It’s an excellent starting point for an initial microservices application, and a good target model when converting a moderately complex monolithic legacy app.

In the Proxy Model, NGINX or NGINX Plus acts as an ingress controller, routing requests to microservices. NGINX Plus can use dynamic DNS for service discovery as new services are created. The Proxy Model is also suitable for use as a template when using NGINX as an API gateway.

If interservice communication is needed – and most applications of any complexity need it – the service registry provides the mechanism within the cluster. (See this blog post for a detailed list of interservice communications mechanisms.) Docker Cloud uses this approach by default; to connect to another service, a service queries DNS and gets back an IP address to send its request to.
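The request flow described above can be sketched in a few lines of Python. This is only an illustration of the idea, not MRA code: the route table, the service names (`photo-service`, `user-service`), and the helper functions are hypothetical, and the resolver is injectable so the example runs without a real cluster DNS.

```python
import socket
from typing import Callable, Dict, Optional

# Hypothetical route table: URL path prefix -> DNS name of the service.
ROUTES: Dict[str, str] = {
    "/photos/": "photo-service",
    "/users/": "user-service",
}


def pick_service(path: str, routes: Dict[str, str] = ROUTES) -> Optional[str]:
    """Return the service for the longest matching path prefix, or None."""
    matches = [prefix for prefix in routes if path.startswith(prefix)]
    if not matches:
        return None
    return routes[max(matches, key=len)]


def resolve_backend(
    path: str,
    resolver: Callable[[str], str] = socket.gethostbyname,
    routes: Dict[str, str] = ROUTES,
) -> Optional[str]:
    """Map a request path to a service name, then ask DNS for its address."""
    service = pick_service(path, routes)
    if service is None:
        return None
    return resolver(service)


# Usage with a stand-in for the cluster DNS (addresses are made up):
fake_dns = {"photo-service": "10.0.0.5", "user-service": "10.0.0.6"}
print(resolve_backend("/photos/42", resolver=fake_dns.__getitem__))
```

In a real deployment the default `socket.gethostbyname` resolver would query whatever DNS the platform provides for service names.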

Generally, the Proxy Model is workable for simple to moderately complex applications. It’s not the most efficient model for load balancing, especially at scale; use one of the models described below if you have heavy load balancing requirements. (“Scale” can refer to a large number of microservices as well as high traffic volumes.)

Editor – For an in‑depth exploration of this model, see MRA, Part 2 – The Proxy Model.

Stepping Up to the Router Mesh Model

The Router Mesh Model is moderately complex and is a good match for robust new application designs, and also for converting more complex, monolithic legacy apps that don’t need the capabilities of the Fabric Model.

The Router Mesh Model takes a more robust approach to networking than the Proxy Model, by running a load balancer on each host and actively managing connections between microservices. The key benefit of the Router Mesh Model is more efficient and robust load balancing between services. If you use NGINX Plus, you can implement active health checks to monitor the individual service instances and throttle traffic to them gracefully when they are taken down.
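To make the combination of load balancing and active health checks concrete, here is a minimal Python sketch. It is not how NGINX Plus implements the feature; the class name, the `probe` callback, and the instance labels are assumptions chosen for illustration. The balancer rotates through instances in round‑robin order and skips any instance that failed its most recent probe.

```python
from typing import Callable, Dict, List, Optional


class HealthCheckedBalancer:
    """Round-robin balancer that skips instances marked unhealthy."""

    def __init__(self, instances: List[str], probe: Callable[[str], bool]):
        self.instances = list(instances)
        self.probe = probe  # hypothetical check, e.g. an HTTP GET to a health URL
        self.healthy: Dict[str, bool] = {i: True for i in self.instances}
        self._next = 0

    def run_health_checks(self) -> None:
        """Probe every instance and record the result (the 'active' part)."""
        for instance in self.instances:
            self.healthy[instance] = self.probe(instance)

    def pick(self) -> Optional[str]:
        """Return the next healthy instance, or None if none is healthy."""
        count = len(self.instances)
        for _ in range(count):
            candidate = self.instances[self._next % count]
            self._next += 1
            if self.healthy[candidate]:
                return candidate
        return None


# Usage: instance "b" is down, so traffic alternates between "a" and "c".
down = {"b"}
balancer = HealthCheckedBalancer(["a", "b", "c"], lambda i: i not in down)
balancer.run_health_checks()
print([balancer.pick() for _ in range(4)])
```

A production health check would run on a timer and typically require several consecutive passes or failures before changing an instance's state.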

Deis Workflow uses an approach similar to the Router Mesh Model to route traffic between services, with instances of NGINX running in a container on each host. As new app instances are spun up, a process extracts service information from the etcd service registry and loads it into NGINX. NGINX Plus can work in this mode as well, using various locations and their associated upstreams.
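The "extract from the registry, load into NGINX" step can be pictured as a small template function. The sketch below assumes the registry contents have already been read into a plain dictionary (a real process would fetch them from etcd), and the service names and addresses are made up. It renders one `upstream` block per service and leaves writing the file and reloading NGINX to the surrounding process.

```python
from typing import Dict, List


def render_upstreams(registry: Dict[str, List[str]]) -> str:
    """Render NGINX upstream blocks from a {service: [host:port, ...]} snapshot."""
    blocks = []
    for service in sorted(registry):
        addresses = sorted(registry[service])
        if not addresses:
            # An upstream block with no servers is not valid configuration,
            # so services without instances are skipped.
            continue
        lines = [f"upstream {service} {{"]
        lines.extend(f"    server {address};" for address in addresses)
        lines.append("}")
        blocks.append("\n".join(lines))
    return "\n\n".join(blocks) + "\n"


# Usage with a hypothetical registry snapshot:
snapshot = {
    "photos": ["10.0.0.2:8080", "10.0.0.1:8080"],
    "users": ["10.0.1.1:9000"],
}
print(render_upstreams(snapshot))
```

Sorting the services and addresses keeps the output stable, so the process can compare it with the current configuration and reload only when something actually changed.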

Editor – For an in‑depth exploration of this model, see MRA, Part 3 – The Router Mesh Model.

And Finally – The Fabric Model, with Optional SSL/TLS

We here at NGINX are most excited about the Fabric Model. It brings some of the most exciting promises of microservices to life, including high performance, flexibility in load balancing, and ubiquitous SSL/TLS down to the level of individual microservices. The Fabric Model is suitable for secure applications and scalable to very large applications.

In the Fabric Model, NGINX Plus is deployed within each container and becomes the proxy for all HTTP traffic going in and out of the containers. The applications talk to a localhost location for all service connections and rely on NGINX Plus to do service discovery, load balancing, and health checking.

In our configuration, NGINX Plus queries ZooKeeper for all instances of the services that the app needs to connect to. With the DNS refresh interval (the valid parameter of the resolver directive) set to 1 second, for example, NGINX Plus rechecks ZooKeeper for instance changes every second and routes traffic accordingly.
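The effect of a short refresh interval can be illustrated with a small time‑bounded cache. This Python sketch is an analogy rather than NGINX Plus internals: the `lookup` callback stands in for the query to the service registry, and the class name and parameters are hypothetical. A cached answer is reused until it is older than the interval, after which the next request triggers a fresh lookup.

```python
import time
from typing import Callable, Dict, List, Tuple


class RefreshingResolver:
    """Cache service lookups and refresh them once they exceed a validity window."""

    def __init__(
        self,
        lookup: Callable[[str], List[str]],
        valid_seconds: float = 1.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self.lookup = lookup  # hypothetical stand-in for the registry query
        self.valid_seconds = valid_seconds
        self.clock = clock
        self._cache: Dict[str, Tuple[float, List[str]]] = {}

    def resolve(self, service: str) -> List[str]:
        now = self.clock()
        cached = self._cache.get(service)
        if cached is not None and now - cached[0] < self.valid_seconds:
            return cached[1]  # still within the validity window
        instances = list(self.lookup(service))
        self._cache[service] = (now, instances)
        return instances


# Usage with a fake clock so the behaviour is easy to follow:
now = [0.0]
registry = {"photos": ["10.0.0.1"]}
resolver = RefreshingResolver(lambda s: registry[s], 1.0, clock=lambda: now[0])
print(resolver.resolve("photos"))   # first lookup populates the cache
registry["photos"] = ["10.0.0.1", "10.0.0.2"]
now[0] = 1.0
print(resolver.resolve("photos"))   # interval elapsed, so the new instance appears
```

The trade‑off is the usual one: a shorter interval picks up new or removed instances sooner at the cost of more lookups.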

Because of the powerful HTTP processing in NGINX Plus, we can use keepalives to maintain persistent connections to microservices, reducing latency and improving performance. This is an especially valuable feature when using SSL/TLS to secure traffic between the microservices.

Finally, we use NGINX Plus’ active health checks to manage traffic to healthy instances and, essentially, build in the circuit breaker pattern for free.
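For readers new to the circuit breaker pattern, the following Python sketch shows its basic state machine. In the Fabric Model the equivalent behavior comes from NGINX Plus health checks at the proxy layer, so this is an application‑level illustration only; the class, the thresholds, and the exception name are assumptions. After a set number of consecutive failures the breaker opens and rejects calls immediately, and once a reset period has passed it lets a single trial call through to see whether the service has recovered.

```python
import time
from typing import Any, Callable, Optional


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(
        self,
        failure_threshold: int = 3,
        reset_seconds: float = 5.0,
        clock: Callable[[], float] = time.monotonic,
    ):
        self.failure_threshold = failure_threshold
        self.reset_seconds = reset_seconds
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def call(self, func: Callable[..., Any], *args: Any, **kwargs: Any) -> Any:
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_seconds:
                raise CircuitOpenError("circuit is open; request not sent")
            # Reset period elapsed: half-open, so let this one call through.
        try:
            result = func(*args, **kwargs)
        except Exception:
            self.failures += 1
            half_open_failed = self.opened_at is not None
            if half_open_failed or self.failures >= self.failure_threshold:
                self.opened_at = self.clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self.failures = 0
        self.opened_at = None
        return result
```

A caller would wrap each outbound request, for example `breaker.call(fetch_album, album_id)` with a hypothetical `fetch_album` function, and treat `CircuitOpenError` as a signal to fall back to a cached or default response.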

Editor – For an in‑depth exploration of this model, see MRA, Part 4 – The Fabric Model.

An Ingenious Demo App for the MRA

The MRA includes a sample application as a demo: the Ingenious photo‑sharing app. Ingenious is implemented in each of the three models – Proxy, Router Mesh, and Fabric. The Ingenious demo app will be released to the public later this year.

Ingenious is a simplified version of a photo storage and sharing application, similar to Flickr or Shutterfly. We chose a photo‑sharing application for a few reasons:

- It is easy for both users and developers to grasp what it does.

- There are multiple data dimensions to manage.

- It is easy to incorporate beautiful design in the app.

- It provides asymmetric computing requirements – a mix of high‑intensity and low‑intensity processing – which enables realistic testing of failover, scaling, and monitoring features across different kinds of functionality.

Conclusion

The NGINX Microservices Reference Architecture is an exciting development for us, and for the customers and partners we’ve shared it with to date. Over the next few months we will publish a series of blog posts describing it in detail, and we will make it available later this year. We will also be discussing it in detail at nginx.conf 2016, September 7–9 in Austin, TX. Please give us your feedback, and we look forward to seeing you.

In the meantime, try out the MRA with NGINX Plus for yourself – start your free 30-day trial today or contact us to discuss your use cases.